[Homepage] | [Colab Demo] | [Huggingface] | [Discord] | [Twitter] | [Blog]

OpenMoE is a project aimed at igniting the open-source MoE community! We are releasing a family of open-sourced Mixture-of-Experts (MoE) Large Language Models.

Our project began in the summer of 2023. On August 22, 2023, we released the first batch of intermediate checkpoints (OpenMoE-base and OpenMoE-8B), along with the data and code [Twitter]. Training of OpenMoE-8B was completed in November 2023. We then began exploring a 34B-scale model, which is still ongoing.

As a small student team, instead of pursuing the best model with better data, computation, and human power, we are devoted to fully sharing our training data, strategies, model architecture, weights, and everything else we have with the community. We hope this project will promote research in this promising field and invite more contributors to work on open-sourced MoE projects together!

[2024/01] 🔥 OpenMoE-8B-Chat is now available. We've provided a Colab inference demo for everyone to try, as well as a tutorial on converting JAX checkpoints to PyTorch checkpoints (note: both require Colab Pro).

[2023/11] 🔥 Thanks to Colossal AI! They released a PyTorch implementation of OpenMoE that covers both training and inference with expert parallelism.

[2023/08] 🔥 We released an intermediate OpenMoE-8B checkpoint (OpenMoE-v0.2) along with two other models. Check out the blog post.

- PyTorch Implementation with Colossal AI

- Continue Training to 1T tokens

- More Evaluation

- Paper

Currently, three models are released in total: OpenMoE-base, OpenMoE-8B (and its chat version), and OpenMoE-34B (intermediate checkpoint at 200B tokens).

We provide all these checkpoints on Huggingface (in PyTorch) and Google Cloud Storage (in JAX).

| Model Name | Description | #Param | Huggingface | Gin File |
|---|---|---|---|---|
| OpenMoE-base | A small MoE model for debugging (trained on only 128B tokens) | 637M | Link | Link |
| OpenLLaMA-base | A dense counterpart of OpenMoE-base | 310M | Link | Link |
| OpenMoE-8B-200B | 8B MoE with FLOPs comparable to a 1.6B LLaMA (no SFT) | 8B | Link | Link |
| OpenMoE-8B-890B | 8B MoE with FLOPs comparable to a 1.6B LLaMA (no SFT) | 8B | Link | Link |
| OpenMoE-8B-1.1T | 8B MoE with FLOPs comparable to a 1.6B LLaMA (no SFT) | 8B | Link | Link |
| OpenMoE-8B-Chat (1.1T+SFT) | OpenMoE-8B-1.1T after supervised fine-tuning on the WildChat GPT-4 Subset | 8B | Link | Link |
| OpenMoE-34B/32E (200B) | 34B MoE with FLOPs comparable to a 7B LLaMA (no SFT) | 34B | Link | Link |

The base models, which were trained on 128 billion tokens, served primarily for debugging. After validating the effectiveness of the model architecture, we did not pursue further training. Consequently, their performance may be weak, and they are not designed for practical applications.

OpenMoE-8B, with 4 MoE layers and 32 experts, has been trained on 1.1T tokens. The SFT version was released after we fine-tuned OpenMoE-8B-1.1T on the WildChat dataset. We also provide intermediate checkpoints at 200B and 890B tokens for research purposes.

We are still training OpenMoE-34B, an MoE model with 8 MoE layers and 32 experts. We released the intermediate checkpoint trained on 200B tokens on Huggingface. If you are interested in the latest checkpoint, please feel free to drop Fuzhao an email ([email protected]).

We provide a Colab tutorial demonstrating JAX checkpoint conversion and PyTorch model inference. You can also experiment with OpenMoE-8B-Chat directly in the Colab demo (note: both require Colab Pro).

- Running OpenMoE-8B requires ~49GB of memory in float32 or ~23GB in bfloat16 (float16 is not recommended because it sometimes degrades performance). It can be executed on a Colab `CPU High-RAM` runtime or an `A100-40GB` runtime, both of which require Colab Pro.

- Running OpenMoE-34B requires ~89GB of memory in bfloat16 or ~180GB in float32. To run inference across multiple devices or to offload model weights to RAM, please refer to the script here.
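As a rough cross-check of the memory figures above, weight storage scales with bytes per parameter: 4 for float32 and 2 for bfloat16. The sketch below is a back-of-the-envelope estimate, not a measurement from this project. Both helper names are hypothetical, and the calculation counts weights only, ignoring activations and caches.

```python
# Bytes needed to store one parameter in each dtype.
BYTES_PER_PARAM = {"float32": 4, "bfloat16": 2}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Weights-only memory in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def implied_params_billions(memory_gb: float, dtype: str) -> float:
    """Parameter count (billions) that would fill `memory_gb` with weights alone."""
    return memory_gb / BYTES_PER_PARAM[dtype]


if __name__ == "__main__":
    # Memory figures quoted in the list above.
    quoted = [
        ("OpenMoE-8B", 49, "float32"),
        ("OpenMoE-8B", 23, "bfloat16"),
        ("OpenMoE-34B", 180, "float32"),
        ("OpenMoE-34B", 89, "bfloat16"),
    ]
    for name, mem_gb, dtype in quoted:
        implied = implied_params_billions(mem_gb, dtype)
        print(f"{name} {dtype}: {mem_gb} GB is about {implied:.1f}B parameters of storage")
```

The quoted footprints correspond to more storage than the nominal 8B and 34B names alone would suggest, so treat the documented numbers, not this estimate, as the requirement when choosing a runtime.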

For the inference environment setup, please refer to the script here.

- On TPUs: Get a TPU-vm and run the following code on all TPUs. Researchers can apply to the TPU Research Cloud for TPU resources.

      git clone https://github.com/XueFuzhao/OpenMoE.git
      bash OpenMoE/script/run_pretrain.sh

- On GPUs: ColossalAI provides a PyTorch + GPU implementation of OpenMoE with optimized expert-parallel strategies. However, we have recently noticed that issues #5163 and #5212 report convergence problems. We are actively following up on these concerns and will soon update our GPU training tutorials.

- On TPUs: Get a TPU-vm and run the following code to evaluate the model on BIG-bench-Lite.

      git clone https://github.com/XueFuzhao/OpenMoE.git
      bash OpenMoE/script/run_eval.sh

- On GPUs: You can evaluate our model on MT-Bench by running the code below.

      git clone https://github.com/Orion-Zheng/FastChat.git
      cd FastChat && pip install -e ".[model_worker,llm_judge]"
      cd fastchat/llm_judge
      python gen_model_answer.py --model-path LOCAL_PATH_TO_MODEL_CKPT/openmoe_8b_chat_ckpt \
        --model-id openmoe-chat \
        --dtype bfloat16

50% The RedPajama + 50% The Stack Dedup. We use a high ratio of coding data to improve reasoning ability.

| Dataset | Ratio (%) |
|---|---|
| redpajama_c4 | 15.0 |
| redpajama_wikipedia | 6.5 |
| wikipedia | 6.5 |
| redpajama_stackexchange | 2.5 |
| redpajama_arxiv | 4.5 |
| redpajama_book | 6.5 |
| redpajama_github | 5.0 |
| redpajama_common_crawl | 43.5 |
| the_stack_dedup | 10.0 |

We found that the model tends to learn code faster than language, so we decided to reduce the proportion of coding data in the later stage of training.
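The ratio table above can be read as sampling weights over the source datasets. The following is a minimal sketch of that idea using only the Python standard library. It is an illustration, not the project's data pipeline, and `DATA_MIX` and `sample_dataset` are hypothetical names.

```python
import random

# Ratios (%) copied from the table above.
DATA_MIX = {
    "redpajama_c4": 15.0,
    "redpajama_wikipedia": 6.5,
    "wikipedia": 6.5,
    "redpajama_stackexchange": 2.5,
    "redpajama_arxiv": 4.5,
    "redpajama_book": 6.5,
    "redpajama_github": 5.0,
    "redpajama_common_crawl": 43.5,
    "the_stack_dedup": 10.0,
}


def sample_dataset(rng: random.Random) -> str:
    """Pick the dataset for the next example, proportionally to its ratio."""
    names = list(DATA_MIX)
    weights = [DATA_MIX[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(0)
    draws = [sample_dataset(rng) for _ in range(10_000)]
    # Empirical share of each dataset should be close to its listed ratio.
    for name in DATA_MIX:
        share = 100.0 * draws.count(name) / len(draws)
        print(f"{name}: {share:.1f}%")
```

The listed ratios sum to 100, so they can be used as weights without renormalizing.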

Below are scripts to generate TFDS for pre-training datasets:

The RedPajama: https://github.com/Orion-Zheng/redpajama_tfds

The-Stack-Dedup: https://github.com/Orion-Zheng/the_stack_tfds

We use the umT5 tokenizer, which can be downloaded from Huggingface or Google Cloud, to support multilingual continued learning in the future.

OpenMoE is based on ST-MoE but uses a decoder-only architecture. The detailed implementation can be found in Fuzhao's T5x and Flaxformer repos.

We use a modified UL2 training objective, but with a causal attention mask (we use more prefix LM and a high mask ratio because this saves computation):

- 50% prefix LM

- 10% span len=3 mask ratio=0.15

- 10% span len=8 mask ratio=0.15

- 10% span len=3 mask ratio=0.5

- 10% span len=8 mask ratio=0.5

- 10% span len=64 mask ratio=0.5
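The mixture listed above can be sketched as a weighted choice over denoiser configurations, made once per training example. This is an illustrative sketch only: the `Denoiser` class and `sample_denoiser` function are hypothetical names, not part of the T5x or Flaxformer code, and the masking step itself is omitted.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Denoiser:
    kind: str                          # "prefix_lm" or "span_corruption"
    span_len: Optional[int] = None     # mean span length (span corruption only)
    mask_ratio: Optional[float] = None # fraction of tokens masked


# (probability, configuration) pairs copied from the list above.
OBJECTIVE_MIX = [
    (0.5, Denoiser("prefix_lm")),
    (0.1, Denoiser("span_corruption", span_len=3, mask_ratio=0.15)),
    (0.1, Denoiser("span_corruption", span_len=8, mask_ratio=0.15)),
    (0.1, Denoiser("span_corruption", span_len=3, mask_ratio=0.5)),
    (0.1, Denoiser("span_corruption", span_len=8, mask_ratio=0.5)),
    (0.1, Denoiser("span_corruption", span_len=64, mask_ratio=0.5)),
]


def sample_denoiser(rng: random.Random) -> Denoiser:
    """Choose which objective to apply to the next training example."""
    weights = [weight for weight, _ in OBJECTIVE_MIX]
    denoisers = [denoiser for _, denoiser in OBJECTIVE_MIX]
    return rng.choices(denoisers, weights=weights, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(5):
        print(sample_denoiser(rng))
```

Under this reading, half of all examples use prefix LM and the other half are split evenly across the five span-corruption settings.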

Vanilla next-token prediction, because we observed that the UL2 objective tends to saturate in the later stage of training, although it enables the model to learn faster at the start.

RoPE, SwiGLU activation, 2K context length. We will release a more detailed report soon.

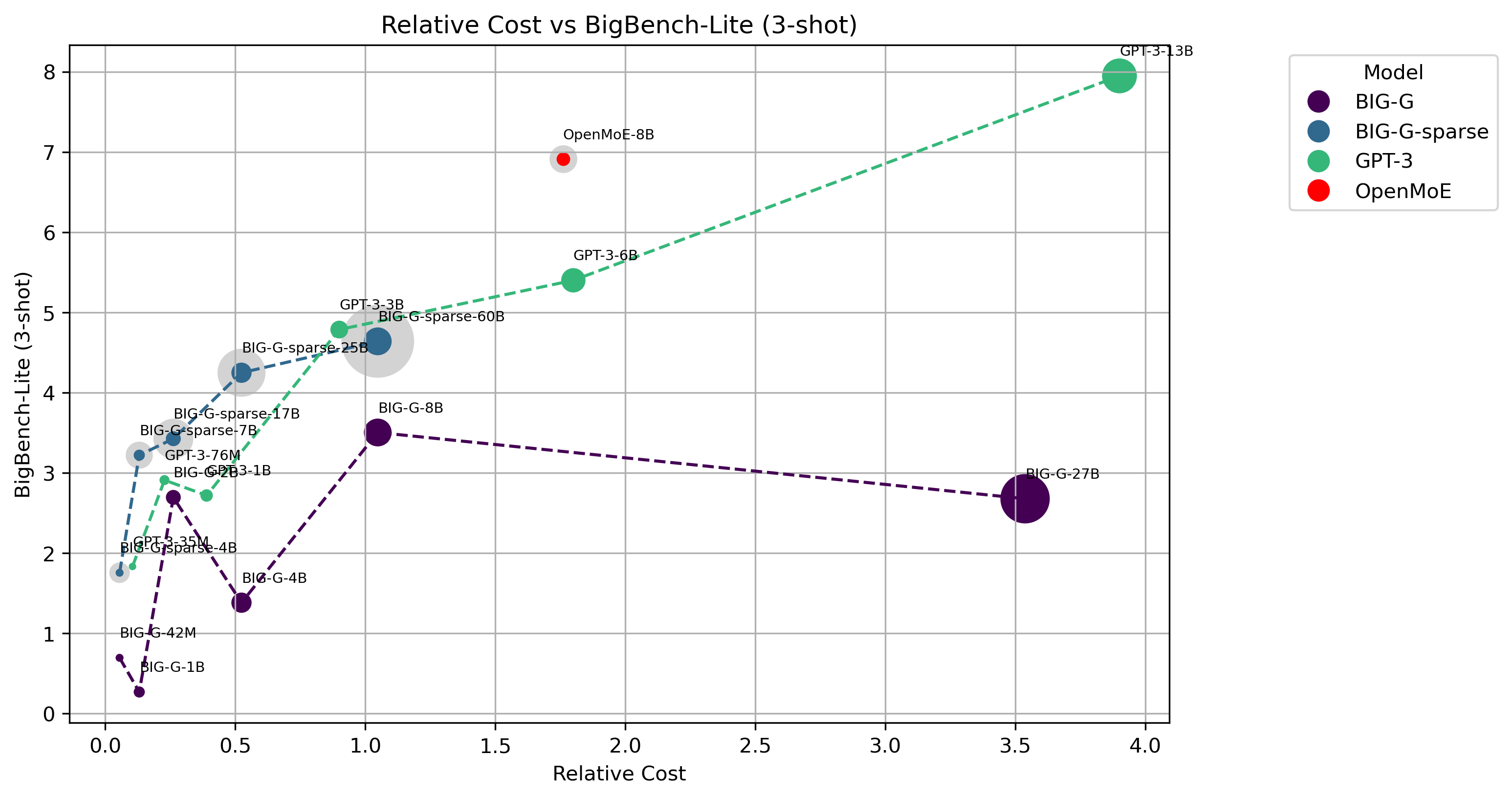

We evaluate our model on BigBench-Lite as our first step. We plot the cost-effectiveness curve in the figure below.

Relative Cost is approximated by multiplying the number of activated parameters by the number of training tokens. The size of each dot denotes the number of parameters activated for each token. The light gray dots denote the total parameters of the MoE models.

For more detailed results, please see our Blog.
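The Relative Cost approximation described above is a single product, sketched here for concreteness. The function name and the numbers in the example are hypothetical and are not values taken from the figure.

```python
def relative_cost(activated_params: float, training_tokens: float) -> float:
    """Approximate training cost as activated parameters times training tokens."""
    return activated_params * training_tokens


if __name__ == "__main__":
    # Hypothetical models: half the activated parameters on twice the tokens
    # lands at the same point on the cost axis.
    model_a = relative_cost(activated_params=2e9, training_tokens=1e12)
    model_b = relative_cost(activated_params=1e9, training_tokens=2e12)
    print(model_a == model_b)
```

Because only activated parameters enter the product, an MoE model is placed on the cost axis by the parameters it uses per token rather than by its total size.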

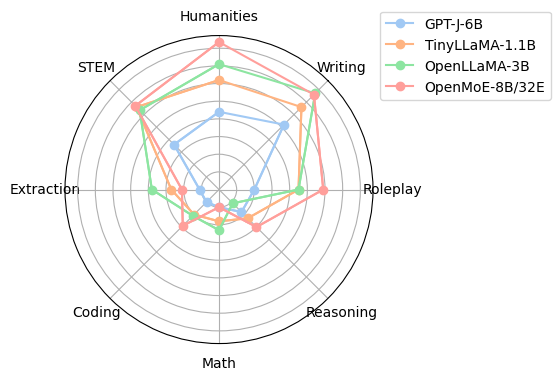

We evaluate on MT-Bench and observe that, on first-turn results, OpenMoE-8B-Chat outperforms some dense counterparts trained with twice the training FLOPs.

Our code is released under the Apache 2.0 License.

Since the models are trained on the RedPajama and The Stack datasets, please check the licenses of these two datasets before using the models.

This project is currently contributed by the following authors:

The computational resources for this project were generously provided by the Google TPU Research Cloud (TRC). We extend our heartfelt thanks to TRC for their invaluable support, which has been fundamental to the success of our work. We are also extremely grateful to the ColossalAI Team, especially Xuanlei Zhao and Wenhao Chen, for their tremendous support with the PyTorch implementation, which made training and inference of OpenMoE on GPUs a reality.

Please cite this repo if you use its models or code.

    @misc{openmoe2023,
      author = {Fuzhao Xue and Zian Zheng and Yao Fu and Jinjie Ni and Zangwei Zheng and Wangchunshu Zhou and Yang You},
      title = {OpenMoE: Open Mixture-of-Experts Language Models},
      year = {2023},
      publisher = {GitHub},
      journal = {GitHub repository},
      howpublished = {\url{https://github.com/XueFuzhao/OpenMoE}},
    }