Its task is simple: it tells you which language a given piece of text is written in. This is very useful as a preprocessing step for linguistic data in natural language processing applications such as text classification and spell checking. Other use cases might include routing e-mails to the right geographically located customer service department, based on the language each e-mail is written in.

Language detection is often done as part of large machine learning frameworks or natural language processing applications. In cases where you don't need the full-fledged functionality of those systems or don't want to learn the ropes of those, a small flexible library comes in handy.

Python is widely used in natural language processing, so there are a couple of comprehensive open source libraries for this task, such as Google's CLD 2 and CLD 3, langid, fastText and langdetect. Unfortunately, except for the last one they have two major drawbacks:

- Detection only works with quite lengthy text fragments. For very short text snippets such as Twitter messages, they do not provide adequate results.

- The more languages take part in the decision process, the less accurate the detection results are.

Lingua aims at eliminating these problems. She needs hardly any configuration and yields pretty accurate results on both long and short text, even on single words and phrases. She draws on both rule-based and statistical methods but does not use any dictionaries of words. She does not need a connection to any external API or service either. Once the library has been downloaded, it can be used completely offline.

Compared to other language detection libraries, Lingua's focus is on quality over quantity, that is, getting detection right for a small set of languages first before adding new ones. Currently, the following 75 languages are supported:

- A
  - Afrikaans
  - Albanian
  - Arabic
  - Armenian
  - Azerbaijani
- B
  - Basque
  - Belarusian
  - Bengali
  - Norwegian Bokmal
  - Bosnian
  - Bulgarian
- C
  - Catalan
  - Chinese
  - Croatian
  - Czech
- D
  - Danish
  - Dutch
- E
  - English
  - Esperanto
  - Estonian
- F
  - Finnish
  - French
- G
  - Ganda
  - Georgian
  - German
  - Greek
  - Gujarati
- H
  - Hebrew
  - Hindi
  - Hungarian
- I
  - Icelandic
  - Indonesian
  - Irish
  - Italian
- J
  - Japanese
- K
  - Kazakh
  - Korean
- L
  - Latin
  - Latvian
  - Lithuanian
- M
  - Macedonian
  - Malay
  - Maori
  - Marathi
  - Mongolian
- N
  - Norwegian Nynorsk
- P
  - Persian
  - Polish
  - Portuguese
  - Punjabi
- R
  - Romanian
  - Russian
- S
  - Serbian
  - Shona
  - Slovak
  - Slovene
  - Somali
  - Sotho
  - Spanish
  - Swahili
  - Swedish
- T
  - Tagalog
  - Tamil
  - Telugu
  - Thai
  - Tsonga
  - Tswana
  - Turkish
- U
  - Ukrainian
  - Urdu
- V
  - Vietnamese
- W
  - Welsh
- X
  - Xhosa
- Y
  - Yoruba
- Z
  - Zulu

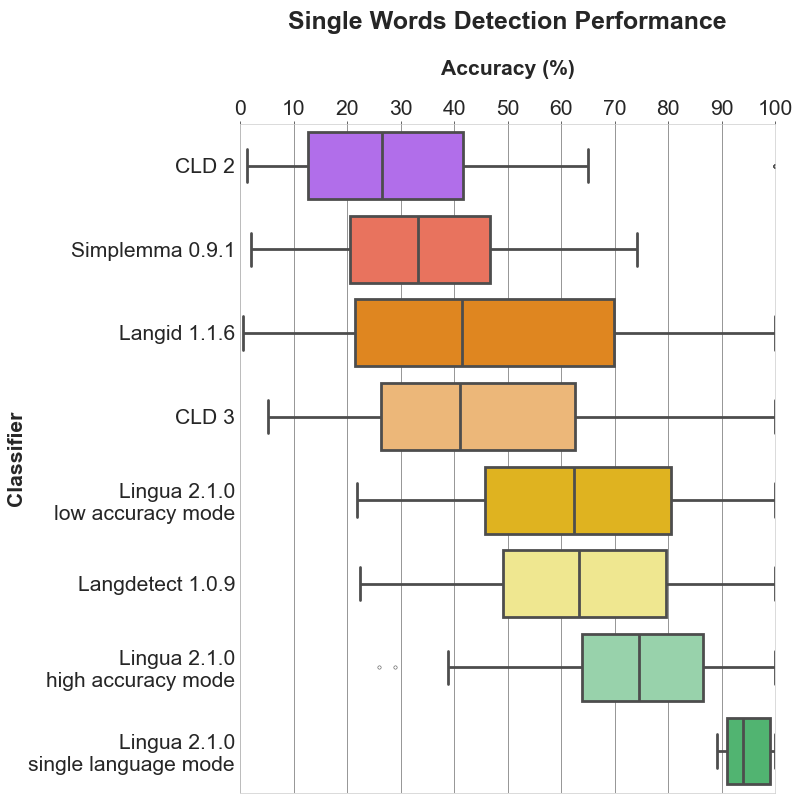

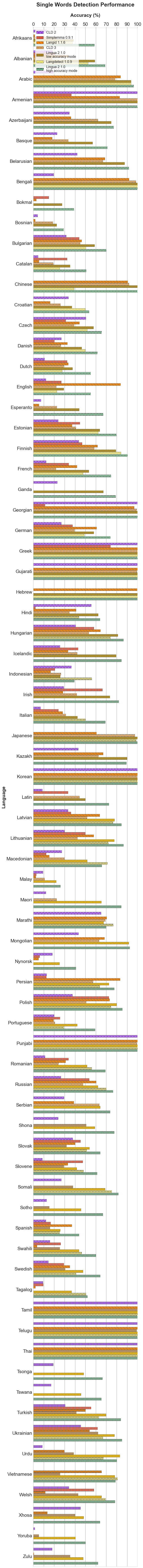

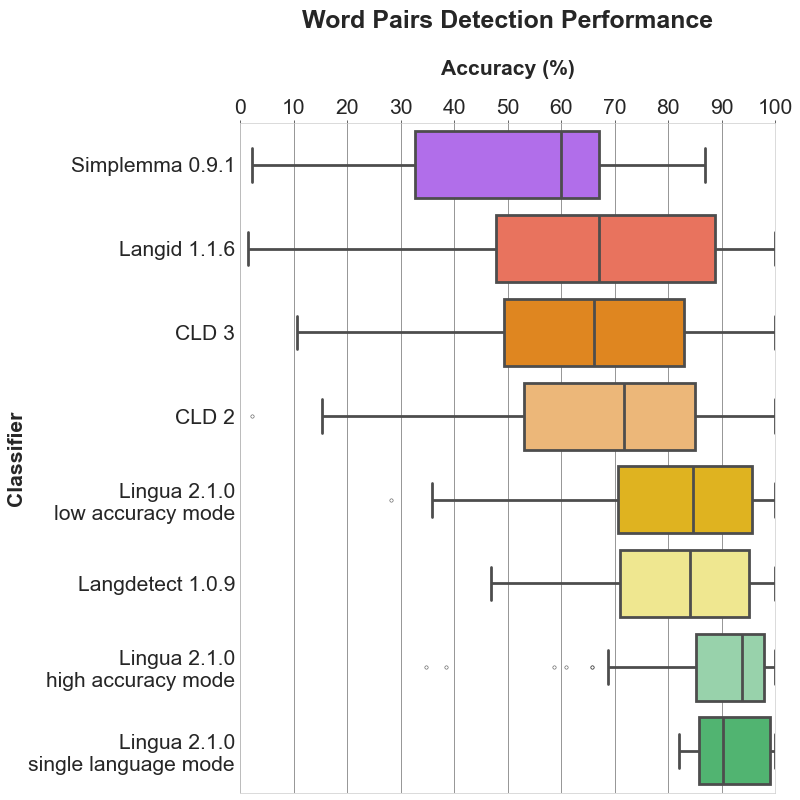

Lingua is able to report accuracy statistics for some bundled test data available for each supported language. The test data for each language is split into three parts:

- a list of single words with a minimum length of 5 characters

- a list of word pairs with a minimum length of 10 characters

- a list of complete grammatical sentences of various lengths

Both the language models and the test data have been created from separate documents of the Wortschatz corpora offered by Leipzig University, Germany. Data crawled from various news websites have been used for training, each corpus comprising one million sentences. For testing, corpora made of arbitrarily chosen websites have been used, each comprising ten thousand sentences. From each test corpus, a random unsorted subset of 1000 single words, 1000 word pairs and 1000 sentences has been extracted, respectively.
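
The three-way split described above could be produced along the following lines. This is only a minimal sketch, not the script actually used to build the bundled test data: the function name `build_test_data`, the tokenization, and the choice to count the separating space towards the 10-character minimum of a word pair are all assumptions made for illustration.

```python
import random
import re


def build_test_data(sentences, sample_size=1000, seed=42):
    """Split a corpus into single words, word pairs and sentences.

    Thresholds follow the text: words need at least 5 characters,
    word pairs at least 10 (ASSUMPTION: the space is counted).
    """
    rng = random.Random(seed)
    words, pairs = set(), set()
    for sentence in sentences:
        # Keep runs of letters only; digits and punctuation are dropped.
        tokens = re.findall(r"[^\W\d_]+", sentence.lower())
        words.update(token for token in tokens if len(token) >= 5)
        for first, second in zip(tokens, tokens[1:]):
            pair = f"{first} {second}"
            if len(pair) >= 10:
                pairs.add(pair)

    def sample(items):
        # Sort first so that the same seed always yields the same sample.
        ordered = sorted(items)
        return rng.sample(ordered, min(sample_size, len(ordered)))

    return {
        "single_words": sample(words),
        "word_pairs": sample(pairs),
        "sentences": sample(set(sentences)),
    }


corpus = ["Sprachen sind wirklich wunderbar.", "Das ist ein kurzer Satz."]
test_data = build_test_data(corpus, sample_size=3)
print(test_data["single_words"])
```

With a real corpus of ten thousand sentences, the default `sample_size=1000` would give the subset sizes mentioned above.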

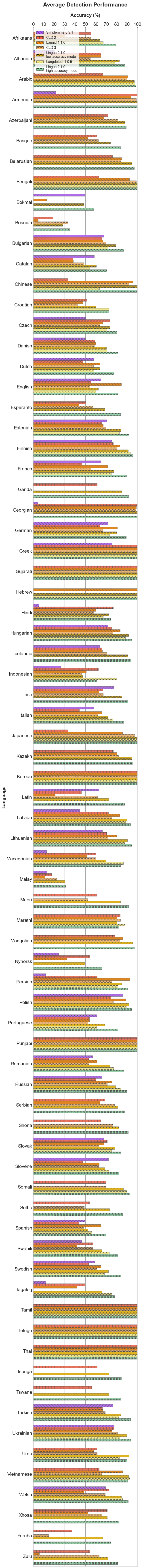

Given the generated test data, I have compared the detection results of Lingua, fastText, langdetect, langid, CLD 2 and CLD 3 running over the data of Lingua's supported 75 languages. Languages that are not supported by the other detectors are simply ignored for them during the detection process.

Each of the following sections contains two plots. The bar plot shows the detailed accuracy results for each supported language. The box plot illustrates the distributions of the accuracy values for each classifier. The boxes themselves represent the areas which the middle 50 % of data lie within. Within the colored boxes, the horizontal lines mark the median of the distributions.

The table below shows detailed statistics for each language and classifier including mean, median and standard deviation.


Every language detector uses a probabilistic n-gram model trained on the character distribution in some training corpus. Most libraries only use n-grams of size 3 (trigrams), which is satisfactory for detecting the language of longer text fragments consisting of multiple sentences. For short phrases or single words, however, trigrams are not enough. The shorter the input text is, the fewer n-grams are available. The probabilities estimated from so few n-grams are not reliable. This is why Lingua makes use of n-grams of sizes 1 up to 5, which results in much more accurate prediction of the correct language.
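
To get a feeling for why short inputs benefit from several n-gram sizes, the following sketch counts the character n-grams of a single word. It is not Lingua's implementation; the helper name `char_ngrams` and the simple whitespace tokenization are assumptions for illustration.

```python
from collections import Counter


def char_ngrams(text, min_n=1, max_n=5):
    """Count all character n-grams of sizes min_n..max_n within each word."""
    counts = Counter()
    for word in text.lower().split():
        for n in range(min_n, max_n + 1):
            for start in range(len(word) - n + 1):
                counts[word[start:start + n]] += 1
    return counts


trigrams_only = char_ngrams("haus", min_n=3, max_n=3)
all_sizes = char_ngrams("haus", min_n=1, max_n=5)

print(sorted(trigrams_only))        # ['aus', 'hau']
print(sum(trigrams_only.values()))  # 2
print(sum(all_sizes.values()))      # 10
```

A four-letter word yields only two trigrams, but ten n-grams when sizes 1 to 5 are combined, so a statistical model has considerably more evidence to work with.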

A second important difference is that Lingua does not only use such a statistical model, but also a rule-based engine. This engine first determines the alphabet of the input text and searches for characters which are unique to one or more languages. If exactly one language can be reliably chosen this way, the statistical model is not necessary anymore. In any case, the rule-based engine filters out languages that do not satisfy the conditions of the input text. Only then, in a second step, is the probabilistic n-gram model taken into consideration. This makes sense because loading fewer language models means less memory consumption and better runtime performance.
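
The idea of such a rule-based pre-filter can be sketched as follows. This is a toy illustration under stated assumptions, not Lingua's engine: the two lookup tables are hypothetical and heavily simplified, languages are represented by plain strings, and the script is guessed from the first word of each Unicode character name.

```python
import unicodedata

# HYPOTHETICAL toy tables, for illustration only.
SCRIPT_OF_LANGUAGE = {
    "ENGLISH": "LATIN",
    "GERMAN": "LATIN",
    "SPANISH": "LATIN",
    "RUSSIAN": "CYRILLIC",
    "GREEK": "GREEK",
}
UNIQUE_CHARACTERS = {"ß": {"GERMAN"}, "ñ": {"SPANISH"}}


def script_of(char):
    # Unicode names start with the script, e.g. "LATIN SMALL LETTER A".
    return unicodedata.name(char, "").split(" ")[0]


def filter_languages(text, candidates):
    """Return the candidate languages that remain plausible for the text."""
    letters = [char for char in text if char.isalpha()]
    scripts = {script_of(char) for char in letters}
    # Step 1: drop languages whose script does not occur in the text.
    remaining = {lang for lang in candidates if SCRIPT_OF_LANGUAGE[lang] in scripts}
    # Step 2: a character unique to some languages narrows the set further.
    for char in letters:
        unique = UNIQUE_CHARACTERS.get(char.lower())
        if unique and unique & remaining:
            return unique & remaining
    return remaining


candidates = set(SCRIPT_OF_LANGUAGE)
print(filter_languages("Straße", candidates))          # {'GERMAN'}
print(filter_languages("привет", candidates))          # {'RUSSIAN'}
print(sorted(filter_languages("hello", candidates)))   # ['ENGLISH', 'GERMAN', 'SPANISH']
```

In the first two cases exactly one language remains, so no statistical model would be needed. In the third case the n-gram model would only have to decide between the three remaining languages.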

In general, it is always a good idea to restrict the set of languages to be considered in the classification process using the respective API methods. If you know beforehand that certain languages will never occur in an input text, do not let those take part in the classification process. The filtering mechanism of the rule-based engine is quite good. However, filtering based on your own knowledge of the input text is always preferable.

If you want to reproduce the accuracy results above, you can generate the test reports yourself for all classifiers and languages by executing:

    poetry install --extras "fasttext langdetect langid gcld3 pycld2 pandas matplotlib seaborn"
    poetry run python3 scripts/accuracy_reporter.py

For each detector and language, a test report file is then written into /accuracy-reports.

As an example, here is the current output of the Lingua German report:

    ##### German #####

    >>> Accuracy on average: 89.27%

    >> Detection of 1000 single words (average length: 9 chars)
    Accuracy: 74.20%
    Erroneously classified as Dutch: 2.30%, Danish: 2.20%, English: 2.20%, Latin: 1.80%, Bokmal: 1.60%, Italian: 1.30%, Basque: 1.20%, Esperanto: 1.20%, French: 1.20%, Swedish: 0.90%, Afrikaans: 0.70%, Finnish: 0.60%, Nynorsk: 0.60%, Portuguese: 0.60%, Yoruba: 0.60%, Sotho: 0.50%, Tsonga: 0.50%, Welsh: 0.50%, Estonian: 0.40%, Irish: 0.40%, Polish: 0.40%, Spanish: 0.40%, Tswana: 0.40%, Albanian: 0.30%, Icelandic: 0.30%, Tagalog: 0.30%, Bosnian: 0.20%, Catalan: 0.20%, Croatian: 0.20%, Indonesian: 0.20%, Lithuanian: 0.20%, Romanian: 0.20%, Swahili: 0.20%, Zulu: 0.20%, Latvian: 0.10%, Malay: 0.10%, Maori: 0.10%, Slovak: 0.10%, Slovene: 0.10%, Somali: 0.10%, Turkish: 0.10%, Xhosa: 0.10%

    >> Detection of 1000 word pairs (average length: 18 chars)
    Accuracy: 93.90%
    Erroneously classified as Dutch: 0.90%, Latin: 0.90%, English: 0.70%, Swedish: 0.60%, Danish: 0.50%, French: 0.40%, Bokmal: 0.30%, Irish: 0.20%, Tagalog: 0.20%, Tsonga: 0.20%, Afrikaans: 0.10%, Esperanto: 0.10%, Estonian: 0.10%, Finnish: 0.10%, Italian: 0.10%, Maori: 0.10%, Nynorsk: 0.10%, Somali: 0.10%, Swahili: 0.10%, Turkish: 0.10%, Welsh: 0.10%, Zulu: 0.10%

    >> Detection of 1000 sentences (average length: 111 chars)
    Accuracy: 99.70%
    Erroneously classified as Dutch: 0.20%, Latin: 0.10%
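
The average reported for German is consistent with the arithmetic mean of the three sub-accuracies, which can be checked quickly with the standard library. The snippet below only uses the three values from the German report. Whether the reporter script computes its summary statistics exactly this way is an assumption.

```python
from statistics import mean, median, stdev

# Accuracy values taken from the German report above (in percent).
german_accuracies = {
    "single words": 74.20,
    "word pairs": 93.90,
    "sentences": 99.70,
}
values = list(german_accuracies.values())

print(f"mean:   {mean(values):.2f}%")    # mean:   89.27%
print(f"median: {median(values):.2f}%")  # median: 93.90%
print(f"stdev:  {stdev(values):.2f}")
```

The same three functions can be applied to the accuracy values of all languages for one classifier to obtain the kind of summary shown in the box plots.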

Lingua is available in the Python Package Index and can be installed with:

    pip install lingua-language-detector

Lingua requires Python >= 3.8 and uses Poetry for packaging and dependency management. To build the library from source, you need to install Poetry first if you have not done so yet. Afterwards, clone the repository and install the project dependencies:

    git clone https://github.com/pemistahl/lingua-py.git
    cd lingua-py
    poetry install

The library makes use of type annotations which allow for static type checking with Mypy. Run the following command to check the types:

    poetry run mypy

The source code is accompanied by an extensive unit test suite. To run the tests, simply execute:

    poetry run pytest

    >>> from lingua import Language, LanguageDetectorBuilder
    >>> languages = [Language.ENGLISH, Language.FRENCH, Language.GERMAN, Language.SPANISH]
    >>> detector = LanguageDetectorBuilder.from_languages(*languages).build()
    >>> detector.detect_language_of("languages are awesome")
    Language.ENGLISH

By default, Lingua returns the most likely language for a given input text. However, there are certain words that are spelled the same in more than one language. The word prologue, for instance, is both a valid English and French word. Lingua would output either English or French which might be wrong in the given context. For cases like that, it is possible to specify a minimum relative distance that the logarithmized and summed up probabilities for each possible language have to satisfy. It can be stated in the following way:

    >>> from lingua import Language, LanguageDetectorBuilder
    >>> languages = [Language.ENGLISH, Language.FRENCH, Language.GERMAN, Language.SPANISH]
    >>> detector = LanguageDetectorBuilder.from_languages(*languages)\
    ...     .with_minimum_relative_distance(0.25)\
    ...     .build()
    >>> print(detector.detect_language_of("languages are awesome"))
    None

Be aware that the distance between the language probabilities is dependent on the length of the input text. The longer the input text, the larger the distance between the languages. So if you want to classify very short text phrases, do not set the minimum relative distance too high. Otherwise, None will be returned most of the time as in the example above. This is the return value for cases where language detection is not reliably possible.

Knowing about the most likely language is nice, but how reliable is the computed likelihood? And how much less likely are the other examined languages in comparison to the most likely one? These questions can be answered as well:

    >>> from lingua import Language, LanguageDetectorBuilder
    >>> languages = [Language.ENGLISH, Language.FRENCH, Language.GERMAN, Language.SPANISH]
    >>> detector = LanguageDetectorBuilder.from_languages(*languages).build()
    >>> confidence_values = detector.compute_language_confidence_values("languages are awesome")
    >>> for language, value in confidence_values:
    ...     print(f"{language.name}: {value:.2f}")
    ENGLISH: 1.00
    FRENCH: 0.79
    GERMAN: 0.75
    SPANISH: 0.70

In the example above, a list of all possible languages is returned, sorted by their confidence value in descending order. The values that the detector computes are part of a relative confidence metric, not of an absolute one. Each value is a number between 0.0 and 1.0. The most likely language is always returned with value 1.0. All other languages are assigned values lower than 1.0, denoting how much less likely those languages are in comparison to the most likely language.

The list returned by this method does not necessarily contain all languages which this LanguageDetector instance was built from. If the rule-based engine decides that a specific language is truly impossible, then it will not be part of the returned list. Likewise, if no n-gram probabilities can be found within the detector's languages for the given input text, the returned list will be empty. The confidence value for each language that is not part of the returned list is assumed to be 0.0.
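
The interplay between relative confidence values and a minimum relative distance can be illustrated with plain Python. The sketch below works on the four values printed in the example, using strings in place of `Language` members. It assumes that the threshold is compared against the gap between the two highest confidence values. That is an assumption about the internals which happens to be consistent with the outputs shown in this document, not a description of the library's actual code. Both helper names are hypothetical.

```python
def confidence_of(language, confidence_values):
    """Look up a relative confidence; languages missing from the list count as 0.0."""
    return dict(confidence_values).get(language, 0.0)


def pick_language(confidence_values, minimum_relative_distance=0.0):
    """Return the top language, or None if it does not lead by a sufficient margin."""
    if not confidence_values:
        return None  # nothing could be computed for the input text
    ranked = sorted(confidence_values, key=lambda pair: pair[1], reverse=True)
    if len(ranked) == 1:
        return ranked[0][0]
    # ASSUMPTION: the distance is the gap between the two best values.
    gap = ranked[0][1] - ranked[1][1]
    if gap < minimum_relative_distance:
        return None
    return ranked[0][0]


values = [("ENGLISH", 1.00), ("FRENCH", 0.79), ("GERMAN", 0.75), ("SPANISH", 0.70)]

print(pick_language(values))            # ENGLISH
print(pick_language(values, 0.25))      # None
print(confidence_of("LATIN", values))   # 0.0
```

Under this reading, the gap between English and French is 0.21, which is below the threshold of 0.25 used in the earlier example, so no language is returned.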

By default, Lingua uses lazy-loading to load only those language models on demand which are considered relevant by the rule-based filter engine. For web services, for instance, it is rather beneficial to preload all language models into memory to avoid unexpected latency while waiting for the service response. If you want to enable the eager-loading mode, you can do it like this:

    LanguageDetectorBuilder.from_all_languages().with_preloaded_language_models().build()

Multiple instances of LanguageDetector share the same language models in memory which are accessed asynchronously by the instances.
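
The difference between lazy and eager loading with a shared model cache can be sketched with the standard library alone. This is a conceptual illustration, not Lingua's implementation: the `Detector` class, the `load_model` function and the `LOAD_LOG` list are hypothetical stand-ins, and the "model" is just a small dictionary.

```python
from functools import lru_cache

LOAD_LOG = []  # records every real (uncached) load, for demonstration


@lru_cache(maxsize=None)
def load_model(language):
    """Pretend to load an expensive language model exactly once per language."""
    LOAD_LOG.append(language)
    return {"language": language, "ngrams": {}}


class Detector:
    def __init__(self, languages, preload=False):
        self.languages = list(languages)
        if preload:
            # Eager mode: pay the loading cost up front.
            for language in self.languages:
                load_model(language)

    def models_for(self, relevant_languages):
        # Lazy mode: models are only loaded when they are first needed.
        return [load_model(lang) for lang in relevant_languages if lang in self.languages]


lazy = Detector(["ENGLISH", "GERMAN"])
lazy.models_for(["GERMAN"])                             # loads GERMAN on demand
eager = Detector(["ENGLISH", "GERMAN"], preload=True)   # GERMAN is already cached
print(LOAD_LOG)  # ['GERMAN', 'ENGLISH']
```

Because the cache lives at module level, both detector instances receive the very same model objects, and each model is loaded only once no matter how many detectors are created.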

Lingua's high detection accuracy comes at the cost of being noticeably slower than other language detectors. The large language models also consume significant amounts of memory. These requirements might not be feasible for systems running low on resources. If you want to classify mostly long texts or need to save resources, you can enable a low accuracy mode that loads only a small subset of the language models into memory:

    LanguageDetectorBuilder.from_all_languages().with_low_accuracy_mode().build()

The downside of this approach is that detection accuracy for short texts consisting of fewer than 120 characters will drop significantly. However, detection accuracy for texts which are longer than 120 characters will remain mostly unaffected.

In high accuracy mode (the default), the language detector consumes approximately 800 MB of memory if all language models are loaded. In low accuracy mode, memory consumption is reduced to approximately 60 MB.

An alternative for a smaller memory footprint and faster performance is to reduce the set of languages when building the language detector. In most cases, it is not advisable to build the detector from all supported languages. When you have knowledge about the texts you want to classify, you can almost always rule out certain languages as impossible or unlikely to occur.

There might be classification tasks where you know beforehand that your language data is definitely not written in Latin, for instance. The detection accuracy can become better in such cases if you exclude certain languages from the decision process or just explicitly include relevant languages:

    from lingua import LanguageDetectorBuilder, Language, IsoCode639_1, IsoCode639_3

    # Include all languages available in the library.
    LanguageDetectorBuilder.from_all_languages()

    # Include only languages that are not yet extinct (= currently excludes Latin).
    LanguageDetectorBuilder.from_all_spoken_languages()

    # Include only languages written with Cyrillic script.
    LanguageDetectorBuilder.from_all_languages_with_cyrillic_script()

    # Exclude only the Spanish language from the decision algorithm.
    LanguageDetectorBuilder.from_all_languages_without(Language.SPANISH)

    # Only decide between English and German.
    LanguageDetectorBuilder.from_languages(Language.ENGLISH, Language.GERMAN)

    # Select languages by ISO 639-1 code.
    LanguageDetectorBuilder.from_iso_codes_639_1(IsoCode639_1.EN, IsoCode639_1.DE)

    # Select languages by ISO 639-3 code.
    LanguageDetectorBuilder.from_iso_codes_639_3(IsoCode639_3.ENG, IsoCode639_3.DEU)

Take a look at the planned issues.

Any contributions to Lingua are very much appreciated. Please read the instructions in CONTRIBUTING.md for how to add new languages to the library.