Runs a load test on the selected HTTP or WebSockets URL. The API allows for easy integration in your own tests.

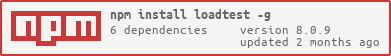

Install globally as root:

# npm install -g loadtest

On Ubuntu or Mac OS X systems install using sudo:

$ sudo npm install -g loadtest

For access to the API just add package loadtest to your package.json devDependencies:

{

...

"devDependencies": {

"loadtest": "*"

},

...

}

Use the loadtest version that matches your Node.js version; versions 6 and later require Node.js v16 or later:

- Node.js v16 or later: ^6.0.0

- Node.js v10 or later: ^5.0.0

- Node.js v8 or later: 4.x.y

- Node.js v6 or earlier: ^3.1.0

Why use loadtest instead of any other of the available tools, notably Apache ab?

loadtest allows you to configure and tweak requests to simulate real world loads.

Run as a script to load test a URL:

$ loadtest [-n requests] [-c concurrency] [-k] URL

The URL can be "http://", "https://" or "ws://".

Set the max number of requests with -n, and the desired level of concurrency with the -c parameter.

Use keep-alive connections with -k whenever it makes sense, which should be always except when you are testing opening and closing connections.

Single-dash parameters (e.g. -n) are designed to be compatible with Apache ab, except that here you can add the parameters after the URL.

To get online help, run loadtest without parameters:

$ loadtest

The set of basic options is designed to be compatible with Apache ab. But while ab can only set a concurrency level and lets the server adjust to it, loadtest allows you to set a rate of requests per second with the --rps option.

Example:

loadtest -c 10 --rps 200 http://mysite.com/

This command sends exactly 200 requests per second with concurrency 10,

so you can see how your server copes with sustained rps.

Even if ab reported a rate of 200 rps,

you will be surprised to see how a constant rate of requests per second affects performance:

no longer are the requests adjusted to the server, but the server must adjust to the requests!

Rps rates are usually lowered dramatically, at least 20~25% (in our example from 200 to 150 rps),

but the resulting figure is much more robust.

loadtest is also quite extensible.

Using the provided API it is very easy to integrate loadtest with your package, and run programmatic load tests.

loadtest makes it very easy to run load tests as part of systems tests, before deploying a new version of your software.

The result includes mean response times and percentiles,

so that you can abort deployment e.g. if 99% of the requests don't finish in 10 ms or less.
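A deployment gate along those lines could look like the following minimal sketch. It assumes a result object with the `percentiles` and `totalErrors` fields shown in the API result example; the function name `passesGate` and its thresholds are illustrative and not part of loadtest.

```javascript
// Hypothetical helper: decide whether a load test result is good enough to deploy.
// `result` is assumed to have the shape shown in the API result example.
function passesGate(result, {maxP99Ms = 10, maxErrors = 0} = {}) {
  const p99 = result.percentiles['99']
  if (p99 === undefined) {
    throw new Error('Result has no 99th percentile')
  }
  return p99 <= maxP99Ms && result.totalErrors <= maxErrors
}

// Sample values taken from the simplified result example in this document
const sample = {
  percentiles: {'50': 7, '90': 10, '95': 11, '99': 15},
  totalErrors: 3,
}
console.log(passesGate(sample)) // false: p99 is 15 ms and there are 3 errors
console.log(passesGate(sample, {maxP99Ms: 20, maxErrors: 5})) // true
```

In a deployment script the boolean would typically decide the process exit code, so that the pipeline stops when the gate fails.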

loadtest saturates a single CPU pretty quickly. Do not use loadtest in single-process mode if the Node.js process is above 100% usage in top, which happens approximately when your load is above 1000~4000 rps. (You can measure the practical limits of loadtest on your specific test machines by running it against a simple test server and seeing when it reaches 100% CPU.)

In this case try multi-process mode using the --cores parameter, see below.

There are better tools for that use case:

- Apache ab has great performance, but it is also limited by the performance of a single CPU. Its practical limit is somewhere around 40 krps.

- weighttp is also ab-compatible and is supposed to be very fast (the author has not personally used it).

- wrk is multithreaded and fit for use when multiple CPUs are required or available. It may need installing from source though, and its interface is not ab-compatible.

The following parameters are compatible with Apache ab.

Number of requests to send out. Default is no limit; will keep on sending if not specified.

Note: the total number of requests sent can be bigger than the parameter if there is a concurrency parameter;

loadtest will report just the first n.

loadtest will create a certain number of clients; this parameter controls how many. Requests from them will arrive concurrently to the server. Default value is 1.

Note: requests are not sent in parallel (from different processes), but concurrently (a second request may be sent before the first has been answered).

Max number of seconds to wait until requests no longer go out. Default is no limit; will keep on sending if not specified.

Note: this is different than Apache ab, which stops receiving requests after the given seconds.

Open connections using keep-alive: use header 'Connection: Keep-alive' instead of 'Connection: Close'.

Note: Uses agentkeepalive, which performs better than the default node.js agent.

Send a cookie with the request. The cookie name=value is then sent to the server.

This parameter can be repeated as many times as needed.

Send a custom header with the request. The line header:value is then sent to the server.

This parameter can be repeated as many times as needed.

Example:

$ loadtest -H user-agent:tester/0.4 ...

Note: if not present, loadtest will add a few headers on its own: the "host" header parsed from the URL, a custom user agent "loadtest/" plus version (e.g. loadtest/1.1.0), and an accept header for "*/*".

Note: when the same header is sent several times, only the last value will be considered. If you want to send multiple values with a header, separate them with semicolons, and quote the argument so that the shell does not treat the semicolon as a command separator:

$ loadtest -H "accept:text/plain;text/html" ...

Note: if you need to add a header with spaces, be sure to surround both header and value with quotes:

$ loadtest -H "Authorization: Basic xxx=="
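The header rules above (default headers, last value wins, values that contain spaces) can be pictured with a short sketch. This is not loadtest's own code: `buildHeaders` is a hypothetical function, and lowercasing header names is an assumption made here to detect repeats.

```javascript
// Hypothetical sketch of the header rules described above; not loadtest's own code.
function buildHeaders(url, pairs, version = '1.1.0') {
  // Defaults that the text says are added when not supplied
  const headers = {
    host: new URL(url).host,
    'user-agent': `loadtest/${version}`,
    accept: '*/*',
  }
  for (const pair of pairs) {
    // Split on the first colon only, so values may contain colons
    const index = pair.indexOf(':')
    if (index === -1) {
      throw new Error(`Invalid header: ${pair}`)
    }
    // Lowercasing names is an assumption of this sketch
    const name = pair.slice(0, index).trim().toLowerCase()
    const value = pair.slice(index + 1).trim()
    // A repeated header overwrites the previous value: the last one wins
    headers[name] = value
  }
  return headers
}

const headers = buildHeaders('http://localhost:7357/', [
  'accept:text/plain',
  'accept:text/plain;text/html',
  'Authorization: Basic xxx==',
])
console.log(headers)
```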

Set the MIME content type for POST data. Default: text/plain.

Send the string as the POST body. E.g.: -P '{"key": "a9acf03f"}'

Send the string as the PATCH body. E.g.: -A '{"key": "a9acf03f"}'

Set method that will be sent to the test URL.

Accepts: GET, POST, PUT, DELETE, PATCH,

and lowercase versions. Default is GET.

Example: -m POST.

Add some data to send in the body. It does not support method GET.

Requires setting the method with -m and the type with -T.

Example: --data '{"username": "test", "password": "test"}' -T 'application/x-www-form-urlencoded' -m POST

Send the data contained in the given file in the POST body.

Remember to set -T to the correct content-type.

If POST-file has .js extension it will be imported. It should be a valid node module and it

should export a default function, which is invoked with an automatically generated request identifier

to provide the body of each request.

This is useful if you want to generate request bodies dynamically and vary them for each request.

Example:

export default function request(requestId) {

// this object will be serialized to JSON and sent in the body of the request

return {

key: 'value',

requestId: requestId

}

}

See sample file in sample/post-file.js, and test in test/body-generator.js.
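As a further illustration, a generator can derive different field values from the request identifier. The sketch below is hypothetical: the field names and the user list are invented, the identifier is treated as an opaque value, and in a real POST file the function would be the module's default export.

```javascript
// Hypothetical body generator; in a real POST file this function
// would be the module's default export.
function request(requestId) {
  const users = ['alice', 'bob', 'carol']
  // Derive a stable index from the identifier so that the same id picks the same user
  let sum = 0
  for (const char of String(requestId)) {
    sum += char.codePointAt(0)
  }
  return {
    requestId,
    username: users[sum % users.length],
    createdAt: new Date().toISOString(),
  }
}

console.log(JSON.stringify(request('abc')))
```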

Send the data contained in the given file as a PUT request.

Remember to set -T to the correct content-type.

If PUT-file has .js extension it will be imported. It should be a valid node module and it

should export a default function, which is invoked with an automatically generated request identifier

to provide the body of each request.

This is useful if you want to generate request bodies dynamically and vary them for each request.

For examples see above for -p.

Send the data contained in the given file as a PATCH request.

Remember to set -T to the correct content-type.

If PATCH-file has .js extension it will be imported. It should be a valid node module and it

should export a default function, which is invoked with an automatically generated request identifier

to provide the body of each request.

This is useful if you want to generate request bodies dynamically and vary them for each request.

For examples see above for -p.

Recover from errors. Always active: loadtest does not stop on errors. After the tests are finished, if there were errors a report with all error codes will be shown.

The TLS/SSL method to use. (e.g. TLSv1_method)

Example:

$ loadtest -n 1000 -s TLSv1_method https://www.example.com

Show version number and exit.

The following parameters are not compatible with Apache ab.

Controls the number of requests per second that are sent.

Must be a whole number: fractional values such as --rps 0.5 are not accepted.

In this mode each request is not sent as soon as the previous one has been answered, but periodically, even if previous requests have not been answered yet.

Note: Concurrency doesn't affect the final number of requests per second, since rps will be shared by all the clients. E.g.:

loadtest <url> -c 10 --rps 10

will send a total of 10 rps to the given URL, from 10 different clients (each client will send 1 request per second).

Beware: if concurrency is too low then it is possible that there will not be enough clients

to send all of the rps, adjust it with -c if needed.

Note: --rps is not supported for websockets.
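The arithmetic behind the shared rate can be sketched as follows. The helper names are hypothetical, and the sketch assumes that the rate is split evenly among clients, as the example above suggests.

```javascript
// Requests per second that each client has to send when the global rate is shared
function perClientRate(rps, concurrency) {
  return rps / concurrency
}

// Milliseconds between consecutive requests from a single client
function perClientIntervalMs(rps, concurrency) {
  return 1000 / perClientRate(rps, concurrency)
}

console.log(perClientRate(10, 10))         // 1 request per second per client
console.log(perClientIntervalMs(10, 10))   // 1000 ms between requests
console.log(perClientIntervalMs(2000, 1))  // 0.5 ms: very hard for one client to sustain
console.log(perClientIntervalMs(2000, 20)) // 10 ms: a more comfortable interval
```

The third line shows why a low concurrency can be a problem: a single client would need to send a request every half millisecond, while spreading the same rate over 20 clients relaxes the interval to 10 ms.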

Start loadtest in multi-process mode on a number of cores simultaneously.

Useful when a single CPU is saturated.

Forks the requested number of processes using the

Node.js cluster module.

In this mode the total number of requests and the rps rate are shared among all processes. The result returned is the aggregation of results from all cores.

Note: this option is not available in the API, where it runs just in the provided process.

Timeout for each generated request in milliseconds. Setting this to 0 disables timeout (default).

Use a custom request generator function from an external file.

See an example of a request generator module in --requestGenerator below.

Also see sample/request-generator.js for some sample code including a body

(or sample/request-generator.ts for ES6/TypeScript).

Open connections using keep-alive.

Note: instead of using the default agent, this option is now an alias for -k.

Do not show any messages.

Show debug messages.

Note: deprecated in version 6+.

Allow invalid and self-signed certificates over https.

Sets the certificate for the http client to use. Must be used with --key.

Sets the key for the http client to use. Must be used with --cert.

loadtest bundles a test server. To run it:

$ testserver-loadtest [options] [port]

This command will show the number of requests received per second, the latency in answering requests and the headers for selected requests.

The server returns a short text 'OK' for every request, so that latency measurements don't have to take into account request processing.

If no port is given then default port 7357 will be used. The optional delay instructs the server to wait for the given number of milliseconds before answering each request, to simulate a busy server. You can also simulate errors on a given percent of requests.

The following optional parameters are available.

Wait the specified number of milliseconds before answering each request.

Return the given error for every request.

Return an error (default 500) only for the specified % of requests.

Number of cores to use. If not 1, will start in multi-process mode.

Note: since version v6.3.0 the test server uses half the available cores by default;

use --cores 1 to use in single-process mode.
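The delay, error and percentage options can be pictured with a small request handler. This is a hypothetical sketch and not the bundled server's code: `createHandler` and `fakeResponse` are invented names, and the random source is injectable so that the behaviour can be checked deterministically.

```javascript
// Hypothetical request handler mimicking the options described above
function createHandler({delay = 0, error, percent} = {}, random = Math.random) {
  // With a percentage but no explicit code, fall back to 500 as the text describes
  const code = error || (percent === undefined ? 0 : 500)
  const share = percent === undefined ? 100 : percent
  return function handler(request, response) {
    // Fail when an error code applies and this request falls within the percentage
    const fail = code > 0 && random() * 100 < share
    const reply = () => {
      response.statusCode = fail ? code : 200
      response.end(fail ? `Error ${code}` : 'OK')
    }
    if (delay > 0) {
      setTimeout(reply, delay)
    } else {
      reply()
    }
  }
}

// Minimal stand-in for an http.ServerResponse, used only for this demonstration
function fakeResponse() {
  return {statusCode: 0, body: '', end(text) { this.body = text }}
}

const always = fakeResponse()
createHandler({error: 503})({}, always)
console.log(always.statusCode, always.body) // 503 Error 503
```

A handler like this could be passed to Node's `http.createServer()` to obtain a comparable server for experiments.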

Let us now see how to measure the performance of the test server.

First we install loadtest globally:

$ sudo npm install -g loadtest

Now we start the test server:

$ testserver-loadtest --cores 2

Listening on http://localhost:7357/

Listening on http://localhost:7357/

On a different console window we run a load test against it for 20 seconds with concurrency 10 (only relevant results are shown):

$ loadtest http://localhost:7357/ -t 20 -c 10

...

Requests: 9589, requests per second: 1915, mean latency: 10 ms

Requests: 16375, requests per second: 1359, mean latency: 10 ms

Requests: 16375, requests per second: 0, mean latency: 0 ms

...

Completed requests: 16376

Requests per second: 368

Total time: 44.503181166000005 s

Percentage of the requests served within a certain time

50% 4 ms

90% 5 ms

95% 6 ms

99% 14 ms

100% 35997 ms (longest request)

The result was quite erratic, with some requests taking up to 36 seconds; this suggests that Node.js is queueing some requests for a long time, and answering them irregularly. Now we will try a fixed rate of 1000 rps:

$ loadtest http://localhost:7357/ -t 20 -c 10 --rps 1000

...

Requests: 4551, requests per second: 910, mean latency: 0 ms

Requests: 9546, requests per second: 1000, mean latency: 0 ms

Requests: 14549, requests per second: 1000, mean latency: 20 ms

...

Percentage of the requests served within a certain time

50% 1 ms

90% 2 ms

95% 8 ms

99% 133 ms

100% 1246 ms (longest request)

Again erratic results. In fact if we leave the test running for 50 seconds we start seeing errors:

$ loadtest http://localhost:7357/ -t 50 -c 10 --rps 1000

...

Requests: 29212, requests per second: 496, mean latency: 14500 ms

Errors: 426, accumulated errors: 428, 1.5% of total requests

Let us lower the rate to 500 rps:

$ loadtest http://localhost:7357/ -t 20 -c 10 --rps 500

...

Requests: 0, requests per second: 0, mean latency: 0 ms

Requests: 2258, requests per second: 452, mean latency: 0 ms

Requests: 4757, requests per second: 500, mean latency: 0 ms

Requests: 7258, requests per second: 500, mean latency: 0 ms

Requests: 9757, requests per second: 500, mean latency: 0 ms

...

Requests per second: 500

Completed requests: 9758

Total errors: 0

Total time: 20.002735398000002 s

Requests per second: 488

Total time: 20.002735398000002 s

Percentage of the requests served within a certain time

50% 1 ms

90% 1 ms

95% 1 ms

99% 14 ms

100% 148 ms (longest request)

Much better: a sustained rate of 500 rps is seen most of the time, 488 rps average, and 99% of requests answered within 14 ms.
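The average figure can be reproduced from the totals in the output. The sketch below assumes that the reported average is simply completed requests divided by total time, rounded to the nearest integer; `averageRps` is a hypothetical helper.

```javascript
// Average rate over the whole run: completed requests divided by total time
function averageRps(completedRequests, totalSeconds) {
  return Math.round(completedRequests / totalSeconds)
}

console.log(averageRps(9758, 20.002735398000002))  // 488, the run at 500 rps
console.log(averageRps(16376, 44.503181166000005)) // 368, the first run without --rps
```

The second line also shows why the first run reported only 368 rps overall although the periodic lines showed well over 1000 rps: its total time was 44.5 seconds, more than double the 20 second limit.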

We now know that our server can accept 500 rps without problems. Not bad for a single-process naïve Node.js server... We may refine our results further to find at which point from 500 to 1000 rps our server breaks down.

But instead let us research how to improve the result. One obvious candidate is to add keep-alive to the requests so we don't have to create a new connection for every request. The result (with the same test server) is impressive:

$ loadtest http://localhost:7357/ -t 20 -c 10 -k

...

Requests per second: 4099

Percentage of the requests served within a certain time

50% 2 ms

90% 3 ms

95% 3 ms

99% 10 ms

100% 25 ms (longest request)

Now we're talking! The steady rate also goes up to 2 krps:

$ loadtest http://localhost:7357/ -t 20 -c 10 --keepalive --rps 2000

...

Requests per second: 1950

Percentage of the requests served within a certain time

50% 1 ms

90% 2 ms

95% 2 ms

99% 7 ms

100% 20 ms (longest request)

Not bad at all: 2 krps with a single core, sustained. However, if you try to push it beyond that, at 3 krps it will fail miserably.

loadtest is not limited to running from the command line; it can be controlled using an API,

thus allowing you to load test your application in your own tests.

To run a load test, just await the exported function loadTest() with the desired options, described below:

import {loadTest} from 'loadtest'

const options = {

url: 'http://localhost:8000',

maxRequests: 1000,

}

const result = await loadTest(options)

result.show()

console.log('Tests run successfully')

The call returns a Result object that contains all info about the load test, also described below.

Call result.show() to display the results in the standard format on the console.

As a legacy from before promises existed,

if an optional callback is passed as second parameter then it will not behave as async:

the callback function(error, result) will be invoked when the max number of requests is reached,

or when the max number of seconds has elapsed.

import {loadTest} from 'loadtest'

const options = {

url: 'http://localhost:8000',

maxRequests: 1000,

}

loadTest(options, function(error, result) {

if (error) {

return console.error('Got an error: %s', error)

}

result.show()

console.log('Tests run successfully')

})

Beware: if neither maxRequests nor maxSeconds is set, the tests will run forever and will never call the callback.

The latency result returned at the end of the load test contains a full set of data, including: mean latency, number of errors and percentiles. A simplified example follows:

{

url: 'http://localhost:80/',

maxRequests: 1000,

maxSeconds: 0,

concurrency: 10,

agent: 'none',

requestsPerSecond: undefined,

totalRequests: 1000,

percentiles: {

'50': 7,

'90': 10,

'95': 11,

'99': 15

},

effectiveRps: 2824,

elapsedSeconds: 0.354108,

meanLatencyMs: 7.72,

maxLatencyMs: 20,

totalErrors: 3,

errorCodes: {

'0': 1,

'500': 2

},

}

The result object also has a result.show() function that displays the results on the console in the standard format.
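For custom reporting, the fields of the result can be combined into a few derived figures. The following sketch uses the values from the simplified example above; `summarize` is a hypothetical helper, not a loadtest function.

```javascript
// Hypothetical helper: derive a few figures from a result object
function summarize(result) {
  const errorRate = result.totalRequests > 0 ? result.totalErrors / result.totalRequests : 0
  return {
    // Share of requests that ended in error, as a percentage with two decimals
    errorPercent: Number((errorRate * 100).toFixed(2)),
    // Requests divided by elapsed time, to compare with effectiveRps
    computedRps: Math.round(result.totalRequests / result.elapsedSeconds),
    // One line per error code, e.g. '500: 2'
    errorsByCode: Object.entries(result.errorCodes || {}).map(([code, count]) => `${code}: ${count}`),
  }
}

const result = {
  totalRequests: 1000,
  elapsedSeconds: 0.354108,
  totalErrors: 3,
  errorCodes: {'0': 1, '500': 2},
}
console.log(summarize(result))
// { errorPercent: 0.3, computedRps: 2824, errorsByCode: [ '0: 1', '500: 2' ] }
```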

All options but url are, as their name implies, optional.

The URL to invoke. Mandatory.

How many clients to start in parallel.

A max number of requests; after they are reached the test will end.

Note: the actual number of requests sent can be bigger if there is a concurrency level; loadtest will report just on the max number of requests.

Max number of seconds to run the tests.

Note: after the given number of seconds loadtest will stop sending requests, but may continue receiving responses afterwards.

Timeout for each generated request in milliseconds. Setting this to 0 disables timeout (default).

An array of cookies to send. Each cookie should be a string of the form name=value.

A map of headers. Each header should be an entry in the map with the value given as a string. If you want to have several values for a header, write a single value separated by semicolons, like this:

{

accept: "text/plain;text/html"

}

Note: when using the API, the "host" header is not inferred from the URL but needs to be sent explicitly.

The method to use: POST, PUT. Default: GET.

The contents to send in the body of the message, for POST or PUT requests. Can be a string or an object (which will be converted to JSON).

The MIME type to use for the body. Default content type is text/plain.

How many requests each client will send per second.

Use a custom request generator function. The request needs to be generated synchronously and returned when this function is invoked.

Example request generator function could look like this:

function(params, options, client, callback) {
  const message = generateMessage();
  // set the headers before creating the request, so that they are sent with it
  options.headers['Content-Length'] = Buffer.byteLength(message);
  options.headers['Content-Type'] = 'application/x-www-form-urlencoded';
  const request = client(options, callback);
  request.write(message);
  request.end();
  return request;
}

See sample/request-generator.js for some sample code including a body

(or sample/request-generator.ts for ES6/TypeScript).
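The generateMessage() helper used in the example above is not defined in this document. One possible implementation, given purely as an assumption, builds a form-encoded string with the standard URLSearchParams class; the field names are invented for illustration.

```javascript
// Hypothetical implementation of the generateMessage() helper used above
let counter = 0
function generateMessage() {
  counter += 1
  // URLSearchParams serializes to the application/x-www-form-urlencoded format
  const form = new URLSearchParams({username: `user ${counter}`, password: 'p&ss'})
  return form.toString()
}

const message = generateMessage()
console.log(message) // username=user+1&password=p%26ss
// Content-Length counts bytes, not characters, hence Buffer.byteLength()
console.log(Buffer.byteLength(message))
```

For plain ASCII messages the byte length equals the string length, but the two differ as soon as the message contains multi-byte characters, which is why the byte length is the safer value for the Content-Length header.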

Use an agent with 'Connection: Keep-alive'.

Note: Uses agentkeepalive, which performs better than the default node.js agent.

Do not show any messages.

Note: deprecated in version 6+, shows a warning.

The given string will be replaced in the final URL with a unique index.

E.g.: if URL is http://test.com/value and indexParam=value, then the URL

will be:

- http://test.com/1

- http://test.com/2

- ...

- The body will also be replaced: body:{ userid: id_value } will be body:{ userid: id_1 }

A function that would be executed to replace the value identified through indexParam through a custom value generator.

E.g.: if URL is http://test.com/value and indexParam=value and

indexParamCallback: function customCallBack() {

return Math.floor(Math.random() * 10); //returns a random integer from 0 to 9

}

then the URL could be:

- http://test.com/1 (Randomly generated integer 1)

- http://test.com/5 (Randomly generated integer 5)

- http://test.com/6 (Randomly generated integer 6)

- http://test.com/8 (Randomly generated integer 8)

- ...

- The body will also be replaced: body:{ userid: id_value } will be body:{ userid: id_<value from callback> }
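The substitution described for indexParam and indexParamCallback can be sketched as below. This is an illustration only, not loadtest's implementation: `applyIndex` is a hypothetical function, and replacing every occurrence of the placeholder is an assumption, since the text only shows a single occurrence.

```javascript
// Hypothetical sketch of the indexParam / indexParamCallback substitution
function applyIndex({url, body, indexParam, indexParamCallback}, index) {
  // Use the callback when present, otherwise the running index
  const value = String(indexParamCallback ? indexParamCallback() : index)
  // Replace every occurrence of the placeholder (an assumption of this sketch)
  const replace = text => text.split(indexParam).join(value)
  return {
    url: replace(url),
    // The body is serialized so that placeholders inside nested values are replaced too
    body: body === undefined ? undefined : JSON.parse(replace(JSON.stringify(body))),
  }
}

const options = {url: 'http://test.com/value', body: {userid: 'id_value'}, indexParam: 'value'}
console.log(applyIndex(options, 1))
// { url: 'http://test.com/1', body: { userid: 'id_1' } }
console.log(applyIndex({...options, indexParamCallback: () => 7}, 1))
// { url: 'http://test.com/7', body: { userid: 'id_7' } }
```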

Allow invalid and self-signed certificates over https.

The TLS/SSL method to use. (e.g. TLSv1_method)

Example:

import {loadTest} from 'loadtest'

const options = {

url: 'https://www.example.com',

maxRequests: 100,

secureProtocol: 'TLSv1_method'

}

loadTest(options, function(error) {

if (error) {

return console.error('Got an error: %s', error)

}

console.log('Tests run successfully')

})

If present, this function executes after every request operation completes. Provides immediate access to the test result while the test batch is still running. This can be used for more detailed custom logging or developing your own spreadsheet or statistical analysis of the result.

The result and error passed to the callback are in the same format as the result passed to the final callback.

In addition, the following three properties are added to the result object:

- requestElapsed: time in milliseconds it took to complete this individual request.

- requestIndex: 0-based index of this particular request in the sequence of all requests to be made.

- instanceIndex: the loadtest(...) instance index. This is useful if you call loadtest() more than once.

You will need to check if error is populated in order to determine which object to check for these properties.
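A callback that follows this rule and collects rows for a spreadsheet might look like the sketch below. It assumes, as the note above implies, that the extra properties are found on whichever of the two objects is populated; `createCollector` is a hypothetical helper.

```javascript
// Hypothetical collector: gathers per-request timings as rows for later analysis
function createCollector() {
  const rows = []
  function statusCallback(error, result) {
    // Read the extra properties from whichever object is populated
    const source = error || result
    rows.push({
      index: source.requestIndex,
      elapsedMs: source.requestElapsed,
      ok: !error,
    })
  }
  // Render the rows as CSV text, ready to import into a spreadsheet
  const toCsv = () => ['index,elapsedMs,ok', ...rows.map(row => `${row.index},${row.elapsedMs},${row.ok}`)].join('\n')
  return {statusCallback, rows, toCsv}
}

// Simulated invocations with made-up values, one success and one error
const collector = createCollector()
collector.statusCallback(null, {requestIndex: 0, requestElapsed: 12})
collector.statusCallback({requestIndex: 1, requestElapsed: 30}, null)
console.log(collector.toCsv())
```

The `statusCallback` function of the collector would be passed in the options, and `toCsv()` called once the test has finished.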

The second parameter contains info about the current request:

{

host: 'localhost',

path: '/',

method: 'GET',

statusCode: 200,

body: '<html><body>hi</body></html>',

headers: [...]

}

Example:

import {loadTest} from 'loadtest'

function statusCallback(error, result, latency) {

console.log('Current latency %j, result %j, error %j', latency, result, error)

console.log('----')

console.log('Request elapsed milliseconds: ', result.requestElapsed)

console.log('Request index: ', result.requestIndex)

console.log('Request loadtest() instance index: ', result.instanceIndex)

}

const options = {

url: 'http://localhost:8000',

maxRequests: 1000,

statusCallback: statusCallback

}

loadTest(options, function(error) {

if (error) {

return console.error('Got an error: %s', error)

}

console.log('Tests run successfully')

})

In some situations request data needs to be available in the statusCallback.

This data can be assigned to request.labels in the requestGenerator:

const options = {

// ...

requestGenerator: (params, options, client, callback) => {

// ...

const randomInputData = Math.random().toString().substr(2, 8);

const message = JSON.stringify({ randomInputData })

const request = client(options, callback);

request.labels = randomInputData;

request.write(message);

return request;

}

};

Then in statusCallback the labels can be accessed through result.labels:

function statusCallback(error, result, latency) {

console.log(result.labels);

}

Warning: The format for statusCallback changed in version 2.0.0. It used to be statusCallback(latency, result, error); it was changed to conform to the usual Node.js standard.

A function that is executed after every request, before its status is added to the final statistics.

This can be used when you want to mark a result with a 200 HTTP status code as failed or as an error.

The result object passed to this callback function has the same fields as the result object passed to statusCallback.

customError can be added to mark this result as failed or error. customErrorCode will be provided in the final statistics, in addition to the HTTP status code.

Example:

function contentInspector(result) {

if (result.statusCode == 200) {

const body = JSON.parse(result.body)

// how to examine the body depends on the content that the service returns

if (body.status.err_code !== 0) {

result.customError = body.status.err_code + " " + body.status.msg

result.customErrorCode = body.status.err_code

}

}

}

To start the test server use the exported function startServer() with a set of options:

import {startServer} from 'loadtest'

const server = await startServer({port: 8000})

// do your thing

await server.close()

This function returns when the server is up and running, with an HTTP server which can be close()d when it is no longer useful.

As a legacy from before promises existed,

if an optional callback is passed as second parameter then it will not behave as async:

const server = startServer({port: 8000}, error => console.error(error))

The following options are available.

Optional port to use for the server.

Note: the default port is 7357, since port 80 requires special privileges.

Wait the given number of milliseconds to answer each request.

Return an HTTP error code.

Return an HTTP error code only for the given % of requests. If no error code was specified, default is 500.

It is possible to put configuration options in a file named .loadtestrc in your working directory or in a file whose name is specified in the loadtest entry of your package.json. The options in the file will be used only if they are not specified in the command line.

The expected structure of the file is the following:

{

"delay": "Delay the response for the given milliseconds",

"error": "Return an HTTP error code",

"percent": "Return an error (default 500) only for some % of requests",

"maxRequests": "Number of requests to perform",

"concurrency": "Number of requests to make",

"maxSeconds": "Max time in seconds to wait for responses",

"timeout": "Timeout for each request in milliseconds",

"method": "method to url",

"contentType": "MIME type for the body",

"body": "Data to send",

"file": "Send the contents of the file",

"cookies": {

"key": "value"

},

"headers": {

"key": "value"

},

"secureProtocol": "TLS/SSL secure protocol method to use",

"insecure": "Allow self-signed certificates over https",

"cert": "The client certificate to use",

"key": "The client key to use",

"requestGenerator": "JS module with a custom request generator function",

"recover": "Do not exit on socket receive errors (default)",

"agentKeepAlive": "Use a keep-alive http agent",

"proxy": "Use a proxy for requests",

"requestsPerSecond": "Specify the requests per second for each client",

"indexParam": "Replace the value of given arg with an index in the URL"

}

See sample file in sample/.loadtestrc.

For more information about the actual configuration file name, read the confinode user manual. In the list of the supported file types, please note that only synchronous loaders can be used with loadtest.
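The precedence rule (file options apply only when the command line does not specify them) can be pictured with a small merge function. This is a hypothetical sketch of the rule, not the code loadtest uses; `mergeOptions` and the sample values are invented.

```javascript
// Hypothetical merge: file options apply only where the command line gave no value
function mergeOptions(fileOptions, cliOptions) {
  const merged = {...fileOptions}
  for (const [key, value] of Object.entries(cliOptions)) {
    // Options left undefined on the command line keep the value from the file
    if (value !== undefined) {
      merged[key] = value
    }
  }
  return merged
}

const fromFile = {concurrency: 10, maxSeconds: 20, agentKeepAlive: true}
const fromCli = {concurrency: 50, maxSeconds: undefined}
console.log(mergeOptions(fromFile, fromCli))
// { concurrency: 50, maxSeconds: 20, agentKeepAlive: true }
```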

The file test/integration.js shows a complete example, which is also a full integration test: it starts the server, sends 1000 requests, waits for the callback and closes down the server.

Version 3.x uses ES2015 (ES6) features,

such as const or let and arrow functions.

For ES5 support please use versions 2.x.

Copyright (c) 2013-9 Alex Fernández and contributors.

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the 'Software'), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED 'AS IS', WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.