Perceptual Similarity Metric and Dataset [Project Page]

The Unreasonable Effectiveness of Deep Features as a Perceptual Metric

Richard Zhang, Phillip Isola, Alexei A. Efros, Eli Shechtman, Oliver Wang.

In CVPR, 2018.

This repository contains our perceptual metric (LPIPS) and dataset (BAPPS). The metric can also be used as a "perceptual loss". The code uses PyTorch; a TensorFlow alternative is here.

Table of Contents

- Learned Perceptual Image Patch Similarity (LPIPS) metric
  a. Basic Usage: if you just want to run the metric through the command line, this is all you need.
  b. "Perceptual Loss" usage
  c. About the metric
- Berkeley-Adobe Perceptual Patch Similarity (BAPPS) dataset
  a. Download
  b. Evaluation
  c. About the dataset
  d. Train the metric using the dataset

- Install PyTorch 0.4+ and torchvision from http://pytorch.org

pip install -r requirements.txt

- Clone this repo:

git clone https://github.com/richzhang/PerceptualSimilarity

cd PerceptualSimilarity

Evaluate the distance between image patches. Higher means further/more different.

Take the distance between:

- Two images: python compute_dists.py --path0 imgs/ex_ref.png --path1 imgs/ex_p0.png --use_gpu
- Two directories: python compute_dists_dirs.py --dir0 imgs/ex_dir0 --dir1 imgs/ex_dir1 --out imgs/example_dists.txt --use_gpu

File test_network.py shows example usage. The following code loads the model and computes the distance between two batches of patches.

from models import dist_model as dm

model = dm.DistModel()

model.initialize(use_gpu=True)

d = model.forward(im0,im1)

Variables im0, im1 are PyTorch tensors with shape Nx3xHxW (N patches of size HxW, RGB images scaled in [-1,+1]). This returns d, a length N numpy array.
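The input convention above (Nx3xHxW, RGB, values in [-1,+1]) is easy to get wrong when starting from 8-bit images. The following is a minimal sketch, assuming the image is already loaded as an HxWx3 uint8 NumPy array. The helper name to_model_input is hypothetical and not part of this repository.

```python
import numpy as np


def to_model_input(image_uint8):
    """Convert an HxWx3 uint8 RGB image to a 1x3xHxW float32 array in [-1, +1].

    Hypothetical helper for illustration; not part of this repository.
    """
    if image_uint8.ndim != 3 or image_uint8.shape[2] != 3:
        raise ValueError("expected an HxWx3 RGB image")
    scaled = image_uint8.astype(np.float32) / 127.5 - 1.0  # 0 -> -1, 255 -> +1
    chw = np.transpose(scaled, (2, 0, 1))                   # HxWx3 -> 3xHxW
    return chw[np.newaxis, ...]                             # add batch axis: 1x3xHxW


# A 2x2 dummy image: one black, one white, and two gray pixels.
dummy = np.array([[[0, 0, 0], [255, 255, 255]],
                  [[128, 128, 128], [64, 64, 64]]], dtype=np.uint8)
batch = to_model_input(dummy)
print(batch.shape, batch.min(), batch.max())
```

The resulting array can be wrapped with torch.from_numpy before it is passed to model.forward.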

Run python test_network.py to compute the distance from the example reference image ex_ref.png to the distorted images ex_p0.png and ex_p1.png. Before running it, which do you think should be closer?

Some Options. By default in model.initialize:

- net='alex': Network alex is fastest, performs the best, and is the default. You can instead use squeeze or vgg.
- model='net-lin': This adds a linear calibration on top of intermediate features in the net. Set this to model=net to equally weight all the features.

File perceptual_loss.py shows how to iteratively optimize using the metric. Run python perceptual_loss.py for a demo. The code can also be used to implement vanilla VGG loss, without our learned weights.

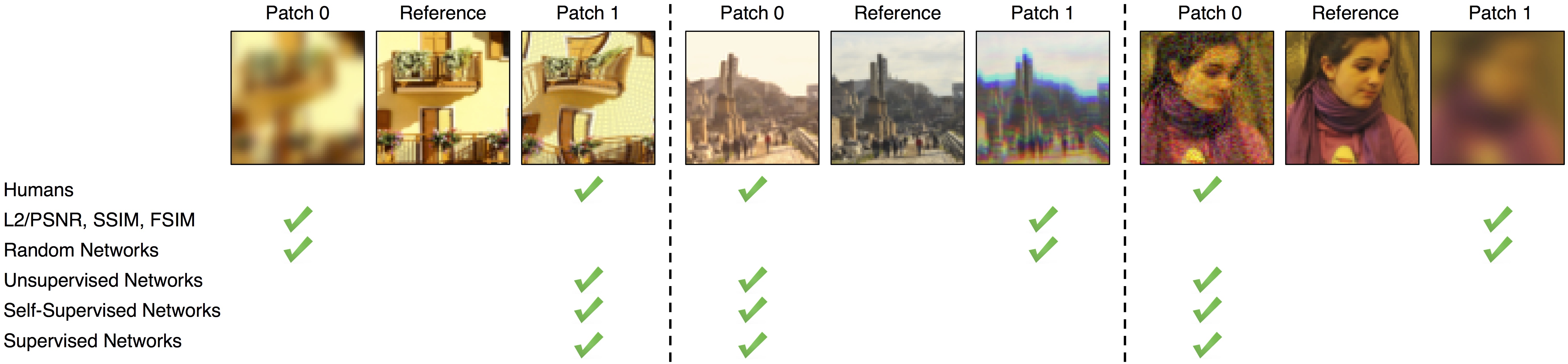

We found that deep network activations work surprisingly well as a perceptual similarity metric. This was true across network architectures (SqueezeNet [2.8 MB], AlexNet [9.1 MB], and VGG [58.9 MB] provided similar scores) and supervisory signals (unsupervised, self-supervised, and supervised all perform strongly). We slightly improved scores by linearly "calibrating" networks - adding a linear layer on top of off-the-shelf classification networks. We provide 3 variants, using linear layers on top of the SqueezeNet, AlexNet (default), and VGG networks.
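The "calibration" described above can be pictured as a per-channel weighting of squared feature differences. The sketch below illustrates the idea for a single layer, in the spirit of the formulation in the paper: unit-normalize the activations along the channel axis, take the squared difference, weight each channel, sum over channels, and average over spatial positions. It is an illustration under stated assumptions, not this repository's implementation: the function name, the random stand-in activations, and the weights are all hypothetical, and a full metric would combine this quantity over several layers.

```python
import numpy as np


def layer_distance(feat0, feat1, channel_weights=None, eps=1e-10):
    """Distance between two CxHxW activation maps from one network layer.

    Hypothetical helper for illustration only.
    channel_weights=None weights all channels equally (the uncalibrated case);
    a length-C array of non-negative weights plays the role of the linear layer.
    """
    def unit_normalize(feat):
        # Scale the channel vector at every spatial position to unit length.
        norm = np.sqrt(np.sum(feat ** 2, axis=0, keepdims=True))
        return feat / (norm + eps)

    diff_sq = (unit_normalize(feat0) - unit_normalize(feat1)) ** 2  # CxHxW
    if channel_weights is None:
        channel_weights = np.ones(diff_sq.shape[0])
    weighted = diff_sq * channel_weights[:, None, None]  # weight each channel
    return float(weighted.sum(axis=0).mean())            # sum channels, average space


rng = np.random.default_rng(0)
ref = rng.random((8, 4, 4))                  # stand-in activations, C=8, H=W=4
near = ref + 0.01 * rng.random((8, 4, 4))    # small perturbation of ref
far = rng.random((8, 4, 4))                  # unrelated activations

d_near = layer_distance(ref, near)
d_far = layer_distance(ref, far)
print(d_near < d_far)
```

Because of the channel normalization, rescaling all activations of one input by a constant leaves the distance essentially unchanged; only the direction of each channel vector matters.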

If you use LPIPS in your publication, please specify which version you are using. The current version is 0.1. You can set version='0.0' for the initial release.

Run bash ./scripts/download_dataset.sh to download and unzip the dataset. Dataset will appear in directory ./dataset. Dataset takes [6.6 GB] total.

- 2AFC train [5.3 GB]

- 2AFC val [1.1 GB]

- JND val [0.2 GB]

Alternatively, run bash ./scripts/get_dataset_valonly.sh to only download the validation set (no training set).

Script test_dataset_model.py evaluates a perceptual model on a subset of the dataset.

Dataset flags

- dataset_mode: 2afc or jnd, which type of perceptual judgment to evaluate
- datasets: list the datasets to evaluate
  - if dataset_mode was 2afc, choices are [train/traditional, train/cnn, val/traditional, val/cnn, val/superres, val/deblur, val/color, val/frameinterp]
  - if dataset_mode was jnd, choices are [val/traditional, val/cnn]

Perceptual similarity model flags

- model: perceptual similarity model to use
  - net-lin for our LPIPS learned similarity model (linear network on top of internal activations of pretrained network)
  - net for a classification network (uncalibrated with all layers averaged)
  - l2 for Euclidean distance
  - ssim for Structured Similarity Image Metric
- net: choices are [squeeze, alex, vgg] for the net-lin and net models (ignored for l2 and ssim models)
- colorspace: choices are [Lab, RGB], used for the l2 and ssim models (ignored for net-lin and net models)

Misc flags

- batch_size: evaluation batch size (will default to 1)
- --use_gpu: turn on this flag for GPU usage

An example usage is as follows: python ./test_dataset_model.py --dataset_mode 2afc --datasets val/traditional val/cnn --model net-lin --net alex --use_gpu --batch_size 50. This would evaluate our model on the "traditional" and "cnn" validation datasets.
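To compare several backbones with the same settings, the command above can be assembled programmatically. The following is a small sketch that only builds the argument list from the flags documented above. The helper name build_eval_command is hypothetical, and actually running the commands assumes you are in the repository root with the dataset downloaded.

```python
import subprocess
import sys


def build_eval_command(net, datasets, dataset_mode="2afc", model="net-lin",
                       batch_size=50, use_gpu=True):
    """Assemble a test_dataset_model.py invocation from the documented flags.

    Hypothetical helper for illustration; it does not validate flag values.
    """
    command = [sys.executable, "./test_dataset_model.py",
               "--dataset_mode", dataset_mode,
               "--datasets", *datasets,
               "--model", model,
               "--net", net,
               "--batch_size", str(batch_size)]
    if use_gpu:
        command.append("--use_gpu")
    return command


# One command per backbone, all evaluated on the same validation subsets.
commands = [build_eval_command(net, ["val/traditional", "val/cnn"])
            for net in ("squeeze", "alex", "vgg")]
for command in commands:
    print(" ".join(command[1:]))
    # Uncomment to run from the repository root:
    # subprocess.run(command, check=True)
```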

The dataset contains two types of perceptual judgements: Two Alternative Forced Choice (2AFC) and Just Noticeable Differences (JND).

(1) Two Alternative Forced Choice (2AFC) - Data is contained in the 2afc subdirectory. Evaluators were given a reference patch, along with two distorted patches, and were asked to select which of the distorted patches was "closer" to the reference patch.

Training sets contain 2 human judgments/triplet.

- train/traditional [56.6k triplets]
- train/cnn [38.1k triplets]
- train/mix [56.6k triplets]

Validation sets contain 5 judgments/triplet.

- val/traditional [4.7k triplets]
- val/cnn [4.7k triplets]
- val/superres [10.9k triplets]
- val/deblur [9.4k triplets]
- val/color [4.7k triplets]
- val/frameinterp [1.9k triplets]

Each 2AFC subdirectory contains the following folders:

- ref contains the original reference patches
- p0, p1 contain the two distorted patches
- judge contains what the human evaluators chose: 0 if all humans preferred p0, 1 if all humans preferred p1
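A metric can be scored on 2AFC data by how often it agrees with the human vote. The sketch below shows one way to compute such an agreement score, assuming judge holds the fraction of evaluators who preferred p1 (consistent with the 0 and 1 endpoints described above) and that d0 and d1 are the metric's distances from the reference to p0 and p1. The function name and the sample values are hypothetical; this is an illustration rather than the repository's evaluation code.

```python
def two_afc_score(d0, d1, judge):
    """Fraction of human votes that agree with the metric for one triplet.

    d0, d1: metric distances from the reference to p0 and p1.
    judge:  fraction of evaluators who preferred p1 (0.0 to 1.0, assumed).
    """
    if d0 < d1:        # metric says p0 is closer: agrees with humans who chose p0
        return 1.0 - judge
    if d1 < d0:        # metric says p1 is closer: agrees with humans who chose p1
        return judge
    return 0.5         # tie: count it as a coin flip


# Made-up triplets: (d0, d1, judge)
triplets = [(0.10, 0.30, 0.0),   # all humans chose p0, metric agrees
            (0.40, 0.20, 0.8),   # 4 of 5 humans chose p1, metric agrees with them
            (0.25, 0.50, 1.0)]   # all humans chose p1, metric disagrees
scores = [two_afc_score(d0, d1, judge) for d0, d1, judge in triplets]
print(sum(scores) / len(scores))
```

Under this scoring, even a perfect metric cannot reach 1.0 on triplets where the evaluators themselves disagree, since it can only side with one group.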

(2) Just Noticeable Differences (JND) - Data is contained in the jnd subdirectory. Evaluators were presented with two patches - a reference patch and a distorted patch - for a limited time, and were asked whether they thought the patches were the same (identical) or different.

Each set contains 3 human evaluations/example.

- val/traditional [4.8k patch pairs]
- val/cnn [4.8k patch pairs]

Each JND subdirectory contains the following folders:

- p0, p1 contain the two patches
- same contains fraction of human evaluators who thought the patches were the same (0 if all humans thought patches were different, 1 if all humans thought patches were the same)
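One natural way to score a metric on JND data is to rank the patch pairs by ascending distance and check whether pairs that humans judged "same" come first. The sketch below computes a plain step-wise average precision over that ranking, using the same fraction as a soft label. This is an assumption made for illustration: the function name and sample values are hypothetical, and it is not the AP routine this repository uses.

```python
def jnd_average_precision(distances, same):
    """Average precision of ranking patch pairs by ascending distance.

    distances: metric distance for each patch pair.
    same:      fraction of evaluators who judged each pair identical.
    """
    total_same = sum(same)
    if total_same == 0:
        raise ValueError("no pair was judged 'same' by any evaluator")
    order = sorted(range(len(distances)), key=lambda i: distances[i])
    true_pos = 0.0
    ap = 0.0
    for rank, idx in enumerate(order, start=1):
        true_pos += same[idx]
        precision = true_pos / rank
        ap += precision * (same[idx] / total_same)  # precision times recall gain
    return ap


# Made-up example: the two pairs judged "same" also have the smallest distances.
good_ranking = jnd_average_precision([0.1, 0.2, 0.9], [1.0, 1.0, 0.0])
# Same labels, but the metric ranks the "different" pair as most similar.
bad_ranking = jnd_average_precision([0.9, 0.8, 0.1], [1.0, 1.0, 0.0])
print(good_ranking, bad_ranking)
```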

See script train_test_metric.sh for an example of training and testing the metric. The script will train a model on the full training set for 10 epochs, and then test the learned metric on all of the validation sets. The numbers should roughly match the Alex - lin row in Table 5 in the paper. The code supports training a linear layer on top of an existing representation. Training will add a subdirectory in the checkpoints directory.

You can also train "scratch" and "tune" versions by running train_test_metric_scratch.sh and train_test_metric_tune.sh, respectively.

Docker set up by SuperShinyEyes.

If you find this repository useful for your research, please cite the following.

@inproceedings{zhang2018perceptual,

title={The Unreasonable Effectiveness of Deep Features as a Perceptual Metric},

author={Zhang, Richard and Isola, Phillip and Efros, Alexei A and Shechtman, Eli and Wang, Oliver},

booktitle={CVPR},

year={2018}

}

This repository borrows partially from the pytorch-CycleGAN-and-pix2pix repository. The average precision (AP) code is borrowed from the py-faster-rcnn repository. Backpropping through the metric was implemented by Angjoo Kanazawa.