Community-driven code for the book Natural Language Processing in Action.

A community-developed book about building socially responsible NLP pipelines that give back to the communities they interact with.

You'll need a bash shell on your machine. Git has installers for all three major OSes that include a bash shell.

Once you have Git installed, launch a bash terminal.

It will usually be found among your other applications with the name git-bash.

Step 1. Install Anaconda3

If you're installing Anaconda3 using a GUI, be sure to check the box that updates your PATH variable. Also, at the end, the Anaconda3 installer will ask if you want to install VSCode. Microsoft's VSCode is supposed to be an OK editor for Python so feel free to use it.

You can skip this step if you are happy using jupyter notebook or VSCode or the editor built into Anaconda3.

I like Sublime Text. It's a lot cleaner and more mature. Plus it has more plugins written by individual developers like you.

- Linux -- already installed

- MacOSX -- already installed

- Windows

If you're on Linux or Mac OS, you're good to go. Just figure out how to launch a terminal and make sure you can run ipython or jupyter notebook in it. This is where you'll play around with your own NLP pipeline.

On Windows you have a bit more work to do. Supposedly Windows 10 will let you install Ubuntu with a terminal and bash. But the terminal and shell that come with git are probably a safer bet. They're maintained by a broader open source community.

git clone https://github.com/totalgood/nlpia.git

You have two alternative package managers you can use to install nlpia:

5.1. conda

5.2. pip

In most cases, conda will be able to install python packages faster and more reliably than pip. Without conda, some packages, such as python-levenshtein, require you to compile a C library during installation. Windows doesn't have an installer that will "just work."

Use conda (part of the Anaconda package that you installed in Step 1 above) to create an environment called nlpiaenv:

cd nlpia # make sure you're in the nlpia directory that contains `setup.py`

conda env create -n nlpiaenv -f conda/environment.yml

conda install pip # to get the latest version of pip

source activate nlpiaenv

pip install -e .

Whenever you want to be able to import or run any nlpia modules, you'll need to activate this conda environment first:

$ source activate nlpiaenv

On the Windows CMD prompt (Anaconda Prompt in Applications) there is no source command, so:

C:\> activate nlpiaenv

Now you can finally make sure you can import nlpia with:

python -c "import nlpia; print(nlpia)"

Skip to Step 6 ("Have fun!") if you have successfully created and activated an environment containing the nlpia package and its dependencies.
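If the one-liner above fails, a slightly more informative check can help you tell a missing package from a forgotten environment activation. This is only a sketch: the helper name `is_installed` is made up for illustration and is not part of nlpia.

```python
import importlib.util
import sys


def is_installed(package_name):
    """Return True if `package_name` can be found by the current interpreter."""
    return importlib.util.find_spec(package_name) is not None


# Show which Python is running, since the wrong interpreter is a common cause of import errors
print("python executable:", sys.executable)

if is_installed("nlpia"):
    print("nlpia: found")
else:
    print("nlpia: NOT found (did you activate nlpiaenv?)")
```

If the executable path printed does not point inside your nlpiaenv environment, activate the environment and try again.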

You can try this first, if you're feeling lucky:

cd nlpia

pip install --upgrade pip

pip install -e .

Or if you don't think you'll be editing any of the source code for nlpia you can just:

pip install nlpia

Unix-like OSes such as Ubuntu and OSX usually come with C++ compilers (or make them easy to install), so you may be able to install the dependencies using pip instead of conda.

But if you're on Windows and you want to install packages like python-levenshtein that need compiled C++ libraries, you'll need a compiler.

Fortunately Microsoft still lets you download a compiler for free. Just make sure you follow the links to the Visual Studio "Build Tools" and not the entire Visual Studio package.

Once you have a compiler on your OS you can install nlpia using pip:

cd nlpia # make sure you're in the nlpia directory that contains `setup.py`

pip install --upgrade pip

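mkvirtualenv nlpiaenv # needs virtualenvwrapper; creates and activates the environment

source nlpiaenv/bin/activate # only needed if you created nlpiaenv in this directory with virtualenv or venv instead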

pip install -r requirements-test.txt

pip install -e .

pip install -r requirements-deep.txt

The chatbots (including TTS and STT audio drivers) that come with nlpia may not be compatible with Windows due to problems installing pycrypto.

If you are on a Linux or Darwin (Mac OSX) system, or want to try to help us debug the pycrypto problem, feel free to install the chatbot requirements:

# pip install -r requirements-chat.txt

# pip install -r requirements-voice.txt

Check out the code examples from the book in nlpia/nlpia/book/examples to get ideas:

cd nlpia/book/examples

ls

Help other NLP practitioners by contributing your code and knowledge. Here are some ideas for a few features others might find handy.

docker build -t nlpia .

docker run -p 8888:8888 nlpia

- Copy the token obtained from the run log

- Open a browser and use the link http://localhost:8888/?token=...

- If you want to keep your notebook file or share a folder with the running container, then use the command:

docker run -p 8888:8888 -v ~:/home/jovyan/work nlpia

- Open a new notebook and test your code, and make sure you save it inside the work directory.

Skeleton code and APIs that could be added to the `transcoders.py` module (https://github.com/totalgood/nlpia/blob/master/src/nlpia/transcoders.py).

def find_acronym(text):
    """Find parenthetical noun phrases in a sentence and return the acronym/abbreviation/term as a pair of strings.

    >>> find_acronym('Support Vector Machine (SVM) are a great tool.')
    ('SVM', 'Support Vector Machine')
    """
    return (abbreviation, noun_phrase)

def glossary_from_dict(dict, format='asciidoc'):
    """ Given a dict of word/acronym: definition compose a Glossary string in ASCIIDOC format """
    return text

def glossary_from_file(path, format='asciidoc'):
    """ Given an asciidoc file path compose a Glossary string in ASCIIDOC format """
    return text

def glossary_from_dir(path, format='asciidoc'):
    """ Given a path to a directory of asciidoc files compose a Glossary string in ASCIIDOC format """
    return text

Use a parser to extract only natural language sentences and headings/titles from a list of lines/sentences from an asciidoc book like "Natural Language Processing in Action". Use a sentence segmenter in nlpia.transcoders (https://github.com/totalgood/nlpia/blob/master/src/nlpia/transcoders.py) to split a book, like NLPIA, into a sequence of sentences.
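As a starting point for the skeleton functions above, here is one rough way `find_acronym` and `glossary_from_dict` might be filled in. It is a heuristic sketch, not the nlpia implementation: the regular expression only handles capitalized phrases followed directly by a parenthesized abbreviation, the glossary layout (a `[glossary]` section with `term:: definition` entries) is an assumed asciidoc style, and the parameter is named `terms` here rather than `dict` to avoid shadowing the built-in.

```python
import re

# A run of capitalized words followed by a parenthesized abbreviation,
# e.g. "Support Vector Machine (SVM)". This pattern is an illustrative guess.
ACRONYM_PATTERN = re.compile(r"((?:[A-Z][\w-]*\s+)+)\(([A-Z][A-Za-z0-9]+)\)")


def find_acronym(text):
    """Return (abbreviation, noun_phrase) for the first parenthetical acronym, or None."""
    match = ACRONYM_PATTERN.search(text)
    if match is None:
        return None
    phrase_words = match.group(1).split()
    abbreviation = match.group(2)
    # Keep at most one word per letter of the abbreviation, counted from the end,
    # so a leading "The" in "The Support Vector Machine (SVM)" is dropped.
    phrase_words = phrase_words[-len(abbreviation):]
    return abbreviation, " ".join(phrase_words)


def glossary_from_dict(terms, format="asciidoc"):
    """Compose a glossary string from a {term: definition} dict."""
    if format != "asciidoc":
        raise ValueError(f"unsupported format: {format!r}")
    lines = ["[glossary]", "== Glossary", ""]
    for term in sorted(terms, key=str.lower):
        lines.append(f"{term}:: {terms[term]}")
    return "\n".join(lines)


pair = find_acronym("Support Vector Machine (SVM) are a great tool.")
print(pair)
print(glossary_from_dict({pair[0]: pair[1]}))
```

Phrases with lowercase words inside them, such as "Term Frequency times Inverse Document Frequency (TF-IDF)", will not match this pattern, which is one reason a noun-phrase parser would be a better long-term approach.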

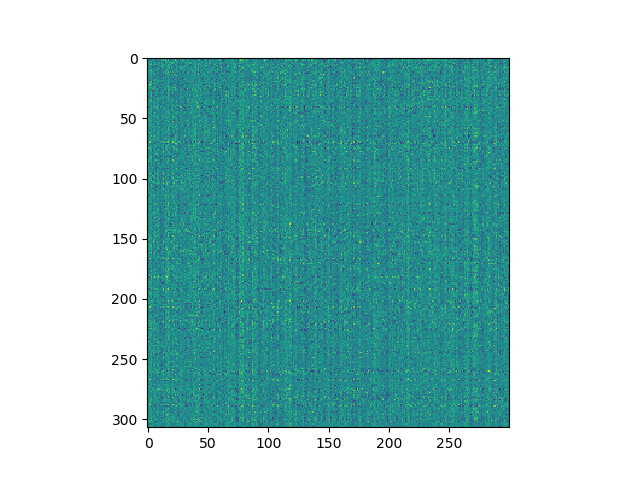

A sequence of word vectors or topic vectors forms a 2D array or matrix which can be displayed as an image. I used word2vec (nlpia.loaders.get_data('word2vec')) to embed the words in the last four paragraphs of Chapter 1 in NLPIA and it produced a spectrogram that was a lot noisier than I expected. Nonetheless stripes and blotches of meaning are clearly visible.

First, the imports:

>>> from nlpia.loaders import get_data

>>> from nltk.tokenize import casual_tokenize

>>> from matplotlib import pyplot as plt

>>> import numpy as np

>>> import seaborn

Next, get the raw text and tokenize it:

>>> lines = get_data('ch1_conclusion')

>>> txt = "\n".join(lines)

>>> tokens = casual_tokenize(txt)

>>> tokens[-10:]

['you',

'accomplish',

'your',

'goals',

'in',

'business',

'and',

'in',

'life',

'.']

Then you'll have to download a word vector model like word2vec:

>>> wv = get_data('w2v') # this could take several minutes

>>> wordvectors = np.array([wv[tok] for tok in tokens if tok in wv])

>>> wordvectors.shape

(307, 300)

Now you can display your 307x300 spectrogram or "wordogram":

>>> plt.imshow(wordvectors)

>>> plt.show()

Can you think of some image processing or deep learning algorithms you could run on images of natural language text?
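If you want to see how the rows of the wordogram come about without waiting for the word2vec download, the same filtering step can be tried on a toy model. Everything here is made up for illustration: `toy_wv` is a hypothetical stand-in for the real word vectors, filled with random numbers, and it uses 4 dimensions instead of 300.

```python
import random

random.seed(0)
EMBEDDING_DIM = 4  # the real model above has 300 dimensions

# Hypothetical toy vocabulary; "you" and "." are left out on purpose
toy_wv = {
    word: [random.gauss(0, 1) for _ in range(EMBEDDING_DIM)]
    for word in ["accomplish", "your", "goals", "in", "business", "and", "life"]
}

tokens = ["you", "accomplish", "your", "goals", "in", "business", "and", "in", "life", "."]

# Same filter as the listing above: tokens missing from the vocabulary are dropped
wordogram = [toy_wv[tok] for tok in tokens if tok in toy_wv]

# One row per in-vocabulary token, one column per embedding dimension
print(len(wordogram), "x", len(wordogram[0]))
```

Ten tokens go in but only eight rows come out, because out-of-vocabulary tokens are skipped. The two rows for "in" are identical, which is the kind of repetition that shows up as matching stripes in the image.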

Once you've mastered word vectors you can play around with Google's Universal Sentence Encoder and create spectrograms of entire books.

If you have pairs of statements or words in two languages, you can build a sequence-to-sequence translator. You could even design your own language like you did in grade school with Pig Latin or build yourself a L337 translator.
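A rule-based translator is also a cheap way to generate the sentence pairs a sequence-to-sequence model needs for training. The sketch below uses one common schoolyard variant of the Pig Latin rules; the function names `to_pig_latin` and `make_pairs` are invented for this example and are not part of nlpia.

```python
VOWELS = "aeiou"


def to_pig_latin(word):
    """Translate one lowercase word with a simple Pig Latin rule."""
    if not word.isalpha():
        return word  # leave punctuation and numbers untouched
    if word[0] in VOWELS:
        return word + "way"
    # Move the leading consonant cluster to the end and add "ay"
    for i, char in enumerate(word):
        if char in VOWELS:
            return word[i:] + word[:i] + "ay"
    return word + "ay"  # no vowels at all, e.g. "hmm"


def make_pairs(sentences):
    """Build (source, target) training pairs from whitespace-tokenized sentences."""
    pairs = []
    for sentence in sentences:
        words = sentence.lower().split()
        pairs.append((" ".join(words), " ".join(to_pig_latin(w) for w in words)))
    return pairs


for source, target in make_pairs(["Pig Latin is fun", "NLP pipelines give back"]):
    print(source, "->", target)
```

Swapping `to_pig_latin` for a character substitution table would give you L337 pairs in the same format.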

There are a lot more project ideas mentioned in the "Resources" section at the end of NLPIA. Here's an early draft of that resource list.