```sh
npm install react-native-video-processing --save
```

To run the tests, use `npm test` or `yarn test`.

Note: For React Native 0.4x, use version 1.0; for React Native 0.3x, use version 0.16.
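The compatibility note can be captured as a small lookup, for example in a setup script that checks which React Native version an app uses before installing. This is a hypothetical helper (`videoProcessingVersionFor` is not part of the package), a sketch that assumes a `0.x.y` version string and covers only the two ranges the note mentions:

```javascript
// Hypothetical helper (not part of react-native-video-processing) encoding the note:
// React Native 0.4x -> package version 1.0, React Native 0.3x -> package version 0.16.
function videoProcessingVersionFor(reactNativeVersion) {
  // Assumes a semver-like string such as "0.45.1"; only the minor part is inspected.
  const match = /^0\.(\d+)/.exec(reactNativeVersion);
  if (!match) return null;
  const minor = Number(match[1]);
  if (minor >= 40 && minor <= 49) return '1.0';
  if (minor >= 30 && minor <= 39) return '0.16';
  return null; // version range not covered by the note
}
```

Returning `null` for anything outside 0.3x and 0.4x keeps the helper from guessing about versions the note does not address.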

```sh
react-native link react-native-video-processing
```

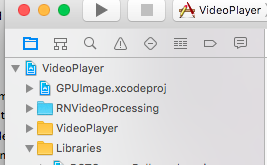

1. In Xcode, click `Add Files to "<your-project-name>"`.
2. Go to `node_modules` ➜ `react-native-video-processing/ios` and add the `RNVideoProcessing` directory.
3. Make sure `RNVideoProcessing` is "under" the "top-level".
4. Add `GPUImage.xcodeproj` from the `node_modules/react-native-video-processing/ios/GPUImage/framework` directory to your project and make sure it is "under" the "top-level".
5. In Xcode, select your project in the project navigator and add the following to its `Build Phases` ➜ `Link Binary With Libraries`:

   - CoreMedia
   - CoreVideo
   - OpenGLES
   - AVFoundation
   - QuartzCore
   - GPUImage
   - MobileCoreServices

6. Import `RNVideoProcessing.h` into your `project_name-bridging-header.h`.
7. Clean and run your project.
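Once the project builds, a common flow is to let the user pick a range with `<Trimmer>`, whose `onChange` reports `startTime` and `endTime`, and pass that range to the player's `trim()` method; both appear in the usage example. The sketch below is a hypothetical helper (`trimOptionsFromSelection` is not part of the library) that turns that range into `trim()` options. Its validation rules are assumptions, not checks the library documents.

```javascript
// Hypothetical helper (not part of react-native-video-processing): builds the
// options object for trim() from the range reported by <Trimmer>'s onChange.
// The validation below is an assumption, not a documented library requirement.
function trimOptionsFromSelection({ startTime, endTime }, extraOptions = {}) {
  if (!Number.isFinite(startTime) || !Number.isFinite(endTime)) {
    throw new TypeError('startTime and endTime must be finite numbers (seconds)');
  }
  if (startTime < 0 || endTime <= startTime) {
    throw new RangeError(`invalid trim range: ${startTime}..${endTime}`);
  }
  // extraOptions can carry keys such as quality or saveToCameraRoll (iOS only);
  // the selected startTime/endTime always override any values passed there.
  return { ...extraOptions, startTime, endTime };
}
```

Inside a component you might store the range in `onChange` (for example `this.selection = { startTime: e.startTime, endTime: e.endTime }`) and later call `this.videoPlayerRef.trim(trimOptionsFromSelection(this.selection, { saveToCameraRoll: true }))`. Checking the range in JavaScript surfaces an empty or reversed selection before any native call is made.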

## Usage

```javascript
import React, { Component } from 'react';
import { View } from 'react-native';
import { VideoPlayer, Trimmer } from 'react-native-video-processing';

class App extends Component {
  // Tracker position (in seconds) passed to <Trimmer>; update it with setState to move the tracker.
  state = { currentTime: 0 };

  trimVideo() {
    const options = {
      startTime: 0,
      endTime: 15,
      quality: VideoPlayer.Constants.quality.QUALITY_1280x720, // iOS only
      saveToCameraRoll: true, // default is false; iOS only
      saveWithCurrentDate: true, // default is false; iOS only
    };
    this.videoPlayerRef.trim(options)
      .then((newSource) => console.log(newSource))
      .catch(console.warn);
  }

  // iOS only
  compressVideo() {
    const options = {
      width: 720,
      height: 1280,
      bitrateMultiplier: 3,
      saveToCameraRoll: true, // default is false
      saveWithCurrentDate: true, // default is false
      minimumBitrate: 300000,
    };
    this.videoPlayerRef.compress(options)
      .then((newSource) => console.log(newSource))
      .catch(console.warn);
  }

  getPreviewImageForSecond(second) {
    const maximumSize = { width: 640, height: 1024 }; // default is { width: 1080, height: 1080 }; iOS only
    this.videoPlayerRef.getPreviewForSecond(second, maximumSize) // maximumSize is iOS only
      .then((base64String) => console.log('This is BASE64 of image', base64String))
      .catch(console.warn);
  }

  getVideoInfo() {
    this.videoPlayerRef.getVideoInfo()
      .then((info) => console.log(info))
      .catch(console.warn);
  }

  render() {
    return (
      <View style={{ flex: 1 }}>
        <VideoPlayer
          ref={(ref) => { this.videoPlayerRef = ref; }}
          startTime={30} // seconds
          endTime={120} // seconds
          play={true} // default is false
          replay={true} // play the video again when it ends
          rotate={true} // rotate the video if it was captured in landscape mode; iOS only
          source={'file:///sdcard/DCIM/....'}
          playerWidth={300} // iOS only
          playerHeight={500} // iOS only
          style={{ backgroundColor: 'black' }}
          onChange={({ nativeEvent }) => console.log({ nativeEvent })} // called every second with the current time
        />
        <Trimmer
          source={'file:///sdcard/DCIM/....'}
          height={100}
          width={300}
          onTrackerMove={(e) => console.log(e.currentTime)} // iOS only
          currentTime={this.state.currentTime} // sets the tracker position; iOS only
          themeColor={'white'} // iOS only
          trackerColor={'green'} // iOS only
          onChange={(e) => console.log(e.startTime, e.endTime)}
        />
      </View>
    );
  }
}

export default App;
```

## Contributing

- Please follow the ESLint style guide.

- Please commit with `npm run commit`.

## TODO

- Use FFmpeg instead of MP4Parser

- Add support for GLSL filters

- Android should be able to compress video

- More processing options

- Create a native trimmer component for Android