- v0.1 - Pearl beta-version is now released! Announcements: Twitter Post, LinkedIn Post

- Highlighted on Meta NeurIPS 2023 Official Website: Website

- Highlighted by AI at Meta official handle on Twitter and LinkedIn: Twitter Post, LinkedIn Post.

More details of the library at our official website.

The Pearl paper is available at Arxiv.

Our NeurIPS 2023 Presentation Slides is released here.

Pearl is a new production-ready Reinforcement Learning AI agent library open-sourced by the Applied Reinforcement Learning team at Meta. Furthering our efforts on open AI innovation, Pearl enables researchers and practitioners to develop Reinforcement Learning AI agents. These AI agents prioritize cumulative long-term feedback over immediate feedback and can adapt to environments with limited observability, sparse feedback, and high stochasticity. We hope that Pearl offers the community a means to build state-of-the-art Reinforcement Learning AI agents that can adapt to a wide range of complex production environments.

To install Pearl, you can simply clone this repository and run pip install -e . (you need pip version ≥ 21.3 and setuptools version ≥ 64):

git clone https://github.com/facebookresearch/Pearl.git

cd Pearl

pip install -e .To kick off a Pearl agent with a classic reinforcement learning environment, here's a quick example.

from pearl.pearl_agent import PearlAgent

from pearl.action_representation_modules.one_hot_action_representation_module import (

OneHotActionTensorRepresentationModule,

)

from pearl.policy_learners.sequential_decision_making.deep_q_learning import (

DeepQLearning,

)

from pearl.replay_buffers.sequential_decision_making.basic_replay_buffer import (

BasicReplayBuffer,

)

from pearl.utils.instantiations.environments.gym_environment import GymEnvironment

env = GymEnvironment("CartPole-v1")

num_actions = env.action_space.n

agent = PearlAgent(

policy_learner=DeepQLearning(

state_dim=env.observation_space.shape[0],

action_space=env.action_space,

hidden_dims=[64, 64],

training_rounds=20,

action_representation_module=OneHotActionTensorRepresentationModule(

max_number_actions=num_actions

),

),

replay_buffer=BasicReplayBuffer(10_000),

)

observation, action_space = env.reset()

agent.reset(observation, action_space)

done = False

while not done:

action = agent.act(exploit=False)

action_result = env.step(action)

agent.observe(action_result)

agent.learn()

done = action_result.doneUsers can replace the environment with any real-world problems.

We provide a few tutorial Jupyter notebooks (and are currently working on more!):

-

A single item recommender system. We derived a small contrived recommender system environment using the MIND dataset (Wu et al. 2020).

-

Contextual bandits. Demonstrates contextual bandit algorithms and their implementation using Pearl using a contextual bandit environment for providing data from UCI datasets, and tested the performance of neural implementations of SquareCB, LinUCB, and LinTS.

-

Frozen Lake. A simple example showing how to use a one-hot observation wrapper to learn the classic problem with DQN.

-

Deep Q-Learning (DQN) and Double DQN. Demonstrates how to run DQN and Double DQN on the Cart-Pole environment.

-

Actor-critic algorithms with safety constraints. Demonstrates how to run Actor Critic methods, including a version with safe constraints.

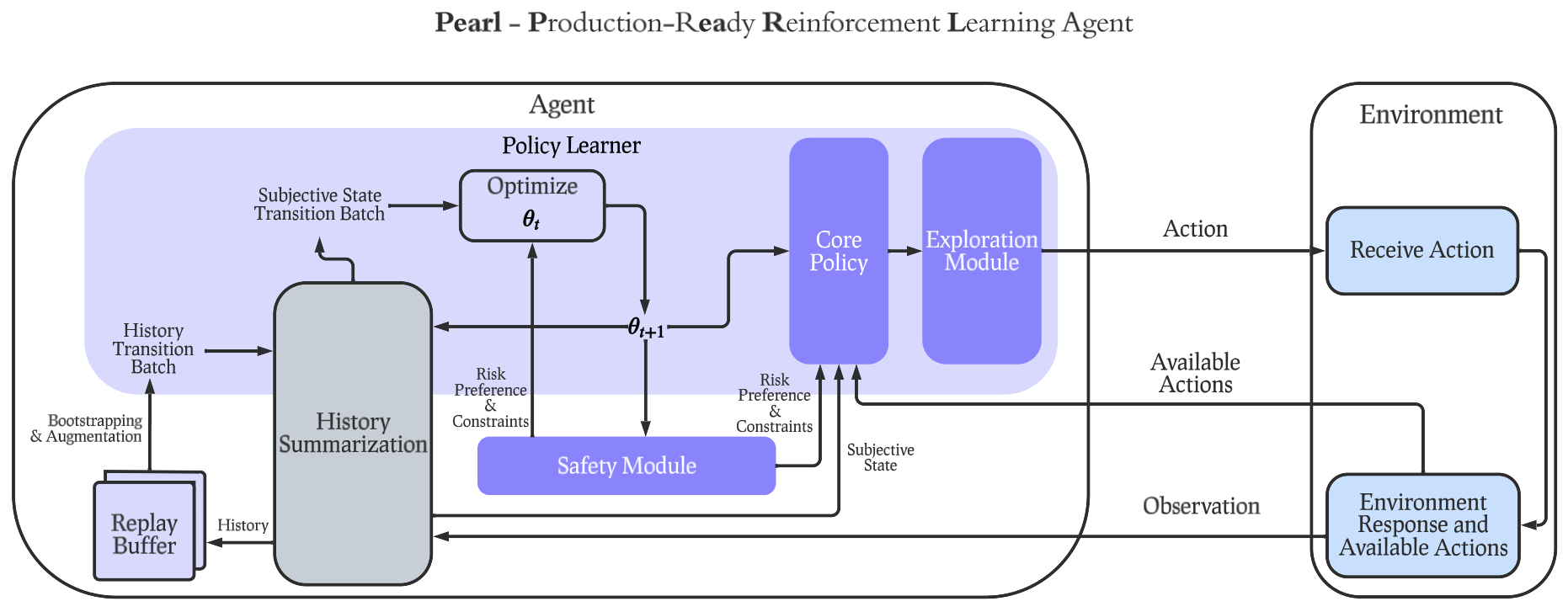

Pearl was built with a modular design so that industry practitioners or academic researchers can select any subset and flexibly combine features below to construct a Pearl agent customized for their specific use cases. Pearl offers a diverse set of unique features for production environments, including dynamic action spaces, offline learning, intelligent neural exploration, safe decision making, history summarization, and data augmentation.

Pearl was built with a modular design so that industry practitioners or academic researchers can select any subset and flexibly combine features below to construct a Pearl agent customized for their specific use cases. Pearl offers a diverse set of unique features for production environments, including dynamic action spaces, offline learning, intelligent neural exploration, safe decision making, history summarization, and data augmentation.

You can find many Pearl agent candidates with mix-and-match set of reinforcement learning features in utils/scripts/benchmark_config.py

Pearl is in progress supporting real-world applications, including recommender systems, auction bidding systems and creative selection. Each of them requires a subset of features offered by Pearl. To visualize the subset of features used by each of the applications above, see the table below.

| Pearl Features | Recommender Systems | Auction Bidding | Creative Selection |

|---|---|---|---|

| Policy Learning | ✅ | ✅ | ✅ |

| Intelligent Exploration | ✅ | ✅ | ✅ |

| Safety | ✅ | ||

| History Summarization | ✅ | ||

| Replay Buffer | ✅ | ✅ | ✅ |

| Contextual Bandit | ✅ | ||

| Offline RL | ✅ | ✅ | |

| Dynamic Action Space | ✅ | ✅ | |

| Large-scale Neural Network | ✅ |

| Pearl Features | Pearl | ReAgent (Superseded by Pearl) | RLLib | SB3 | Tianshou | Dopamine |

|---|---|---|---|---|---|---|

| Agent Modularity | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |

| Dynamic Action Space | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |

| Offline RL | ✅ | ✅ | ✅ | ✅ | ✅ | ❌ |

| Intelligent Exploration | ✅ | ❌ | ❌ | ❌ | ⚪ (limited support) | ❌ |

| Contextual Bandit | ✅ | ✅ | ⚪ (only linear support) | ❌ | ❌ | ❌ |

| Safe Decision Making | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |

| History Summarization | ✅ | ❌ | ✅ | ❌ | ⚪ (requires modifying environment state) | ❌ |

| Data Augmented Replay Buffer | ✅ | ❌ | ✅ | ✅ | ✅ | ❌ |

@article{pearl2023paper,

title = {Pearl: A Production-ready Reinforcement Learning Agent},

author = {Zheqing Zhu, Rodrigo de Salvo Braz, Jalaj Bhandari, Daniel Jiang, Yi Wan, Yonathan Efroni, Ruiyang Xu, Liyuan Wang, Hongbo Guo, Alex Nikulkov, Dmytro Korenkevych, Urun Dogan, Frank Cheng, Zheng Wu, Wanqiao Xu},

eprint = {arXiv preprint arXiv:2312.03814},

year = {2023}

}

Pearl is MIT licensed, as found in the LICENSE file.