Python building blocks to explore large language models on any computer with 512MB of RAM

This package is designed to be as simple as possible for learners and educators exploring how large language models intersect with modern software development. The interfaces to this package are all simple functions using standard types. The complexity of large language models is hidden from view while providing free local inference using lightweight, open models. All included models are free for educational use, no API keys are required, and all inference is performed locally by default.

This package can be installed using the following command:

pip install languagemodels

Once installed, you should be able to interact with the package in Python as follows:

>>> import languagemodels as lm

>>> lm.do("What color is the sky?")

'The color of the sky is blue.'

This will require downloading a significant amount of data (~250MB) on the first run. Models will be cached for later use and subsequent calls should be quick.

Here are some usage examples as Python REPL sessions. These should work in the REPL, notebooks, or in traditional scripts and applications.

>>> import languagemodels as lm

>>> lm.complete("She hid in her room until")

'she was sure she was safe'

>>> import languagemodels as lm

>>> lm.do("Translate to English: Hola, mundo!")

'Hello, world!'

>>> lm.do("What is the capital of France?")

'Paris.'

>>> lm.chat('''

... System: Respond as a helpful assistant.

...

... User: What time is it?

...

... Assistant:

... ''')

"I'm sorry, but as an AI language model, I don't have access to real-time information. Please provide me with the specific time you are asking for so that I can assist you better."

Helper functions are provided to retrieve text from external sources that can be used to augment prompt context.

>>> import languagemodels as lm

>>> lm.get_wiki('Chemistry')

'Chemistry is the scientific study...

>>> lm.get_weather(41.8, -87.6)

'Partly cloudy with a chance of rain...

>>> lm.get_date()

'Friday, May 12, 2023 at 09:27AM'

Here's an example showing how this can be used (compare to previous chat example):

>>> lm.chat(f'''

... System: Respond as a helpful assistant. It is {lm.get_date()}

...

... User: What time is it?

...

... Assistant:

... ''')

'It is currently Wednesday, June 07, 2023 at 12:53PM.'

Semantic search is provided to retrieve documents that may provide helpful context from a document store.

>>> import languagemodels as lm

>>> lm.store_doc(lm.get_wiki("Python"), "Python")

>>> lm.store_doc(lm.get_wiki("C language"), "C")

>>> lm.store_doc(lm.get_wiki("Javascript"), "Javascript")

>>> lm.get_doc_context("What does it mean for batteries to be included in a language?")

'From Python document: It is often described as a "batteries included" language due to its comprehensive standard library.Guido van Rossum began working on Python in the late 1980s as a successor to the ABC programming language and first released it in 1991 as Python 0.9.

From C document: It was designed to be compiled to provide low-level access to memory and language constructs that map efficiently to machine instructions, all with minimal runtime support.'

A model tuned on Python code is included. It can be used to complete code snippets.

>>> import languagemodels as lm

>>> lm.code("""

... a = 2

... b = 5

...

... # Swap a and b

... """)

'a, b = b, a'

This package currently outperforms Hugging Face transformers for CPU inference thanks to int8 quantization and the CTranslate2 backend. The following table compares CPU inference performance on identical models using the best available quantization on a 20 question test set.

| Backend | Inference Time | Memory Used |
|---|---|---|
| Hugging Face transformers | 22s | 1.77GB |
| This package | 11s | 0.34GB |

Note that quantization does technically harm output quality slightly, but it should be negligible at this level.
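To make that trade-off concrete, here is a minimal sketch of symmetric int8 quantization in plain Python. It is an illustration of the general idea only, not the CTranslate2 implementation, and the function names `quantize_int8` and `dequantize` are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the integer values."""
    return [q * scale for q in quantized]


# Made-up example weights, not taken from any real model
weights = [0.12, -0.5, 0.33, 0.9, -0.07]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Rounding moves each weight by at most about half a quantization step
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized)
print(max_error, scale / 2)
```

Each quantized weight fits in one byte instead of the four bytes used by a 32-bit float, which is where most of the memory savings come from. The price is the small rounding error printed at the end.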

The models used by this package are 1000x smaller than the largest models in use today. They are useful as learning tools, but if you are expecting ChatGPT or similar performance, you will be very disappointed.

The base model should work on any system with 512MB of memory, but this memory limit can be increased. Setting this value higher will require more memory and generate results more slowly, but the results should be superior. Here's an example:

>>> import languagemodels as lm

>>> lm.do("If I have 7 apples then eat 5, how many apples do I have?")

'You have 8 apples.'

>>> lm.set_max_ram('4gb')

4.0

>>> lm.do("If I have 7 apples then eat 5, how many apples do I have?")

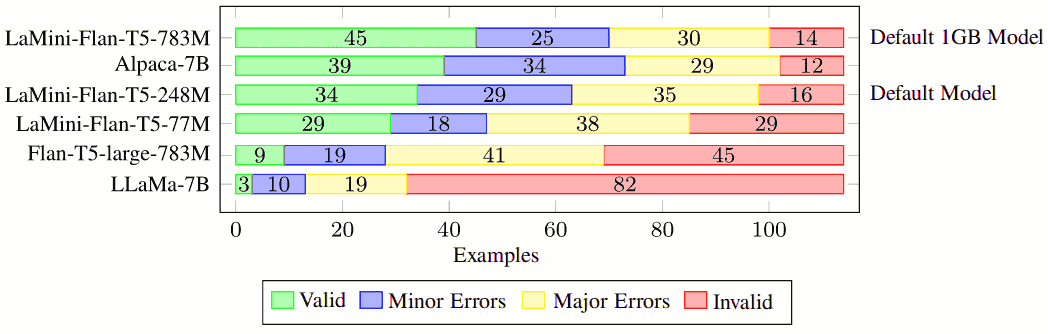

'I have 2 apples left.'This pacakge currently uses LaMini-Flan-T5-base as its default model. This is a fine-tuning of the T5 base model on top of the FLAN fine-tuning provided by Google. The model is tuned to respond to instructions in a human-like manner. The following human evaluations were reported in the paper associated with this model family:

This package itself is licensed for commercial use, but the models used may not be compatible with commercial use. To use this package commercially, you may want to filter models by their license type using the require_model_license function.

>>> import languagemodels as lm

>>> lm.do("What is your favorite animal.")

"As an AI language model, I don't have preferences or emotions."

>>> lm.require_model_license("apache.*|mit")

>>> lm.do("What is your favorite animal.")

'Lion.'

The commercially-licensed models may not perform as well as the default models. It is recommended to confirm that the models used do meet the licensing requirements for your software.
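The argument to require_model_license in the session above reads as a regular expression. As a rough sketch of how such a pattern could select models, the snippet below filters a small catalog with Python's `re.match`. This assumes the pattern is matched from the start of a license string, which may differ from what the package does internally. The catalog entries and the `filter_by_license` helper are hypothetical placeholders.

```python
import re

# Hypothetical catalog; the names and license strings are placeholders
models = [
    {"name": "example-model-a", "license": "apache-2.0"},
    {"name": "example-model-b", "license": "cc-by-nc-4.0"},
    {"name": "example-model-c", "license": "mit"},
]


def filter_by_license(models, pattern):
    """Keep models whose license string matches the pattern from its start."""
    return [m for m in models if re.match(pattern, m["license"])]


allowed = filter_by_license(models, "apache.*|mit")
print([m["name"] for m in allowed])
```

Because `re.match` only anchors at the start of the string, a pattern such as `mit` would also accept any license string that merely begins with those letters. A stricter check could use `re.fullmatch` instead.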

This package can be used to do the heavy lifting for a number of learning projects:

- CLI Chatbot (see examples/chat.py)

- Streamlit chatbot (see examples/streamlitchat.py)

- Chatbot with information retrieval

- Chatbot with access to real-time information

- Tool use

- Text classification

- Extractive question answering

- Semantic search over documents

- Document question answering
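As a starting point for the "chatbot with information retrieval" idea in the list above, here is a minimal sketch in plain Python. It ranks documents by word overlap as a stand-in for real semantic search, then places the best match into a prompt using the System/User/Assistant layout from the chat examples. All helper names (`word_counts`, `cosine_similarity`, `best_document`, `build_prompt`) and the sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def word_counts(text):
    """Lowercase the text and count its words."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def best_document(question, docs):
    """Return the stored document most similar to the question."""
    q = word_counts(question)
    return max(docs, key=lambda d: cosine_similarity(q, word_counts(d)))


def build_prompt(question, context):
    """Place retrieved context in the system line of a chat prompt."""
    return (
        f"System: Respond as a helpful assistant. Context: {context}\n\n"
        f"User: {question}\n\n"
        "Assistant:"
    )


# Short sample documents written for this sketch
docs = [
    "Python is often described as a batteries included language.",
    "C provides low-level access to memory.",
]
question = "Which language is described as batteries included?"
prompt = build_prompt(question, best_document(question, docs))
print(prompt)
```

The resulting string could be passed to lm.chat. Word overlap only finds documents that share exact words with the question, so a real project would likely swap `best_document` for the package's own store_doc and get_doc_context functions.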

Several example programs and notebooks are included in the examples directory.