- Airflow Breeze CI environment

- Prerequisites

- Resources required

- Installation

- Regular development tasks

- Entering Breeze shell

- Building the documentation

- Generating short form names for Providers

- Running static checks

- Starting Airflow

- Launching multiple terminals in the same environment

- Compiling www assets

- Breeze cleanup

- Running arbitrary commands in container

- Running Breeze with Metrics

- Stopping the environment

- Troubleshooting

- Advanced commands

- Running tests

- Running Kubernetes tests

- Setting up K8S environment

- Creating K8S cluster

- Deleting K8S cluster

- Building Airflow K8s images

- Uploading Airflow K8s images

- Configuring K8S cluster

- Deploying Airflow to the Cluster

- Checking status of the K8S cluster

- Running k8s tests

- Running k8s complete tests

- Entering k8s shell

- Running k9s tool

- Dumping logs from all k8s clusters

- CI Image tasks

- PROD Image tasks

- Breeze setup

- CI tasks

- Release management tasks

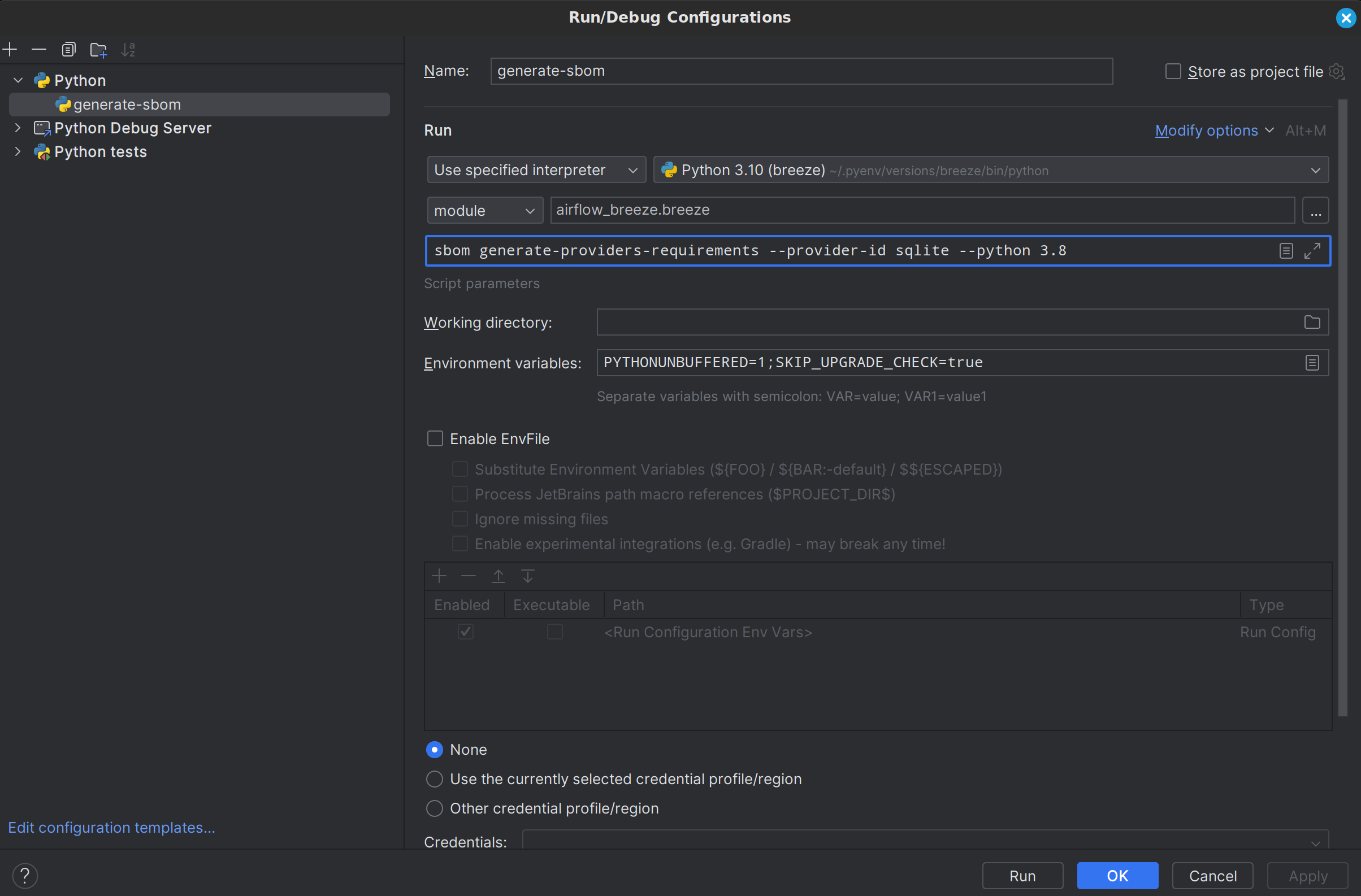

- SBOM generation tasks

- Details of Breeze usage

- Internal details of Breeze

- Recording command output

- Uninstalling Breeze

- Debugging/developing Breeze

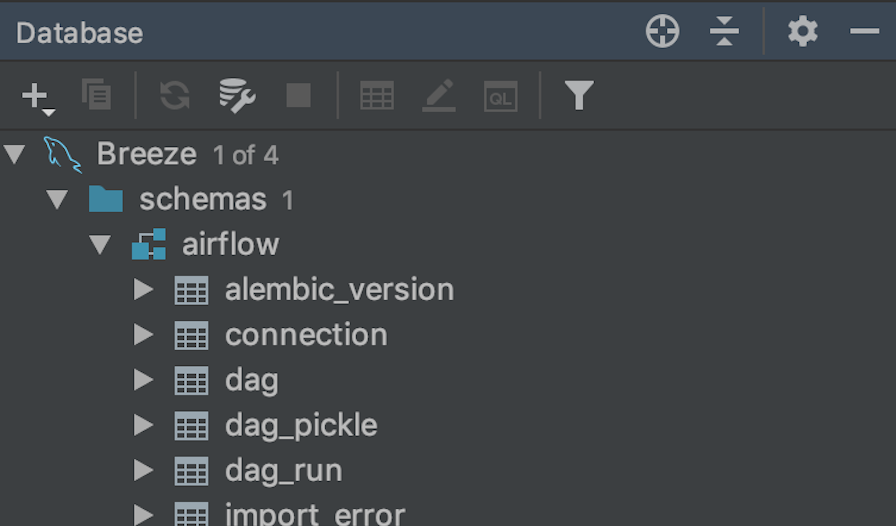

Airflow Breeze is an easy-to-use development and test environment using Docker Compose. The environment is available for local use and is also used in Airflow's CI tests.

We call it Airflow Breeze because "It's a Breeze to contribute to Airflow".

The advantages and disadvantages of using the Breeze environment vs. other ways of testing Airflow are described in CONTRIBUTING.rst.

- Version: Install the latest stable Docker Desktop

and make sure it is in your PATH.

Breezedetects if you are using version that is too old and warns you to upgrade. - Permissions: Configure to run the

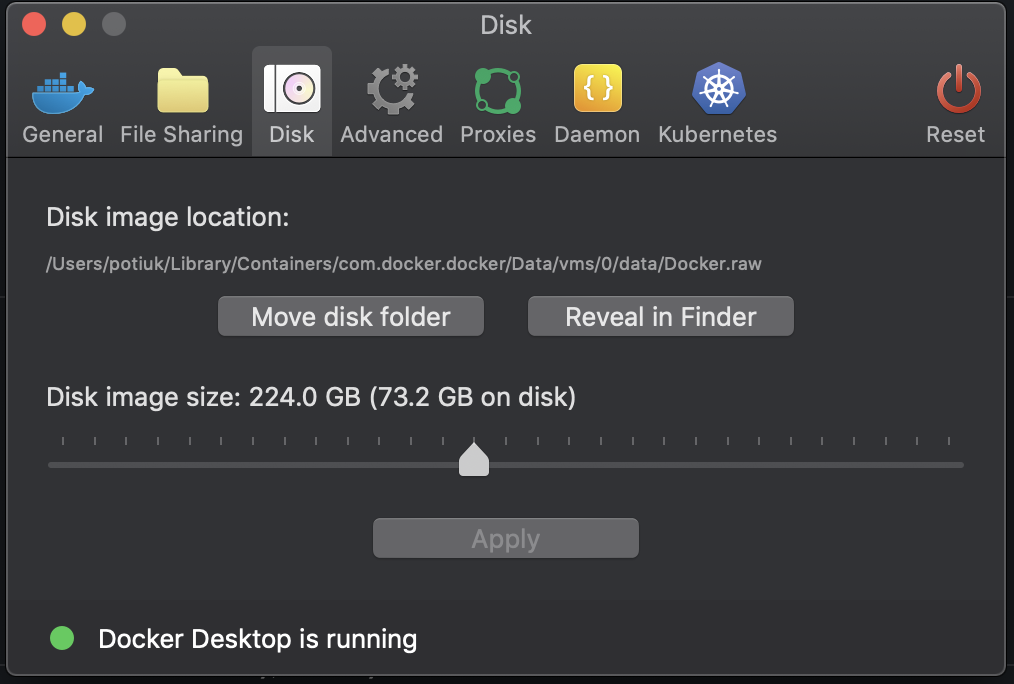

dockercommands directly and not only via root user. Your user should be in thedockergroup. See Docker installation guide for details. - Disk space: On macOS, increase your available disk space before starting to work with the environment. At least 20 GB of free disk space is recommended. You can also get by with a smaller space but make sure to clean up the Docker disk space periodically. See also Docker for Mac - Space for details on increasing disk space available for Docker on Mac.

- Docker problems: Sometimes it is not obvious that space is an issue when you run into

a problem with Docker. If you see a weird behaviour, try

breeze cleanupcommand. Also see pruning instructions from Docker. - Docker context: Recent versions of Docker Desktop are by default configured to use

desktop-linuxdocker context that uses docker socket created in user home directory. Older versions (and plain docker) uses/var/run/docker.socksocket anddefaultcontext. Breeze will attempt to detect if you havedesktop-linuxcontext configured and will use it if it is available, but you can force the context by adding--builderflag to the commands that build image or run the container and forward the socket to inside the image.

Here is an example configuration with more than 200GB disk space for Docker:

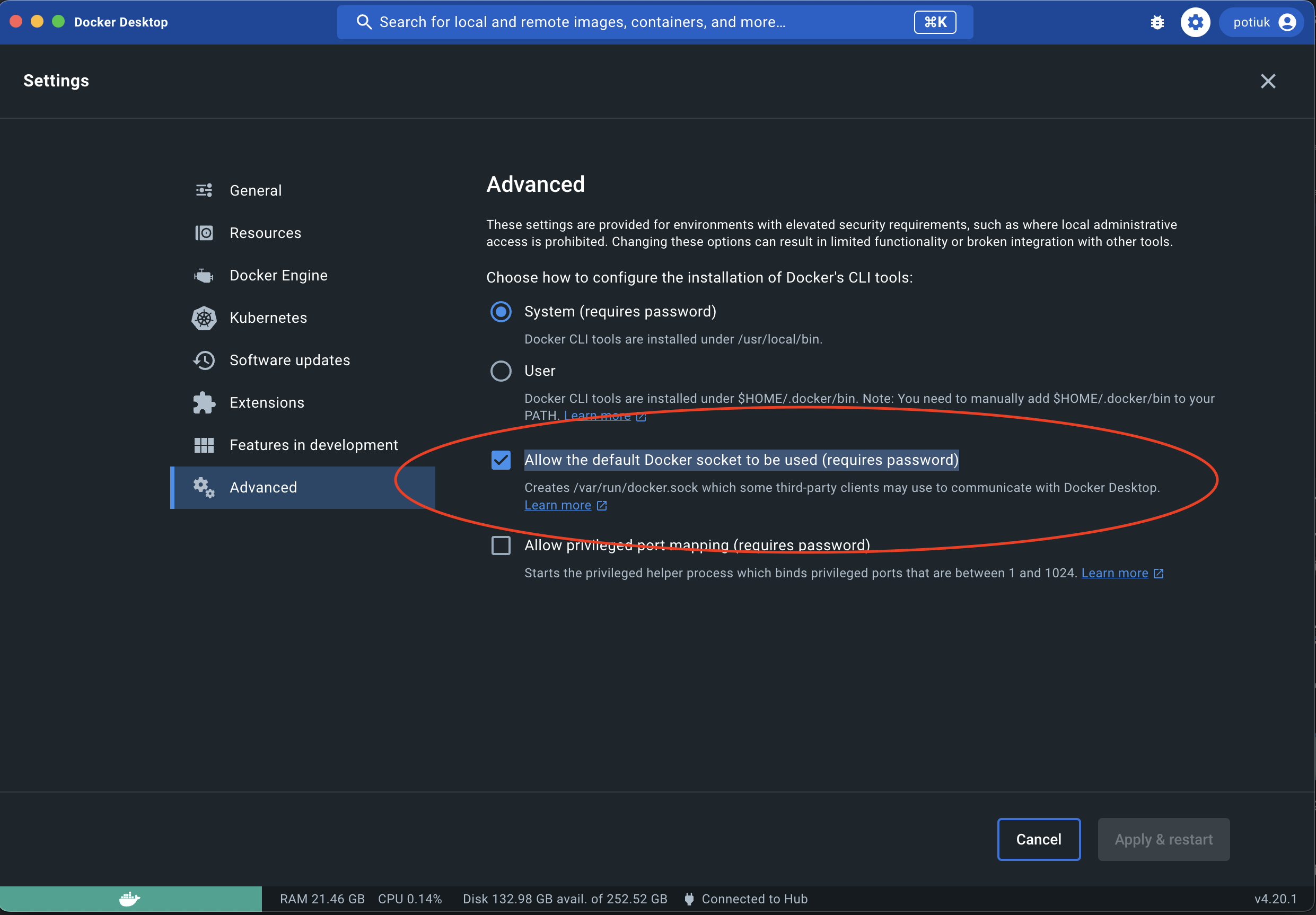

- Docker is not running - even if it is running with Docker Desktop. This is an issue specific to Docker Desktop 4.13.0 (released in late October 2022). Please upgrade Docker Desktop to 4.13.1 or later to resolve the issue. For technical details, see also docker/for-mac#6529.

Docker errors that may come up while running Breeze

- If Docker is not running in a Python virtual environment

- Solution

1. Create the docker group if it does not exist: `sudo groupadd docker`

2. Add your user to the docker group: `sudo usermod -aG docker $USER`

3. Log in to the new docker group: `newgrp docker`

4. Check if Docker can be run without root: `docker run hello-world`

5. In some cases you might need to make sure that "Allow the default Docker socket to be used" in the "Advanced" tab of the "Docker Desktop" settings is checked.
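Before going through the steps above, it can help to check whether your user is already in the `docker` group. The following is a minimal sketch, assuming a Linux host where `id -nG` prints the groups of the current user; the helper name `in_group` is made up for this example and is not part of Breeze or Docker.

```shell
# Hypothetical helper: succeed when the space-separated group list in $1
# contains the group named in $2 as a whole word.
in_group() {
    case " $1 " in
        *" $2 "*) return 0 ;;
        *) return 1 ;;
    esac
}

# id -nG prints the group names of the current user, separated by spaces.
if in_group "$(id -nG)" docker; then
    echo "docker group: OK"
else
    echo "docker group: missing, follow the steps above"
fi
```

Note that a newly added group is usually only visible after you log in again or run `newgrp docker`, so the check may still report the group as missing right after `usermod`.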

Note: If you use Colima, please follow instructions at: Contributors Quick Start Guide

- Version: Install the latest stable Docker Compose and add it to the PATH. `Breeze` detects if you are using a version that is too old and warns you to upgrade.

- Permissions: Configure permissions so that your user can run the `docker-compose` command.

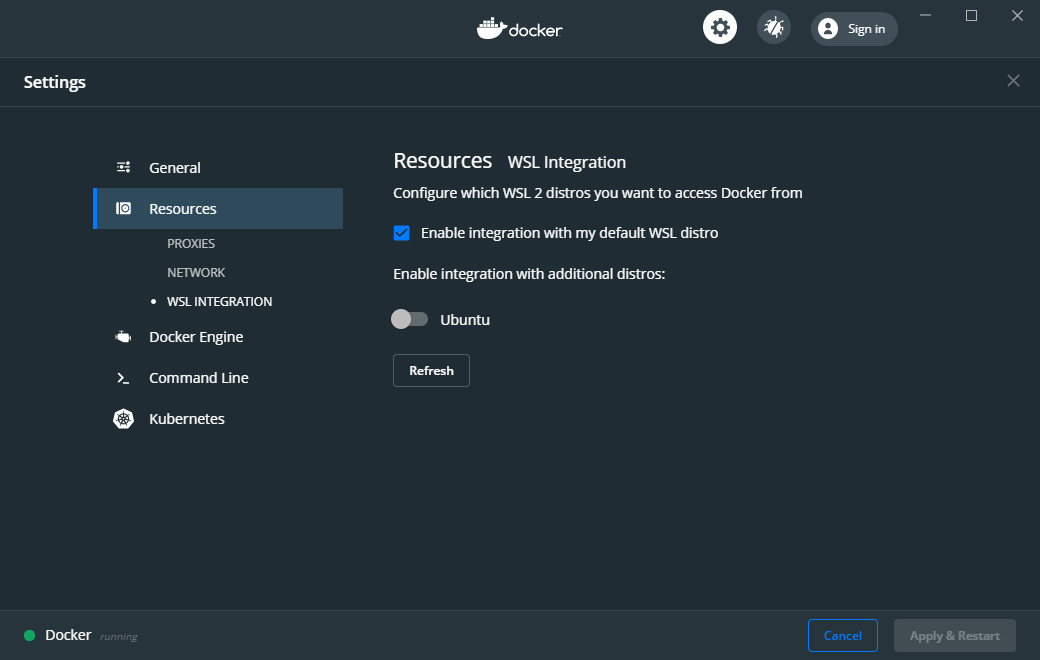

- WSL 2 installation: Install WSL 2 and a Linux distro (e.g. Ubuntu). See the WSL 2 Installation Guide for details.

- Docker Desktop installation: Install Docker Desktop for Windows. For Windows Home follow the Docker Windows Home Installation Guide. For Windows Pro, Enterprise, or Education follow the Docker Windows Installation Guide.

- Docker setting: WSL integration needs to be enabled.

- WSL 2 Filesystem Performance: Accessing the host Windows filesystem incurs a performance penalty, so it is recommended to do development on the Linux filesystem. For example, run `cd ~`, create a development folder in your Linux distro home, and git pull the Airflow repo there.

- WSL 2 Docker mount errors: Another reason to use the Linux filesystem is that sometimes, depending on the length of your path, you might get strange errors when you try to start Breeze, such as `caused: mount through procfd: not a directory: unknown:`. Therefore checking out Airflow in a Windows-mounted filesystem is strongly discouraged.

- WSL 2 Docker volume remount errors: If you're experiencing errors such as `ERROR: for docker-compose_airflow_run Cannot create container for service airflow: not a directory` when starting Breeze after the first time, or an error like `docker: Error response from daemon: not a directory. See 'docker run --help'.` when running the pre-commit tests, you may need to consider installing Docker directly in WSL 2 instead of using Docker Desktop for Windows.

- WSL 2 Memory Usage: WSL 2 can consume a lot of memory under the process name "Vmmem". To reclaim the memory after development you can:

  - On the Linux distro, clear cached memory: `sudo sysctl -w vm.drop_caches=3`

  - If no longer using Docker, quit Docker Desktop (right-click the system tray icon and select "Quit Docker Desktop").

  - If no longer using WSL, shut it down on the Windows host with the following command: `wsl --shutdown`

- Developing in WSL 2: You can use all the standard Linux command line utilities to develop on WSL 2. Furthermore, VS Code supports developing in Windows but remotely executing in WSL. If VS Code is installed on the Windows host system, then in the WSL Linux distro you can run `code .` in the root directory of your Airflow repo to launch VS Code.

We are using the pipx tool to install and manage Breeze. The pipx tool is created by the creators of pip from the Python Packaging Authority.

Note that pipx >= 1.2.1 is needed in order to deal with a breaking packaging release in September 2023 that broke earlier versions of pipx.

Install pipx:

    pip install --user "pipx>=1.2.1"

Breeze is not globally accessible until your PATH is updated. Add `<USER FOLDER>\.local\bin` to your environment variables. This can be done automatically by the following command (follow the instructions printed).

    pipx ensurepath

On Mac:

    python -m pipx ensurepath

Minimum 4GB RAM for Docker Engine is required to run the full Breeze environment.

On macOS, 2GB of RAM are available for your Docker containers by default, but more memory is recommended (4GB should be comfortable). For details see Docker for Mac - Advanced tab.

On Windows WSL 2 expect the Linux Distro and Docker containers to use 7 - 8 GB of RAM.

Minimum 40GB free disk space is required for your Docker Containers.

On macOS this might deteriorate over time, so you might need to increase it or run `breeze cleanup` periodically. For details see Docker for Mac - Advanced tab.

On WSL2 you might want to increase your Virtual Hard Disk by following: Expanding the size of your WSL 2 Virtual Hard Disk

There is a command, `breeze ci resource-check`, that you can run to check available resources. See below for details.
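For a quick look at free disk space before running Breeze, a rough manual check might look like the sketch below. It is only an illustration and not what `breeze ci resource-check` does: the helper name `has_enough_space` is made up, the 40 GB threshold is taken from the requirement above, and the check looks at the filesystem of the current directory, which matches Docker's storage only on hosts where Docker keeps its data on that filesystem (not Docker Desktop's VM disk).

```shell
# Hypothetical helper: succeed when the available space ($1, in KiB)
# is at least the required size ($2, in GiB).
has_enough_space() {
    avail_kb=$1
    required_gb=$2
    [ "$avail_kb" -ge $((required_gb * 1024 * 1024)) ]
}

# df -Pk prints POSIX-format output in 1024-byte blocks;
# the 4th column of the 2nd line is the available space.
avail_kb=$(df -Pk . | awk 'NR == 2 { print $4 }')

if has_enough_space "$avail_kb" 40; then
    echo "Disk space looks sufficient"
else
    echo "Less than 40GB free, consider running breeze cleanup"
fi
```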

You may need to clean up your Docker environment occasionally. The images are quite big (1.5GB for both images needed for static code analysis and CI tests) and, if you often rebuild/update them, you may end up with some unused image data.

To clean up the Docker environment:

- Stop Breeze with `breeze down` (if Breeze is already running).

- Run the `breeze cleanup` command.

- Run `docker images --all` and `docker ps --all` to verify that your Docker is clean. Both commands should return an empty list of images and containers respectively.

If you run into disk space errors, consider pruning your Docker images with the `docker system prune --all` command. You may need to restart the Docker Engine before running this command.

In case of disk space errors on macOS, increase the disk space available for Docker. See Prerequisites for details.

Set your working directory to the root of this cloned repository.

Run this command to install Breeze (make sure to use the `-e` flag):

    pipx install -e ./dev/breeze

Warning

If you see the warning below, it means that you have hit a known issue with packaging version 23.2:

The workaround is to downgrade packaging to 23.1 and re-run the `pipx install` command.

Note

Note for Windows users

The `./dev/breeze` in the command above is a path to the sub-folder where the Breeze source packages are. If you are on Windows, you should use the Windows way to point to the `dev/breeze` sub-folder of Airflow, either as an absolute or a relative path. For example:

    pipx install -e dev\breeze

Once this is complete, you should have the `breeze` binary on your PATH, available to run with the `breeze` command.

Those are all available commands for Breeze and details about the commands are described below:

Breeze installed this way is linked to your checked-out sources of Airflow, so Breeze will automatically use the latest version of the sources from `./dev/breeze`. Sometimes, when dependencies are updated, breeze commands will offer to run a self-upgrade.

You can also run the self-upgrade at any time:

    breeze setup self-upgrade

If you have several checked-out Airflow sources, Breeze will warn you if you are using it from a different source tree and will offer to re-install from those sources, to make sure that you are using the right version.

You can skip Breeze's upgrade check by setting SKIP_BREEZE_UPGRADE_CHECK variable to non empty value.

By default Breeze works on the version of Airflow that you run it in - in case you are outside of the sources of Airflow and you installed Breeze from a directory - Breeze will be run on Airflow sources from where it was installed.

You can run the `breeze setup version` command to see where Breeze was installed from and which sources Breeze currently works on.

Warning

Upgrading from earlier Python version

If you used Breeze with Python 3.7, it will complain when you run it that it needs Python 3.8. In this case you should force-reinstall Breeze with pipx:

    pipx install --force -e ./dev/breeze

Note

Note for Windows users

The `./dev/breeze` in the command above is a path to the sub-folder where the Breeze source packages are. If you are on Windows, you should use the Windows way to point to the `dev/breeze` sub-folder of Airflow, either as an absolute or a relative path. For example:

    pipx install --force -e dev\breeze

The first time you run Breeze, it pulls and builds a local version of the Docker images. It pulls the latest Airflow CI images from the GitHub Container Registry and uses them to build your local Docker images. Note that the first run (per Python version) might take up to 10 minutes to start on a fast connection. Subsequent runs should be much faster.

Once you enter the environment, you are dropped into bash shell of the Airflow container and you can run tests immediately.

To use the full potential of Breeze you should set up autocomplete. The `breeze` command comes with a built-in bash/zsh/fish autocomplete setup command. After installing, when you start typing the command, you can use <TAB> to show all the available switches and get auto-completion on typical values of parameters that you can use.

You can set up autocomplete automatically by running:

    breeze setup autocomplete

Breeze on POSIX-compliant systems (Linux, macOS) can be automatically installed by running the `scripts/tools/setup_breeze` bash script. This includes checking and installing pipx, setting up Breeze with it, and setting up autocomplete.

When you enter the Breeze environment, an environment file is automatically sourced from `files/airflow-breeze-config/variables.env`.

You can also add `files/airflow-breeze-config/init.sh`, and the script will always be sourced when you enter Breeze. For example, you can add `pip install` commands if you want to install custom dependencies, but there are no limits on the customizations you can add.

You can override the name of the init script by setting the `INIT_SCRIPT_FILE` environment variable before running the Breeze environment.

You can also customize your environment by setting the `BREEZE_INIT_COMMAND` environment variable. This variable will be evaluated when entering the environment.
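As an illustration of the customization files described above, the following sketch creates both files from the root of the Airflow checkout. The file paths come from the text; the variable name `MY_EXAMPLE_SETTING`, its value, and the commented-out package placeholder are made up for this example.

```shell
# Run from the root of the Airflow checkout.
mkdir -p files/airflow-breeze-config

# Environment file sourced when entering Breeze.
# MY_EXAMPLE_SETTING is a hypothetical variable used only for illustration.
cat > files/airflow-breeze-config/variables.env <<'EOF'
export MY_EXAMPLE_SETTING="some-value"
EOF

# Init script sourced on every entry into Breeze.
# The pip line is left commented out; replace the placeholder with a real package.
cat > files/airflow-breeze-config/init.sh <<'EOF'
echo "Custom Breeze init script loaded"
# pip install <your-package>
EOF
```

The quoted `'EOF'` delimiter keeps the shell on the host from expanding anything inside the files, so the content is written exactly as shown.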

The `files` folder from your local sources is automatically mounted to the container under the `/files` path, and you can put there any files you want to make available to the Breeze container.

You can also copy any .whl or .sdist packages to `dist`, and when you pass the `--use-packages-from-dist` flag as a wheel or sdist line parameter, Breeze will automatically install the packages found there when you enter Breeze.

You can also add your local tmux configuration in `files/airflow-breeze-config/.tmux.conf`, and these configurations will be available in your tmux environment.

There is a symlink between `files/airflow-breeze-config/.tmux.conf` and `~/.tmux.conf` in the container, so you can change it in either place and run `tmux source ~/.tmux.conf` inside the container to enable the modified tmux configuration.

The regular Breeze development tasks are available as top-level commands. Those tasks are the ones most often used during development, which is why they are available without any sub-command. More advanced commands are grouped into sub-commands.

This is the most often used feature of Breeze. It simply lets you enter the shell inside the Breeze development environment (inside the Breeze container).

You can use additional breeze flags to choose your environment. You can specify the Python version to use and the backend (the metadata database). Thanks to that, with Breeze you can recreate the same environments as we have in the matrix builds in the CI.

For example, you can choose to run Python 3.8 tests with MySQL as the backend and with MySQL version 8 as follows:

    breeze --python 3.8 --backend mysql --mysql-version 8.0

Note

Note for Windows WSL2 users

You may find error messages:

    Current context is now "..."
    protocol not available
    Error 1 returned

Try adding `--builder=default` to your command. For example:

    breeze --builder=default --python 3.8 --backend mysql --mysql-version 8.0

The choices you make are persisted in the `./.build/` cache directory so that the next time you use the breeze script, it can use the values that were used previously. This way you do not have to specify them when you run the script. You can delete the `.build/` directory if you want to restore the default settings.

Parameter values that can be stored persistently in the cache are marked with >VALUE< in the help of the commands.
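To illustrate how the `--python` and `--backend` flags combine into a CI-like matrix, the sketch below only prints the commands rather than running them. The helper name `breeze_matrix` is made up, and the version and backend lists are assumptions chosen for the example; check `breeze --help` for the values your Breeze version accepts.

```shell
# Hypothetical helper: print one breeze command per Python/backend combination.
# The lists below are illustrative assumptions, not the official CI matrix.
breeze_matrix() {
    for python in 3.8 3.9; do
        for backend in sqlite mysql postgres; do
            echo "breeze --python ${python} --backend ${backend}"
        done
    done
}

breeze_matrix
```

Because each choice is persisted in the `.build/` cache, running one of the printed commands also changes the defaults used by later plain `breeze` invocations.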

To build documentation in Breeze, use the `build-docs` command:

    breeze build-docs

Results of the build can be found in the `docs/_build` folder.

The documentation build consists of three steps:

- verifying consistency of indexes

- building documentation

- spell checking

You can run only one of the last two stages by providing the `--spellcheck-only` or `--docs-only` flag.

    breeze build-docs --spellcheck-only

This process can take some time, so to make it shorter you can filter by package, using the flag `--package-filter <PACKAGE-NAME>`. The package name has to be one of the providers or `apache-airflow`. For instance, to use it with Amazon, the command would be:

    breeze build-docs --package-filter apache-airflow-providers-amazon

You can also use shorthand names as arguments instead of the full names of Airflow providers. To find the shorthand names, follow the instructions in "Generating short form names for Providers".

Often errors during documentation generation come from the docstrings of auto-api generated classes. During the docs build, auto-api generated files are stored in the `docs/_api` folder. This helps you easily identify where the problems with the documentation originated.

These are all available flags of build-docs command:

To get the short form name of a provider, skip the apache-airflow-providers- prefix of the full provider name. Then, in the remaining part, replace every dash ("-") with a dot (".").

Example: if the provider name is apache-airflow-providers-cncf-kubernetes, the short form is cncf.kubernetes.

Note: For building docs for the apache-airflow-providers index, use apache-airflow-providers as the short form name.
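The conversion rule above can be sketched as a small shell function. The name `short_provider_name` is made up for this example and is not a Breeze command; names without the provider prefix (such as the `apache-airflow-providers` index itself) are passed through unchanged, following the note above.

```shell
# Hypothetical helper: convert a full provider package name to its short form.
short_provider_name() {
    case "$1" in
        apache-airflow-providers-*)
            # Strip the prefix, then replace every dash with a dot.
            printf '%s\n' "${1#apache-airflow-providers-}" | tr '-' '.'
            ;;
        *)
            # Not a provider package name: leave it as it is.
            printf '%s\n' "$1"
            ;;
    esac
}

short_provider_name apache-airflow-providers-cncf-kubernetes   # prints cncf.kubernetes
```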

You can run static checks via Breeze. You can also run them via the `pre-commit` command, but with auto-completion Breeze makes it easier to run selective static checks. If you press <TAB> after `static-checks` and you have auto-complete set up, you should see an auto-completable list of all available checks.

For example, the following command:

    breeze static-checks --type mypy-core

will run the mypy check for currently staged files inside `airflow/`, excluding providers.

Pre-commit checks run by default on staged changes. They run on all the files you ran `git add` on and ignore any changes that you have made but not staged. If you want to run them on all your modified files, you should add them with the `git add` command.

With `--all-files` you can run static checks on all files in the repository. This is useful when you want to be sure they will not fail in CI, or when you just rebased your changes and want to re-run the latest pre-commits on your changes, but it can take a long time (a few minutes) to wait for the result.

    breeze static-checks --type mypy-core --all-files

The above will run the mypy check for all files.

You can limit that by selecting specific files you want to run static checks on. You can do that by specifying the `--file` flag (it can be used multiple times).

    breeze static-checks --type mypy-core --file airflow/utils/code_utils.py --file airflow/utils/timeout.py

The above will run the mypy check for those two files (note: autocomplete should work for the file selection).

However, often you do not remember the files you modified and you want to run checks for files that belong to specific commits you already have in your branch. You can use `breeze static-checks` to run the checks only on changed files you have already committed to your branch: for a specific commit, for the last commit, for all changes in your branch since you branched off from main, or for a specific range of commits you choose.

    breeze static-checks --type mypy-core --last-commit

The above will run the mypy check for all files in the last commit in your branch.

    breeze static-checks --type mypy-core --only-my-changes

The above will run the mypy check for all commits in your branch which were added since you branched off from main.

    breeze static-checks --type mypy-core --commit-ref 639483d998ecac64d0fef7c5aa4634414065f690

The above will run the mypy check for all files in the 639483d998ecac64d0fef7c5aa4634414065f690 commit. Any commit-ish reference from Git will work here (branch, tag, short/long hash etc.)

    breeze static-checks --type identity --verbose --from-ref HEAD^^^^ --to-ref HEAD

The above will run the check for the last 4 commits in your branch. You can use any commit-ish references in the `--from-ref` and `--to-ref` flags.

These are all available flags of static-checks command:

Note

When you run static checks, some of the artifacts (mypy_cache) are stored in a docker-compose volume so that static checks can run significantly faster. However, sometimes the cache might get broken, in which case you should run `breeze down` to clean up the cache.

Note

You cannot change the Python version for static checks that are run within Breeze containers. The `--python` flag has no effect for them. They are always run with the lowest supported Python version. The main reason is to keep the results of static checks consistent and to make sure that our code is fine when running the lowest supported version.

For testing Airflow you often want to start multiple components (in multiple terminals). Breeze has a built-in `start-airflow` command that starts the Breeze container, launches multiple terminals using tmux, and launches all necessary Airflow components in those terminals.

When you are starting Airflow from local sources, www asset compilation is automatically executed beforehand.

    breeze --python 3.8 --backend mysql start-airflow

You can also use it to start a different executor.

    breeze start-airflow --executor CeleryExecutor

You can also use it to start any released version of Airflow from PyPI with the `--use-airflow-version` flag, which is useful for testing and looking at issues raised for a specific version.

    breeze start-airflow --python 3.8 --backend mysql --use-airflow-version 2.7.0

When you are installing a version from PyPI, it is also possible to specify extras that should be used when installing Airflow. You can provide several extras separated by commas, for example to install providers together with the Airflow version that you are installing. For example, when you are using the Celery executor in Airflow 2.7.0+ you need to add the celery extra.

    breeze start-airflow --use-airflow-version 2.7.0 --executor CeleryExecutor --airflow-extras celery

These are all available flags of the `start-airflow` command:

Often, if you want to run full Airflow in the Breeze environment, you need to launch multiple terminals and run `airflow webserver`, `airflow scheduler`, and `airflow worker` in separate terminals.

This can be achieved either via tmux or by exec-ing into the running container from the host. Tmux is installed inside the container and you can launch it with the `tmux` command. Tmux lets you create multiple virtual terminals and multiplex between them. More about tmux can be found on the tmux GitHub wiki page. Tmux has several useful shortcuts that allow you to split terminals, open new tabs, etc., so it is well worth learning.

Another way is to exec into the Breeze terminal from the host's terminal. Often you can have multiple terminals on the host (Linux/macOS/WSL2 on Windows) and you can simply use those terminals to enter the running container. It's as easy as launching `breeze exec` once you have started the Breeze environment. You will be dropped into bash, and environment variables will be read in the same way as when you enter the environment. You can do it multiple times and open as many terminals as you need.

These are all available flags of exec command:

The Airflow webserver needs www assets to be prepared (compiled with node and yarn). The `compile-www-assets` command takes care of it. This is needed when you want to run the webserver inside Breeze.

Sometimes you need to clean up your Docker environment (and it is recommended that you do so regularly). There are several reasons why you might want to do that.

Breeze uses Docker images heavily, and those images are rebuilt periodically and might leave dangling, unused images in the Docker cache. This might cause extra disk usage. Also, running various Docker Compose commands (for example running tests with `breeze testing tests`) might create additional Docker networks that might prevent new networks from being created. Those networks are not removed automatically by docker-compose. Breeze also uses its own cache to keep information about all images.

All those unused images, networks, and cache can be removed by running the `breeze cleanup` command. By default it will not remove the most recent images that you might need to run breeze commands, but you can also remove those Breeze images to clean up everything by adding the `--all` flag (note that you will need to build the images again from scratch, and pulling from the registry might take a while).

Breeze will ask you to confirm each step, unless you specify the `--answer yes` flag.

These are all available flags of cleanup command:

A more sophisticated way of using the Breeze shell is the `breeze shell` command. It has more parameters and you can also use it to execute arbitrary commands inside the container.

    breeze shell "ls -la"

Those are all available flags of the `shell` command:

You can launch an instance of Breeze pre-configured to emit StatsD metrics using `breeze start-airflow --integration statsd`. This will launch an Airflow webserver within the Breeze environment as well as containers running StatsD, Prometheus, and Grafana. The integration configures the "Targets" in Prometheus, the "Datasources" in Grafana, and includes a default dashboard in Grafana.

When you run Airflow Breeze with this integration, in addition to the standard ports (See "Port Forwarding" below), the following are also automatically forwarded:

- 29102 -> forwarded to StatsD Exporter -> breeze-statsd-exporter:9102

- 29090 -> forwarded to Prometheus -> breeze-prometheus:9090

- 23000 -> forwarded to Grafana -> breeze-grafana:3000

You can connect to these ports/databases using:

- StatsD Metrics: http://127.0.0.1:29102/metrics

- Prometheus Targets: http://127.0.0.1:29090/targets

- Grafana Dashboards: http://127.0.0.1:23000/dashboards

[Work in Progress] NOTE: This will launch the stack as described below but Airflow integration is still a Work in Progress. This should be considered experimental and likely to change by the time Airflow fully supports emitting metrics via OpenTelemetry.

You can launch an instance of Breeze pre-configured to emit OpenTelemetry metrics using `breeze start-airflow --integration otel`. This will launch Airflow within the Breeze environment as well as containers running OpenTelemetry-Collector, Prometheus, and Grafana. The integration handles all configuration of the "Targets" in Prometheus and the "Datasources" in Grafana, so it is ready to use.

When you run Airflow Breeze with this integration, in addition to the standard ports (See "Port Forwarding" below), the following are also automatically forwarded:

- 28889 -> forwarded to OpenTelemetry Collector -> breeze-otel-collector:8889

- 29090 -> forwarded to Prometheus -> breeze-prometheus:9090

- 23000 -> forwarded to Grafana -> breeze-grafana:3000

You can connect to these ports using:

- OpenTelemetry Collector: http://127.0.0.1:28889/metrics

- Prometheus Targets: http://127.0.0.1:29090/targets

- Grafana Dashboards: http://127.0.0.1:23000/dashboards

You can launch an instance of Breeze pre-configured to emit OpenLineage metrics using `breeze start-airflow --integration openlineage`. This will launch an Airflow webserver within the Breeze environment as well as containers running a [Marquez](https://marquezproject.ai/) webserver and API server.

When you run Airflow Breeze with this integration, in addition to the standard ports (See "Port Forwarding" below), the following are also automatically forwarded:

- MARQUEZ_API_HOST_PORT (default 25000) -> forwarded to Marquez API -> marquez:5000

- MARQUEZ_API_ADMIN_HOST_PORT (default 25001) -> forwarded to Marquez Admin API -> marquez:5001

- MARQUEZ_HOST_PORT (default 23100) -> forwarded to Marquez -> marquez_web:3000

You can connect to these services using:

- Marquez Webserver: http://127.0.0.1:23100

- Marquez API: http://127.0.0.1:25000/api/v1

- Marquez Admin API: http://127.0.0.1:25001

Make sure to substitute the port numbers if you have customized them via the above env vars.
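If you have customized the ports, the URLs can be derived from the same environment variables. The sketch below is only a convenience illustration: the function name `marquez_urls` is made up, while the variable names and default ports are the ones listed above.

```shell
# Hypothetical helper: print the Marquez URLs, honouring the port variables
# listed above and falling back to their documented defaults.
marquez_urls() {
    echo "Marquez Webserver: http://127.0.0.1:${MARQUEZ_HOST_PORT:-23100}"
    echo "Marquez API: http://127.0.0.1:${MARQUEZ_API_HOST_PORT:-25000}/api/v1"
    echo "Marquez Admin API: http://127.0.0.1:${MARQUEZ_API_ADMIN_HOST_PORT:-25001}"
}

marquez_urls
```

The `${VAR:-default}` expansion uses the default only when the variable is unset or empty, so the output matches the list above unless you have overridden a port.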

After starting up, the environment runs in the background and takes quite some memory which you might want to free for other things you are running on your host.

You can always stop it via:

    breeze down

These are all available flags of the `down` command:

If you are having problems with the Breeze environment, try the steps below. After each step you can check whether your problem is fixed.

- If you are on macOS, check if you have enough disk space for Docker (Breeze will warn you if not).

- Stop Breeze with `breeze down`.

- Git fetch the origin and git rebase the current branch with the main branch.

- Delete the `.build` directory and run `breeze ci-image build`.

- Clean up Docker images via the `breeze cleanup` command.

- Restart your Docker Engine and try again.

- Restart your machine and try again.

- Re-install Docker Desktop and try again.

Note

If pip is taking a significant amount of time and your internet connection prevents pip from downloading the libraries within the default timeout, it is advisable to increase the default timeout as follows and run Breeze again.

export PIP_DEFAULT_TIMEOUT=1000

In case the problems are not solved, you can set the VERBOSE_COMMANDS variable to "true":

export VERBOSE_COMMANDS="true"

Then run the failed command, copy-and-paste the output from your terminal to the Airflow Slack #airflow-breeze channel and describe your problem.

Warning

Some operating systems (Fedora, ArchLinux, RHEL, Rocky) have recently introduced Kernel changes that result in Airflow in Breeze consuming 100% memory when run inside the community Docker implementation maintained by the OS teams.

This is an issue with backwards-incompatible containerd configuration that some of Airflow dependencies have problems with and is tracked in a few issues:

There is no solution yet from the containerd team, but it seems that installing Docker Desktop on Linux solves the problem, as stated in this comment, and allows running Breeze with no problems.

When running breeze start-airflow, the following output might be observed:

    Skip fixing ownership of generated files as Host OS is darwin
    Waiting for asset compilation to complete in the background.
    Still waiting .....
    Still waiting .....
    Still waiting .....
    Still waiting .....
    Still waiting .....
    Still waiting .....
    The asset compilation is taking too long.
    If it does not complete soon, you might want to stop it and remove file lock:
    * press Ctrl-C
    * run 'rm /opt/airflow/.build/www/.asset_compile.lock'
    Still waiting .....
    Still waiting .....
    Still waiting .....
    Still waiting .....
    Still waiting .....
    Still waiting .....
    Still waiting .....
    The asset compilation failed. Exiting.
    [INFO] Locking pre-commit directory
    Error 1 returned

This timeout can be increased by setting `ASSET_COMPILATION_WAIT_MULTIPLIER`; a reasonable number could be 3-4.

    export ASSET_COMPILATION_WAIT_MULTIPLIER=3

This error is actually caused by the following error during the asset compilation, which resulted in ETIMEDOUT when the npm command was trying to install the required packages:

    npm ERR! code ETIMEDOUT
    npm ERR! syscall connect
    npm ERR! errno ETIMEDOUT
    npm ERR! network request to https://registry.npmjs.org/yarn failed, reason: connect ETIMEDOUT 2606:4700::6810:1723:443
    npm ERR! network This is a problem related to network connectivity.
    npm ERR! network In most cases you are behind a proxy or have bad network settings.
    npm ERR! network
    npm ERR! network If you are behind a proxy, please make sure that the
    npm ERR! network 'proxy' config is set properly. See: 'npm help config'

In this situation, notice that the IP address in the error (2606:4700::6810:1723, followed by port 443) is in IPv6 format, which was the reason why the connection did not go through the router, as the router did not support IPv6 addresses in its DNS lookup. In this case, disabling IPv6 on the host machine and using IPv4 instead resolved the issue.

A similar issue could happen if you are behind an HTTP/HTTPS proxy and your access to the required websites is blocked by it, or if your proxy settings are not configured properly.

Airflow Breeze is a Python script serving as a "swiss-army-knife" of Airflow testing. Under the hood it uses other scripts that you can also run manually if you have problems running the Breeze environment. The Breeze script allows performing the following tasks:

You can run tests with breeze. There are various test types and breeze allows you to run different test

types easily. You can run unit tests in different ways, either interactively with the default

shell command or via the testing commands. The latter allows you to run more kinds of tests easily.

Here is the detailed set of options for the breeze testing command.

You can simply enter the breeze container in an interactive shell (via breeze or the more comprehensive

breeze shell command) or use your local virtualenv and run the pytest command there.

This is the best way if you want to interactively run selected tests and iterate on them.

The good thing about the breeze interactive shell is that it has all the dependencies to run all the tests

and it has a running and configured backend database started for you when you decide to run DB tests.

It also has auto-complete enabled for the pytest command so that you can easily run the tests you want

(autocomplete should help you with completing the test name if you start typing pytest tests<TAB>).

Here are a few examples:

Running single test:

pytest tests/core/test_core.py::TestCore::test_check_operators

To run the whole test class:

pytest tests/core/test_core.py::TestCore

You can re-run the tests interactively, add extra parameters to pytest and modify the files before

re-running the test to iterate over the tests. You can also add more flags when starting the

breeze shell command when you run integration tests or system tests. Read more details about it

in the testing doc where all the test types and information on how to run them are explained.

This applies to all kinds of tests - all our tests can be run using pytest.

Another option is to run tests via the built-in breeze testing tests command - which

is a "swiss-army-knife" of unit testing with Breeze. This command has a lot of parameters and is very

flexible, and thus might be a bit overwhelming.

In most cases if you want to run tests you want to use the dedicated breeze testing db-tests

or breeze testing non-db-tests commands that automatically run groups of tests and allow you to choose

a subset of tests to run (with the --parallel-test-types flag).

The advantage of the breeze testing tests command is that you can easily specify a subset of the tests - including

selecting specific Providers tests to run.

For example this will only run provider tests for airbyte and http providers:

breeze testing tests --test-type "Providers[airbyte,http]"

You can also exclude tests for some providers from being run when the whole "Providers" test type is run.

For example this will run tests for all providers except amazon and google provider tests:

breeze testing tests --test-type "Providers[-amazon,google]"

You can also run parallel tests with the --run-in-parallel flag - by default it will run all test types

in parallel, but you can specify the test types that you want to run with a space-separated list of test

types passed to the --parallel-test-types flag.

For example this will run API and WWW tests in parallel:

breeze testing tests --parallel-test-types "API WWW" --run-in-parallel

There are a few special types of tests that you can run:

- All - all tests are run in single pytest run.

- All-Postgres - runs all tests that require Postgres database

- All-MySQL - runs all tests that require MySQL database

- All-Quarantine - runs all tests that are in quarantine (marked with @pytest.mark.quarantined decorator)
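The `Providers[...]` selection syntax shown earlier in this section can be read as "a list of providers, optionally prefixed with `-` to exclude them". The sketch below illustrates that reading in Python. It is not Breeze's own parser, and the function name `parse_providers_test_type` is hypothetical.

```python
import re
from typing import List, Tuple


def parse_providers_test_type(spec: str) -> Tuple[str, List[str]]:
    """Split a 'Providers[...]' test type into a mode and provider names.

    A leading '-' inside the brackets means the listed providers are excluded.
    """
    match = re.fullmatch(r"Providers\[(-?)([^\]]+)\]", spec)
    if match is None:
        raise ValueError(f"not a Providers[...] test type: {spec!r}")
    mode = "exclude" if match.group(1) else "include"
    providers = [name.strip() for name in match.group(2).split(",")]
    return mode, providers


if __name__ == "__main__":
    print(parse_providers_test_type("Providers[airbyte,http]"))
    print(parse_providers_test_type("Providers[-amazon,google]"))
```

The second call reports both amazon and google as excluded, matching the description of the `Providers[-amazon,google]` example.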

Here is the detailed set of options for the breeze testing tests command.

The breeze testing db-tests command is a simplified version of the breeze testing tests command

that only allows you to run tests that are bound to a database - in parallel, utilising all your CPUs.

The DB-bound tests are the ones that require a database to be started and configured separately for

each test type run and they are run in parallel containers/parallel docker compose projects to

utilise multiple CPUs your machine has - thus allowing you to quickly run a few groups of tests in parallel.

This command is used in CI to run DB tests.

By default this command will run complete set of test types we have, thus allowing you to see result

of all DB tests we have but you can choose a subset of test types to run by --parallel-test-types

flag or exclude some test types by specifying --excluded-parallel-test-types flag.

Run all DB tests:

breeze testing db-tests

Only run DB tests from "API CLI WWW" test types:

breeze testing db-tests --parallel-test-types "API CLI WWW"

Run all DB tests excluding those in CLI and WWW test types:

breeze testing db-tests --excluded-parallel-test-types "CLI WWW"

Here is the detailed set of options for the breeze testing db-tests command.
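If you script these invocations, the space-separated test type list has to be passed as a single argument. The following sketch builds the argument list in Python, using only the `--parallel-test-types` and `--excluded-parallel-test-types` flags shown above. The helper name `db_tests_command` is hypothetical and not part of Breeze.

```python
import shlex
from typing import List, Optional, Sequence


def db_tests_command(
    include: Optional[Sequence[str]] = None,
    exclude: Optional[Sequence[str]] = None,
) -> List[str]:
    """Build the argv for `breeze testing db-tests` with optional filters."""
    argv = ["breeze", "testing", "db-tests"]
    if include:
        # Test types are passed as one space-separated argument.
        argv += ["--parallel-test-types", " ".join(include)]
    if exclude:
        argv += ["--excluded-parallel-test-types", " ".join(exclude)]
    return argv


if __name__ == "__main__":
    # Print shell-quoted commands instead of running them.
    print(shlex.join(db_tests_command(include=["API", "CLI", "WWW"])))
    print(shlex.join(db_tests_command(exclude=["CLI", "WWW"])))
```

The resulting list can be handed to `subprocess.run(argv, check=True)` on a machine where Breeze is installed.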

The breeze testing non-db-tests command is a simplified version of the breeze testing tests command

that only allows you to run tests that are not bound to a database - in parallel, utilising all your CPUs.

The non-DB-bound tests are the ones that do not expect a database to be started and configured and we can

utilise multiple CPUs your machine has via pytest-xdist plugin - thus allowing you to quickly

run a few groups of tests in parallel using a single container rather than many of them as is the case for

DB-bound tests. This command is used in CI to run Non-DB tests.

By default this command will run the complete set of test types we have, thus allowing you to see the result

of all non-DB tests we have, but you can choose a subset of test types to run with the --parallel-test-types

flag or exclude some test types by specifying the --excluded-parallel-test-types flag.

Run all non-DB tests:

breeze testing non-db-tests

Only run non-DB tests from "API CLI WWW" test types:

breeze testing non-db-tests --parallel-test-types "API CLI WWW"

Run all non-DB tests excluding those in CLI and WWW test types:

breeze testing non-db-tests --excluded-parallel-test-types "CLI WWW"

Here is the detailed set of options for the breeze testing non-db-tests command.

You can also run integration tests via built-in breeze testing integration-tests command. Some of our

tests require additional integrations to be started in docker-compose. The integration tests command will

run the expected integration and tests that need that integration.

For example this will only run kerberos tests:

breeze testing integration-tests --integration kerberos

Here is the detailed set of options for the breeze testing integration-tests command.

You can use Breeze to run all Helm unit tests. Those tests are run inside the breeze image as all the

necessary tools are installed there. Those tests merely check that our Helm chart renders

as expected when given a set of configuration parameters. The tests can be run in parallel if you have

multiple CPUs by specifying the --run-in-parallel flag - in which case they will run in separate containers

(one per helm-test package) in parallel.

You can also iterate over those tests with pytest commands, similarly as in case of regular unit tests.

The helm tests can be found in tests/chart folder in the main repo.

You can use Breeze to run all docker-compose tests. Those tests are run using the Production image and they test the Quick-start docker compose we have.

You can also iterate over those tests with the pytest command, but - unlike regular unit tests and

Helm tests - they need to be run in a local virtual environment. They also require the

DOCKER_IMAGE environment variable to be set, pointing to the image to test, if you do not run them

through the breeze testing docker-compose-tests command.

The docker-compose tests are in docker-tests/ folder in the main repo.

Breeze helps with running Kubernetes tests in the same environment/way as CI tests are run. Breeze helps to set up a KinD cluster for testing, sets up a virtualenv and automatically downloads the right tools to run the tests.

You can:

- Setup environment for k8s tests with breeze k8s setup-env

- Build airflow k8S images with breeze k8s build-k8s-image

- Manage KinD Kubernetes cluster and upload image and deploy Airflow to KinD cluster via breeze k8s create-cluster, breeze k8s configure-cluster, breeze k8s deploy-airflow, breeze k8s status, breeze k8s upload-k8s-image, breeze k8s delete-cluster commands

- Run Kubernetes tests specified with breeze k8s tests command

- Run complete test run with breeze k8s run-complete-tests - performing the full cycle of creating cluster, uploading the image, deploying airflow, running tests and deleting the cluster

- Enter the interactive kubernetes test environment with breeze k8s shell and breeze k8s k9s command

- Run multi-cluster-operations breeze k8s list-all-clusters and breeze k8s delete-all-clusters commands as well as running complete tests in parallel via breeze k8s dump-logs command

This is described in detail in Testing Kubernetes.

You can read more about KinD, which we use, in the KinD documentation.

Here is the detailed set of options for the breeze k8s command.

Kubernetes environment can be set with the breeze k8s setup-env command.

It will create appropriate virtualenv to run tests and download the right set of tools to run

the tests: kind, kubectl and helm in the right versions. You can re-run the command

when you want to make sure the expected versions of the tools are installed properly in the

virtualenv. The Virtualenv is available in .build/.k8s-env/bin subdirectory of your Airflow

installation.

You can create kubernetes cluster (separate cluster for each python/kubernetes version) via

breeze k8s create-cluster command. With --force flag the cluster will be

deleted if exists. You can also use it to create multiple clusters in parallel with

--run-in-parallel flag - this is what happens in our CI.

All parameters of the command are here:

You can delete current kubernetes cluster via breeze k8s delete-cluster command. You can also add

--run-in-parallel flag to delete all clusters.

All parameters of the command are here:

Before deploying the Airflow Helm Chart, you need to make sure the appropriate Airflow image is built (it has

embedded test dags and pod templates, and the webserver is configured to refresh immediately). This can

be done via the breeze k8s build-k8s-image command. It can also be done in parallel for all images via the

--run-in-parallel flag.

All parameters of the command are here:

The K8S airflow images need to be uploaded to the KinD cluster. This can be done via

breeze k8s upload-k8s-image command. It can also be done in parallel for all images via

--run-in-parallel flag.

All parameters of the command are here:

In order to deploy Airflow, the cluster needs to be configured. Airflow namespace needs to be created

and test resources should be deployed. By passing --run-in-parallel the configuration can be run

for all clusters in parallel.

All parameters of the command are here:

Airflow can be deployed to the Cluster with breeze k8s deploy-airflow. This step will automatically

(unless disabled by switches) rebuild the image to be deployed. It also uses the latest version

of the Airflow Helm Chart to deploy it. You can also choose to upgrade existing airflow deployment

and pass extra arguments to helm install or helm upgrade commands that are used to

deploy airflow. By passing --run-in-parallel the deployment can be run

for all clusters in parallel.

All parameters of the command are here:

You can check the status of the kubernetes cluster and airflow deployed in the current cluster

via the breeze k8s status command. The status can also be checked for all clusters created so far by passing the

--all flag.

All parameters of the command are here:

You can run breeze k8s tests command to run pytest tests with your cluster. Those tests are placed

in kubernetes_tests/ and you can either specify the tests to run as parameter of the tests command or

you can leave them empty to run all tests. By passing --run-in-parallel the tests can be run

for all clusters in parallel.

Run all tests:

Run selected tests:

All parameters of the command are here:

You can also specify any pytest flags as extra parameters - they will be passed to the

shell command directly. In case the shell parameters are the same as the parameters of the command, you

can pass them after --. For example this is the way how you can see all available parameters of the shell

you have:

The options that are not overlapping with the tests command options can be passed directly and mixed

with the specifications of tests you want to run. For example the command below will only run

test_kubernetes_executor.py and will suppress capturing output from Pytest so that you can see the

output during test execution.

You can run breeze k8s run-complete-tests command to combine all previous steps in one command. That

command will create cluster, deploy airflow and run tests and finally delete cluster. It is used in CI

to run the whole chains in parallel.
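For orientation, the individual `breeze k8s` commands described in the preceding sections can be listed in the order this document introduces them. This is only a sketch of the manual sequence. Whether `run-complete-tests` performs exactly these steps internally is an assumption, and the names `K8S_STEPS` and `plan_lines` are hypothetical.

```python
import shlex
from typing import List

# Manual steps, in the order the sections of this document describe them.
K8S_STEPS: List[List[str]] = [
    ["breeze", "k8s", "setup-env"],
    ["breeze", "k8s", "create-cluster"],
    ["breeze", "k8s", "build-k8s-image"],
    ["breeze", "k8s", "upload-k8s-image"],
    ["breeze", "k8s", "configure-cluster"],
    ["breeze", "k8s", "deploy-airflow"],
    ["breeze", "k8s", "tests"],
    ["breeze", "k8s", "delete-cluster"],
]


def plan_lines(steps: List[List[str]]) -> List[str]:
    """Render each step as a numbered, shell-quoted command line."""
    return [
        f"{number}. {shlex.join(argv)}"
        for number, argv in enumerate(steps, start=1)
    ]


if __name__ == "__main__":
    # Only prints the plan; nothing is executed.
    print("\n".join(plan_lines(K8S_STEPS)))
```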

Run all tests:

Run selected tests:

All parameters of the command are here:

You can also specify any pytest flags as extra parameters - they will be passed to the

shell command directly. In case the shell parameters are the same as the parameters of the command, you

can pass them after --. For example this is the way how you can see all available parameters of the shell

you have:

The options that are not overlapping with the tests command options can be passed directly and mixed

with the specifications of tests you want to run. For example the command below will only run

test_kubernetes_executor.py and will suppress capturing output from Pytest so that you can see the

output during test execution.

You can have multiple clusters created - with different versions of Kubernetes and Python at the same time.

Breeze enables you to interact with the chosen cluster by entering dedicated shell session that has the

cluster pre-configured. This is done via breeze k8s shell command.

Once you are in the shell, the prompt will indicate which cluster you are interacting with as well as executor you use, similar to:

The shell automatically activates the virtual environment that has all appropriate dependencies installed and you can interactively run all k8s tests with the pytest command (of course the cluster needs to be created and airflow deployed to it before running the tests):

All parameters of the command are here:

You can also specify any shell flags and commands as extra parameters - they will be passed to the

shell command directly. In case the shell parameters are the same as the parameters of the command, you

can pass them after --. For example this is the way how you can see all available parameters of the shell

you have:

k9s is a fantastic tool that allows you to interact with a running k8s cluster. Since we support

multiple clusters, breeze k8s k9s allows you to start k9s without setting it up or

downloading it - it uses the k9s docker image to run it and connects it to the right cluster.

All parameters of the command are here:

You can also specify any k9s flags and commands as extra parameters - they will be passed to the

k9s command directly. In case the k9s parameters are the same as the parameters of the command, you

can pass them after --. For example this is the way how you can see all available parameters of the

k9s you have:

KinD allows you to export logs from the running cluster so that you can troubleshoot your deployment.

This can be done with breeze k8s logs command. Logs can be also dumped for all clusters created

so far by passing --all flag.

All parameters of the command are here:

The image building is usually run for users automatically when needed, but sometimes Breeze users might want to manually build, pull or verify the CI images.

For all development tasks, unit tests, integration tests, and static code checks, we use the CI image maintained in GitHub Container Registry.

The CI image is built automatically as needed, however it can be rebuilt manually with the

ci-image build command.

Building the image first time pulls a pre-built version of images from the Docker Hub, which may take some

time. But for subsequent source code changes, no wait time is expected.

However, changes to sensitive files like setup.py or Dockerfile.ci will trigger a rebuild

that may take more time though it is highly optimized to only rebuild what is needed.

Breeze has a built-in mechanism to check whether your local image has diverged too much from the latest image built on CI. This might happen when, for example, the latest patches have been released as new Python images or when significant changes are made in the Dockerfile. In such cases, Breeze will download the latest images before rebuilding because this is usually faster than rebuilding the image.

These are all available flags of ci-image build command:

You can also pull the CI images locally in parallel with optional verification.

These are all available flags of pull command:

Finally, you can verify CI image by running tests - either with the pulled/built images or with an arbitrary image.

These are all available flags of verify command:

Users can also build Production images when they are developing them. However when you want to use the PROD image, the regular docker build commands are recommended. See building the image

The Production image is also maintained in GitHub Container Registry for Caching

and in apache/airflow manually pushed for released versions. This Docker image (built using official

Dockerfile) contains size-optimised Airflow installation with selected extras and dependencies.

However in many cases you want to add your own custom version of the image - with added apt dependencies,

python dependencies, additional Airflow extras. Breeze's prod-image build command helps to build your own,

customized variant of the image that contains everything you need.

You can build the production image manually by using the prod-image build command.

Note that the images can also be built using the docker build command by passing appropriate

build-args as described in IMAGES.rst, but Breeze provides several flags that

make it easier to do. You can see all the flags by running breeze prod-image build --help,

but typical examples are presented here:

breeze prod-image build --additional-airflow-extras "jira"

This installs the additional jira extra while installing airflow in the image.

breeze prod-image build --additional-python-deps "torchio==0.17.10"

This installs an additional PyPI dependency - torchio in the specified version.

breeze prod-image build --additional-dev-apt-deps "libasound2-dev" \
    --additional-runtime-apt-deps "libasound2"

This installs additional apt dependencies - libasound2-dev in the build image and libasound2 in the

final image. Those are development dependencies that might be needed to build and use python packages added

via the --additional-python-deps flag. The dev dependencies are not installed in the final

production image, they are only installed in the build "segment" of the production image that is used

as an intermediate step to build the final image. Usually names of the dev dependencies end with the -dev

suffix and they also need to be paired with the corresponding runtime dependency added for the runtime image

(without -dev).

breeze prod-image build --python 3.8 --additional-dev-apt-deps "libasound2-dev" \
    --additional-runtime-apt-deps "libasound2"

Same as above but uses python 3.8.

These are all available flags of build-prod-image command:

You can also pull PROD images in parallel with optional verification.

These are all available flags of pull-prod-image command:

Finally, you can verify PROD image by running tests - either with the pulled/built images or with an arbitrary image.

These are all available flags of verify-prod-image command:

Breeze has tools that you can use to configure defaults and breeze behaviours and perform some maintenance operations that might be necessary when you add new commands in Breeze. It also allows you to configure your host operating system for Breeze autocompletion.

These are all available flags of setup command:

You can configure and inspect settings of Breeze command via this command: Python version, Backend used as well as backend versions.

Another part of configuration is enabling/disabling cheatsheet, asciiart. The cheatsheet and asciiart can be disabled - they are "nice looking" and cheatsheet contains useful information for first time users but eventually you might want to disable both if you find it repetitive and annoying.

With the config command you can also set colour-blind-friendly communication for Breeze messages. By default we communicate

with the users about information/errors/warnings/successes via colour-coded messages, but we can switch

it off by passing --no-colour to config, in which case the messages to the user printed by Breeze

will be printed using different schemes (italic/bold/underline) to indicate different kinds of messages

rather than colours.

These are all available flags of setup config command:

You get the auto-completion working when you re-enter the shell (follow the instructions printed). The command will warn you and not reinstall autocomplete if you already did, but you can also force reinstalling the autocomplete via:

breeze setup autocomplete --force

These are all available flags of setup-autocomplete command:

You can display Breeze version and with --verbose flag it can provide more information: where

Breeze is installed from and details about setup hashes.

These are all available flags of version command:

You can self-upgrade breeze automatically. These are all available flags of self-upgrade command:

This documentation contains exported images with "help" of their commands and parameters. You can

regenerate those images that need to be regenerated because their commands changed (usually after

the breeze code has been changed) via regenerate-command-images command. Usually this is done

automatically via pre-commit, but sometimes (for example when rich or rich-click library changes)

you need to regenerate those images.

You can add the --force flag (or the FORCE="true" environment variable) to regenerate all images (not

only those that need regeneration). You can also run the command with the --check-only flag to simply

check if there are any images that need regeneration.

When you add a breeze command or modify a parameter, you are also supposed to make sure that "rich groups"

for the command are present and that all parameters are assigned to the right group so they can be

nicely presented in --help output. You can check that via the check-all-params-in-groups command.

Breeze has a number of commands that are mostly used in the CI environment to perform cleanup.

Breeze requires certain resources to be available - disk, memory, CPU. When you enter Breeze's shell,

the resources are checked and information on whether there are enough resources is displayed. However you can

manually run the resource check at any time with the breeze ci resource-check command.

These are all available flags of resource-check command:

When our CI runs a job, it needs all the memory and disk it can have. We have a Breeze command that frees the memory and disk space used. You can also use it to clear space locally but it performs a few operations that might be a bit invasive - such as removing the swap file and completely pruning the docker disk space used.

These are all available flags of free-space command:

On Linux, there is a problem with propagating ownership of created files (a known Docker problem). The files and directories created in the container are not owned by the host user (but by the root user in our case). This may prevent you from switching branches, for example, if files owned by the root user are created within your sources. If you are on a Linux host and have some files in your sources created by the root user, you can fix the ownership of those files by running:

breeze ci fix-ownership

These are all available flags of fix-ownership command:

When our CI runs a job, it needs to decide which tests to run, whether to build images and how much the tests should be run on multiple combinations of Python, Kubernetes and Backend versions, in order to optimize the time needed to run the CI builds. You can also use the tool to see what tests will be run when you provide a specific commit that Breeze should run the tests on.

The selective-check command will produce the set of name=value pairs of outputs derived

from the context of the commit/PR to be merged via stderr output.
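Because the outputs are plain name=value pairs, they are easy to collect into a dictionary once the stderr text has been captured. The sketch below shows one way to do that in Python. The function name `parse_outputs` and the output names in the sample are hypothetical placeholders, not actual selective-check outputs.

```python
from typing import Dict


def parse_outputs(text: str) -> Dict[str, str]:
    """Collect name=value lines into a dict, ignoring lines without '='."""
    outputs: Dict[str, str] = {}
    for line in text.splitlines():
        # Split on the first '=' only, so values may themselves contain '='.
        name, separator, value = line.partition("=")
        if separator and name.strip():
            outputs[name.strip()] = value.strip()
    return outputs


if __name__ == "__main__":
    # Hypothetical sample text; real output names will differ.
    sample = "example-flag=true\nsome unrelated log line\nexample-list=a b c\n"
    print(parse_outputs(sample))
```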

More details about the algorithm used to pick the right tests and the available outputs can be found in Selective Checks.

These are all available flags of selective-check command:

When our CI runs a job, it might be within one of several workflows. Information about those workflows is stored in GITHUB_CONTEXT. Rather than using some jq/bash commands, we retrieve the necessary information (like PR labels, event_type, where the job runs on, job description) and convert it into GA outputs.
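To illustrate the idea of reading this information from a JSON context instead of jq/bash, here is a minimal Python sketch. It assumes the context is the JSON form of GitHub's `github` context, where `event_name` and `event.pull_request.labels[].name` are the relevant keys. Which fields Breeze actually reads is not stated here, and the function name `workflow_info` is hypothetical.

```python
import json
from typing import Any, Dict, List


def workflow_info(github_context: str) -> Dict[str, Any]:
    """Extract the event name and PR label names from a JSON context string."""
    context = json.loads(github_context)
    # Non-PR events have no pull_request entry, so fall back to an empty dict.
    pull_request = context.get("event", {}).get("pull_request") or {}
    labels: List[str] = [label["name"] for label in pull_request.get("labels", [])]
    return {"event_name": context.get("event_name"), "pr_labels": labels}


if __name__ == "__main__":
    # Hypothetical, heavily trimmed context for illustration only.
    sample = json.dumps(
        {
            "event_name": "pull_request",
            "event": {"pull_request": {"labels": [{"name": "example-label"}]}},
        }
    )
    print(workflow_info(sample))
```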

These are all available flags of get-workflow-info command:

Sometimes the CI build fails because pip times out when trying to resolve the latest set of dependencies.

For that we have the find-backtracking-candidates command. This command will try to find the

candidate dependencies that might cause the backtracking.

The details on how to use that command are explained in Figuring out backtracking dependencies.

These are all available flags of find-backtracking-candidates command:

Maintainers also can use Breeze for other purposes (those are commands that regular contributors likely do not need or have no access to run). Those are usually connected with releasing Airflow:

Running airflow release commands is part of the release procedure performed by the release managers and it is described in detail in dev.

You can prepare airflow packages using Breeze:

breeze release-management prepare-airflow-package

This prepares the airflow .whl package in the dist folder.

You can specify the optional --package-format flag to build selected formats of airflow packages;

the default is to build both types of packages, sdist and wheel.

breeze release-management prepare-airflow-package --package-format=wheel

When we create a new minor branch of Airflow, we need to perform a few maintenance tasks. This command automates it.

breeze release-management create-minor-branch

When we prepare release candidate, we automate some of the steps we need to do.

breeze release-management start-rc-process

When we prepare final release, we automate some of the steps we need to do.

breeze release-management start-release

The Production image can be released by release managers who have permissions to push the image. This happens only when there is an RC candidate or final version of Airflow released.

You release "regular" and "slim" images as separate steps.

Releasing "regular" images:

breeze release-management release-prod-images --airflow-version 2.4.0

Or "slim" images:

breeze release-management release-prod-images --airflow-version 2.4.0 --slim-images

By default when you are releasing the "final" image, we also tag the image with "latest" tags, but this

step can be skipped if you pass the --skip-latest flag.

These are all of the available flags for the release-prod-images command:

Preparing provider release is part of the release procedure by the release managers and it is described in detail in dev.

You can use Breeze to prepare provider documentation.

The example below performs documentation preparation for provider packages.

breeze release-management prepare-provider-documentation

You can also add --answer yes to perform a non-interactive build.

You can use Breeze to prepare provider packages.

The packages are prepared in the dist folder. Note that this command cleans up the dist folder

before running, so you should run it before generating the airflow package, as otherwise the airflow package will be removed.

The example below builds provider packages in the wheel format.

breeze release-management prepare-provider-packages

If you run this command without packages, you will prepare all packages. You can, however, specify

providers that you would like to build. By default both types of packages are prepared

(wheel and sdist), but you can change it by providing the optional --package-format flag.

breeze release-management prepare-provider-packages google amazon

You can see all providers available by running this command:

breeze release-management prepare-provider-packages --help

In some cases we want to just see if the provider packages generated can be installed with airflow without

verifying them. This happens automatically on CI for sdist packages but you can also run it manually if you

just prepared provider packages and they are present in the dist folder.

breeze release-management install-provider-packages

You can also run the verification with an earlier airflow version to check for compatibility.

breeze release-management install-provider-packages --use-airflow-version 2.4.0

All the command parameters are here:

Breeze can also be used to verify if provider classes are importable and if they are following the

right naming conventions. This happens automatically on CI but you can also run it manually if you

just prepared provider packages and they are present in dist folder.

breeze release-management verify-provider-packages

You can also run the verification with an earlier airflow version to check for compatibility.

breeze release-management verify-provider-packages --use-airflow-version 2.4.0

All the command parameters are here:

The release manager can generate providers metadata per provider version - information about provider versions including the associated Airflow version for the provider version (i.e. the first airflow version released after the provider has been released) and the date of the release of the provider version.

These are all of the available flags for the generate-providers-metadata command:

You can use Breeze to generate a provider issue when you release new providers.

To publish the documentation generated by build-docs in Breeze to airflow-site,

use the release-management publish-docs command:

breeze release-management publish-docs

Publishing the documentation consists of the following steps:

- checking out the latest main of cloned airflow-site

- copying the documentation to airflow-site

- running post-docs scripts on the docs to generate back referencing HTML for new versions of docs

breeze release-management publish-docs --package-filter apache-airflow-providers-amazon

The flag --package-filter can be used to selectively publish docs during a release. It can take

values such as apache-airflow, helm-chart, apache-airflow-providers, or any individual providers.

The documentation publication happens based on this flag.

breeze release-management publish-docs --override-versioned

The flag --override-versioned is a boolean flag that is used to override the versioned directories

while publishing the documentation.

breeze release-management publish-docs --airflow-site-directory

You can also use shorthand names as arguments instead of using the full names for airflow providers. To find the shorthand names, follow the instructions in the "Generating short form names for Providers" section.

The flag --airflow-site-directory takes the path of the cloned airflow-site. The command will

not proceed if this is an invalid path.

When you have a multi-processor machine, docs publishing can be vastly sped up by using the --run-in-parallel option when

publishing docs for multiple providers.

These are all available flags of release-management publish-docs command:

Skip the apache-airflow-providers- prefix from the usual provider full names.

Then, in the remaining part, replace every dash ("-") with a dot (".").

Example:

If the provider name is apache-airflow-providers-cncf-kubernetes, it will be cncf.kubernetes.
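The two steps above can be sketched in Python as follows. This is only an illustration of the naming rule as described here. The function name `short_provider_name` is hypothetical and not part of Breeze.

```python
PREFIX = "apache-airflow-providers-"


def short_provider_name(full_name: str) -> str:
    """Convert a full provider package name to its short form."""
    if not full_name.startswith(PREFIX):
        raise ValueError(f"not a provider package name: {full_name!r}")
    # Drop the common prefix, then turn the remaining dashes into dots.
    return full_name[len(PREFIX):].replace("-", ".")


if __name__ == "__main__":
    print(short_provider_name("apache-airflow-providers-cncf-kubernetes"))
```

For the example name above this yields cncf.kubernetes.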

To add back references to the documentation generated by build-docs in Breeze to airflow-site,

use the release-management add-back-references command. This is important to support backward compatibility

of the airflow documentation.

You have to specify which packages you run it on. For example, you can run it for all providers:

breeze release-management add-back-references --airflow-site-directory DIRECTORY all-providers

The --airflow-site-directory flag takes the path of the cloned airflow-site. The command will not proceed if this is an invalid path.

You can also run the command for apache-airflow (core documentation):

breeze release-management add-back-references --airflow-site-directory DIRECTORY apache-airflow

Also for the helm-chart package:

breeze release-management add-back-references --airflow-site-directory DIRECTORY helm-chart

You can also manually specify a list of packages to run the command for (it is auto-completable), including individual providers. You can mix apache-airflow, helm-chart and provider packages this way:

breeze release-management add-back-references --airflow-site-directory DIRECTORY apache.airflow apache.beam google

These are all available flags of the release-management add-back-references command:

Whenever setup.py gets modified, the CI main job will re-generate constraint files. Those constraint files are stored in separate orphan branches: constraints-main, constraints-2-0.

Those are constraint files as described in detail in the CONTRIBUTING.rst#pinned-constraint-files contributing documentation.

You can use the breeze release-management generate-constraints command to manually generate constraints for all or selected Python versions and a single constraints mode, like this:

breeze release-management generate-constraints --airflow-constraints-mode constraints

Warning

In order to generate constraints, you need to build all images with the --upgrade-to-newer-dependencies flag, for all Python versions.

Constraints are generated separately for each Python version, and there are separate constraints modes:

- "constraints" - those are constraints generated by matching the current airflow version from sources and providers that are installed from PyPI. Those are constraints used by the users who want to install airflow with pip.

- "constraints-source-providers" - those are constraints generated by using providers installed from current sources. While adding new providers their dependencies might change, so this set of providers is the current set of the constraints for airflow and providers from the current main sources. Those providers are used by the CI system to keep a "stable" set of constraints.

- "constraints-no-providers" - those are constraints generated from only Apache Airflow, without any providers. If you want to manage airflow separately and then add providers individually, you can use those.
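As a rough illustration of how the three modes and the per-Python-version split combine, the sketch below builds one file name per mode and Python version. It assumes the generated files follow a `<mode>-<python version>.txt` naming pattern, which this section does not state; the helper `constraints_file_name` is hypothetical and not part of Breeze.

```python
# The three modes listed above.
CONSTRAINTS_MODES = (
    "constraints",
    "constraints-source-providers",
    "constraints-no-providers",
)


def constraints_file_name(mode: str, python_version: str) -> str:
    """Return an assumed file name for one mode and one Python version.

    Hypothetical helper: the "<mode>-<version>.txt" pattern is an assumption.
    """
    if mode not in CONSTRAINTS_MODES:
        raise ValueError(f"Unknown constraints mode: {mode!r}")
    return f"{mode}-{python_version}.txt"


# One file per (mode, Python version) pair.
for mode in CONSTRAINTS_MODES:
    print(constraints_file_name(mode, "3.8"))
```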

These are all available flags of generate-constraints command:

In case someone modifies setup.py, the scheduled CI tests automatically upgrade and push changes to the constraint files. However, you can also perform a test run of this locally using the procedure described in Manually generating image cache and constraints, which utilises multiple processors on your local machine to generate such constraints faster.

This bumps the constraint files to the latest versions and stores the hash of setup.py. The generated constraint and setup.py hash files are stored in the files folder, and while the constraints are being generated, a diff of changes against the previous constraint files is printed.

Sometimes (very rarely) we might want to update individual packages in constraints that we generated and tagged already in the past. This can be done using the breeze release-management update-constraints command.

These are all available flags of update-constraints command:

You can read more details about what happens when you update constraints in Manually generating image cache and constraints.

Maintainers can also use Breeze for SBOM generation:

Thanks to our constraints captured for all versions of Airflow, we can easily generate SBOM information for Apache Airflow. SBOM information describes Airflow dependencies in a form that our users can consume to determine whether security issues in dependencies affect them. The SBOM information is written directly to docs-archive in the airflow-site repository.

These are all of the available flags for the update-sbom-information command:

In order to generate provider requirements, we need Docker images with all Airflow versions pre-installed. Such images are built with the build-all-airflow-images command.

This command builds one Docker image per Python version, with all compatible Airflow versions >=2.0.0.

In order to generate SBOM information for providers, we need to generate requirements for them. This is done by the generate-providers-requirements command. This command generates requirements for the selected provider and Python version, using the Airflow version specified.

Breeze keeps data for all its integrations in named Docker volumes. Each backend and integration keeps data in its own volume. Those volumes persist until you run the breeze down command. You can also preserve the volumes by adding the --preserve-volumes flag when you run the command. Then, the next time you start Breeze, it will have the data pre-populated.

These are all available flags of down command:

To shrink the Docker image, not all tools are pre-installed in the Docker image. But we have made sure that there is an easy process to install additional tools.

Additional tools are installed in /files/bin. This path is added to $PATH, so your shell will automatically autocomplete files that are in that directory. You can also keep the binaries for your tools in this directory if you need to.

Installation scripts

For development convenience, we have also provided installation scripts for commonly used tools. They are installed to /files/opt/, so they are preserved after restarting the Breeze environment. Each script is also available in $PATH, so just type install_<TAB> to get a list of tools.

Currently available scripts:

- install_aws.sh - installs the AWS CLI
- install_az.sh - installs the Azure CLI
- install_gcloud.sh - installs the Google Cloud SDK including gcloud, gsutil.
- install_imgcat.sh - installs imgcat - Inline Images Protocol for iTerm2 (Mac OS only)
- install_java.sh - installs the OpenJDK 8u41
- install_kubectl.sh - installs the Kubernetes command-line tool, kubectl
- install_snowsql.sh - installs SnowSQL
- install_terraform.sh - installs Terraform

When Breeze starts, it can start additional integrations. Those are additional docker containers that are started in the same docker-compose command. Those are required by some of the tests as described in TESTING.rst#airflow-integration-tests.

By default, Breeze starts only the airflow container without any integration enabled. If you selected the postgres or mysql backend, the container for the selected backend is also started (but only the one that is selected). You can start the additional integrations by passing the --integration flag with the appropriate integration name when starting Breeze. You can specify several --integration flags to start more than one integration at a time.

Finally, you can specify --integration all-testable to start all testable integrations and --integration all to enable all integrations.

Once an integration is started, it will continue to run until the environment is stopped with the breeze down command.

Note that running integrations uses significant resources - CPU and memory.
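For scripted use, repeating the --integration flag can be sketched as below. This only shows how the argument list is assembled; the helper `integration_args` is hypothetical, and "mongo" and "kerberos" are placeholder integration names chosen for illustration.

```python
from typing import Iterable, List


def integration_args(integrations: Iterable[str]) -> List[str]:
    """Repeat the --integration flag once per requested integration.

    Hypothetical helper for illustration only, not a Breeze API.
    """
    args: List[str] = []
    for name in integrations:
        args.extend(["--integration", name])
    return args


# Placeholder integration names; substitute the ones your tests need.
command = ["breeze", *integration_args(["mongo", "kerberos"])]
print(" ".join(command))  # breeze --integration mongo --integration kerberos
```

Passing `["all-testable"]` or `["all"]` to the same helper produces the single-flag forms described above.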

You can set up your host IDE (for example, JetBrains PyCharm or IntelliJ IDEA) to work with Breeze and benefit from all the features provided by your IDE, such as local and remote debugging, language auto-completion, documentation support, etc.

To use your host IDE with Breeze:

- Create a local virtual environment:

  You can use any of the following wrappers to create and manage your virtual environments: pyenv, pyenv-virtualenv, or virtualenvwrapper.

- Use the right command to activate the virtualenv (workon if you use virtualenvwrapper or pyenv activate if you use pyenv).

- Initialize the created local virtualenv:

  ./scripts/tools/initialize_virtualenv.py

  Warning

  Make sure that you use the right Python version in this command - matching the Python version you have in your local virtualenv. If you don't, you will get strange conflicts.

- Select the virtualenv you created as the project's default virtualenv in your IDE.

Note that you can also use the local virtualenv for Airflow development without Breeze. This is a lightweight solution that has its own limitations.

More details on using the local virtualenv are available in the LOCAL_VIRTUALENV.rst.

When you are in the CI container, the following directories are used:

- /opt/airflow - Contains sources of Airflow mounted from the host (AIRFLOW_SOURCES).
- /root/airflow - Contains all the "dynamic" Airflow files (AIRFLOW_HOME), such as:
  - airflow.db - sqlite database in case sqlite is used;
  - logs - logs from Airflow executions;
  - unittest.cfg - unit test configuration generated when entering the environment;
  - webserver_config.py - webserver configuration generated when running Airflow in the container.
- /files - files mounted from the "files" folder in your sources. You can edit them in the host as well.
  - dags - this is the folder where Airflow DAGs are read from
  - airflow-breeze-config - this is where you can keep your own customization configuration of breeze

Note that when running in your local environment, the /root/airflow/logs folder is actually mounted from your logs directory in the Airflow sources, so all logs created in the container are automatically visible in the host as well. Every time you enter the container, the logs directory is cleaned so that logs do not accumulate.

When you are in the production container, the following directories are used:

- /opt/airflow - Contains sources of Airflow mounted from the host (AIRFLOW_SOURCES).
- /root/airflow - Contains all the "dynamic" Airflow files (AIRFLOW_HOME), such as:
  - airflow.db - sqlite database in case sqlite is used;
  - logs - logs from Airflow executions;
  - unittest.cfg - unit test configuration generated when entering the environment;
  - webserver_config.py - webserver configuration generated when running Airflow in the container.
- /files - files mounted from the "files" folder in your sources. You can edit them in the host as well.
  - dags - this is the folder where Airflow DAGs are read from

Note that when running in your local environment, the /root/airflow/logs folder is actually mounted from your logs directory in the Airflow sources, so all logs created in the container are automatically visible in the host as well. Every time you enter the container, the logs directory is cleaned so that logs do not accumulate.

Sometimes during the build, you are asked whether to perform an action, skip it, or quit. This happens when rebuilding or removing an image and in a few other cases: actions that take a lot of time or could be potentially destructive. You can force an answer to the questions by providing the --answer flag in the commands that support it.

For automation scripts, you can export the ANSWER variable and set it to y, n, q, yes, no, or quit, in any combination of upper and lower case.

export ANSWER="yes"
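To make the accepted values concrete, here is a minimal sketch of how such a value could be normalized in an automation script. It is not Breeze's actual implementation; the function `normalize_answer` and the canonical forms it returns are assumptions made for illustration.

```python
from typing import Optional

# Accepted spellings (lower-cased) mapped to an assumed canonical form.
_ANSWERS = {
    "y": "yes", "yes": "yes",
    "n": "no", "no": "no",
    "q": "quit", "quit": "quit",
}


def normalize_answer(raw: Optional[str]) -> Optional[str]:
    """Map y/n/q/yes/no/quit in any letter case to yes, no or quit.

    Hypothetical helper; returns None when no answer was provided.
    """
    if raw is None:
        return None
    key = raw.strip().lower()
    if key not in _ANSWERS:
        raise ValueError(f"Unsupported answer: {raw!r}")
    return _ANSWERS[key]


# In a script, the raw value would typically come from os.environ.get("ANSWER").
print(normalize_answer("Yes"))  # yes
```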

Important sources of Airflow are mounted inside the airflow container that you enter. This means that you can continue editing your changes on the host in your favourite IDE and have them visible in the Docker container immediately and ready to test without rebuilding images. You can modify mounting