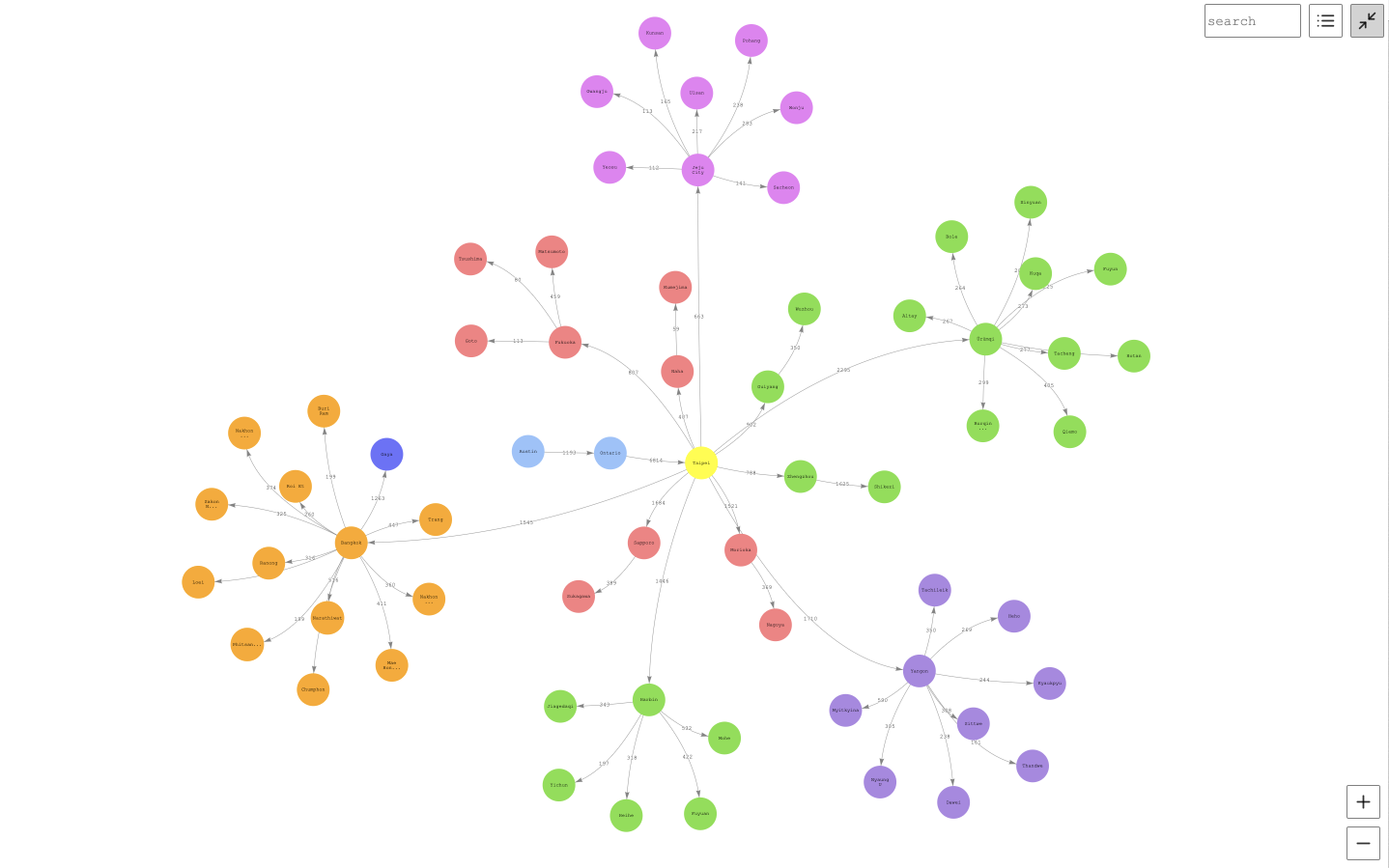

The graph notebook provides an easy way to interact with graph databases using Jupyter notebooks. Using this open-source Python package, you can connect to any graph database that supports the Apache TinkerPop, openCypher or the RDF SPARQL graph models. These databases could be running locally on your desktop or in the cloud. Graph databases can be used to explore a variety of use cases including knowledge graphs and identity graphs.

Instructions are provided for connecting to the following graph databases:

| Endpoint | Graph model | Query language |
|---|---|---|
| Gremlin Server | property graph | Gremlin |
| Blazegraph | RDF | SPARQL |
| Amazon Neptune | property graph or RDF | Gremlin or SPARQL |

We encourage others to contribute configurations they find useful. There is an additional-databases folder where more information can be found.

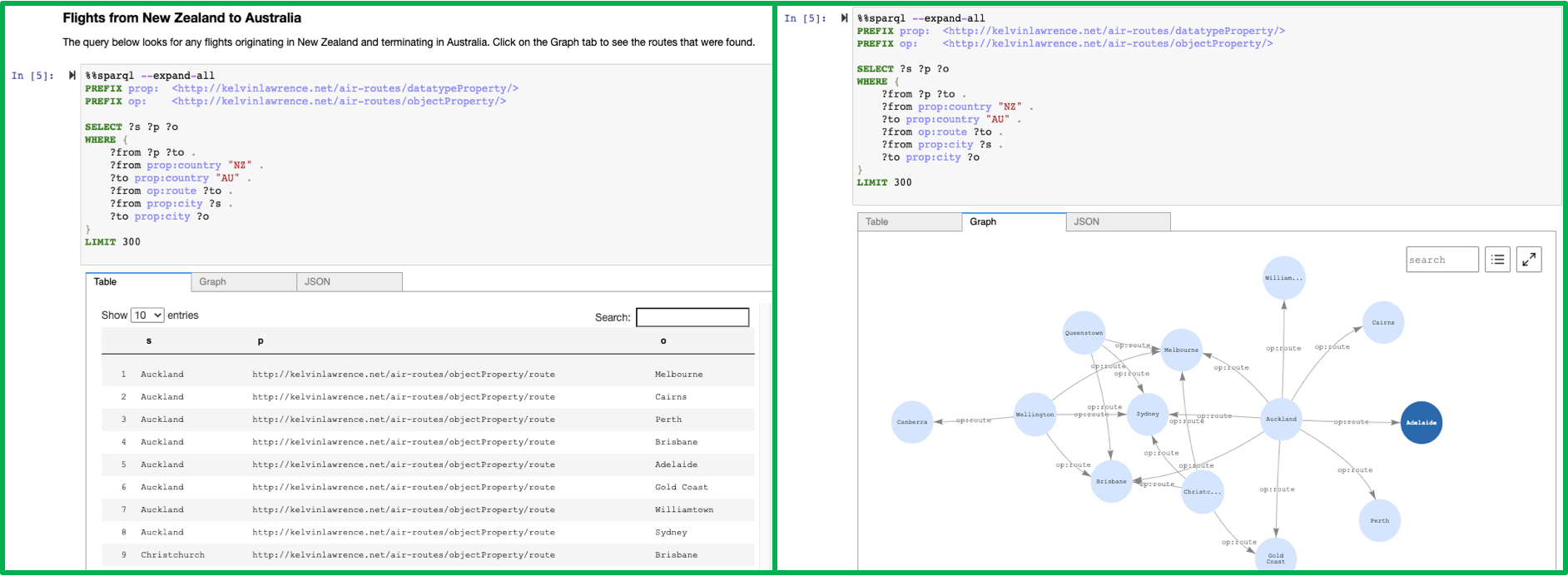

%%sparql - Executes a SPARQL query against your configured database endpoint.

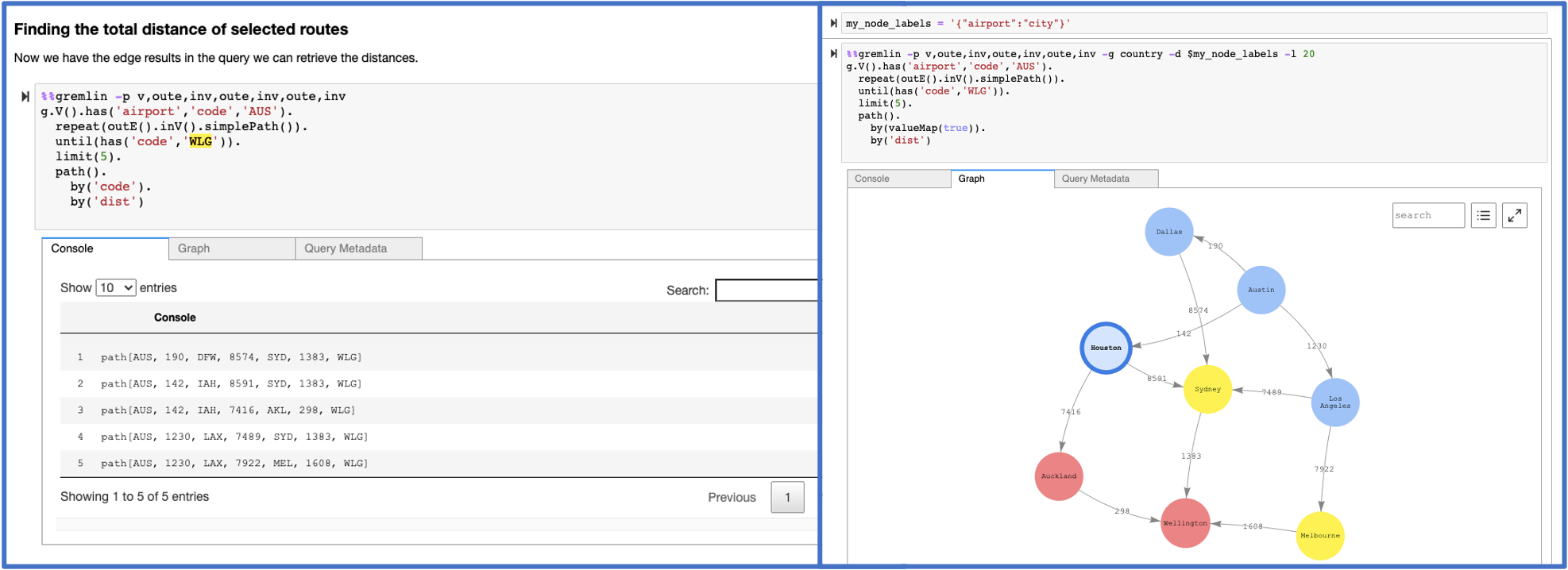

%%gremlin - Executes a Gremlin query against your database using web sockets. The results are similar to those a Gremlin console would return.

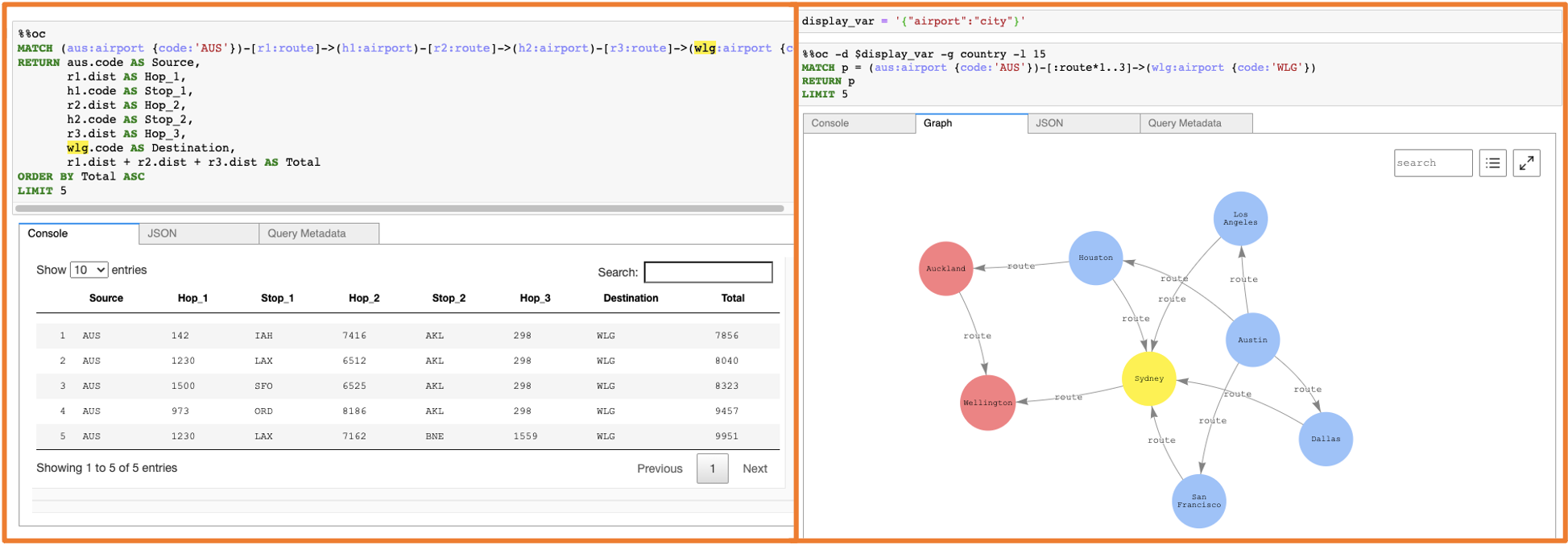

%%opencypher or %%oc - Executes an openCypher query against your database.

%%graph_notebook_config - Sets the executing notebook's database configuration to the JSON payload provided in the cell body.

%%graph_notebook_vis_options - Sets the executing notebook's vis.js options to the JSON payload provided in the cell body.

%%neptune_ml - Set of commands to integrate with NeptuneML functionality. Documentation

TIP 👉 There is syntax highlighting for %%sparql, %%gremlin and %%oc cells to help you structure your queries more easily.

%gremlin_status - Obtain the status of Gremlin queries. Documentation

%sparql_status - Obtain the status of SPARQL queries. Documentation

%opencypher_status or %oc_status - Obtain the status of openCypher queries.

%load - Generate a form to submit a bulk loader job. Documentation

%load_ids - Get ids of bulk load jobs. Documentation

%load_status - Get the status of a provided load_id. Documentation

%neptune_ml - Set of commands to integrate with NeptuneML functionality. You can find a set of tutorial notebooks here. Documentation

%status - Check the Health Status of the configured host endpoint. Documentation

%seed - Provides a form to add data to your graph without the use of a bulk loader. Supports both RDF and Property Graph data models.

%stream_viewer - Interactively explore the Neptune CDC stream (if enabled).

%graph_notebook_config - Returns a JSON payload that contains connection information for your host.

%graph_notebook_host - Set the host endpoint to send queries to.

%graph_notebook_version - Prints the version of the graph-notebook package.

%graph_notebook_vis_options - Prints the vis.js options being used for rendered graphs.

TIP 👉 You can list all the magics installed in the Python 3 kernel using the %lsmagic command.

TIP 👉 Many of the magic commands support a --help option in order to provide additional information.

This project includes many example Jupyter notebooks, and we recommend exploring them. The sample notebooks explain all of the commands and features supported by graph-notebook in detail, with examples. You can find them here. As the project has evolved, many new features have been added. If you are already familiar with graph-notebook and want a quick summary of the new features, a good place to start is the Air-Routes notebooks in the 02-Visualization folder.

We recommend checking the ChangeLog.md file periodically to keep up to date as new features are added.

You will need:

- Python 3.6.13-3.9.7
- RDFLib 5.0.0
- A graph database that provides one or more of:
  - A SPARQL 1.1 endpoint
  - An Apache TinkerPop Gremlin Server compatible endpoint
  - An endpoint compatible with openCypher
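Before installing, it can help to confirm that the interpreter falls inside the Python range listed above. The following is a minimal sketch, not part of graph-notebook: the helper name `is_supported` and the two constants are hypothetical, and the bounds are simply copied from the requirements list.

```python
import sys

# Bounds copied from the requirements list above (assumed inclusive).
MIN_VERSION = (3, 6, 13)
MAX_VERSION = (3, 9, 7)


def is_supported(version=None):
    """Return True if a (major, minor, micro) tuple lies within the stated range."""
    if version is None:
        version = tuple(sys.version_info[:3])
    return MIN_VERSION <= tuple(version[:3]) <= MAX_VERSION


if __name__ == "__main__":
    current = ".".join(str(part) for part in sys.version_info[:3])
    status = "within" if is_supported() else "outside"
    print(f"Python {current} is {status} the stated range")
```

Tuple comparison in Python is lexicographic, so the check handles major, minor, and micro components without any string parsing.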

```
# pin specific versions of required dependencies
pip install rdflib==5.0.0

# install the package
pip install graph-notebook

# install and enable the visualization widget
jupyter nbextension install --py --sys-prefix graph_notebook.widgets
jupyter nbextension enable --py --sys-prefix graph_notebook.widgets

# copy static html resources
python -m graph_notebook.static_resources.install
python -m graph_notebook.nbextensions.install

# copy premade starter notebooks
python -m graph_notebook.notebooks.install --destination ~/notebook/destination/dir

# start jupyter
python -m graph_notebook.start_notebook --notebooks-dir ~/notebook/destination/dir
```

In a new cell in the Jupyter notebook, change the configuration using %%graph_notebook_config and modify the fields for host, port, and ssl. For a local Gremlin server (HTTP or WebSockets), you can use the following command:

```
%%graph_notebook_config
{
  "host": "localhost",
  "port": 8182,
  "ssl": false
}
```

To set up a new local Gremlin Server for use with the graph notebook, check out additional-databases/gremlin server.
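If you generate the local Gremlin Server configuration from Python rather than typing it by hand, a small helper can catch type mistakes before the cell is run. This is only a sketch under stated assumptions: `make_config` is a hypothetical helper, not a graph-notebook function, and it covers just the three fields (host, port, ssl) used in the local Gremlin Server example above.

```python
import json


def make_config(host, port, ssl):
    """Validate host, port, and ssl, then return JSON text for the cell body."""
    if not isinstance(host, str) or not host:
        raise ValueError("host must be a non-empty string")
    # bool is a subclass of int in Python, so reject it explicitly for port.
    if isinstance(port, bool) or not isinstance(port, int) or not 0 < port < 65536:
        raise ValueError("port must be an integer between 1 and 65535")
    if not isinstance(ssl, bool):
        raise ValueError("ssl must be True or False")
    return json.dumps({"host": host, "port": port, "ssl": ssl}, indent=2)


# Print text that can be pasted into a new notebook cell.
print("%%graph_notebook_config")
print(make_config("localhost", 8182, False))
```

Serializing with `json.dumps` turns Python's `False` into the lowercase JSON `false` that the cell body expects.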

Change the configuration using %%graph_notebook_config and modify the fields for host, port, and ssl. For a local Blazegraph database, you can use the following command:

```
%%graph_notebook_config
{
  "host": "localhost",
  "port": 9999,
  "ssl": false,
  "sparql": {
    "path": "sparql"
  }
}
```

You can also use Blazegraph namespaces by specifying the path that graph-notebook should use when sending SPARQL queries, as in the following configuration:

```
%%graph_notebook_config
{
  "host": "localhost",
  "port": 9999,
  "ssl": false,
  "sparql": {
    "path": "blazegraph/namespace/foo/sparql"
  }
}
```

This will result in the URL localhost:9999/blazegraph/namespace/foo/sparql being used when executing any %%sparql magic commands.
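The way the host, port, and SPARQL path combine into that URL can be sketched in a few lines of Python. This illustrates the composition only and is not graph-notebook's actual implementation: `sparql_url` is a hypothetical helper, and both the `http`/`https` scheme choice based on `ssl` and the fallback path of `sparql` are assumptions.

```python
def sparql_url(config):
    """Compose a SPARQL endpoint URL from a graph-notebook style config dict."""
    # Assumption: ssl=true maps to https and ssl=false maps to http.
    scheme = "https" if config.get("ssl", False) else "http"
    # Assumption: fall back to "sparql" when no path is configured.
    path = config.get("sparql", {}).get("path", "sparql").strip("/")
    return f"{scheme}://{config['host']}:{config['port']}/{path}"


namespaced = {
    "host": "localhost",
    "port": 9999,
    "ssl": False,
    "sparql": {"path": "blazegraph/namespace/foo/sparql"},
}
print(sparql_url(namespaced))
```

Stripping leading and trailing slashes from the path keeps the result free of doubled slashes if the path is written as `/blazegraph/namespace/foo/sparql`.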

To set up a new local Blazegraph database for use with the graph notebook, check out the Quick Start from Blazegraph.

Change the configuration using %%graph_notebook_config and modify the defaults as they apply to your Neptune cluster:

```
%%graph_notebook_config
{
  "host": "your-neptune-endpoint",
  "port": 8182,
  "auth_mode": "DEFAULT",
  "load_from_s3_arn": "",
  "ssl": true,
  "aws_region": "your-neptune-region"
}
```

To set up a new Amazon Neptune cluster, check out the Amazon Web Services documentation.

When connecting the graph notebook to Neptune, make sure your network is set up to communicate with the VPC that Neptune runs in. If not, you can follow this guide.

If you are running a SigV4 authenticated endpoint, ensure that your configuration has auth_mode set to IAM:

```
%%graph_notebook_config
{
  "host": "your-neptune-endpoint",
  "port": 8182,
  "auth_mode": "IAM",
  "load_from_s3_arn": "",
  "ssl": true,
  "aws_region": "your-neptune-region"
}
```

Additionally, you should have the following Amazon Web Services credentials available in a location accessible to Boto3:

- Access Key ID

- Secret Access Key

- Default Region

- Session Token (optional; needed only if you are using temporary credentials)

These variables must follow a specific naming convention, as listed in the Boto3 documentation.

A list of all locations checked for Amazon Web Services credentials can also be found here.
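When the credentials are supplied through environment variables, a quick check from a notebook cell can show which ones are missing. The sketch below uses the standard Boto3 environment variable names; the helper `missing_credentials` is hypothetical, and it inspects environment variables only, so credentials stored in any of the other locations Boto3 checks (such as a shared credentials file) will not be detected.

```python
import os

# Standard Boto3 environment variable names for the credentials listed above.
REQUIRED = ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY", "AWS_DEFAULT_REGION")
OPTIONAL = ("AWS_SESSION_TOKEN",)  # only needed for temporary credentials


def missing_credentials(environ=None):
    """Return the required variable names that are unset or empty."""
    environ = os.environ if environ is None else environ
    return [name for name in REQUIRED if not environ.get(name)]


missing = missing_credentials()
if missing:
    print("Not set in the environment:", ", ".join(missing))
else:
    print("All required credential variables are set in the environment.")
```

The function accepts any mapping, which makes it easy to try against a plain dictionary without touching the real environment.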

See CONTRIBUTING for more information.

This project is licensed under the Apache-2.0 License.