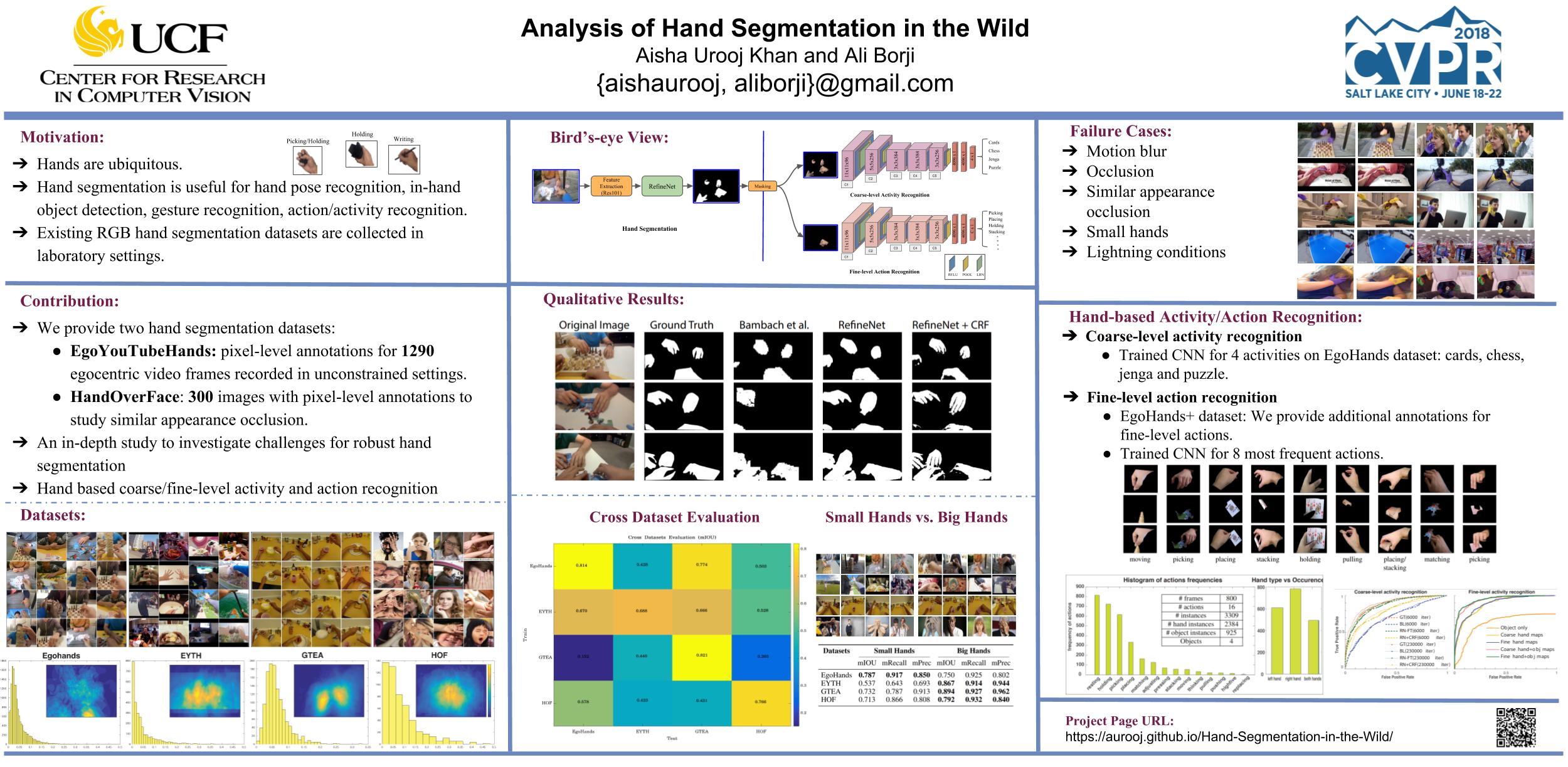

A large number of works in egocentric vision have concentrated on action and object recognition. Detection and segmentation of hands in first-person videos, however, has been less explored. For many applications in this domain, it is necessary to accurately segment not only the hands of the camera wearer but also the hands of others with whom the wearer is interacting. Here, we take an in-depth look at the hand segmentation problem. In the quest for robust hand segmentation methods, we evaluated the performance of state-of-the-art semantic segmentation methods, off the shelf and fine-tuned, on existing datasets. We fine-tune RefineNet, a leading semantic segmentation method, for hand segmentation and find that it does much better than the best contenders. Existing hand segmentation datasets are collected in laboratory settings. To overcome this limitation, we contribute two new datasets: a) EgoYouTubeHands, which includes egocentric videos containing hands in the wild, and b) HandOverFace, which we use to analyze the performance of our models in the presence of similar-appearance occlusions. We further explore whether conditional random fields can help refine generated hand segmentations. To demonstrate the benefit of accurate hand maps, we train a CNN for hand-based activity recognition and achieve higher accuracy when the CNN is trained using hand maps produced by the fine-tuned RefineNet. Finally, we annotate a subset of the EgoHands dataset for fine-grained action recognition and show that an accuracy of 58.6% can be achieved by just looking at a single hand pose, which is much better than the chance level (12.5%).

We have uploaded the additional files needed to train, test, and evaluate our models. Code for multiscale evaluation is also provided. See the `refinenet_files` folder.
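The evaluation scripts themselves live in the repository and are not reproduced here. As a rough illustration of what scoring a predicted hand mask against a ground-truth mask can look like, the sketch below computes intersection-over-union (IoU), a common segmentation metric. It is written in Python for illustration only; the function name `hand_iou` and the nested-list mask format are assumptions made for this example and are not taken from the provided files.

```python
def hand_iou(pred_mask, gt_mask):
    """Intersection-over-union of two binary masks.

    Both masks are nested lists of 0/1 values with identical shape
    (an assumed format chosen for this sketch).
    """
    if len(pred_mask) != len(gt_mask):
        raise ValueError("masks must have the same number of rows")

    intersection = 0
    union = 0
    for pred_row, gt_row in zip(pred_mask, gt_mask):
        if len(pred_row) != len(gt_row):
            raise ValueError("mask rows must have the same length")
        for p, g in zip(pred_row, gt_row):
            if p and g:
                intersection += 1  # pixel is hand in both masks
            if p or g:
                union += 1  # pixel is hand in at least one mask

    # Assumed convention: two empty masks count as a perfect match.
    if union == 0:
        return 1.0
    return intersection / union


predicted = [[0, 1, 1],
             [0, 1, 0]]
ground_truth = [[0, 1, 0],
                [0, 1, 1]]
print(hand_iou(predicted, ground_truth))  # 2 shared pixels / 4 in the union = 0.5
```

Averaging this score over all test images gives a mean IoU per dataset.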

To test the models:

- Download the RefineNet code from its GitHub repository.

- Copy the files provided in the `refinenet_files` folder to the `refinenet/main` folder.

- Place the RefineNet-based hand segmentation model (see Models section) in the `refinenet/model_trained` folder.

- For instance, to test the model trained on the EgoHands dataset, copy the `refinenet_res101_egohands.mat` file into the `refinenet/model_trained` folder. Set the path to the test images folder in `demo_refinenet_test_example_egohands.m` and run the script.

- The demo code is the same as the original RefineNet demo files except for minor changes.
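The copy steps above can be scripted. The following is a minimal sketch in Python, assuming the RefineNet code has already been downloaded to a local `refinenet` directory and the model file has been downloaded separately. The helper name `stage_refinenet_files` is hypothetical and is not part of this repository or of RefineNet.

```python
import shutil
from pathlib import Path


def stage_refinenet_files(extra_dir, refinenet_root, model_file):
    """Copy the provided files and one trained model into a RefineNet checkout.

    Returns the names of the files copied into <refinenet_root>/main.
    """
    extra_dir = Path(extra_dir)
    refinenet_root = Path(refinenet_root)
    model_file = Path(model_file)

    main_dir = refinenet_root / "main"
    model_dir = refinenet_root / "model_trained"
    # Create the target folders if the checkout does not have them yet.
    main_dir.mkdir(parents=True, exist_ok=True)
    model_dir.mkdir(parents=True, exist_ok=True)

    # Copy every file from refinenet_files into refinenet/main.
    copied = []
    for src in sorted(extra_dir.iterdir()):
        if src.is_file():
            shutil.copy2(src, main_dir / src.name)
            copied.append(src.name)

    # Place the trained model in refinenet/model_trained.
    shutil.copy2(model_file, model_dir / model_file.name)
    return copied
```

For the EgoHands example, a call such as `stage_refinenet_files("refinenet_files", "refinenet", "refinenet_res101_egohands.mat")` would stage the files. The path to the test images still has to be set by hand in `demo_refinenet_test_example_egohands.m` before the script is run in MATLAB.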

You can download our refinenet-based hand segmentation models using the links given below:

- refinenet_res101_egohands.mat

- refinenet_res101_eyth.mat

- refinenet_res101_gtea.mat

- refinenet_res101_hof.mat

We used four hand segmentation datasets in our work; two of them (the EgoYouTubeHands and HandOverFace datasets) were collected as part of our contribution:

- EgoHands dataset

- EgoYouTubeHands (EYTH) dataset [download]

- GTEA dataset

- HandOverFace (HOF) dataset [download]

Links to the videos used for the EYTH dataset are given below. Each video is 3-6 minutes long. We cleaned the dataset before annotation and discarded unnecessary frames (e.g., frames containing text or frames in which hands were out of view for a long time).

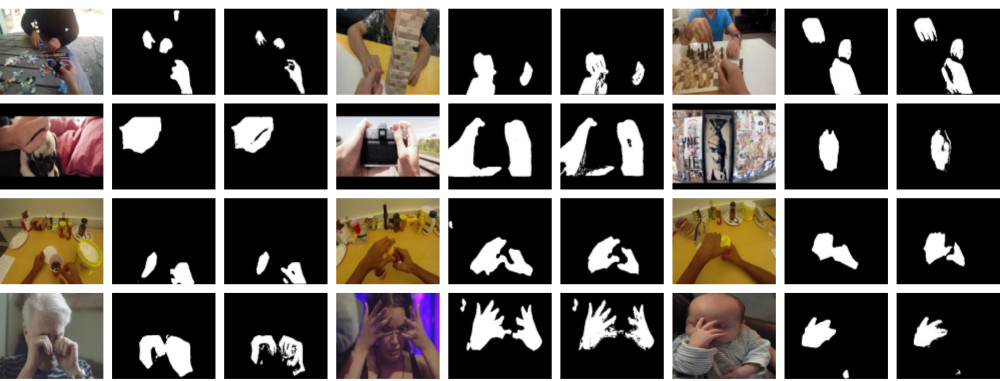

The test set for the HandOverFace dataset is uploaded here.

Example images from EgoYouTubeHands dataset:

Example images from HandOverFace dataset:

- EgoHands+ dataset: To study fine-level action recognition, we provide additional annotations for a subset of the EgoHands dataset. You can find more details here and download the dataset from this download link.

Hand segmentation results for all datasets:

We would like to thank undergraduate students Cristopher Matos and Jose-Valentin Sera-Josef and MS student Shiven Goyal for helping us with data annotation.

If this work and/or the datasets are useful for your research, please cite our paper.

Please contact '[email protected]'