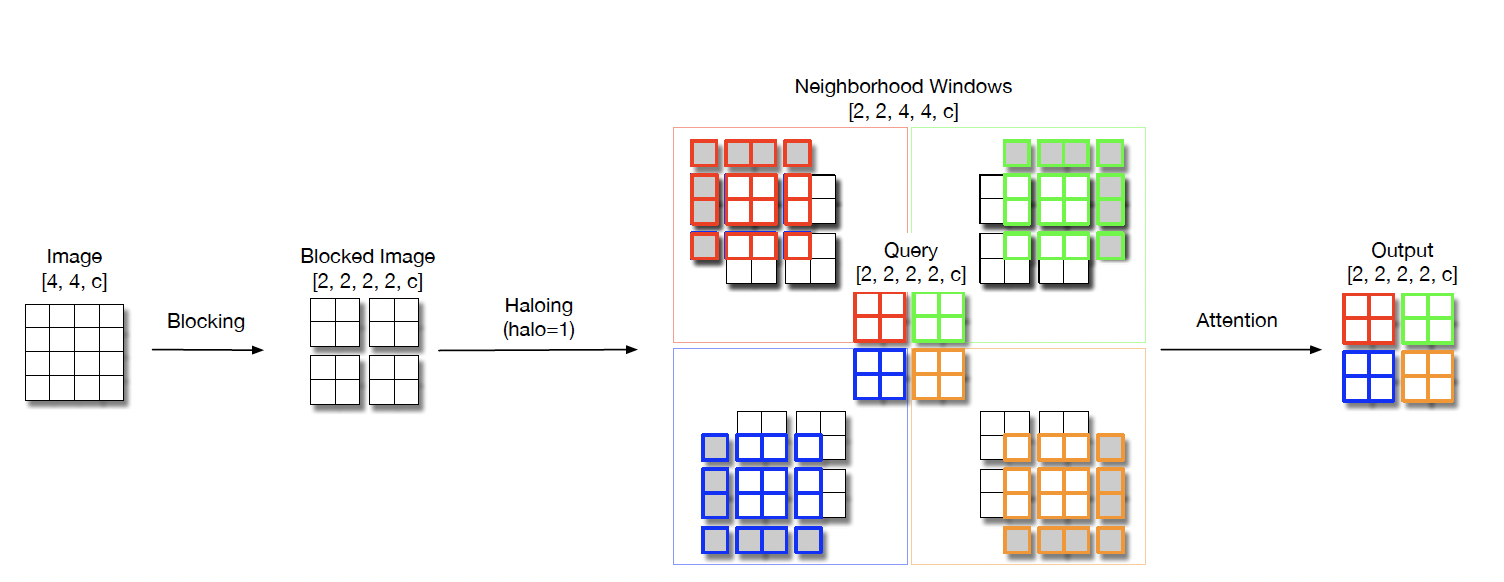

Scaling Local Self-Attention for Parameter Efficient Visual Backbones, arxiv

PaddlePaddle training/validation code and pretrained models for HaloNet.

No official PyTorch implementation is available.

This implementation is developed by PaddleViT.

- Update (2021-12-09): Initial code and ported weights are released.

| Model | Acc@1 | Acc@5 | #Params | FLOPs | Image Size | Crop_pct | Interpolation | Link |
|---|---|---|---|---|---|---|---|---|
| halonet26t | 79.10 | 94.31 | 12.5M | 3.2G | 256 | 0.95 | bicubic | google/baidu(ednv) |
| halonet50ts | 81.65 | 95.61 | 22.8M | 5.1G | 256 | 0.94 | bicubic | google/baidu(3j9e) |

*The results are evaluated on the ImageNet2012 validation set.
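The Image Size and Crop_pct columns together describe the evaluation preprocessing. The following is a minimal sketch, assuming the common convention (not confirmed by this README) that an image is first resized so its shorter side is `floor(image_size / crop_pct)` and then center-cropped to `image_size`. The function name `eval_resize_size` is hypothetical and is not part of this repository.

```python
import math


def eval_resize_size(image_size: int, crop_pct: float) -> int:
    """Shorter-side resize target used before the center crop (assumed convention)."""
    if not 0.0 < crop_pct <= 1.0:
        raise ValueError("crop_pct must be in (0, 1]")
    return int(math.floor(image_size / crop_pct))


# Image Size and Crop_pct values taken from the table above.
for name, size, pct in [("halonet26t", 256, 0.95), ("halonet50ts", 256, 0.94)]:
    print(name, eval_resize_size(size, pct))
```

Under this assumption, halonet26t images would be resized to 269 and halonet50ts images to 272 before being cropped to 256.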

We provide a few notebooks in aistudio to help you get started:

*(coming soon)*

- Python>=3.6

- yaml>=0.2.5

- PaddlePaddle>=2.1.0

- yacs>=0.1.8

The ImageNet2012 dataset is expected in the following folder structure:

│imagenet/

├──train/

│ ├── n01440764

│ │ ├── n01440764_10026.JPEG

│ │ ├── n01440764_10027.JPEG

│ │ ├── ......

│ ├── ......

├──val/

│ ├── n01440764

│ │ ├── ILSVRC2012_val_00000293.JPEG

│ │ ├── ILSVRC2012_val_00002138.JPEG

│ │ ├── ......

│ ├── ......
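Before launching a long run, it can help to confirm that a local copy matches the layout above. The following is a minimal sketch that only checks the structure shown here (a `train/` and a `val/` folder, each containing class folders with `.JPEG` files). The helper name `summarize_imagenet_layout` is hypothetical and is not part of this repository, and the data loader in this repository may have further requirements that the sketch does not cover.

```python
from pathlib import Path


def summarize_imagenet_layout(root):
    """Count class folders and .JPEG files under train/ and val/."""
    root = Path(root)
    summary = {}
    for split in ("train", "val"):
        split_dir = root / split
        if not split_dir.is_dir():
            raise FileNotFoundError(f"missing split folder: {split_dir}")
        # Each class is expected to be a sub-folder such as n01440764.
        class_dirs = sorted(p for p in split_dir.iterdir() if p.is_dir())
        num_images = sum(
            1
            for class_dir in class_dirs
            for f in class_dir.iterdir()
            if f.suffix.upper() == ".JPEG"
        )
        summary[split] = {"classes": len(class_dirs), "images": num_images}
    return summary


# Example call (the path is the one used in the commands of this README):
# print(summarize_imagenet_layout("/dataset/imagenet"))
```

For the full ImageNet2012 dataset, each split should report 1000 classes; a smaller number usually points to an incomplete extraction.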

To use the model with pretrained weights, download the .pdparams weight file and change the related file paths in the following Python scripts. The model config files are located in ./configs/.

For example, assuming the downloaded weight file is stored at ./halonet_50ts_256.pdparams, the halonet_50ts_256 model can be used in Python as follows:

import paddle

from config import get_config

from halonet import build_halonet

# config files in ./configs/

config = get_config('./configs/halonet_50ts_256.yaml')

# build model

model = build_halonet(config)

# load pretrained weights; paddle.load expects the full file path, including .pdparams

model_state_dict = paddle.load('./halonet_50ts_256.pdparams')

model.set_dict(model_state_dict)

To evaluate HaloNet model performance on ImageNet2012 with a single GPU, run the following script from the command line:

sh run_eval.sh

or

CUDA_VISIBLE_DEVICES=0 \

python main_single_gpu.py \

-cfg='./configs/halonet_50ts_256.yaml' \

-dataset='imagenet2012' \

-batch_size=16 \

-data_path='/dataset/imagenet' \

-eval \

-pretrained='./halonet_50ts_256'

Run evaluation using multiple GPUs:

sh run_eval_multi.sh

or

CUDA_VISIBLE_DEVICES=0,1,2,3 \

python main_multi_gpu.py \

-cfg='./configs/halonet_50ts_256.yaml' \

-dataset='imagenet2012' \

-batch_size=16 \

-data_path='/dataset/imagenet' \

-eval \

-pretrained='./halonet_50ts_256'

To train the HaloNet model on ImageNet2012 with a single GPU, run the following script from the command line:

sh run_train.sh

or

CUDA_VISIBLE_DEVICES=0 \

python main_single_gpu.py \

-cfg='./configs/halonet_50ts_256.yaml' \

-dataset='imagenet2012' \

-batch_size=32 \

-data_path='/dataset/imagenet'

Run training using multiple GPUs:

sh run_train_multi.sh

or

CUDA_VISIBLE_DEVICES=0,1,2,3 \

python main_multi_gpu.py \

-cfg='./configs/halonet_50ts_256.yaml' \

-dataset='imagenet2012' \

-batch_size=16 \

-data_path='/dataset/imagenet'

(coming soon)

@inproceedings{vaswani2021scaling,

title={Scaling local self-attention for parameter efficient visual backbones},

author={Vaswani, Ashish and Ramachandran, Prajit and Srinivas, Aravind and Parmar, Niki and Hechtman, Blake and Shlens, Jonathon},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={12894--12904},

year={2021}

}