- Author: Andreas ten Pas ([email protected])

- Version: 1.0.0

- Author's website: http://www.ccs.neu.edu/home/atp/

- License: BSD

This package detects 6-DOF grasp poses for a 2-finger grasp (e.g. a parallel jaw gripper) in 3D point clouds.

Grasp pose detection consists of three steps: sampling a large number of grasp candidates, classifying these candidates as viable grasps or not, and clustering viable grasps which are geometrically similar.
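The three steps above can be pictured with a toy sketch. This is not the package's implementation: the `Grasp` type, the random sampler, the caller-supplied `score_fn` (a stand-in for the neural network), and the greedy distance-based clustering are all hypothetical placeholders chosen only to show how the stages fit together.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Grasp:
    position: tuple          # (x, y, z) of the hand, hypothetical representation
    approach: tuple          # approach direction of the hand
    score: float = 0.0       # filled in by the classification step


def sample_candidates(points, n, rng):
    """Step 1: draw n candidates at randomly chosen cloud points (placeholder sampler)."""
    return [Grasp(position=rng.choice(points), approach=(0.0, 0.0, -1.0))
            for _ in range(n)]


def classify(candidates, score_fn, threshold=0.5):
    """Step 2: score each candidate and keep those above the threshold."""
    viable = []
    for g in candidates:
        g.score = score_fn(g)
        if g.score > threshold:
            viable.append(g)
    return viable


def cluster(grasps, radius):
    """Step 3: greedily group grasps lying within `radius` of a cluster's
    first member, then return the highest-scoring grasp of each cluster."""
    clusters = []
    for g in grasps:
        for c in clusters:
            if math.dist(g.position, c[0].position) <= radius:
                c.append(g)
                break
        else:
            clusters.append([g])
    return [max(c, key=lambda g: g.score) for c in clusters]


if __name__ == "__main__":
    cloud = [(0.0, 0.0, 0.0), (0.01, 0.0, 0.0), (1.0, 1.0, 0.0)]
    candidates = sample_candidates(cloud, 20, random.Random(0))
    viable = classify(candidates, score_fn=lambda g: 1.0 - g.position[0])
    print(cluster(viable, radius=0.05))
```

In this sketch the score function is a toy expression; in the package, that role is played by the trained Caffe network.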

The reference for this package is: High precision grasp pose detection in dense clutter.

- PCL 1.7 or later

- Eigen 3.0 or later

- Caffe

- ROS Indigo and Ubuntu 14.04 or ROS Kinetic and Ubuntu 16.04

The following instructions work for Ubuntu 14.04 or Ubuntu 16.04. Similar instructions should work for other Linux distributions that support ROS.

- Install Caffe by following its official installation instructions and using the CMake build. Note for Ubuntu 14.04: Caffe requires Boost 1.55, but the Debian package for ROS Indigo installs Boost 1.54 as a dependency. To avoid this conflict, check out an older Caffe commit that works with Boost 1.54 when you clone the repository:

      git clone https://github.com/BVLC/caffe.git && cd caffe
      git checkout 923e7e8b6337f610115ae28859408bc392d13136

- Install ROS. On Ubuntu 14.04, install ROS Indigo; on Ubuntu 16.04, install ROS Kinetic. Follow the official installation instructions for the respective release.

- Clone the grasp_pose_generator repository into some folder:

      cd <location_of_your_workspace>
      git clone https://github.com/atenpas/gpg.git

- Build and install the grasp_pose_generator:

      cd gpg
      mkdir build && cd build
      cmake ..
      make
      sudo make install

- Clone this repository:

      cd <location_of_your_workspace/src>
      git clone https://github.com/atenpas/gpd.git

- Build your catkin workspace:

      cd <location_of_your_workspace>
      catkin_make

Launch the grasp pose detection on an example point cloud:

    roslaunch gpd tutorial0.launch

Within the GUI that appears, press `r` to center the view and `q` to quit the GUI and load the next visualization. The output should look similar to the screenshot shown below.

Brief explanations of the parameters for use with PCD files are given in `launch/classify_candidates_file_15_channels.launch`. For use on a robot, see `launch/ur5_15_channels.launch`.

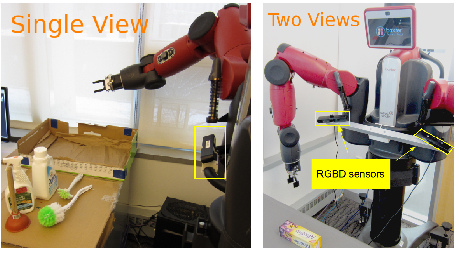

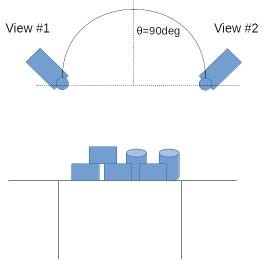

You can use this package with one or two depth sensors. The package comes with Caffe weight files for both options, located in `gpd/caffe/15channels`. For a single sensor, use `single_view_15_channels.caffemodel`; for two depth sensors, use `two_views_15_channels_[angle]`, where `[angle]` is the angle between the two sensor views, as illustrated in the accompanying picture. In the two-view setting, register the two point clouds into a single cloud before sending them to GPD.
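As an illustration of the registration step for two views, here is a minimal sketch that assumes the relative pose between the sensors is already known from calibration. The function names, the choice of a rotation about the z-axis, and the tuple-based point format are assumptions made for the example; they are not part of GPD, and in practice the transform might instead come from a calibration or registration tool.

```python
import math


def rotation_z(angle_deg):
    """Rotation matrix for a rotation of angle_deg degrees about the z-axis."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0],
            [s, c, 0.0],
            [0.0, 0.0, 1.0]]


def transform(points, rotation, translation):
    """Apply p' = R * p + t to every (x, y, z) point."""
    return [
        tuple(
            sum(rotation[i][j] * p[j] for j in range(3)) + translation[i]
            for i in range(3)
        )
        for p in points
    ]


def merge_views(cloud_a, cloud_b, rotation_ab, translation_ab):
    """Express cloud_b in the frame of cloud_a and concatenate both clouds."""
    return list(cloud_a) + transform(cloud_b, rotation_ab, translation_ab)


if __name__ == "__main__":
    view_a = [(0.0, 0.0, 0.0)]
    view_b = [(1.0, 0.0, 0.0)]
    # Hypothetical extrinsics: sensor B is rotated 90 degrees relative to A.
    merged = merge_views(view_a, view_b, rotation_z(90.0), (0.0, 0.0, 1.0))
    print(merged)
```

The merged list stands in for the single registered cloud that would then be passed on for grasp detection.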

To switch between one and two sensor views, change the parameter `trained_file` in the launch file `launch/caffe/ur5_15channels.launch`.
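If you prefer to script this change rather than edit the file by hand, the following sketch rewrites the parameter in a launch file's XML. It assumes the launch file stores the setting as a `<param name="trained_file" value="..."/>` element, which you should verify against the actual file; the helper name `set_trained_file` and the XML fragment in the demo are hypothetical.

```python
import xml.etree.ElementTree as ET


def set_trained_file(launch_xml, new_path):
    """Return launch_xml with every trained_file <param> pointing at new_path."""
    root = ET.fromstring(launch_xml)
    changed = 0
    for param in root.iter("param"):
        if param.get("name") == "trained_file":
            param.set("value", new_path)
            changed += 1
    if changed == 0:
        raise ValueError("no trained_file parameter found")
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical launch fragment, used only to exercise the helper.
    fragment = (
        '<launch><node name="detect" pkg="gpd" type="detect">'
        '<param name="trained_file" value="PLACEHOLDER"/>'
        "</node></launch>"
    )
    print(set_trained_file(
        fragment, "gpd/caffe/15channels/single_view_15_channels.caffemodel"))
```

Note that `ElementTree` drops XML comments when parsing, so for a heavily commented launch file a manual edit may be preferable.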

The package comes with weight files for two different input representations for the neural network that decides whether a grasp is viable: 3 or 15 channels. The default is 15 channels. The 3-channel representation runs faster, at the cost of some grasp quality. For more details, please see the references below.

If you like this package and use it in your own work, please cite our paper(s):

[1] Marcus Gualtieri, Andreas ten Pas, Kate Saenko, and Robert Platt. High precision grasp pose detection in dense clutter. IROS 2016. 598-605.

[2] Andreas ten Pas, Marcus Gualtieri, Kate Saenko, and Robert Platt. Grasp Pose Detection in Point Clouds. Conditionally accepted for IJRR.