MuseV: Infinite-length and High Fidelity Virtual Human Video Generation with Parallel Denoising

Zhiqiang Xia *,

Zhaokang Chen*,

Bin Wu†,

Chao Li,

Kwok-Wai Hung,

Chao Zhan,

Wenjiang Zhou

(*co-first author, †Corresponding Author)

Project (coming soon), technical report (coming soon).

We have held the world-simulator vision since March 2023, believing that diffusion models can simulate the world. MuseV was a milestone reached around July 2023. Amazed by the progress of Sora, we decided to open-source MuseV in the hope that it will benefit the community.

Our next step is to switch to the promising diffusion+transformer scheme. Please stay tuned.

We will soon release MuseTalk, a diffusion-based lip sync model, which can be combined with MuseV into a complete virtual human generation solution. Please stay tuned!

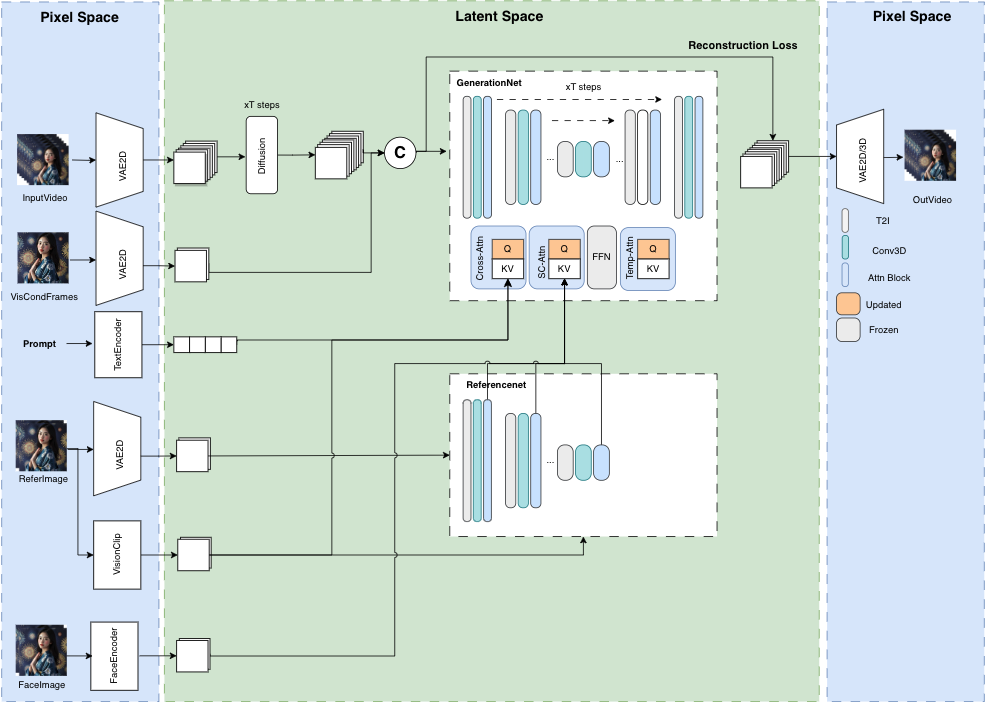

MuseV is a diffusion-based virtual human video generation framework, which

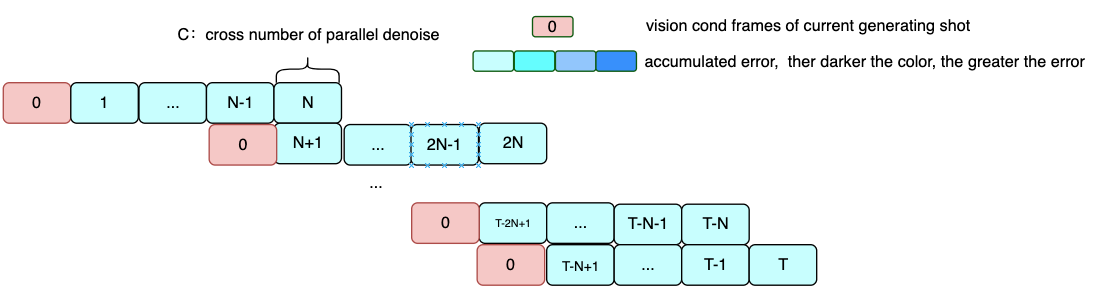

- supports infinite length generation using a novel Parallel Denoising scheme.

- provides a checkpoint for virtual human video generation, trained on a human dataset.

- supports Image2Video, Text2Image2Video, Video2Video.

- compatible with the Stable Diffusion ecosystem, including base_model, lora, controlnet, etc.

- supports multi reference image technology, including IPAdapter, ReferenceOnly, ReferenceNet, IPAdapterFaceID.

- training codes (coming very soon).

All frames are generated directly by the text2video model, without any post-processing.

The cases below can be found in configs/tasks/example.yaml.

- [03/22/2024] released the MuseV project and the trained models musev and muse_referencenet.

- technical report (coming soon).

- training codes.

- release a pretrained unet model trained with controlnet, referencenet, and IPAdapter, which performs better on pose2video.

- support diffusion transformer generation framework.

Prepare the Python environment and install the extra packages such as diffusers, controlnet_aux, and mmcm.

We recommend Docker as the primary way to prepare the Python environment.

- pull docker image

docker pull anchorxia/musev:latest

- run docker

docker run --gpus all -it --entrypoint /bin/bash anchorxia/musev:latest

The default conda env is musev.

Alternatively, create the conda environment from environment.yml:

conda env create --name musev --file ./environment.yml

pip install -r requirements.txt

install mmlab package

pip install --no-cache-dir -U openmim

mim install mmengine

mim install "mmcv>=2.0.1"

mim install "mmdet>=3.1.0"

mim install "mmpose>=1.1.0"

git clone --recursive https://github.com/TMElyralab/MuseV.git

prepare PYTHONPATH

current_dir=$(pwd)

export PYTHONPATH=${PYTHONPATH}:${current_dir}/MuseV

export PYTHONPATH=${PYTHONPATH}:${current_dir}/MuseV/MMCM

export PYTHONPATH=${PYTHONPATH}:${current_dir}/MuseV/diffusers/src

export PYTHONPATH=${PYTHONPATH}:${current_dir}/MuseV/controlnet_aux/src

cd MuseV

- MMCM: multimedia, cross-modal processing package.
- diffusers: modified diffusers package based on diffusers.
- controlnet_aux: modified package based on controlnet_aux.
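The same search paths can also be set from inside Python, which may be handy in a notebook. The following is a minimal sketch, assuming the repository was cloned with its submodules into a MuseV directory as in the clone command above; the helper names musev_sys_paths and add_to_sys_path are hypothetical and not part of MuseV.

```python
import os
import sys


def musev_sys_paths(repo_root):
    """Return the directories that the export lines above add to PYTHONPATH."""
    # Sub-paths mirror the export commands; repo_root is the cloned MuseV directory.
    sub_dirs = [
        "",
        "MMCM",
        os.path.join("diffusers", "src"),
        os.path.join("controlnet_aux", "src"),
    ]
    return [os.path.normpath(os.path.join(repo_root, d)) for d in sub_dirs]


def add_to_sys_path(paths):
    """Append each path to sys.path once, keeping the existing order."""
    for p in paths:
        if p not in sys.path:
            sys.path.append(p)


if __name__ == "__main__":
    add_to_sys_path(musev_sys_paths(os.path.join(os.getcwd(), "MuseV")))
    print(sys.path[-4:])
```

Appending (rather than prepending) matches the export lines, which place the MuseV directories after any existing PYTHONPATH entries.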

git clone https://huggingface.co/TMElyralab/MuseV ./checkpoints

- motion: text2video model.
  - musev/unet: only has and trains the unet motion module.
  - musev_referencenet: trains the unet module, referencenet, and IPAdapter.
    - unet: motion module, which has to_k, to_v in the Attention layer, refer to IPAdapter.
    - referencenet: similar to AnimateAnyone.
    - ip_adapter_image_proj.bin: image clip emb projection layer, refer to IPAdapter.
- t2i/sd1.5: text2image model; its parameters are frozen when training the motion module.
  - majicmixRealv6Fp16: example, can be replaced with another t2i base model. Download from majicmixRealv6Fp16.
- IP-Adapter/models: download from IPAdapter.
  - image_encoder: vision clip model.
  - ip-adapter_sd15.bin: original IPAdapter model checkpoint.
  - ip-adapter-faceid_sd15.bin: original IPAdapter model checkpoint.
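Before running inference it can help to confirm that the download is complete. The following is a small sketch of such a check, not a MuseV utility: the function name missing_checkpoints is hypothetical, and apart from IP-Adapter/models/image_encoder (which appears as a full path in the inference commands) the listed sub-paths are assumptions about how the names above nest on disk.

```python
from pathlib import Path


def missing_checkpoints(root, relative_paths):
    """Return the entries of relative_paths that do not exist under root."""
    root = Path(root)
    return [rel for rel in relative_paths if not (root / rel).exists()]


# Only the image_encoder path is spelled out in this README; the other
# entries are assumed locations and may need adjusting to the real layout.
EXPECTED = [
    "IP-Adapter/models/image_encoder",
    "motion/musev",                   # assumed location
    "motion/musev_referencenet",      # assumed location
    "t2i/sd1.5/majicmixRealv6Fp16",   # assumed location
]

if __name__ == "__main__":
    for rel in missing_checkpoints("./checkpoints", EXPECTED):
        print("missing:", rel)
```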

Skip this step when running the example task with the example inference command. Otherwise, set the model path and its abbreviation in the config so that the abbreviation can be used in the inference script.

- T2I SD: refer to musev/configs/model/T2I_all_model.py

- Motion Unet: refer to musev/configs/model/motion_model.py

- task: refer to musev/configs/tasks/example.yaml

python scripts/inference/text2video.py --sd_model_name majicmixRealv6Fp16 --unet_model_name musev_referencenet --referencenet_model_name musev_referencenet --ip_adapter_model_name musev_referencenet -test_data_path ./configs/tasks/example.yaml --output_dir ./output --n_batch 1 --target_datas yongen --vision_clip_extractor_class_name ImageClipVisionFeatureExtractor --vision_clip_model_path ./checkpoints/IP-Adapter/models/image_encoder --time_size 12 --fps 12

common parameters:

- test_data_path: path of the task file, with the yaml extension.
- target_datas: comma-separated names; a subtask is sampled if its name in test_data_path is in target_datas.
- sd_model_cfg_path: T2I sd models path, either a model config path or a model path.
- sd_model_name: sd model name, used to choose the full model path in sd_model_cfg_path. Multiple model names are separated by a comma, or use all.
- unet_model_cfg_path: motion unet model config path or model path.
- unet_model_name: unet model name, used to get the model path in unet_model_cfg_path and to init the unet class instance in musev/models/unet_loader.py. Multiple model names are separated by a comma, or use all. If unet_model_cfg_path is a model path, unet_name must be supported in musev/models/unet_loader.py.
- time_size: number of frames per diffusion denoise generation. default=12.
- n_batch: number of generation batches. Total_frames=n_batch*time_size+n_viscond, default=1.
- context_frames: number of context frames. If time_size > context_frames, the time_size window is split into several sub-windows for parallel denoising. default=12.
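To make the frame arithmetic concrete, here is a minimal sketch of how these parameters interact. It is an illustration under stated assumptions, not MuseV's implementation: n_viscond is read as the number of visual condition frames, the sub-windows are plain non-overlapping chunks (the real parallel denoising scheduler may overlap or order them differently), and all three function names are hypothetical.

```python
def total_frames(n_batch, time_size, n_viscond):
    """Total_frames = n_batch * time_size + n_viscond, as stated above."""
    return n_batch * time_size + n_viscond


def split_sub_windows(time_size, context_frames):
    """Split frame indices 0..time_size-1 into consecutive sub-windows.

    Assumption: non-overlapping chunks of at most context_frames frames.
    """
    if context_frames <= 0:
        raise ValueError("context_frames must be positive")
    return [
        list(range(start, min(start + context_frames, time_size)))
        for start in range(0, time_size, context_frames)
    ]


def select_tasks(task_names, target_datas):
    """Keep the tasks whose name is listed in the comma-separated target_datas."""
    wanted = {name.strip() for name in target_datas.split(",") if name.strip()}
    return [name for name in task_names if name in wanted]


if __name__ == "__main__":
    print(total_frames(n_batch=1, time_size=12, n_viscond=1))
    print([len(w) for w in split_sub_windows(time_size=24, context_frames=12)])
    print(select_tasks(["yongen", "bilibili_queencard"], "yongen"))
```

With the defaults time_size=12 and context_frames=12 the whole window is denoised as a single sub-window; splitting only happens once time_size exceeds context_frames.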

model parameters:

Supports referencenet, IPAdapter, IPAdapterFaceID, and Facein.

- referencenet_model_name: referencenet model name.

- ImageClipVisionFeatureExtractor: ImageEmbExtractor name; extracts the vision clip emb used in IPAdapter.

- vision_clip_model_path: ImageClipVisionFeatureExtractor model path.

- ip_adapter_model_name: from IPAdapter; it is the ImagePromptEmbProj, used with ImageEmbExtractor.

- ip_adapter_face_model_name: IPAdapterFaceID, from IPAdapter, used to keep the face id; face_image_path should be set.

Some parameters that affect the amplitude and effect of generation:

- video_guidance_scale: similar to text2image; controls the influence between cond and uncond. default=3.5.
- guidance_scale: the ratio between cond and uncond for the first frame image. default=3.5.
- use_condition_image: whether to use the given first frame for video generation.
- redraw_condition_image: whether to redraw the given first frame image.
- video_negative_prompt: abbreviation of the full negative_prompt in the config path. default=V2.
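The two guidance scales weight the conditional prediction against the unconditional one. The sketch below shows the usual classifier-free guidance combination, assuming MuseV follows the common formulation used in Stable Diffusion pipelines; it is not taken from MuseV's code, operates on plain Python lists instead of tensors, and the function name apply_guidance is hypothetical.

```python
def apply_guidance(uncond, cond, scale):
    """Classifier-free guidance: uncond + scale * (cond - uncond), element-wise."""
    if len(uncond) != len(cond):
        raise ValueError("uncond and cond must have the same length")
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


if __name__ == "__main__":
    uncond = [0.0, 1.0]  # toy noise prediction without the condition
    cond = [1.0, 1.0]    # toy noise prediction with the condition
    # Default scale from this README; larger values push further toward cond.
    print(apply_guidance(uncond, cond, 3.5))
```

Under this formulation a scale of 1 returns the conditional prediction unchanged and a scale of 0 returns the unconditional one, so values above 1 amplify the effect of the condition.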

python scripts/inference/video2video.py --sd_model_name majicmixRealv6Fp16 --unet_model_name musev_referencenet --referencenet_model_name musev_referencenet --ip_adapter_model_name musev_referencenet -test_data_path ./configs/tasks/example.yaml --vision_clip_extractor_class_name ImageClipVisionFeatureExtractor --vision_clip_model_path ./checkpoints/IP-Adapter/models/image_encoder --output_dir ./output --n_batch 1 --controlnet_name dwpose_body_hand --which2video "video_middle" --target_datas bilibili_queencard --fps 12 --time_size 12

important parameters

Most parameters are the same as for musev_text2video. The parameters specific to video2video are:

- video_path must be set in test_data. rgb video and controlnet_middle_video are currently supported.

- need_video2video: whether the rgb video influences the initial noise.
- controlnet_name: whether to use a controlnet condition, such as dwpose or depth.
- video_is_middle: whether video_path is an rgb video or a controlnet_middle_video. Can be set for every test_data in test_data_path.
- video_has_condition: whether condition_images is aligned with the first frame of video_path. If not, condition_images is generated first and then aligned by concatenation. Set in test_data.
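The inference commands in this README share most of their flags, so they can also be assembled programmatically, for example to loop over several tasks. The following is a minimal sketch; build_inference_command is a hypothetical helper, and the flags and values in the usage part are copied from the commands in this README.

```python
import shlex


def build_inference_command(script, options):
    """Build an argv list for one of the inference scripts.

    options maps flag spellings exactly as written in this README
    (for example "--fps" or "-test_data_path") to their values.
    """
    argv = ["python", script]
    for flag, value in options.items():
        argv.extend([flag, str(value)])
    return argv


if __name__ == "__main__":
    cmd = build_inference_command(
        "scripts/inference/text2video.py",
        {
            "--sd_model_name": "majicmixRealv6Fp16",
            "--unet_model_name": "musev",
            "-test_data_path": "./configs/tasks/example.yaml",
            "--output_dir": "./output",
            "--n_batch": 1,
            "--target_datas": "yongen",
            "--time_size": 12,
            "--fps": 12,
        },
    )
    # Print the shell form; pass cmd to subprocess.run(cmd, check=True) to execute it.
    print(shlex.join(cmd))
```

Keeping the command as a list avoids shell quoting issues when a value contains spaces or commas.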

python scripts/inference/text2video.py --sd_model_name majicmixRealv6Fp16 --unet_model_name musev -test_data_path ./configs/tasks/example.yaml --output_dir ./output --n_batch 1 --target_datas yongen --time_size 12 --fps 12

python scripts/inference/video2video.py --sd_model_name majicmixRealv6Fp16 --unet_model_name musev -test_data_path ./configs/tasks/example.yaml --output_dir ./output --n_batch 1 --controlnet_name dwpose_body_hand --which2video "video_middle" --target_datas bilibili_queencard --fps 12 --time_size 12

MuseV provides a gradio script that launches a GUI on the local machine for convenient video generation.

cd scripts/gradio

python app.py

MuseV builds on TuneAVideo and diffusers. Thanks for open-sourcing!

Paper coming soon.

@article{musev,

title={MuseV: Infinite-length and High Fidelity Virtual Human Video Generation with Parallel Denoising},

author={Xia, Zhiqiang and Chen, Zhaokang and Wu, Bin and Li, Chao and Hung, Kwok-Wai and Zhan, Chao and Zhou, Wenjiang},

journal={arxiv},

year={2024}

}

- code: The code of MuseV is released under the MIT License. There is no limitation on academic or commercial usage.
- model: The trained models are available for non-commercial research purposes only.
- other opensource model: Other open-source models used must comply with their own licenses, such as insightface, IP-Adapter, ft-mse-vae, etc.
- AIGC: This project strives to impact the domain of AI-driven video generation positively. Users are granted the freedom to create videos using this tool, but they are expected to comply with local laws and use it responsibly. The developers do not assume any responsibility for potential misuse by users.