Multi-dimensional arrays with broadcasting and lazy computing.

xtensor is a C++ library meant for numerical analysis with multi-dimensional array expressions.

xtensor provides

- an extensible expression system enabling lazy broadcasting.

- an API following the idioms of the C++ standard library.

- tools to manipulate array expressions and build upon xtensor.

Containers of xtensor are inspired by NumPy, the Python array programming library. Adaptors for existing data structures to be plugged into our expression system can easily be written.

In fact, xtensor can be used to process NumPy data structures inplace using Python's buffer protocol. Similarly, we can operate on Julia and R arrays. For more details on the NumPy, Julia and R bindings, check out the xtensor-python, xtensor-julia and xtensor-r projects respectively.

xtensor requires a modern C++ compiler supporting C++14. The following C++ compilers are supported:

- On Windows platforms, Visual C++ 2015 Update 2, or more recent

- On Unix platforms, gcc 4.9 or a recent version of Clang

We provide a package for the mamba (or conda) package manager:

mamba install -c conda-forge xtensor

xtensor is a header-only library.

You can directly install it from the sources:

cmake -D CMAKE_INSTALL_PREFIX=your_install_prefix

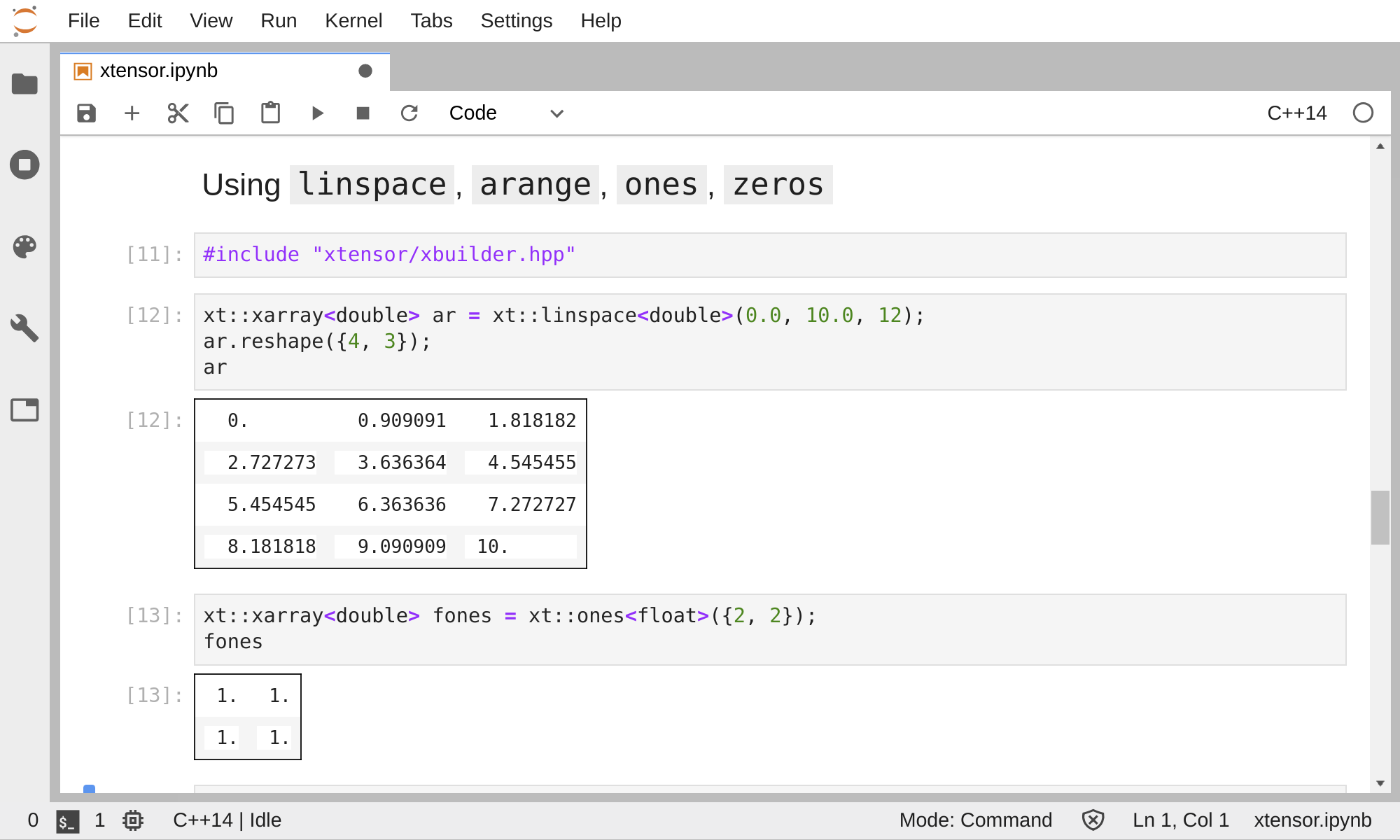

make installYou can play with xtensor interactively in a Jupyter notebook right now! Just click on the binder link below:

The C++ support in Jupyter is powered by the xeus-cling C++ kernel. Together with xeus-cling, xtensor enables a similar workflow to that of NumPy with the IPython Jupyter kernel.

For more information on using xtensor, check out the reference documentation

http://xtensor.readthedocs.io/

xtensor depends on the xtl library and has an optional dependency on the xsimd library:

| xtensor | xtl | xsimd (optional) |
|---|---|---|
| master | ^0.7.0 | ^7.4.8 |
| 0.23.8 | ^0.7.0 | ^7.4.8 |
| 0.23.7 | ^0.7.0 | ^7.4.8 |
| 0.23.6 | ^0.7.0 | ^7.4.8 |
| 0.23.5 | ^0.7.0 | ^7.4.8 |
| 0.23.4 | ^0.7.0 | ^7.4.8 |
| 0.23.3 | ^0.7.0 | ^7.4.8 |
| 0.23.2 | ^0.7.0 | ^7.4.8 |
| 0.23.1 | ^0.7.0 | ^7.4.8 |
| 0.23.0 | ^0.7.0 | ^7.4.8 |
| 0.22.0 | ^0.6.23 | ^7.4.8 |

The dependency on xsimd is required if you want to enable SIMD acceleration in xtensor. This can be done by defining the macro XTENSOR_USE_XSIMD before including any header of xtensor.

Initialize a 2-D array and compute the sum of one of its rows and a 1-D array.

#include <iostream>

#include "xtensor/xarray.hpp"

#include "xtensor/xio.hpp"

#include "xtensor/xview.hpp"

xt::xarray<double> arr1

{{1.0, 2.0, 3.0},

{2.0, 5.0, 7.0},

{2.0, 5.0, 7.0}};

xt::xarray<double> arr2

{5.0, 6.0, 7.0};

xt::xarray<double> res = xt::view(arr1, 1) + arr2;

std::cout << res;

Outputs:

{7, 11, 14}

Initialize a 1-D array and reshape it inplace.

#include <iostream>

#include "xtensor/xarray.hpp"

#include "xtensor/xio.hpp"

xt::xarray<int> arr

{1, 2, 3, 4, 5, 6, 7, 8, 9};

arr.reshape({3, 3});

std::cout << arr;

Outputs:

{{1, 2, 3},

{4, 5, 6},

{7, 8, 9}}

Index Access

#include <iostream>

#include "xtensor/xarray.hpp"

#include "xtensor/xio.hpp"

xt::xarray<double> arr1

{{1.0, 2.0, 3.0},

{2.0, 5.0, 7.0},

{2.0, 5.0, 7.0}};

std::cout << arr1(0, 0) << std::endl;

xt::xarray<int> arr2

{1, 2, 3, 4, 5, 6, 7, 8, 9};

std::cout << arr2(0);

Outputs:

1

1

If you are familiar with NumPy APIs, and you are interested in xtensor, you can check out the NumPy to xtensor cheat sheet provided in the documentation.

xtensor can operate on arrays of different shapes and dimensions in an element-wise fashion. Broadcasting rules of xtensor are similar to those of NumPy and libdynd.

In an operation involving two arrays of different dimensions, the array with fewer dimensions is broadcast across the leading dimensions of the other.

For example, if A has shape (2, 3), and B has shape (4, 2, 3), the result of a broadcasted operation with A and B has shape (4, 2, 3).

(2, 3) # A

(4, 2, 3) # B

---------

(4, 2, 3) # Result

The same rule holds for scalars, which are handled as 0-D expressions. If A is a scalar, the equation becomes:

() # A

(4, 2, 3) # B

---------

(4, 2, 3) # Result

If the matched-up dimensions of two input arrays are different and one of them has size 1, it is broadcast to match the size of the other. Let's say B has the shape (4, 2, 1) in the previous example, so the broadcasting happens as follows:

(2, 3) # A

(4, 2, 1) # B

---------

(4, 2, 3) # Result

With xtensor, if x, y and z are arrays of broadcastable shapes, the return type of an expression such as x + y * sin(z) is not an array. It is an xexpression object offering the same interface as an N-dimensional array, which does not hold the result. Values are only computed upon access or when the expression is assigned to an xarray object. This makes it possible to operate symbolically on very large arrays and only compute the result for the indices of interest.

We provide utilities to vectorize any scalar function (taking multiple scalar arguments) into a function that will operate on xexpressions, applying the lazy broadcasting rules which we just described. These functions are called xfunctions. They are xtensor's counterpart to NumPy's universal functions.

In xtensor, arithmetic operations (+, -, *, /) and all special functions are xfunctions.

All xexpressions offer two sets of functions to retrieve iterator pairs (and their const counterpart).

- begin() and end() provide instances of xiterators which can be used to iterate over all the elements of the expression. The order in which elements are listed is row-major in that the index of last dimension is incremented first.

- begin(shape) and end(shape) are similar but take a broadcasting shape as an argument. Elements are iterated upon in a row-major way, but certain dimensions are repeated to match the provided shape as per the rules described above. For an expression e, e.begin(e.shape()) and e.begin() are equivalent.

Two container classes implementing multi-dimensional arrays are provided: xarray and xtensor.

- xarray can be reshaped dynamically to any number of dimensions. It is the container that is the most similar to NumPy arrays.

- xtensor has a dimension set at compilation time, which enables many optimizations. For example, shapes and strides of xtensor instances are allocated on the stack instead of the heap.

xarray and xtensor containers are both xexpressions and can be involved and mixed in universal functions, assigned to each other, etc.

Besides, two access operators are provided:

- The variadic template operator() which can take multiple integral arguments or none.

- And the operator[] which takes a single multi-index argument, which can be of size determined at runtime. operator[] also supports access with braced initializers.

Xtensor operations make use of SIMD acceleration depending on what instruction sets are available on the platform at hand (SSE, AVX, AVX512, Neon).

The xsimd project underlies the detection of the available instruction sets, and provides generic high-level wrappers and memory allocators for client libraries such as xtensor.

Xtensor operations are continuously benchmarked, and are significantly improved at each new version. Current performance on statically dimensioned tensors matches that of the Eigen library. Dynamically dimensioned tensors, for which the shape is heap allocated, come at a small additional cost.

More generally, the library implements a promote_shape mechanism at build time to determine the optimal sequence type to hold the shape of an expression. The shape type of a broadcasting expression whose members have a dimensionality determined at compile time will have a stack allocated sequence type. If at least one node of a broadcasting expression has a dynamic dimension (for example an xarray), it bubbles up to the entire broadcasting expression which will have a heap allocated shape. The same holds for views, broadcast expressions, etc.

Therefore, when building an application with xtensor, we recommend using statically-dimensioned containers whenever possible to improve the overall performance of the application.

The xtensor-python project provides the implementation of two xtensor containers, pyarray and pytensor, which effectively wrap NumPy arrays, allowing inplace modification, including reshapes.

Utilities to automatically generate NumPy-style universal functions, exposed to Python from scalar functions, are also provided.

The xtensor-julia project provides the implementation of two xtensor containers, jlarray and jltensor, which effectively wrap Julia arrays, allowing inplace modification, including reshapes.

As in the Python case, utilities to generate NumPy-style universal functions are provided.

The xtensor-r project provides the implementation of two xtensor containers, rarray and rtensor, which effectively wrap R arrays, allowing inplace modification, including reshapes.

As with the Python and Julia bindings, utilities to generate NumPy-style universal functions are provided.

The xtensor-blas project provides bindings to BLAS libraries, enabling linear-algebra operations on xtensor expressions.

The xtensor-io project enables the loading of a variety of file formats into xtensor expressions, such as image files, sound files, HDF5 files, as well as NumPy npy and npz files.

Building the tests requires the GTest testing framework and cmake.

gtest and cmake are available as packages for most Linux distributions. Besides, they can also be installed with the conda package manager (even on Windows):

conda install -c conda-forge gtest cmake

Once gtest and cmake are installed, you can build and run the tests:

mkdir build

cd build

cmake -DBUILD_TESTS=ON ../

make xtest

You can also use CMake to download the source of gtest, build it, and use the generated libraries:

mkdir build

cd build

cmake -DBUILD_TESTS=ON -DDOWNLOAD_GTEST=ON ../

make xtest

xtensor's documentation is built with three tools: doxygen, sphinx and breathe.

While doxygen must be installed separately, you can install breathe by typing

pip install breathe sphinx_rtd_theme

Breathe can also be installed with conda

conda install -c conda-forge breathe

Finally, go to docs subdirectory and build the documentation with the following command:

make html

We use a shared copyright model that enables all contributors to maintain the copyright on their contributions.

This software is licensed under the BSD-3-Clause license. See the LICENSE file for details.