
PaddleSpeech is an open-source toolkit on the PaddlePaddle platform for a variety of critical tasks in speech and audio, with state-of-the-art and influential models.

PaddleSpeech won the NAACL2022 Best Demo Award. Please check out our paper on arXiv.

| Input Audio | Recognition Result |
|---|---|
|  | I knocked at the door on the ancient side of the building. |
|  | 我认为跑步最重要的就是给我带来了身体健康。 |

For more synthesized audios, please refer to PaddleSpeech Text-to-Speech samples.

| Input Text | Output Text |
|---|---|
| 今天的天气真不错啊你下午有空吗我想约你一起去吃饭 | 今天的天气真不错啊!你下午有空吗?我想约你一起去吃饭。 |

Through an easy-to-use, efficient, flexible and scalable implementation, our vision is to empower both industrial applications and academic research, covering training, inference and testing modules, and the deployment process. More specifically, this toolkit features:

- 📦 Ease of Use: low barriers to installation; CLI, Server, and Streaming Server are available to quick-start your journey.

- 🏆 Align to the State-of-the-Art: we provide high-speed and ultra-lightweight models, and also cutting-edge technology.

- 🏆 Streaming ASR and TTS System: we provide production-ready streaming ASR and streaming TTS systems.

- 💯 Rule-based Chinese frontend: our frontend contains Text Normalization and Grapheme-to-Phoneme (G2P, including Polyphone and Tone Sandhi). Moreover, we use self-defined linguistic rules to adapt to Chinese context.

- 📦 Varieties of Functions that Vitalize both Industry and Academia:

- 🛎️ Implementation of critical audio tasks: this toolkit contains audio functions like Automatic Speech Recognition, Text-to-Speech Synthesis, Speaker Verification, Keyword Spotting, Audio Classification, and Speech Translation, etc.

- 🔬 Integration of mainstream models and datasets: the toolkit implements modules that participate in the whole pipeline of the speech tasks, and uses mainstream datasets like LibriSpeech, LJSpeech, AIShell, CSMSC, etc. See also model list for more details.

- 🧩 Cascaded models application: as an extension of the typical traditional audio tasks, we combine the workflows of the aforementioned tasks with other fields like Natural Language Processing (NLP) and Computer Vision (CV).

- 🔥 2022.11.07: U2/U2++ C++ High Performance Streaming ASR Deployment.

- 👑 2022.11.01: Add Adversarial Loss for Chinese English mixed TTS.

- 🔥 2022.10.26: Add Prosody Prediction for TTS.

- 🎉 2022.10.21: Add SSML for TTS Chinese Text Frontend.

- 👑 2022.10.11: Add Wav2vec2ASR, wav2vec2.0 fine-tuning for ASR on LibriSpeech.

- 🔥 2022.09.26: Add Voice Cloning, TTS finetune, and ERNIE-SAT in PaddleSpeech Web Demo.

- ⚡ 2022.09.09: Add AISHELL-3 Voice Cloning example with ECAPA-TDNN speaker encoder.

- ⚡ 2022.08.25: Release TTS finetune example.

- 🔥 2022.08.22: Add ERNIE-SAT models: ERNIE-SAT-vctk, ERNIE-SAT-aishell3, ERNIE-SAT-zh_en.

- 🔥 2022.08.15: Add g2pW into TTS Chinese Text Frontend.

- 🔥 2022.08.09: Release Chinese English mixed TTS.

- ⚡ 2022.08.03: Add ONNXRuntime infer for TTS CLI.

- 🎉 2022.07.18: Release VITS: VITS-csmsc, VITS-aishell3, VITS-VC.

- 🎉 2022.06.22: All TTS models support ONNX format.

- 🍀 2022.06.17: Add PaddleSpeech Web Demo.

- 👑 2022.05.13: Release PP-ASR, PP-TTS, PP-VPR.

- 👏🏻 2022.05.06: PaddleSpeech Streaming Server is available for Streaming ASR with Punctuation Restoration and Token Timestamp and Text-to-Speech.

- 👏🏻 2022.05.06: PaddleSpeech Server is available for Audio Classification, Automatic Speech Recognition and Text-to-Speech, Speaker Verification and Punctuation Restoration.

- 👏🏻 2022.03.28: PaddleSpeech CLI is available for Speaker Verification.

- 🤗 2021.12.14: ASR and TTS Demos on Hugging Face Spaces are available!

- 👏🏻 2021.12.10: PaddleSpeech CLI is available for Audio Classification, Automatic Speech Recognition, Speech Translation (English to Chinese) and Text-to-Speech.

- Scan the QR code below with WeChat to join the official technical exchange group and get the bonus (more than 20 GB of learning materials, such as papers, code and videos) and the live link to the lessons. We look forward to your participation.

We strongly recommend installing PaddleSpeech on Linux with python>=3.7 and paddlepaddle>=2.4rc.

- gcc >= 4.8.5

- paddlepaddle >= 2.4rc

- python >= 3.7

- OS support: Linux (recommended), Windows, Mac OSX
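As a rough sketch of how the list above could be checked before installing, the snippet below reports the operating system, whether the interpreter is at least Python 3.7, and whether the `paddle` module (the import name of paddlepaddle) can be found. The function names are hypothetical helpers, not part of PaddleSpeech, and the sketch does not check the gcc or paddlepaddle versions.

```python
import importlib.util
import platform
import sys

MIN_PYTHON = (3, 7)  # minimum version taken from the requirement list


def python_ok(version_info=sys.version_info, minimum=MIN_PYTHON):
    """Return True when the interpreter meets the minimum major.minor version."""
    return tuple(version_info[:2]) >= minimum


def is_installed(module_name):
    """Return True when a top-level module can be located without importing it."""
    return importlib.util.find_spec(module_name) is not None


def environment_report():
    """Collect the facts relevant to the requirement list into a dict."""
    return {
        "os": platform.system(),  # e.g. "Linux", "Windows" or "Darwin"
        "python_ok": python_ok(),
        "paddle_installed": is_installed("paddle"),
    }


if __name__ == "__main__":
    for key, value in environment_report().items():
        print(f"{key}: {value}")
```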

PaddleSpeech depends on paddlepaddle. For installation, please refer to the official paddlepaddle website and choose the build that matches your machine. Here is an example for the CPU version.

pip install paddlepaddle -i https://mirror.baidu.com/pypi/simple

You can also specify the version of paddlepaddle or install the develop version.

# install 2.3.1 version. Note, 2.3.1 is just an example, please follow the minimum dependency of paddlepaddle for your selection

pip install paddlepaddle==2.3.1 -i https://mirror.baidu.com/pypi/simple

# install develop version

pip install paddlepaddle==0.0.0 -f https://www.paddlepaddle.org.cn/whl/linux/cpu-mkl/develop.html

There are two quick installation methods for PaddleSpeech: pip installation and source code compilation (recommended).

pip installation:

pip install pytest-runner

pip install paddlespeech

Source code compilation:

git clone https://github.com/PaddlePaddle/PaddleSpeech.git

cd PaddleSpeech

pip install pytest-runner

pip install .

For more installation problems, such as conda environment, librosa dependencies, gcc problems, and kaldi installation, refer to this installation document. If you encounter problems during installation, you can leave a message on #2150 and look for related problems there.

Developers can try our models with the PaddleSpeech command line or Python API. Change --input to test your own audio or text. Audio in 16k WAV format is supported.
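Since the quick start expects 16k WAV audio, it can help to inspect a file before passing it to `--input`. The following is a minimal sketch using only the standard-library `wave` module; it assumes "16k" means a 16 000 Hz sample rate and that the file is uncompressed PCM WAV. `wav_info` and `is_16k_wav` are hypothetical helper names, not PaddleSpeech functions.

```python
import wave

EXPECTED_RATE = 16000  # assumption: "16k" is read as 16 000 Hz


def wav_info(path):
    """Return (sample_rate, channels, duration_seconds) for a PCM WAV file."""
    with wave.open(path, "rb") as wav:
        rate = wav.getframerate()
        channels = wav.getnchannels()
        duration = wav.getnframes() / float(rate)
    return rate, channels, duration


def is_16k_wav(path):
    """Return True when the file is readable as WAV and sampled at 16 kHz."""
    try:
        rate, _, _ = wav_info(path)
    except (wave.Error, EOFError, OSError):
        # Not a readable PCM WAV file (or the file does not exist).
        return False
    return rate == EXPECTED_RATE
```

For example, `is_16k_wav("zh.wav")` could be called on the downloaded test sample before running the recognition command.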

You can also quickly experience it in AI Studio 👉🏻 PaddleSpeech API Demo

Test audio sample download

wget -c https://paddlespeech.bj.bcebos.com/PaddleAudio/zh.wav

wget -c https://paddlespeech.bj.bcebos.com/PaddleAudio/en.wav

Open Source Speech Recognition

command line experience

paddlespeech asr --lang zh --input zh.wav

Python API experience

>>> from paddlespeech.cli.asr.infer import ASRExecutor

>>> asr = ASRExecutor()

>>> result = asr(audio_file="zh.wav")

>>> print(result)

我认为跑步最重要的就是给我带来了身体健康

Open Source Speech Synthesis

Output 24k sample rate wav format audio

command line experience

paddlespeech tts --input "你好,欢迎使用百度飞桨深度学习框架!" --output output.wav

Python API experience

>>> from paddlespeech.cli.tts.infer import TTSExecutor

>>> tts = TTSExecutor()

>>> tts(text="今天天气十分不错。", output="output.wav")

- You can try it in the Hugging Face Spaces TTS Demo.

An open-domain sound classification tool

Sound classification model based on the 527 categories of the AudioSet dataset

command line experience

paddlespeech cls --input zh.wav

Python API experience

>>> from paddlespeech.cli.cls.infer import CLSExecutor

>>> cls = CLSExecutor()

>>> result = cls(audio_file="zh.wav")

>>> print(result)

Speech 0.9027186632156372

Industrial-grade voiceprint extraction tool

command line experience

paddlespeech vector --task spk --input zh.wav

Python API experience

>>> from paddlespeech.cli.vector import VectorExecutor

>>> vec = VectorExecutor()

>>> result = vec(audio_file="zh.wav")

>>> print(result) # 187-dimensional vector

[ -0.19083306 9.474295 -14.122263 -2.0916545 0.04848729

4.9295826 1.4780062 0.3733844 10.695862 3.2697146

-4.48199 -0.6617882 -9.170393 -11.1568775 -1.2358263 ...]

Quick recovery of text punctuation, works with ASR models

command line experience

paddlespeech text --task punc --input 今天的天气真不错啊你下午有空吗我想约你一起去吃饭

Python API experience

>>> from paddlespeech.cli.text.infer import TextExecutor

>>> text_punc = TextExecutor()

>>> result = text_punc(text="今天的天气真不错啊你下午有空吗我想约你一起去吃饭")

>>> print(result)

今天的天气真不错啊!你下午有空吗?我想约你一起去吃饭。

End-to-end English to Chinese Speech Translation Tool

Uses pre-compiled Kaldi tools; only supported on Ubuntu

command line experience

paddlespeech st --input en.wav

Python API experience

>>> from paddlespeech.cli.st.infer import STExecutor

>>> st = STExecutor()

>>> result = st(audio_file="en.wav")

>>> print(result)

['我 在 这栋 建筑 的 古老 门上 敲门 。']

Developers can try our speech server with the PaddleSpeech Server command line.

You can try it quickly in AI Studio (recommended): SpeechServer

Start server

paddlespeech_server start --config_file ./demos/speech_server/conf/application.yaml

Access Speech Recognition Services

paddlespeech_client asr --server_ip 127.0.0.1 --port 8090 --input input_16k.wav

Access Text to Speech Services

paddlespeech_client tts --server_ip 127.0.0.1 --port 8090 --input "您好,欢迎使用百度飞桨语音合成服务。" --output output.wav

Access Audio Classification Services

paddlespeech_client cls --server_ip 127.0.0.1 --port 8090 --input input.wav

For more information about server command lines, please see: speech server demos
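When the client commands above are driven from a script, building the argument list in one place avoids quoting mistakes. The sketch below assembles a `paddlespeech_client` call using only the flags that appear in the commands above (`--server_ip`, `--port`, `--input`, `--output`). `client_command` is a hypothetical helper; it only constructs the command and does not contact a server.

```python
import shlex


def client_command(task, input_value, server_ip="127.0.0.1", port=8090, output=None):
    """Build the argument list for a `paddlespeech_client` call.

    `task` is the subcommand shown above, e.g. "asr", "tts" or "cls".
    """
    args = [
        "paddlespeech_client", task,
        "--server_ip", server_ip,
        "--port", str(port),
        "--input", input_value,
    ]
    if output is not None:
        # Only the tts example above passes --output.
        args += ["--output", output]
    return args


if __name__ == "__main__":
    cmd = client_command("asr", "input_16k.wav")
    # Print a shell-safe rendering of the command.
    print(" ".join(shlex.quote(part) for part in cmd))
    # To actually run it (requires a running server):
    # import subprocess; subprocess.run(cmd, check=True)
```

Passing the list form to `subprocess.run` keeps text inputs with spaces or punctuation intact without manual quoting.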

Developers can try the streaming ASR and streaming TTS servers.

Start Streaming Speech Recognition Server

paddlespeech_server start --config_file ./demos/streaming_asr_server/conf/application.yaml

Access Streaming Speech Recognition Services

paddlespeech_client asr_online --server_ip 127.0.0.1 --port 8090 --input input_16k.wav

Start Streaming Text to Speech Server

paddlespeech_server start --config_file ./demos/streaming_tts_server/conf/tts_online_application.yaml

Access Streaming Text to Speech Services

paddlespeech_client tts_online --server_ip 127.0.0.1 --port 8092 --protocol http --input "您好,欢迎使用百度飞桨语音合成服务。" --output output.wav

For more information please see: streaming asr and streaming tts
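The client commands above assume a server is already listening on the given port (8090 for streaming ASR, 8092 for streaming TTS in these examples). A small, generic TCP check like the sketch below can confirm that before the client is invoked. It uses only the standard library, says nothing about whether the service behind the port is healthy, and `port_is_open` is a hypothetical helper name.

```python
import socket


def port_is_open(host, port, timeout=1.0):
    """Return True when a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts and unreachable hosts.
        return False


if __name__ == "__main__":
    for name, port in (("streaming ASR", 8090), ("streaming TTS", 8092)):
        state = "listening" if port_is_open("127.0.0.1", port) else "not reachable"
        print(f"{name} server on port {port}: {state}")
```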

PaddleSpeech supports a series of the most popular models. They are summarized in released models and come with available pretrained models.

Speech-to-Text contains Acoustic Model, Language Model, and Speech Translation, with the following details:

| Speech-to-Text Module Type | Dataset | Model Type | Example |
|---|---|---|---|
| Speech Recognition | Aishell | DeepSpeech2 RNN + Conv based Models | deepspeech2-aishell |
| Speech Recognition | Aishell | Transformer based Attention Models | u2.transformer.conformer-aishell |
| Speech Recognition | Librispeech | Transformer based Attention Models | deepspeech2-librispeech / transformer.conformer.u2-librispeech / transformer.conformer.u2-kaldi-librispeech |
| Speech Recognition | TIMIT | Unified Streaming & Non-streaming Two-pass | u2-timit |
| Alignment | THCHS30 | MFA | mfa-thchs30 |
| Language Model |  | Ngram Language Model | kenlm |
| Speech Translation (English to Chinese) | TED En-Zh | Transformer + ASR MTL | transformer-ted |
| Speech Translation (English to Chinese) | TED En-Zh | FAT + Transformer + ASR MTL | fat-st-ted |

Text-to-Speech in PaddleSpeech mainly contains three modules: Text Frontend, Acoustic Model and Vocoder. Acoustic Model and Vocoder models are listed as follows:

| Text-to-Speech Module Type | Model Type | Dataset | Example |
|---|---|---|---|
| Text Frontend |  |  | tn / g2p |
| Acoustic Model | Tacotron2 | LJSpeech / CSMSC | tacotron2-ljspeech / tacotron2-csmsc |
| Acoustic Model | Transformer TTS | LJSpeech | transformer-ljspeech |
| Acoustic Model | SpeedySpeech | CSMSC | speedyspeech-csmsc |
| Acoustic Model | FastSpeech2 | LJSpeech / VCTK / CSMSC / AISHELL-3 / ZH_EN / finetune | fastspeech2-ljspeech / fastspeech2-vctk / fastspeech2-csmsc / fastspeech2-aishell3 / fastspeech2-zh_en / fastspeech2-finetune |
| Acoustic Model | ERNIE-SAT | VCTK / AISHELL-3 / ZH_EN | ERNIE-SAT-vctk / ERNIE-SAT-aishell3 / ERNIE-SAT-zh_en |
| Vocoder | WaveFlow | LJSpeech | waveflow-ljspeech |
| Vocoder | Parallel WaveGAN | LJSpeech / VCTK / CSMSC / AISHELL-3 | PWGAN-ljspeech / PWGAN-vctk / PWGAN-csmsc / PWGAN-aishell3 |
| Vocoder | Multi Band MelGAN | CSMSC | Multi Band MelGAN-csmsc |
| Vocoder | Style MelGAN | CSMSC | Style MelGAN-csmsc |
| Vocoder | HiFiGAN | LJSpeech / VCTK / CSMSC / AISHELL-3 | HiFiGAN-ljspeech / HiFiGAN-vctk / HiFiGAN-csmsc / HiFiGAN-aishell3 |
| Vocoder | WaveRNN | CSMSC | WaveRNN-csmsc |
| Voice Cloning | GE2E | Librispeech, etc. | GE2E |
| Voice Cloning | SV2TTS (GE2E + Tacotron2) | AISHELL-3 | VC0 |
| Voice Cloning | SV2TTS (GE2E + FastSpeech2) | AISHELL-3 | VC1 |
| Voice Cloning | SV2TTS (ECAPA-TDNN + FastSpeech2) | AISHELL-3 | VC2 |
| Voice Cloning | GE2E + VITS | AISHELL-3 | VITS-VC |
| End-to-End | VITS | CSMSC / AISHELL-3 | VITS-csmsc / VITS-aishell3 |

Audio Classification

| Task | Dataset | Model Type | Example |
|---|---|---|---|
| Audio Classification | ESC-50 | PANN | pann-esc50 |

Keyword Spotting

| Task | Dataset | Model Type | Example |
|---|---|---|---|
| Keyword Spotting | hey-snips | MDTC | mdtc-hey-snips |

Speaker Verification

| Task | Dataset | Model Type | Example |
|---|---|---|---|
| Speaker Verification | VoxCeleb1/2 | ECAPA-TDNN | ecapa-tdnn-voxceleb12 |
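Speaker embeddings such as the vector printed by `paddlespeech vector --task spk` are typically compared with a similarity score. The sketch below uses cosine similarity, which is a common choice for this kind of comparison; it is an illustration and not necessarily the scoring used in the PaddleSpeech recipe. The `threshold` value is a hypothetical placeholder that would need tuning on real data.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def same_speaker(a, b, threshold=0.5):
    """Decide whether two embeddings match; threshold is a hypothetical value."""
    return cosine_similarity(a, b) >= threshold
```

With two embeddings obtained from the `VectorExecutor` quick-start example, `same_speaker(list(emb_a), list(emb_b))` would give a yes/no decision under the chosen threshold.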

Speaker Diarization

| Task | Dataset | Model Type | Example |
|---|---|---|---|
| Speaker Diarization | AMI | ECAPA-TDNN + AHC / SC | ecapa-tdnn-ami |

Punctuation Restoration

| Task | Dataset | Model Type | Example |
|---|---|---|---|
| Punctuation Restoration | IWSLT2012_zh | Ernie Linear | iwslt2012-punc0 |
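The quick start notes that punctuation restoration works with ASR models. One way to chain the two steps is sketched below: the function takes any two callables that follow the keyword signatures shown in the quick-start examples (`audio_file=` for recognition, `text=` for punctuation). `transcribe_with_punctuation` is a hypothetical helper, and the stand-in callables exist only so the sketch runs without PaddleSpeech installed.

```python
def transcribe_with_punctuation(audio_file, recognize, punctuate):
    """Run recognition, then punctuation restoration, on one audio file.

    recognize(audio_file=...) and punctuate(text=...) are expected to
    return strings, as in the quick-start examples.
    """
    raw_text = recognize(audio_file=audio_file)
    return punctuate(text=raw_text)


if __name__ == "__main__":
    # Stand-in callables; replace with real executors when available.
    def fake_asr(audio_file):
        return "今天的天气真不错啊你下午有空吗"

    def fake_punc(text):
        return text + "。"

    print(transcribe_with_punctuation("zh.wav", fake_asr, fake_punc))
```

With PaddleSpeech installed, the `ASRExecutor()` and `TextExecutor()` instances from the quick start could be passed as `recognize` and `punctuate`.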

Normally, Speech SoTA, Audio SoTA and Music SoTA give you an overview of the hot academic topics in the related area. To focus on the tasks in PaddleSpeech, you will find the following guidelines are helpful to grasp the core ideas.

- Installation

- Quick Start

- Some Demos

- Tutorials

- Released Models

- Community

- Welcome to contribute

- License

The Text-to-Speech module was originally called Parakeet and has now been merged into this repository. If you are interested in academic research about this task, please see TTS research overview. Also, this document is a good guideline for the pipeline components.

- PaddleBoBo: Use PaddleSpeech TTS to generate virtual human voice.

-

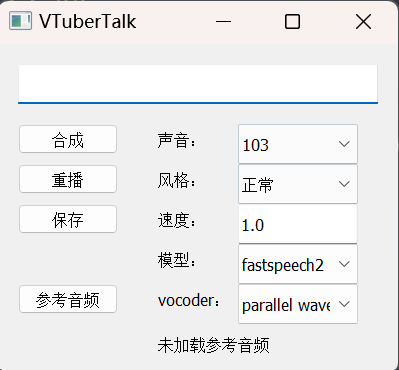

VTuberTalk: Use PaddleSpeech TTS and ASR to clone voice from videos.

To cite PaddleSpeech for research, please use the following format.

@InProceedings{pmlr-v162-bai22d,

title = {{A}$^3${T}: Alignment-Aware Acoustic and Text Pretraining for Speech Synthesis and Editing},

author = {Bai, He and Zheng, Renjie and Chen, Junkun and Ma, Mingbo and Li, Xintong and Huang, Liang},

booktitle = {Proceedings of the 39th International Conference on Machine Learning},

pages = {1399--1411},

year = {2022},

volume = {162},

series = {Proceedings of Machine Learning Research},

month = {17--23 Jul},

publisher = {PMLR},

pdf = {https://proceedings.mlr.press/v162/bai22d/bai22d.pdf},

url = {https://proceedings.mlr.press/v162/bai22d.html},

}

@inproceedings{zhang2022paddlespeech,

title = {PaddleSpeech: An Easy-to-Use All-in-One Speech Toolkit},

author = {Zhang, Hui and Yuan, Tian and Chen, Junkun and Li, Xintong and Zheng, Renjie and Huang, Yuxin and Chen, Xiaojie and Gong, Enlei and Chen, Zeyu and Hu, Xiaoguang and Yu, Dianhai and Ma, Yanjun and Huang, Liang},

booktitle = {Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies: Demonstrations},

year = {2022},

publisher = {Association for Computational Linguistics},

}

@inproceedings{zheng2021fused,

title={Fused acoustic and text encoding for multimodal bilingual pretraining and speech translation},

author={Zheng, Renjie and Chen, Junkun and Ma, Mingbo and Huang, Liang},

booktitle={International Conference on Machine Learning},

pages={12736--12746},

year={2021},

organization={PMLR}

}

You are warmly welcome to submit questions in discussions and bug reports in issues! We would also highly appreciate it if you are willing to contribute to this project!

- Many thanks to HighCWu for adding VITS-aishell3 and VITS-VC examples.

- Many thanks to david-95 for fixing the multi-punctuation bug, contributing multiple programs and data, and adding SSML for TTS Chinese Text Frontend.

- Many thanks to BarryKCL for improving TTS Chinese Frontend based on G2PW.

- Many thanks to yeyupiaoling/PPASR/PaddlePaddle-DeepSpeech/VoiceprintRecognition-PaddlePaddle/AudioClassification-PaddlePaddle for years of attention, constructive advice and great help.

- Many thanks to mymagicpower for the Java implementation of ASR upon short and long audio files.

- Many thanks to JiehangXie/PaddleBoBo for developing Virtual Uploader(VUP)/Virtual YouTuber(VTuber) with PaddleSpeech TTS function.

- Many thanks to 745165806/PaddleSpeechTask for contributing Punctuation Restoration model.

- Many thanks to kslz for supplementary Chinese documents.

- Many thanks to awmmmm for contributing fastspeech2 aishell3 conformer pretrained model.

- Many thanks to phecda-xu/PaddleDubbing for developing a dubbing tool with GUI based on PaddleSpeech TTS model.

- Many thanks to jerryuhoo/VTuberTalk for developing a GUI tool based on PaddleSpeech TTS and code for making datasets from videos based on PaddleSpeech ASR.

- Many thanks to vpegasus/xuesebot for developing a rasa chatbot, which is able to speak and listen thanks to PaddleSpeech.

- Many thanks to chenkui164/FastASR for the C++ inference implementation of PaddleSpeech ASR.

Besides, PaddleSpeech depends on a lot of open source repositories. See references for more information.

PaddleSpeech is provided under the Apache-2.0 License.