Official implementation of Hamburger, Is Attention Better Than Matrix Decomposition? (ICLR 2021, top 3%)

Squirtle (憨憨) invites you to enjoy Hamburger! 憨 is pronounced like "ham" and means simple and plain in Chinese.

- 2022.04.01 - Add Light-Ham (VAN-Huge). Over 3 runs, Light-Ham (VAN-Base) produced an average mIoU (MS) of 49.6 on the ADE20K val set, from results of 49.6, 49.9, and 49.2. Note that if we reduce the steps K from 6 to 3 under Light-Ham (VAN-Base), the performance drops to 48.8 (1 run), demonstrating the significance of the optimization-driven strategy and MD in Hamburger.

- 2022.03.26 - Release Light-Ham, a light-weight segmentation baseline for modern backbones. Using the VAN backbone, Light-Ham-VAN sets the best Pareto frontier (Params/FLOPs-mIoU curves) to date for ADE20K.

  | Method | Backbone | Iters | mIoU | Params | FLOPs | Config | Download |
  | --- | --- | --- | --- | --- | --- | --- | --- |
  | Light-Ham-D256 | VAN-Tiny | 160K | 40.9 | 4.2M | 6.5G | config | Google Drive |
  | Light-Ham | VAN-Tiny | 160K | 42.3 | 4.9M | 11.3G | config | Google Drive |
  | Light-Ham-D256 | VAN-Small | 160K | 45.2 | 13.8M | 15.8G | config | Google Drive |
  | Light-Ham | VAN-Small | 160K | 45.7 | 14.7M | 21.4G | config | Google Drive |
  | Light-Ham | VAN-Base | 160K | 49.6 | 27.4M | 34.4G | config | Google Drive |
  | Light-Ham | VAN-Large | 160K | 51.0 | 45.6M | 55.0G | config | Google Drive |
  | Light-Ham | VAN-Huge | 160K | 51.5 | 61.1M | 71.8G | config | Google Drive |
  | - | - | - | - | - | - | - | - |
  | Segformer | VAN-Base | 160K | 48.4 | 29.3M | 68.6G | - | - |
  | Segformer | VAN-Large | 160K | 50.3 | 47.5M | 89.2G | - | - |
  | - | - | - | - | - | - | - | - |
  | HamNet | VAN-Tiny-OS8 | 160K | 41.5 | 11.9M | 50.8G | config | Google Drive |
  | HamNet | VAN-Small-OS8 | 160K | 45.1 | 24.2M | 100.6G | config | Google Drive |
  | HamNet | VAN-Base-OS8 | 160K | 48.7 | 36.9M | 153.6G | config | Google Drive |
  | HamNet | VAN-Large-OS8 | 160K | 50.2 | 55.1M | 227.7G | config | Google Drive |

- 2022.03.06 - Update HamNet using MMSegmentation. HamNet achieves SOTA performance for the ResNet-101 backbone on the ADE20K val set, enabling R101 to match modern backbones like ResNeSt, Swin Transformer or ConvNeXt under a similar computing budget. Code and checkpoint are available.

  | Method | Backbone | Crop Size | Lr schd | mIoU (SS) | mIoU (MS) | Params | FLOPs |
  | --- | --- | --- | --- | --- | --- | --- | --- |
  | DANet | ResNet-101 | 512x512 | 160000 | - | 45.2 | 69M | 1119G |
  | OCRNet | ResNet-101 | 520x520 | 150000 | - | 45.3 | 56M | 923G |
  | DNL | ResNet-101 | 512x512 | 160000 | - | 46.0 | 69M | 1249G |
  | HamNet | ResNet-101 | 512x512 | 160000 | 44.9 | 46.0 | 57M | 918G |
  | HamNet+ | ResNet-101 | 512x512 | 160000 | 45.6 | 46.8 | 69M | 1111G |
  | - | - | - | - | - | - | - | - |
  | DeeplabV3 | ResNeSt-101 | 512x512 | 160000 | 45.7 | 46.6 | 66M | 1051G |
  | UPerNet | Swin-T | 512x512 | 160000 | 44.5 | 45.8 | 60M | 945G |
  | UPerNet | ConvNeXt-T | 512x512 | 160000 | 46.0 | 46.7 | 60M | 939G |

- 2021.09.09 - Release the arXiv version. This is a short version including some future work based on Hamburger. A long version concerning the implicit perspective of Hamburger will be updated later.

- 2021.05.12 - Release Chinese Blog 3.

- 2021.05.10 - Release Chinese Blog 1 and Blog 2 on Zhihu. Blog 3 is incoming.

- 2021.04.14 - Announce the upcoming arXiv version concerning implicit models and one-step gradient.

- 2021.04.13 - Add poster and thumbnail icon for ICLR 2021.
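The Pareto-frontier statement in the 2022.03.26 entry can be checked against the numbers in that entry's table. The following is a minimal sketch, not part of the repo: the helper name `pareto_front` and the tuple layout are hypothetical, and the comparison only covers the rows listed in that table.

```python
# (method, backbone, Params in M, FLOPs in G, mIoU), copied from the 2022.03.26 table.
ROWS = [
    ("Light-Ham-D256", "VAN-Tiny", 4.2, 6.5, 40.9),
    ("Light-Ham", "VAN-Tiny", 4.9, 11.3, 42.3),
    ("Light-Ham-D256", "VAN-Small", 13.8, 15.8, 45.2),
    ("Light-Ham", "VAN-Small", 14.7, 21.4, 45.7),
    ("Light-Ham", "VAN-Base", 27.4, 34.4, 49.6),
    ("Light-Ham", "VAN-Large", 45.6, 55.0, 51.0),
    ("Light-Ham", "VAN-Huge", 61.1, 71.8, 51.5),
    ("Segformer", "VAN-Base", 29.3, 68.6, 48.4),
    ("Segformer", "VAN-Large", 47.5, 89.2, 50.3),
    ("HamNet", "VAN-Tiny-OS8", 11.9, 50.8, 41.5),
    ("HamNet", "VAN-Small-OS8", 24.2, 100.6, 45.1),
    ("HamNet", "VAN-Base-OS8", 36.9, 153.6, 48.7),
    ("HamNet", "VAN-Large-OS8", 55.1, 227.7, 50.2),
]

MIOU = 4  # index of the mIoU column in each row


def pareto_front(rows, cost_index):
    """Keep rows that no other row beats on both cost (lower) and mIoU (higher)."""
    front = []
    for r in rows:
        dominated = any(
            o[cost_index] <= r[cost_index]
            and o[MIOU] >= r[MIOU]
            and (o[cost_index] < r[cost_index] or o[MIOU] > r[MIOU])
            for o in rows
        )
        if not dominated:
            front.append(r)
    return sorted(front, key=lambda r: r[cost_index])


if __name__ == "__main__":
    for name, idx in (("Params", 2), ("FLOPs", 3)):
        front = pareto_front(ROWS, idx)
        print(name, [f"{method} ({backbone})" for method, backbone, *_ in front])
```

Among the rows listed in that table, both the Params-mIoU and the FLOPs-mIoU fronts consist only of the seven Light-Ham rows. This says nothing about methods outside the table.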

This repo provides the official implementation of Hamburger for further research. We sincerely hope that this paper can bring you inspiration about the Attention Mechanism, especially how the low-rankness and the optimization-driven method can help model the so-called Global Information in deep learning. We also highlight Hamburger as a semi-implicit model and one-step gradient as an alternative for training both implicit and semi-implicit models.

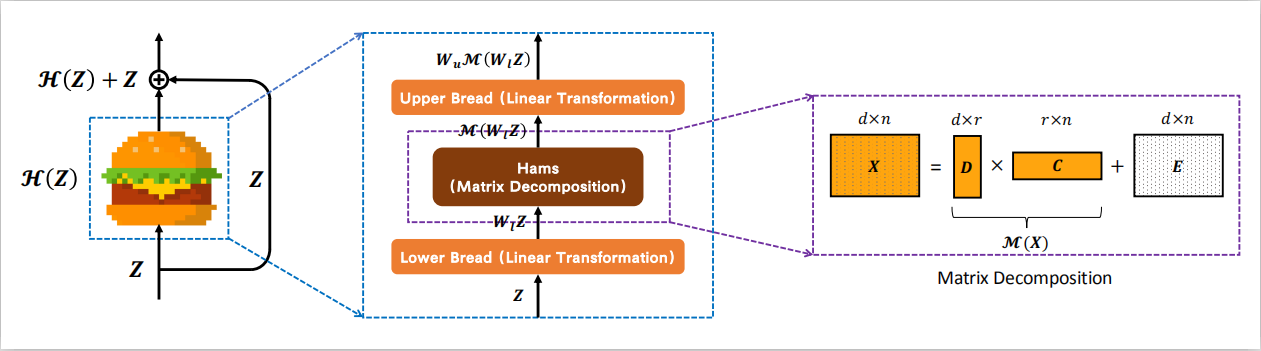

We model the global context issue as a low-rank completion problem and show that its optimization algorithms can help design global information blocks. This paper then proposes a series of Hamburgers, in which we employ the optimization algorithms for solving matrix decompositions (MDs) to factorize the input representations into sub-matrices and reconstruct a low-rank embedding. Hamburgers with different MDs can perform favorably against the popular global context module, self-attention, when the gradients back-propagated through MDs are handled carefully.
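To make "factorize the input representations into sub-matrices and reconstruct a low-rank embedding" concrete, here is a minimal pure-Python sketch. It is not the code in this repo. It assumes non-negative matrix factorization (NMF) with multiplicative updates as the MD, purely as one familiar example. The names `nmf_reconstruct`, `frob_error`, and `scale` are hypothetical, and `steps` is only loosely analogous to the step count K mentioned in the news above.

```python
import random


def transpose(m):
    """Return the transpose of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    b_cols = transpose(b)
    return [[sum(x * y for x, y in zip(row, col)) for col in b_cols] for row in a]


def frob_error(x, d, c):
    """Squared Frobenius norm of X - D C."""
    recon = matmul(d, c)
    return sum((xv - rv) ** 2
               for x_row, r_row in zip(x, recon)
               for xv, rv in zip(x_row, r_row))


def scale(m, num, den, eps):
    """Element-wise m * num / (den + eps)."""
    return [[mv * nv / (dv + eps) for mv, nv, dv in zip(m_row, n_row, d_row)]
            for m_row, n_row, d_row in zip(m, num, den)]


def nmf_reconstruct(x, rank, steps=6, eps=1e-8, seed=0):
    """Factorize a non-negative X (d x n) into D (d x rank) and C (rank x n)
    and return the low-rank reconstruction D C together with both factors."""
    rng = random.Random(seed)
    d = [[rng.random() for _ in range(rank)] for _ in range(len(x))]
    c = [[rng.random() for _ in range(len(x[0]))] for _ in range(rank)]
    for _ in range(steps):
        # C <- C * (D^T X) / (D^T D C)
        dt = transpose(d)
        c = scale(c, matmul(dt, x), matmul(matmul(dt, d), c), eps)
        # D <- D * (X C^T) / (D C C^T)
        ct = transpose(c)
        d = scale(d, matmul(x, ct), matmul(d, matmul(c, ct)), eps)
    return matmul(d, c), d, c


if __name__ == "__main__":
    rng = random.Random(1)
    a = [[rng.random() for _ in range(2)] for _ in range(4)]
    b = [[rng.random() for _ in range(5)] for _ in range(2)]
    x = matmul(a, b)  # a non-negative 4 x 5 matrix of rank at most 2
    for k in (0, 3, 6):
        _, d, c = nmf_reconstruct(x, rank=2, steps=k)
        print(k, frob_error(x, d, c))
```

The reconstruction error should shrink as `steps` grows, since each update is a step of an optimization algorithm for the decomposition. The sketch leaves out everything around the decomposition, including any learned layers and the handling of gradients back-propagated through the MD.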

We are working on some exciting topics. Please wait for our new papers. :)

Enjoy Hamburger, please!

This section introduces the organization of this repo.

We strongly recommend reading the arXiv version or the blogs for a more comprehensive understanding of this paper.

- blog.

  - Some random thoughts on Hamburger and beyond (Chinese Blog 1).

  - Connections and differences between Hamburger and implicit models. (incoming arXiv version, Chinese Blog 2)

  - Highlight one-step gradient. (incoming arXiv version, Chinese Blog 2)

  - Possible directions based on Hamburger. (current arXiv version, Chinese Blog 3)

  - FAQ.

- seg.

  - We provide the PyTorch implementation of Hamburger (V1) from the paper and an enhanced version (V2) flavored with Cheese. Some experimental features are included in V2+.

  - We release the codebase for systematic research on the PASCAL VOC dataset, including the two-stage training on the `trainaug` and `trainval` datasets and the MSFlip test.

  - We offer three checkpoints of HamNet: one scores 85.90+ (test server link), and the other two score 85.80+ (test server link 1 and link 2). You can reproduce the test results using the checkpoints combined with the MSFlip test code.

  - Statistics about HamNet that might ease further research.

- gan.

  - Official implementation of Hamburger in TensorFlow.

  - Data preprocessing code for using ImageNet in tensorflow-datasets. (Possibly useful if you hope to run the JAX code of BYOL or other ImageNet training code with Cloud TPUs.)

  - Training and evaluation protocol of HamGAN on ImageNet.

  - Checkpoints of HamGAN-strong and HamGAN-baby.

TODO:

- Chinese Blog 1, Blog 2 and Blog 3.

- Release the arXiv version.

- English Blog.

- README doc for HamGAN.

- PyTorch Hamburger using less encapsulation.

- Suggestions for using and further developing Hamburger. (See arXiv)

- We are also considering adding a collection of popular context modules to this repo. It depends on the time. No guarantee. Perhaps GuGu 🕊️ (which means standing someone up).

If you find our work interesting or helpful to your research, please consider citing Hamburger. :)

    @inproceedings{ham,
      title={Is Attention Better Than Matrix Decomposition?},
      author={Zhengyang Geng and Meng-Hao Guo and Hongxu Chen and Xia Li and Ke Wei and Zhouchen Lin},
      booktitle={International Conference on Learning Representations},
      year={2021},
    }

Feel free to contact me if you have additional questions or are interested in collaboration. Please drop me an email at [email protected]. Find me on Twitter or WeChat. Thank you!

Our research is supported with Cloud TPUs from Google's TensorFlow Research Cloud (TFRC). It has been a nice and joyful experience with the TFRC program. Thank you!

We would like to sincerely thank MMSegmentation, EMANet, PyTorch-Encoding, YLG, and TF-GAN for their awesome released code.