Jinyuan Liu, Xin Fan*, Zhanbo Huang, Guanyao Wu, Risheng Liu, Wei Zhong, Zhongxuan Luo, "Target-aware Dual Adversarial Learning and a Multi-scenario Multi-Modality Benchmark to Fuse Infrared and Visible for Object Detection", IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2022. (Oral)

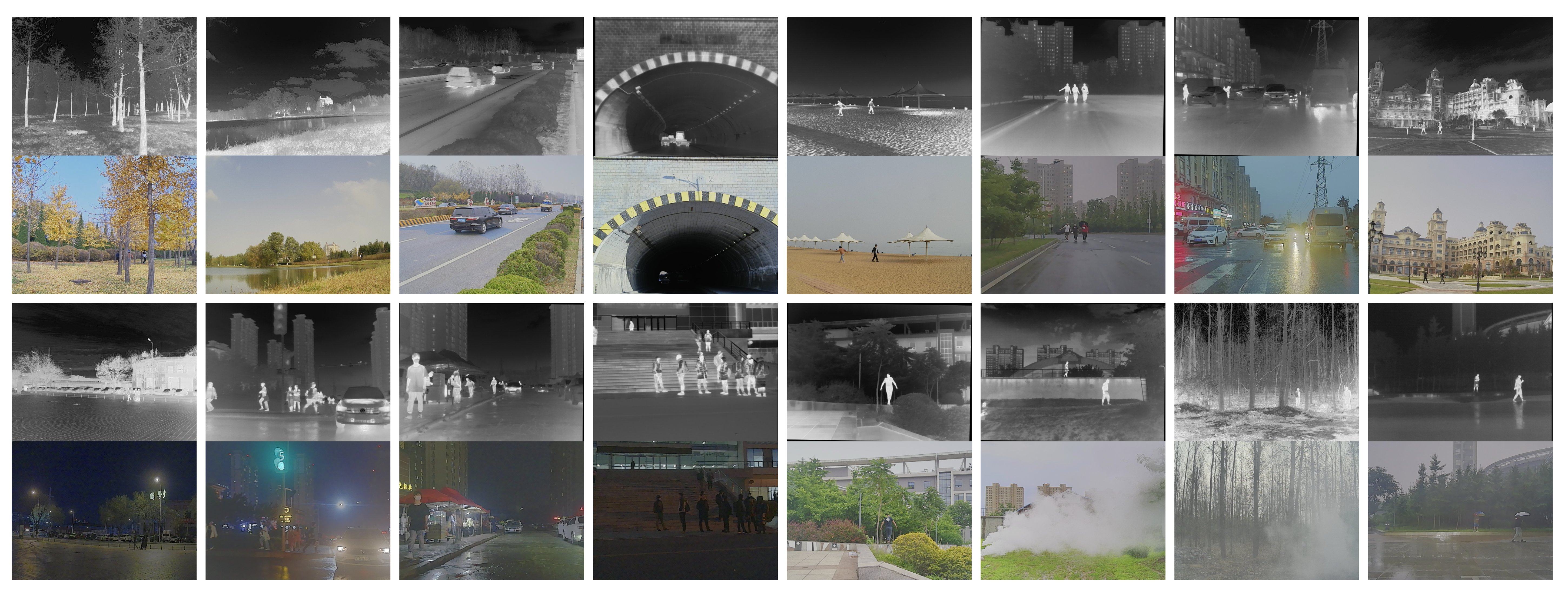

The preview of our dataset is as follows.

- Sensor: A synchronized system containing one binocular optical camera and one binocular infrared sensor. More details are available in the paper.

- Main scenes:

  - Campus of Dalian University of Technology.

  - State Tourism Holiday Resort at the Golden Stone Beach in Dalian, China.

  - Main roads in Jinzhou District, Dalian, China.

- Total number of images:

  - 8400 (for fusion, detection and fusion-based detection)

  - 600 (independent scene for fusion)

- Total number of image pairs:

  - 4200 (for fusion, detection and fusion-based detection)

  - 300 (independent scene for fusion)

- Format of images:

  - [Infrared] 24-bit grayscale bitmap

  - [Visible] 24-bit color bitmap

- Image size: 1024 x 768 pixels (mostly)

- Registration: All image pairs are registered. The visible images are calibrated using the intrinsic parameters of our synchronized system, and the infrared images are artificially distorted using a homography matrix.

- Labeling: 34,407 labels were annotated manually, covering 6 kinds of targets: {People, Car, Bus, Motorcycle, Lamp, Truck}. (Limited by manpower, some targets may be mislabeled or missed. We would appreciate it if you would point out wrong or missing labels to help us improve the dataset.)
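The labels ship as YOLO-format text files (see the data layout further down), where each line holds a class id followed by a normalized center x, center y, width and height. The sketch below converts one such line into a pixel-space box. It is illustrative only: the `Box` type and `parse_yolo_line` helper are hypothetical names, the class-id order is assumed to follow the target list above, and the default 1024 x 768 size should be replaced with the actual image size where it differs.

```python
from dataclasses import dataclass

# Assumption: class ids follow the order in which the targets are listed above.
CLASS_NAMES = ["People", "Car", "Bus", "Motorcycle", "Lamp", "Truck"]


@dataclass
class Box:
    name: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float


def parse_yolo_line(line: str, img_w: int = 1024, img_h: int = 768) -> Box:
    """Convert one YOLO label line into a pixel-space box."""
    cls, xc, yc, w, h = line.split()
    # YOLO stores the box center and size as fractions of the image size.
    xc, w = float(xc) * img_w, float(w) * img_w
    yc, h = float(yc) * img_h, float(h) * img_h
    return Box(CLASS_NAMES[int(cls)], xc - w / 2, yc - h / 2, xc + w / 2, yc + h / 2)


box = parse_yolo_line("1 0.5 0.5 0.25 0.5")
print(box)
```

Since most, but not all, images are 1024 x 768, reading the size from each image file is the safer choice when converting a whole split.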

If you have any questions or suggestions about the dataset, please email Guanyao Wu or Jinyuan Liu.

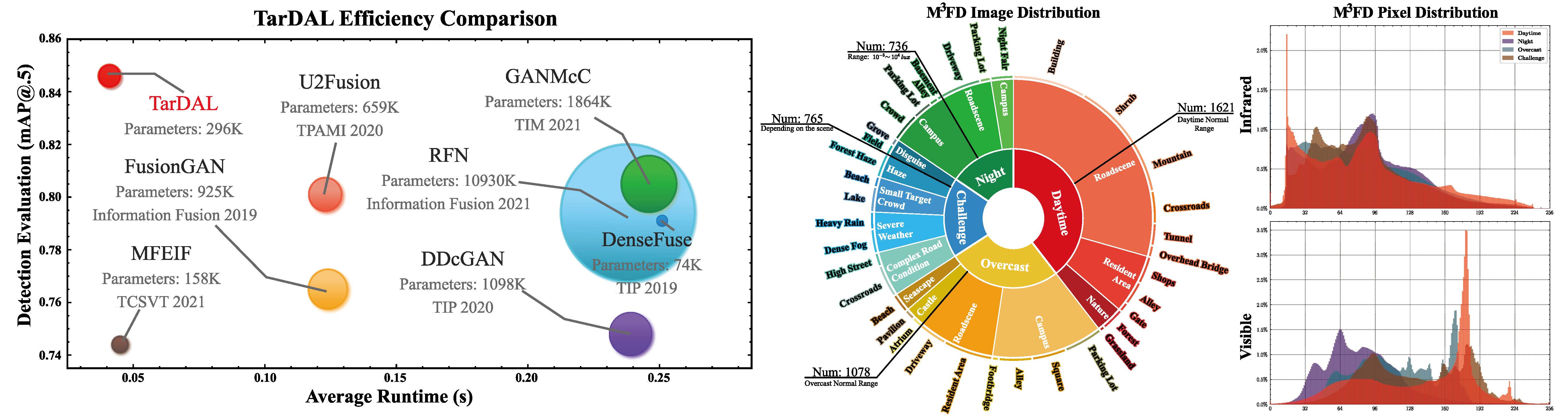

In our experiments, we used the following outstanding works as baselines.

Note: Sorted alphabetically

- AUIF (IEEE TCSVT 2021)

- DDcGAN (IJCAI 2019)

- Densefuse (IEEE TIP 2019)

- DIDFuse (IJCAI 2020)

- FusionGAN (Information Fusion 2019)

- GANMcC (IEEE TIM 2021)

- MFEIF (IEEE TCSVT 2021)

- RFN-Nest (Information Fusion 2021)

- SDNet (IJCV 2021)

- U2Fusion (IEEE TPAMI 2020)

Under normal circumstances, you may just be curious about the results of the fusion task, so we have prepared an online demonstration.

Our online preview (free) in Colab.

When you want to dive deeper or apply it on a larger scale, you can configure our TarDAL on your computer following the steps below.

We strongly recommend that you use Conda as a package manager.

# create virtual environment

conda create -n tardal python=3.10

conda activate tardal

# select pytorch version yourself

# install tardal requirements

pip install -r requirements.txt

# install yolov5 requirements

pip install -r module/detect/requirements.txt

You should put the data in the correct place in the following form.

TarDAL ROOT

├── data

| ├── m3fd

| | ├── ir # infrared images

| | ├── vi # visible images

| | ├── labels # labels in txt format (yolo format)

| | └── meta # meta data, includes: pred.txt, train.txt, val.txt

| ├── tno

| | ├── ir # infrared images

| | ├── vi # visible images

| | └── meta # meta data, includes: pred.txt, train.txt, val.txt

| ├── roadscene

| └── ...

You can directly download the TNO and RoadScene datasets organized in this format from here.
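Before running inference or training, it can help to confirm that a dataset folder matches the layout above. The following is a minimal sketch, not part of the TarDAL code base: `check_dataset` is a hypothetical helper, and it assumes that each registered pair uses the same file name in `ir/` and `vi/`.

```python
from pathlib import Path


def check_dataset(root: Path, need_labels: bool = False) -> list[str]:
    """Return a list of problems found under one dataset folder (e.g. data/m3fd)."""
    problems = []
    required = ["ir", "vi", "meta"] + (["labels"] if need_labels else [])
    for sub in required:
        if not (root / sub).is_dir():
            problems.append(f"missing folder: {sub}")
    if problems:
        return problems  # nothing else can be checked without the folders

    ir = {p.name for p in (root / "ir").iterdir() if p.is_file()}
    vi = {p.name for p in (root / "vi").iterdir() if p.is_file()}
    # Assumption: a registered pair shares one file name across ir/ and vi/.
    for name in sorted(ir ^ vi):
        problems.append(f"unpaired image: {name}")

    for meta in ("pred.txt", "train.txt", "val.txt"):
        if not (root / "meta" / meta).is_file():
            problems.append(f"missing meta file: {meta}")
    return problems


if __name__ == "__main__":
    # m3fd is the only dataset in the layout above that carries a labels folder.
    for issue in check_dataset(Path("data/m3fd"), need_labels=True):
        print(issue)
```

An empty result means the folder structure and pairing look consistent; it does not validate the image contents or the label files themselves.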

In this section, we will guide you to generate fusion images using our pre-trained model.

As we mentioned in our paper, we provide three pre-trained models.

| Name | Description |
|---|---|
| TarDAL-DT | Optimized for human vision. (Default) |
| TarDAL-TT | Optimized for object detection. |
| TarDAL-CT | Optimal solution for joint human vision and detection accuracy. |

You can find their corresponding configuration file path in configs.

Some settings you should pay attention to:

- config.yaml

  - strategy: save images (fuse) or save images & labels (fuse & detect)

  - dataset: name & root

  - inference: each item in inference

- infer.py

  - --cfg: config file path, such as configs/official/tardal-dt.yaml

  - --save_dir: result save folder

Under normal circumstances, you don't need to download the model parameters manually; our program will do it for you.
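When comparing all three pre-trained models, the `--cfg` and `--save_dir` flags described above can be filled in programmatically. The sketch below is a hypothetical driver (the `infer_command` and `run_all` names are not part of TarDAL) that only relies on the two documented flags and the official config paths; by default it prints the commands instead of executing them.

```python
import subprocess
import sys

MODELS = ("tardal-dt", "tardal-tt", "tardal-ct")


def infer_command(model: str, save_root: str = "runs") -> list[str]:
    """Build the infer.py call for one official config."""
    return [
        sys.executable, "infer.py",
        "--cfg", f"configs/official/{model}.yaml",
        "--save_dir", f"{save_root}/{model}",
    ]


def run_all(dry_run: bool = True) -> None:
    """Print, and optionally execute, the inference command for each model."""
    for model in MODELS:
        cmd = infer_command(model)
        print(" ".join(cmd))
        if not dry_run:
            # Must be launched from the TarDAL root so infer.py is found.
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run_all(dry_run=True)
```

Setting `dry_run=False` runs the models one after another and stops at the first failing command because of `check=True`.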

# TarDAL-DT

# use official tardal-dt infer config and save images to runs/tardal-dt

python infer.py --cfg configs/official/tardal-dt.yaml --save_dir runs/tardal-dt

# TarDAL-TT

# use official tardal-tt infer config and save images to runs/tardal-tt

python infer.py --cfg configs/official/tardal-tt.yaml --save_dir runs/tardal-tt

# TarDAL-CT

# use official tardal-ct infer config and save images to runs/tardal-ct

python infer.py --cfg configs/official/tardal-ct.yaml --save_dir runs/tardal-ct

We provide training scripts so that you can train your own model.

Please note: The training code is only intended to assist in understanding the paper and is not recommended for direct application in production environments.

Unlike previous versions of the code, you don't need to preprocess the data; the IQA weights and mask are calculated automatically.

# TarDAL-DT

python train.py --cfg configs/official/tardal-dt.yaml --auth $YOUR_WANDB_KEY

# TarDAL-TT

python train.py --cfg configs/official/tardal-tt.yaml --auth $YOUR_WANDB_KEY

# TarDAL-CT

python train.py --cfg configs/official/tardal-ct.yaml --auth $YOUR_WANDB_KEY

If you want to base your approach on ours and extend it to a production environment, here are some additional suggestions for you.

Suggestion: A better train process for everyone.

If you have any other questions about the code, please email Zhanbo Huang.

Due to job changes, the previous link [email protected] is no longer available.

If this work has been helpful to you, please feel free to cite our paper!

@inproceedings{liu2022target,

title={Target-aware Dual Adversarial Learning and a Multi-scenario Multi-Modality Benchmark to Fuse Infrared and Visible for Object Detection},

author={Liu, Jinyuan and Fan, Xin and Huang, Zhanbo and Wu, Guanyao and Liu, Risheng and Zhong, Wei and Luo, Zhongxuan},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={5802--5811},

year={2022}

}