PyTorch implementation of the EMNLP 2021 paper

TransferNet: An Effective and Transparent Framework for Multi-hop Question Answering over Relation Graph

Jiaxin Shi, Shulin Cao, Lei Hou, Juanzi Li, Hanwang Zhang

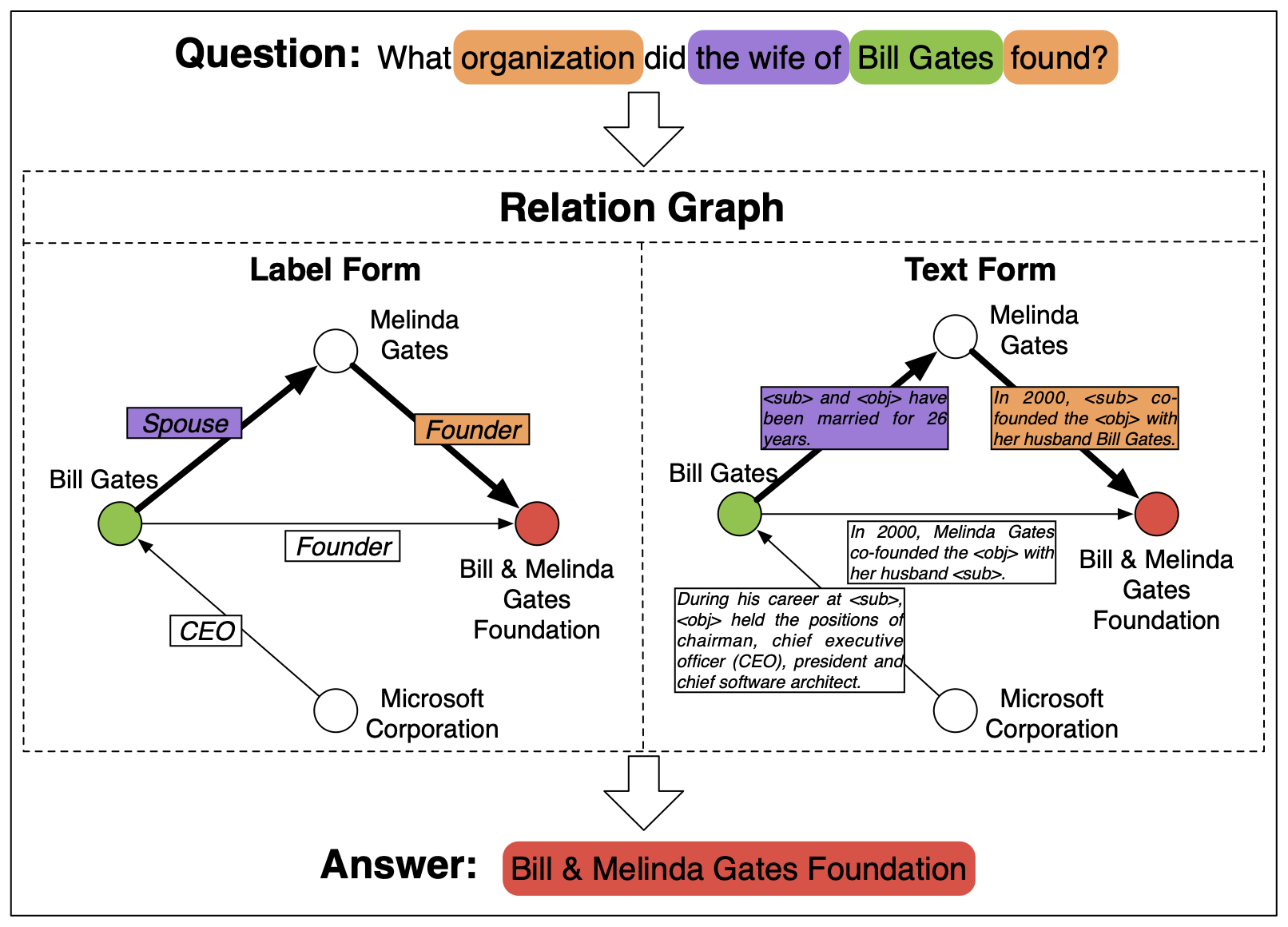

We perform transparent multi-hop reasoning over relation graphs of label form (i.e., knowledge graph) and text form. This is an example:

If you find this code useful in your research, please cite:

```
@inproceedings{shi2021transfernet,
  title={TransferNet: An Effective and Transparent Framework for Multi-hop Question Answering over Relation Graph},
  author={Shi, Jiaxin and Cao, Shulin and Hou, Lei and Li, Juanzi and Zhang, Hanwang},
  booktitle={EMNLP},
  year={2021}
}
```

Requirements:

- pytorch>=1.2.0

- transformers

- tqdm

- nltk

- shutil

Datasets:

- MetaQA; we only use its vanilla version.

- MovieQA; we need its `knowledge_source/wiki.txt` as the text corpus for our MetaQA-Text experiments. Copy the file into the MetaQA folder, next to `kb.txt`. The MetaQA files should be organized like this:

  ```
  MetaQA
  +-- kb
  |   +-- kb.txt
  |   +-- wiki.txt
  +-- 1-hop
  |   +-- vanilla
  |   |   +-- qa_train.txt
  |   |   +-- qa_dev.txt
  |   |   +-- qa_test.txt
  +-- 2-hop
  +-- 3-hop
  ```

- WebQSP, which has been processed by EmbedKGQA.

- ComplexWebQuestions, which has been processed by NSM.

- GloVe 300d pretrained vectors, which are used by the BiGRU model. After unzipping them, convert the txt file to a pickle file with:

  ```
  python pickle_glove.py --txt </path/to/840B.300d.txt> --pt </output/file/name>
  ```

MetaQA-KB:

- Preprocess

  ```
  python -m MetaQA-KB.preprocess --input_dir <PATH/TO/METAQA> --output_dir <PATH/TO/PROCESSED/FILES>
  ```

- Train

  ```
  python -m MetaQA-KB.train --glove_pt <PATH/TO/GLOVE/PICKLE> --input_dir <PATH/TO/PROCESSED/FILES> --save_dir <PATH/TO/CHECKPOINT>
  ```

- Predict on the test set

  ```
  python -m MetaQA-KB.predict --input_dir <PATH/TO/PROCESSED/FILES> --ckpt <PATH/TO/CHECKPOINT> --mode test
  ```

- Visualize the reasoning process. After showing the information for each sample, the script enters an IPython environment, where you can print any other variables you are interested in. To stop the process, quit IPython with `Ctrl+D` and then immediately kill the loop with `Ctrl+C`.

  ```
  python -m MetaQA-KB.predict --input_dir <PATH/TO/PROCESSED/FILES> --ckpt <PATH/TO/CHECKPOINT> --mode vis
  ```

MetaQA-Text:

- Preprocess

  ```
  python -m MetaQA-Text.preprocess --input_dir <PATH/TO/METAQA> --output_dir <PATH/TO/PROCESSED/FILES>
  ```

- Train

  ```
  python -m MetaQA-Text.train --glove_pt <PATH/TO/GLOVE/PICKLE> --input_dir <PATH/TO/PROCESSED/FILES> --save_dir <PATH/TO/CHECKPOINT>
  ```

The inference and visualization scripts are the same as for MetaQA-KB; just change the Python module to `MetaQA-Text.predict`.
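Because inference and visualization differ between the two MetaQA variants only in the module name, the predict command can be generated by a small helper. This is a minimal sketch, assuming it is launched from the repository root; `predict_command` and the example paths are hypothetical, and only the module names and flags are taken from the commands above.

```python
from typing import List

# Hypothetical helper, not part of the TransferNet repository.
# Module names and flags are copied from the predict commands above.
VARIANTS = {"kb": "MetaQA-KB", "text": "MetaQA-Text"}
MODES = ("test", "vis")


def predict_command(variant: str, input_dir: str, ckpt: str, mode: str = "test") -> List[str]:
    """Build the argv for `python -m <variant>.predict` on MetaQA."""
    if variant not in VARIANTS:
        raise ValueError(f"unknown variant {variant!r}, expected one of {sorted(VARIANTS)}")
    if mode not in MODES:
        raise ValueError(f"unknown mode {mode!r}, expected one of {MODES}")
    # Only the module prefix differs between MetaQA-KB and MetaQA-Text.
    module = f"{VARIANTS[variant]}.predict"
    return ["python", "-m", module, "--input_dir", input_dir, "--ckpt", ckpt, "--mode", mode]


if __name__ == "__main__":
    # Illustrative paths; replace them with your own.
    cmd = predict_command("text", "processed/metaqa_text", "checkpoints/metaqa_text", mode="vis")
    print(" ".join(cmd))
```

To launch it, pass the returned list to `subprocess.run(cmd, check=True)`; keeping the command as an argument list avoids shell-quoting problems with paths that contain spaces.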

MetaQA-Text + KB (`--kb_ratio 0.5`):

- Preprocess

  ```
  python -m MetaQA-Text.preprocess --input_dir <PATH/TO/METAQA> --output_dir <PATH/TO/PROCESSED/FILES> --kb_ratio 0.5
  ```

- Train. This setting needs more active paths than MetaQA-Text:

  ```
  python -m MetaQA-Text.train --input_dir <PATH/TO/PROCESSED/FILES> --save_dir <PATH/TO/CHECKPOINT> --max_active 800 --batch_size 32
  ```

The inference and visualization scripts are the same as for MetaQA-Text.
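The commands above do not spell out what `--kb_ratio` does. One plausible reading, and only an assumption here, is that preprocessing keeps a random fraction of the `kb.txt` triples and mixes them into the text relation graph. The sketch below illustrates that idea under two further assumptions: each `kb.txt` line is a `subject|relation|object` triple, and `load_triples` / `sample_kb` are hypothetical functions, not the repository's preprocessing code.

```python
import random
from typing import List, Tuple

Triple = Tuple[str, str, str]


def load_triples(lines: List[str]) -> List[Triple]:
    """Parse `subject|relation|object` lines (assumed kb.txt format)."""
    triples = []
    for line in lines:
        parts = line.strip().split("|")
        if len(parts) == 3:  # skip blank or malformed lines
            triples.append((parts[0], parts[1], parts[2]))
    return triples


def sample_kb(triples: List[Triple], kb_ratio: float, seed: int = 0) -> List[Triple]:
    """Keep round(kb_ratio * len(triples)) triples chosen uniformly at random."""
    if not 0.0 <= kb_ratio <= 1.0:
        raise ValueError("kb_ratio must be in [0, 1]")
    rng = random.Random(seed)  # fixed seed so the kept subset is reproducible
    k = round(kb_ratio * len(triples))
    return rng.sample(triples, k)


if __name__ == "__main__":
    # Toy triples for illustration only, not real MetaQA data.
    kb = load_triples([
        "Movie A|directed_by|Person X",
        "Movie A|starred_actors|Person Y",
        "Movie B|directed_by|Person Z",
        "Movie B|release_year|2000",
    ])
    print(sample_kb(kb, kb_ratio=0.5))
```

Seeding a private `random.Random` instance, rather than the global generator, keeps the sampled KB subset stable across runs without affecting any other randomness in a training script.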

WebQSP does not need preprocessing, so we can start training directly:

```
python -m WebQSP.train --input_dir <PATH/TO/UNZIPPED/DATA> --save_dir <PATH/TO/CHECKPOINT>
```

Like WebQSP, CWQ does not need preprocessing, so we can start training directly:

```
python -m CompWebQ.train --input_dir <PATH/TO/UNZIPPED/DATA> --save_dir <PATH/TO/CHECKPOINT>
```
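Since WebQSP and CWQ both skip preprocessing and take the same two training flags, either run can be scripted the same way. This is a minimal sketch; `train_command` and the example paths are hypothetical, while the module names `WebQSP.train` and `CompWebQ.train` and their flags come from the commands above.

```python
from typing import List

# Module names taken from the training commands above; the mapping is illustrative.
TRAIN_MODULES = {"webqsp": "WebQSP.train", "cwq": "CompWebQ.train"}


def train_command(dataset: str, input_dir: str, save_dir: str) -> List[str]:
    """Build the argv for training on WebQSP or CWQ (no preprocessing step)."""
    key = dataset.lower()
    if key not in TRAIN_MODULES:
        raise ValueError(f"unknown dataset {dataset!r}, expected one of {sorted(TRAIN_MODULES)}")
    return ["python", "-m", TRAIN_MODULES[key], "--input_dir", input_dir, "--save_dir", save_dir]


if __name__ == "__main__":
    for name in ("webqsp", "cwq"):
        # Illustrative paths; point input_dir at the unzipped data.
        print(" ".join(train_command(name, f"data/{name}", f"checkpoints/{name}")))
```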