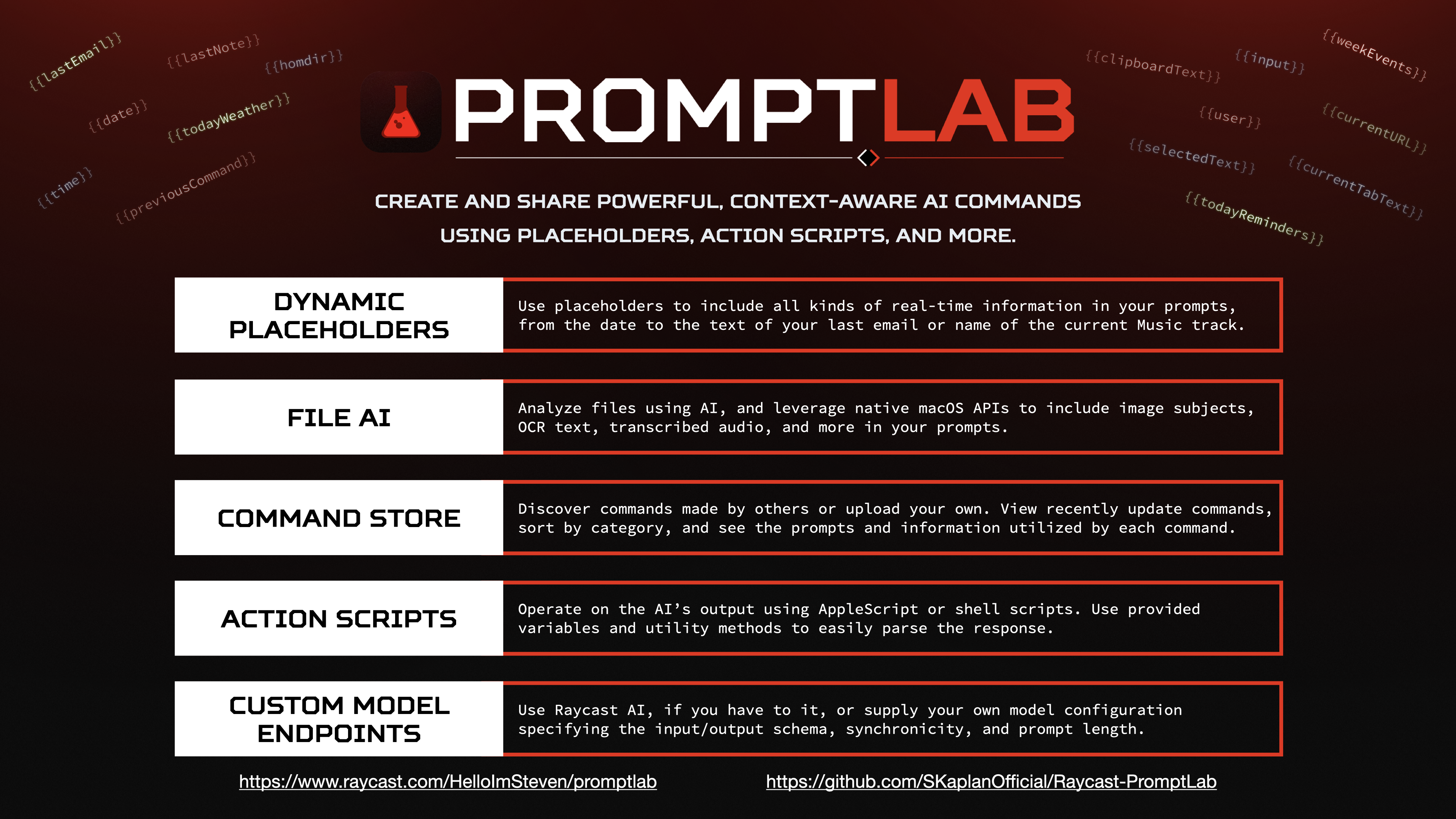

PromptLab is a Raycast extension for creating and sharing powerful, contextually-aware AI commands using placeholders, action scripts, and more.

PromptLab allows you to create custom AI commands with prompts that utilize contextual placeholders such as {{selectedText}}, {{todayEvents}}, or {{currentApplication}} to vastly expand the capabilities of Raycast AI. PromptLab can also extract information from selected files, if you choose, so that it can tell you about the subjects in an image, summarize a PDF, and more.

PromptLab also supports "action scripts" -- AppleScripts which run with the AI's response as input, as well as experimental autonomous agent features that allow the AI to run commands on your behalf. These capabilities, paired with PromptLab's extensive customization options, open a whole new world of possibilities for enhancing your workflows with AI.

Install PromptLab | My Other Extensions | Donate

- Feature Overview

- Top-Level Commands

- Images

- Create Your Own Commands

- Chats, Context Data, Statistics, and More

- Installation

- Custom Model Endpoints

- Troubleshooting

- Contributing

- Roadmap

- Privacy Policy

- Useful Resources

- Create, Edit, Run, and Share Custom Commands

- Detail, List, Chat, and No-View Command Types

- Utilize Numerous Contextual Placeholders in Prompts

- Use AppleScript, JXA, Shell Scripts, and JavaScript Placeholders

- Obtain Data from External APIs, Websites, and Applications

- Analyze Content of Selected Files

- Extract Text, Subjects, QR Codes, etc. from Images and Videos

- Quick Access to Commands via Menu Bar Item

- Import/Export Commands

- Save & Run Commands as Quicklinks with Optional Input Parameter

- Run AppleScript or Bash Scripts Upon Model Response

- Execute Siri Shortcuts and Use Their Output in Prompts

- PromptLab Chat with Autonomous Command Execution Capability

- Multiple Chats, Chat History, and Chat Statistics

- Chat-Specific Context Data Files

- Upload & Download Commands To/From PromptLab Command Store

- Use Custom Model Endpoints with Synchronous or Asynchronous Responses

- Favorite Commands, Chats, and Models

- Optionally Speak Responses and Provide Spoken Input

- Create Custom Placeholders with JSON

- New PromptLab Command
  - Create a custom PromptLab command accessible via 'My PromptLab Commands'.
- My PromptLab Commands
  - Search and run custom PromptLab commands that you've installed or created.
- Manage Models
  - View, edit, add, and delete custom models.
- PromptLab Command Store
  - Explore and search commands uploaded to the store by other PromptLab users.
- PromptLab Chat
  - Start a back-and-forth conversation with AI with selected files provided as context.
- PromptLab Menu Item
  - Displays a menu of PromptLab commands in your menu bar.
- Import PromptLab Commands
  - Add custom commands from a JSON string.

View more images in the gallery.

You can create custom PromptLab commands, accessed via the "My PromptLab Commands" command, to execute your own prompts acting on the contents of selected files. A variety of useful defaults are provided, and you can find more in the PromptLab Command Store.

When creating custom commands, you can use placeholders in your prompts that will be substituted with relevant information whenever you run the command. These placeholders range from simple information, like the current date, to complex data retrieval operations such as getting the content of the most recent email or running a sequence of prompts in rapid succession and amalgamating the results. Placeholders are a powerful way to add context to your PromptLab prompts.

A few examples of placeholders are:

| Placeholder | Replaced With |
|---|---|
| {{clipboardText}} | The text content of your clipboard |
| {{selectedFiles}} | The paths of the files you have selected |
| {{imageText}} | Text extracted from the image(s) you have selected |
| {{lastNote}} | The HTML of the most recently modified note in the Notes app |
| {{date format="d MMMM, yyyy"}} | The current date, optionally specifying a format |
| {{todayEvents}} | The events scheduled for today, including their start and end times |
| {{youtube:[search term]}} | The transcription of the first YouTube video result for the specified search term |
| {{prompt:...}} | The result of running the specified prompt |
| {{url:[url]}} | The visible text at the specified URL |
| {{as:...}} | The result of the specified AppleScript code |
| {{js:...}} | The result of the specified JavaScript code |

These are just a few of the many placeholders available. View the full list here. You can even create your own placeholders using JSON, if you want!
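To make the substitution idea concrete, here is a minimal sketch of how `{{name}}` and `{{name:argument}}` placeholders could be filled in before a prompt is sent. It is not PromptLab's actual implementation: `fillPlaceholders`, the `Resolvers` type, and the canned replacement values are hypothetical, and the sketch does not handle attribute-style placeholders such as `{{date format="..."}}`.

```typescript
// Hypothetical resolver map: placeholder name -> function producing its replacement.
type Resolvers = Record<string, (arg?: string) => string>;

// Matches {{name}} and {{name:argument}}; names are letters only in this sketch.
const PLACEHOLDER = /\{\{([A-Za-z]+)(?::([^}]*))?\}\}/g;

function fillPlaceholders(prompt: string, resolvers: Resolvers): string {
  return prompt.replace(PLACEHOLDER, (match: string, key: string, arg?: string) => {
    const resolve = resolvers[key];
    // Unknown placeholders are left untouched so the gap stays visible.
    return resolve ? resolve(arg) : match;
  });
}

// Canned values stand in for real clipboard access and web requests.
const resolvers: Resolvers = {
  clipboardText: () => "Hello from the clipboard",
  url: (arg) => `[text fetched from ${arg}]`,
};

const filled = fillPlaceholders(
  "Summarize: {{clipboardText}} and compare with {{url:https://example.com}}. {{unknownThing}}",
  resolvers
);
console.log(filled);
```

Leaving unknown placeholders in place, rather than replacing them with an empty string, is a design choice of this sketch that makes typos in placeholder names easy to spot.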

When configuring a PromptLab command, you can provide AppleScript code to execute once the AI finishes its response. You can access the response text via the response variable in AppleScript. Several convenient handlers for working with the response text are also provided, as listed below. Action Scripts can be used to build complex workflows using AI as a content provider, navigator, or decision-maker.

| Variable | Value | Type |
|---|---|---|
| input | The selected files or text input provided to the command. | String |
| prompt | The prompt component of the command that was run. | String |
| response | The full response received from the AI. | String |

| Handler | Purpose | Returns |
|---|---|---|
| split(theText, theDelimiter) | Splits text around the specified delimiter. | List of String |
| trim(theText) | Removes leading and trailing spaces from text. | String |
| replaceAll(theText, textToReplace, theReplacement) | Replaces all occurrences of a string within the given text. | String |
| rselect(theArray, numItems) | Randomly selects the specified number of items from a list. | List |
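The handlers themselves are AppleScript, but their described behavior can be sketched in TypeScript to show what each one is expected to return. The functions below are illustrative analogues only, not the extension's code. In particular, selecting without repetition in `rselect` is an assumption, since the table does not say whether items can repeat.

```typescript
// Splits text around the specified delimiter.
function split(theText: string, theDelimiter: string): string[] {
  return theText.split(theDelimiter);
}

// Removes leading and trailing spaces (only spaces, matching the table's wording).
function trim(theText: string): string {
  return theText.replace(/^ +| +$/g, "");
}

// Replaces every occurrence of textToReplace, treated as a literal string.
function replaceAll(theText: string, textToReplace: string, theReplacement: string): string {
  return theText.split(textToReplace).join(theReplacement);
}

// Randomly selects numItems items. Assumption: selection is without repetition.
function rselect<T>(theArray: T[], numItems: number): T[] {
  const pool = [...theArray];
  // Fisher-Yates shuffle on a copy, so the input list is not modified.
  for (let i = pool.length - 1; i > 0; i--) {
    const j = Math.floor(Math.random() * (i + 1));
    const tmp = pool[i] as T;
    pool[i] = pool[j] as T;
    pool[j] = tmp;
  }
  return pool.slice(0, numItems);
}

// A model response that lists items can be split, cleaned up, and sampled.
const items = split("apple, banana , cherry", ",").map((item) => trim(item));
const cleaned = replaceAll(items.join("; "), "banana", "mango");
console.log(cleaned); // apple; mango; cherry
console.log(rselect(items, 2));
```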

When creating a command, you can use the Unlock Setup Fields action to enable custom configuration fields that must be set before the command can be run. You'll then be able to use actions to add text fields, boolean (true/false) fields, and/or number fields, providing instructions as you see fit. In your prompt, use the {{config:fieldName}} placeholder, camel-cased, to insert the field's current value. When you share the command to the store and others install it, they'll be prompted to fill out the custom fields before they can run the command. This is a great way to make your commands more flexible and reusable.
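As a rough illustration of the camel-casing step, the sketch below maps field labels to `{{config:fieldName}}` keys and substitutes their values. The exact rule PromptLab uses to derive the key from a field's name is not stated here, so `toCamelCase` encodes an assumed rule (split on non-alphanumeric characters, lowercase the first word, capitalize the rest), and `fillConfig` is a hypothetical helper.

```typescript
// Assumed rule: "Output Language" -> "outputLanguage".
function toCamelCase(fieldName: string): string {
  const words = fieldName.split(/[^A-Za-z0-9]+/).filter((w) => w.length > 0);
  return words
    .map((w, i) => {
      const lower = w.toLowerCase();
      return i === 0 ? lower : lower.charAt(0).toUpperCase() + lower.slice(1);
    })
    .join("");
}

// Replaces {{config:key}} with the matching field value; unknown keys are left as-is.
function fillConfig(prompt: string, fields: Record<string, string | number | boolean>): string {
  const byKey = new Map<string, string>();
  for (const [label, value] of Object.entries(fields)) {
    byKey.set(toCamelCase(label), String(value));
  }
  return prompt.replace(
    /\{\{config:([A-Za-z0-9]+)\}\}/g,
    (match: string, key: string) => byKey.get(key) ?? match
  );
}

const configured = fillConfig("Write {{config:wordCount}} words in {{config:outputLanguage}}.", {
  "Word Count": 150,
  "Output Language": "French",
});
console.log(configured); // Write 150 words in French.
```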

Using the "PromptLab Chat" command, you can chat with AI while making use of features like placeholders and selected file contents. Chats are preserved for later reference or continuation, and you can customize each chat's name, icon, color, and other settings. Chats can have "Context Data" associated with them, ensuring that the LLM stays aware of the files, websites, and other information relevant to your conversation. Within a chat's settings, you can view various statistics highlighting how you've interacted with the AI, and you can export the chat's contents (including the statistics) to JSON for portability.

When using PromptLab Chat, or any command that uses a chat view, you can choose to enable autonomous agent features by checking the "Allow AI To Run Commands" checkbox. This will allow the AI to run PromptLab commands on your behalf, supplying input as needed, in order to answer your queries. For example, if you ask the AI "What's the latest news?", it might run the "Recent Headlines From 68k News" command to fulfil your request, then return the results to you. This feature is disabled by default, and can be enabled or disabled at any time.

PromptLab is now available on the Raycast extensions store! Download it now.

Alternatively, you can install the extension manually from this repository by following the instructions below.

    git clone https://github.com/SKaplanOfficial/Raycast-PromptLab.git && cd Raycast-PromptLab
    npm install && npm run dev

When you first run PromptLab, you'll have the option to configure a custom model API endpoint. If you have access to Raycast AI, you can just leave everything as-is, unless you have a particular need for a different model. You can, of course, adjust the configuration via the Raycast preferences at any time.

To use any arbitrary endpoint, put the endpoint URL in the Model Endpoint preference field and provide your API Key alongside the corresponding Authorization Type. Then, specify the Input Schema in JSON notation, using {prompt} to indicate where PromptLab should input its prompt. Alternatively, you can specify {basePrompt} and {input} separately, for example if you want to provide content for the user and system roles separately when using the OpenAI API. Next, specify the Output Key of the output text within the returned JSON object. If the model endpoint returns a string, rather than a JSON object, leave this field empty. Finally, specify the Output Timing of the model endpoint. If the model endpoint returns the output immediately, select Synchronous. If the model endpoint returns the output asynchronously, select Asynchronous.
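The Input Schema step can be pictured as a template fill followed by JSON parsing. The sketch below is one way this might work, not a description of PromptLab's internals: `buildRequestBody` and `jsonEscape` are hypothetical helpers, and the schema string is an illustrative example that uses {basePrompt} and {input} for the system and user roles. Values are JSON-escaped before insertion so that quotes and newlines in a prompt do not break the schema.

```typescript
// Escape text so it can sit inside a JSON string literal without breaking the schema.
function jsonEscape(text: string): string {
  return JSON.stringify(text).slice(1, -1);
}

// Fill {prompt}, {basePrompt}, and {input} in a single pass, then parse the result.
function buildRequestBody(inputSchema: string, values: Record<string, string>): unknown {
  const filledSchema = inputSchema.replace(
    /\{(prompt|basePrompt|input)\}/g,
    (match: string, key: string) => {
      const value = values[key];
      return value === undefined ? match : jsonEscape(value);
    }
  );
  return JSON.parse(filledSchema);
}

const schema =
  '{ "model": "gpt-4", "messages": [' +
  '{"role": "system", "content": "{basePrompt}"}, ' +
  '{"role": "user", "content": "{input}"}] }';

const requestBody = buildRequestBody(schema, {
  basePrompt: "Summarize the text.",
  input: 'He said "hi"\nand left.',
});
console.log(JSON.stringify(requestBody, null, 2));
```

Filling all markers in one pass means that text inserted for one marker is never rescanned, so a prompt that happens to contain the literal text {input} is passed through unchanged.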

To use Anthropic's Claude API as the model endpoint, configure the extension as follows:

| Preference Name | Value |
|---|---|
| Model Endpoint | https://api.anthropic.com/v1/complete |
| API Authorization Type | X-API-Key |
| API Key | Your API key |
| Input Schema | { "prompt": "\n\nHuman: {prompt}\n\nAssistant: ", "model": "claude-instant-v1-100k", "max_tokens_to_sample": 300, "stop_sequences": ["\n\nHuman:"], "stream": true } |
| Output Key Path | completion |
| Output Timing | Asynchronous |

To use the OpenAI API as the model endpoint, configure the extension as follows:

| Preference Name | Value |
|---|---|
| Model Endpoint | https://api.openai.com/v1/chat/completions |
| API Authorization Type | Bearer Token |
| API Key | Your API key |
| Input Schema | { "model": "gpt-4", "messages": [{"role": "user", "content": "{prompt}"}], "stream": true } |
| Output Key Path | choices[0].delta.content |
| Output Timing | Asynchronous |
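An Output Key Path such as choices[0].delta.content or completion names where the text sits inside each returned JSON object. The following sketch shows one way such a path could be resolved. `getByPath` is a hypothetical helper, the sample objects are hand-written stand-ins for endpoint responses, and the handling of an empty path is an assumption based on the instruction to leave the field empty when the endpoint returns a plain string.

```typescript
// Resolve a dotted path with optional [index] segments against parsed JSON.
function getByPath(data: unknown, path: string): unknown {
  // Empty path: the endpoint returned the text directly.
  if (path === "") return data;
  // "choices[0].delta.content" -> ["choices", "0", "delta", "content"]
  const tokens = path.match(/[^.\[\]]+/g) ?? [];
  let current: unknown = data;
  for (const token of tokens) {
    if (current === null || typeof current !== "object") return undefined;
    current = (current as Record<string, unknown>)[token];
  }
  return current;
}

// Hand-written samples shaped like a streamed chat chunk and a completion object.
const chatChunk = { choices: [{ delta: { content: "Hel" } }] };
const completionChunk = { completion: " Hi there" };

console.log(getByPath(chatChunk, "choices[0].delta.content")); // Hel
console.log(getByPath(completionChunk, "completion")); //  Hi there
console.log(getByPath("plain text", "")); // plain text
```

Returning `undefined` for a path that does not exist, instead of throwing, lets a caller skip chunks that carry no text.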

If you encounter any issues with the extension, you can try the following steps to resolve them:

- Make sure you're running the latest version of Raycast and PromptLab. I'm always working to improve the extension, so it's possible that your issue has already been fixed.

- If you're having trouble with a command not outputting the desired response, try adjusting the command's configuration. You might just need to make small adjustments to the wording of the prompt. See the Useful Resources section below for help with prompt engineering. You can also try adjusting the included information settings to add or remove context from the prompt and guide the AI towards the desired response.

- If you're having trouble with PromptLab Chat responding in unexpected ways, make sure the chat settings are configured correctly. If you are trying to reference selected files, you need to enable "Use Selected Files As Context". Likewise, to run other PromptLab commands automatically, you need to enable "Allow AI To Run Commands". To have the AI remember information about your conversation, you'll need to enable "Use Conversation As Context". Having multiple of these settings enabled can sometimes cause unexpected behavior, so try disabling them one at a time to see if that resolves the issue.

- Check the PromptLab Wiki to see if a solution to your problem is provided there.

- If you're still having trouble, create a new issue on GitHub with a detailed description of the issue and any relevant screenshots or information. I'll do my best to help you out!

Contributions are welcome! Please see the contributing guidelines for more information.

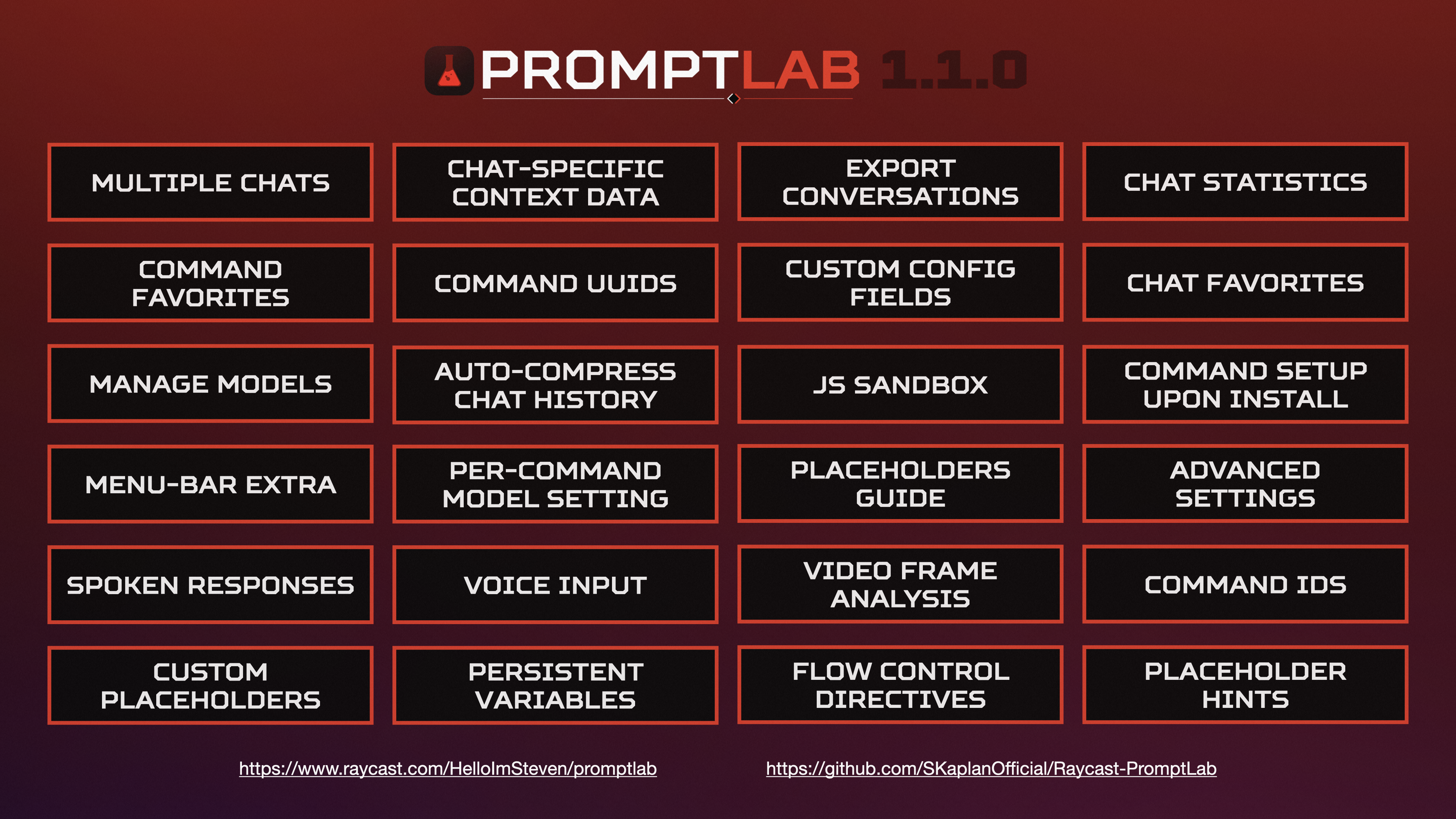

- Create, Edit, and Run Custom Commands

- Detail, List, Chat, and No-View Command Types

- Placeholders in Prompts

- Get Content of Selected Files

- Extract Text, Subjects, QR Codes, etc. from Images

- Import/Export Commands

- Run AppleScript or Bash Scripts On Model Response

- PromptLab Chat with Autonomous Command Execution Capability

- Upload & Download Commands To/From PromptLab Command Store

- Custom Model Endpoints with Synchronous or Asynchronous Responses

- Save & Run Commands as Quicklinks

- Video Feature Extraction example

- Switch Between Chats & Export Chat History example

- Auto-Compress Chat History

- Chat Settings

- Command Setup On Install

- Spoken Responses

- Voice Input

- New Placeholders

- Persistent Variables

- Flow Control Directives

- Configuration Placeholders

- JS Sandbox

- Manage Models example

- Menu Bar Extra example

- Placeholders Guide

- Record Previous Runs of a Command and Use Them as Input

- Saved Responses

- Command Templates

- Improved Chat UI

- TF-IDF

- Autonomous Web Search

- LangChain Integration

- Dashboard

- Chat Merging

- GPT Function Calling

- New Placeholders

| Link | Category | Description |
|---|---|---|
| Best practices for prompt engineering with OpenAI API | Prompt Engineering | Strategies for creating effective ChatGPT prompts, from OpenAI itself |
| Brex's Prompt Engineering Guide | Prompt Engineering | A guide to prompt engineering, with examples and in-depth explanations |
| Techniques to improve reliability | Prompt Engineering | Strategies for improving reliability of GPT responses |