This repository is the official implementation of the paper:

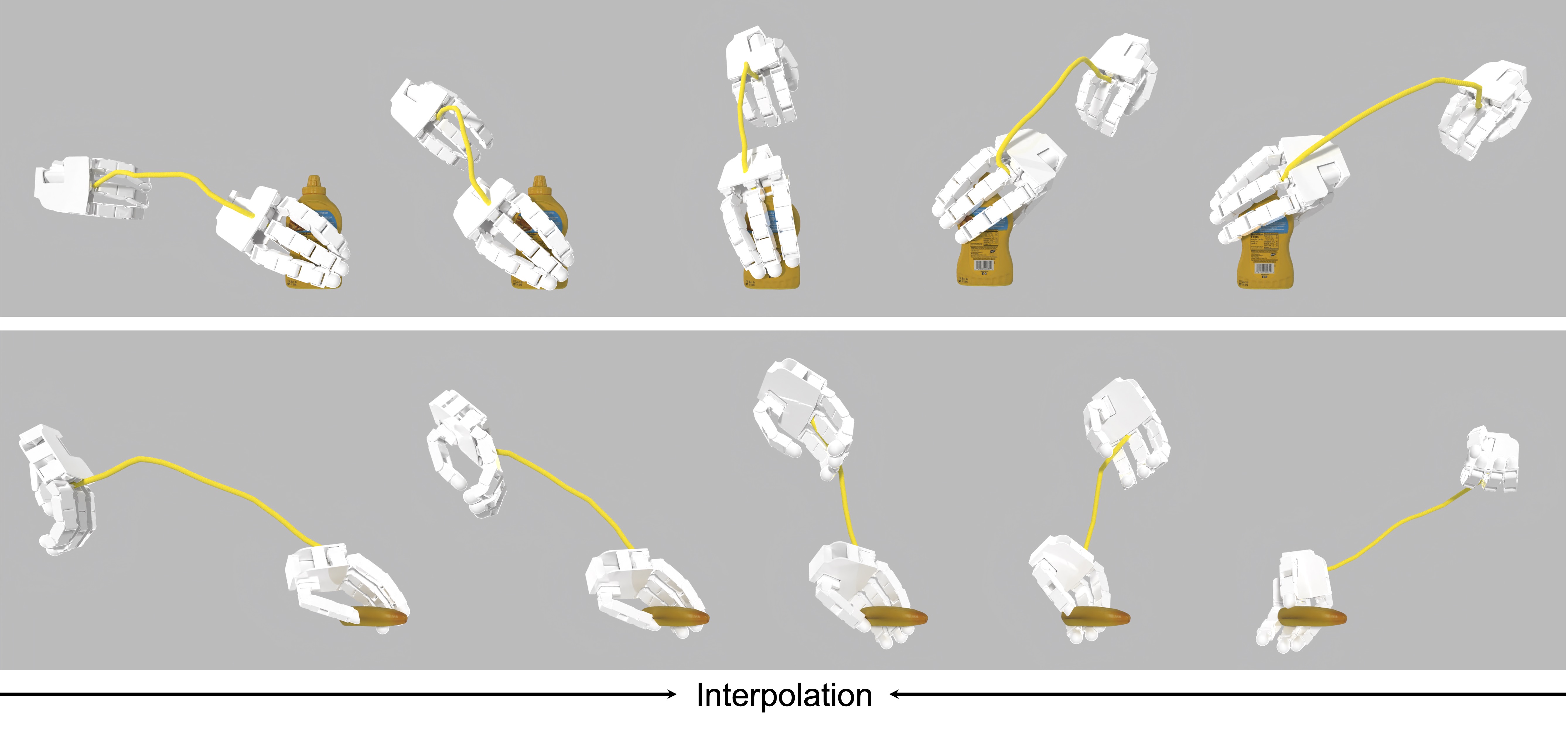

Learning Continuous Grasping Function with a Dexterous Hand from Human Demonstrations

Jianglong Ye*, Jiashun Wang*, Binghao Huang, Yuzhe Qin, Xiaolong Wang

RA-L 2023, IROS 2023

Project Page / ArXiv / Video

(Our code has been tested on Ubuntu 20.04, python 3.10, torch 1.13.1, CUDA 11.7 and RTX 3090)

To set up the environment, follow these steps:

conda create -n cgf python=3.10 -y && conda activate cgf

conda install pytorch==1.13.1 torchvision==0.14.1 torchaudio==0.13.1 pytorch-cuda=11.7 -c pytorch -c nvidia -y

pip install six numpy==1.23.1 tqdm pyyaml scipy opencv-python trimesh einops lxml transforms3d fvcore viser==0.1.34

pip install git+https://github.com/hassony2/chumpy.git

pip install git+https://github.com/hassony2/manopth

pip install git+https://github.com/facebookresearch/[email protected]

# Installing pytorch3d from source is usually more robust. For pytorch 1.13.1, CUDA 11.7, and python 3.10, the following command *may* work instead:

# pip install --no-index --no-cache-dir pytorch3d -f https://dl.fbaipublicfiles.com/pytorch3d/packaging/wheels/py310_cu117_pyt1131/download.html

pip install git+https://github.com/UM-ARM-Lab/[email protected]

We provide processed data for training CGF, which can be downloaded from here. Unzip it under PROJECT_ROOT/data/. The final file structure should look like this:

PROJECT_ROOT
├── ...
└── data
    ├── ...
    ├── geometry
    ├── mano_v1_2_models
    ├── original_root
    └── processed
        ├── ...
        ├── meta.json
        ├── pose_m_aug.npz
        └── pose_m_object_coord.npz
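Before training, it can help to confirm that the entries named in the tree above are in place. The following is a minimal sketch and not part of the repository: the helper name `find_missing_data` is hypothetical, and it checks only the entries shown explicitly above (whatever sits behind the `...` entries is unknown and is not checked).

```python
from pathlib import Path

# Entries named in the tree above, relative to PROJECT_ROOT/data.
# Anything hidden behind "..." is unknown and therefore not checked.
EXPECTED_ENTRIES = [
    "geometry",
    "mano_v1_2_models",
    "original_root",
    "processed/meta.json",
    "processed/pose_m_aug.npz",
    "processed/pose_m_object_coord.npz",
]


def find_missing_data(project_root):
    """Return the expected entries that do not exist under project_root/data."""
    data_dir = Path(project_root) / "data"
    return [entry for entry in EXPECTED_ENTRIES if not (data_dir / entry).exists()]


if __name__ == "__main__":
    # Assumes the current working directory is PROJECT_ROOT.
    missing = find_missing_data(".")
    if missing:
        print("Missing under data/:", ", ".join(missing))
    else:
        print("All expected data entries were found.")
```

An empty result only means the listed paths exist; it does not validate their contents.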

Alternatively, you can prepare the data by following the instructions below.

- Download DexYCB data from the official website to DEXYCB_DATA_ROOT and organize the data as follows:

DEXYCB_DATA_ROOT
├── 20200709-subject-01/
├── 20200813-subject-02/
├── ...
├── calibration/
└── models/

- Run the following scripts to process the data:

python scripts/prepare_dexycb.py --data_root DEXYCB_DATA_ROOT

python scripts/retargeting.py --mano_side left

python scripts/retargeting.py --mano_side right

The processed data will be saved to PROJECT_ROOT/data/processed. Retargeting results can be visualized by running the following command:

python scripts/visualize_retargeting.py
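The three processing commands above can also be driven from one Python script so that a failure in an early step stops the later ones. This is a minimal sketch, assuming the commands are meant to run in the listed order from PROJECT_ROOT; the function names `build_preprocessing_commands` and `run_preprocessing` are hypothetical and do not exist in the repository.

```python
import subprocess
import sys


def build_preprocessing_commands(dexycb_root):
    """Return the three processing commands listed above, in order."""
    python = sys.executable  # run the scripts with the current interpreter
    return [
        [python, "scripts/prepare_dexycb.py", "--data_root", str(dexycb_root)],
        [python, "scripts/retargeting.py", "--mano_side", "left"],
        [python, "scripts/retargeting.py", "--mano_side", "right"],
    ]


def run_preprocessing(dexycb_root, project_root="."):
    """Run each command from project_root, stopping at the first failure."""
    for command in build_preprocessing_commands(dexycb_root):
        print("Running:", " ".join(command))
        # check=True raises CalledProcessError if the script exits non-zero.
        subprocess.run(command, cwd=project_root, check=True)


# Example (replace the path with your own DEXYCB_DATA_ROOT):
# run_preprocessing("/path/to/DEXYCB_DATA_ROOT")
```

Using `sys.executable` keeps the scripts in the same conda environment as the caller, which matters if several Python installations are on the machine.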

To train the CGF model, run the following command:

# cd PROJECT_ROOT

python scripts/main.py

We provide a pre-trained model, which can be downloaded from here. Unzip it under PROJECT_ROOT/output/.

To sample from the trained CGF model, run the following command (replace TIMESTAMP with the timestamp of the checkpoint in the PROJECT_ROOT/output directory):

# cd PROJECT_ROOT

python scripts/main.py --mode sample --ts TIMESTAMP

The sampled results will be saved to PROJECT_ROOT/output/TIMESTAMP/sample. They can be visualized by running the following command:

python scripts/visualize_sampling.py --ts TIMESTAMP

To evaluate the smoothness of the sampled grasps (Table 1 in the paper), run the following command:

python scripts/eval_generation.py --ts TIMESTAMP

Note that the evaluation results may vary slightly due to the randomness in the sampling process.
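The sampling, visualization, and evaluation commands all take a TIMESTAMP. A small helper can look up the most recent run instead of copying the name by hand. This is a sketch under one assumption: each run lives in its own directory under PROJECT_ROOT/output and the directory name is the TIMESTAMP, as the path PROJECT_ROOT/output/TIMESTAMP/sample suggests. The function name `latest_run_name` is hypothetical.

```python
from pathlib import Path


def latest_run_name(project_root="."):
    """Return the name of the most recently modified directory under output/.

    Assumption: each run is stored in its own directory under
    PROJECT_ROOT/output, and that directory's name is the TIMESTAMP.
    Returns None when output/ is missing or contains no directories.
    """
    output_dir = Path(project_root) / "output"
    if not output_dir.is_dir():
        return None
    run_dirs = [path for path in output_dir.iterdir() if path.is_dir()]
    if not run_dirs:
        return None
    # Pick by modification time rather than by name, since the exact
    # timestamp format is not specified here.
    newest = max(run_dirs, key=lambda path: path.stat().st_mtime)
    return newest.name
```

Note that an unzipped pre-trained model directory under output/ is also a candidate, so check the returned name before passing it to `--ts`.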

Our simulation environment is adapted from dex-hand-teleop. To set up the simulation environment, follow these steps:

pip install sapien==2.2.2 gym open3d

To run the simulation, run the following command (replace SAPIEN_SAMPLE_DATA_PATH with the path to a sample in the PROJECT_ROOT/output/TIMESTAMP/sample/result_filter_sapien directory):

python scripts/sim.py --data_path SAPIEN_SAMPLE_DATA_PATH

@article{ye2023learning,
  title={Learning continuous grasping function with a dexterous hand from human demonstrations},
  author={Ye, Jianglong and Wang, Jiashun and Huang, Binghao and Qin, Yuzhe and Wang, Xiaolong},
  journal={IEEE Robotics and Automation Letters},
  year={2023}
}