- Introduction

- Performance

- Building Instruction

- Usage

- Parameters used in Our Paper

- Performance on Taobao's E-commerce Data

- Reference

- TODO

- License

NSG is a graph-based approximate nearest neighbor search (ANNS) algorithm. It provides a flexible and efficient solution for metric-free, large-scale ANNS on dense real vectors. It implements the algorithm of our PVLDB paper, "Fast Approximate Nearest Neighbor Search With The Navigating Spreading-out Graphs". NSG has been integrated into the search engine of Taobao (Alibaba Group) for billion-scale ANNS in e-commerce scenarios.

- SIFT1M and GIST1M

- Synthetic datasets: RAND4M and GAUSS5M

  - RAND4M: 4 million 128-dimension vectors sampled from a uniform distribution over [-1, 1].

  - GAUSS5M: 5 million 128-dimension vectors sampled from a Gaussian distribution N(0,3).

- kGraph

- FANNG : FANNG: Fast Approximate Nearest Neighbour Graphs

- HNSW (code) : Efficient and robust approximate nearest neighbor search using Hierarchical Navigable Small World graphs

- DPG (code) : Approximate Nearest Neighbor Search on High Dimensional Data --- Experiments, Analyses, and Improvement (v1.0)

- EFANNA (code) : EFANNA: An Extremely Fast Approximate Nearest Neighbor Search Algorithm Based on kNN Graph

- NSG-naive: a designed baseline; please refer to our PVLDB paper.

- NSG: this project; please refer to our PVLDB paper.

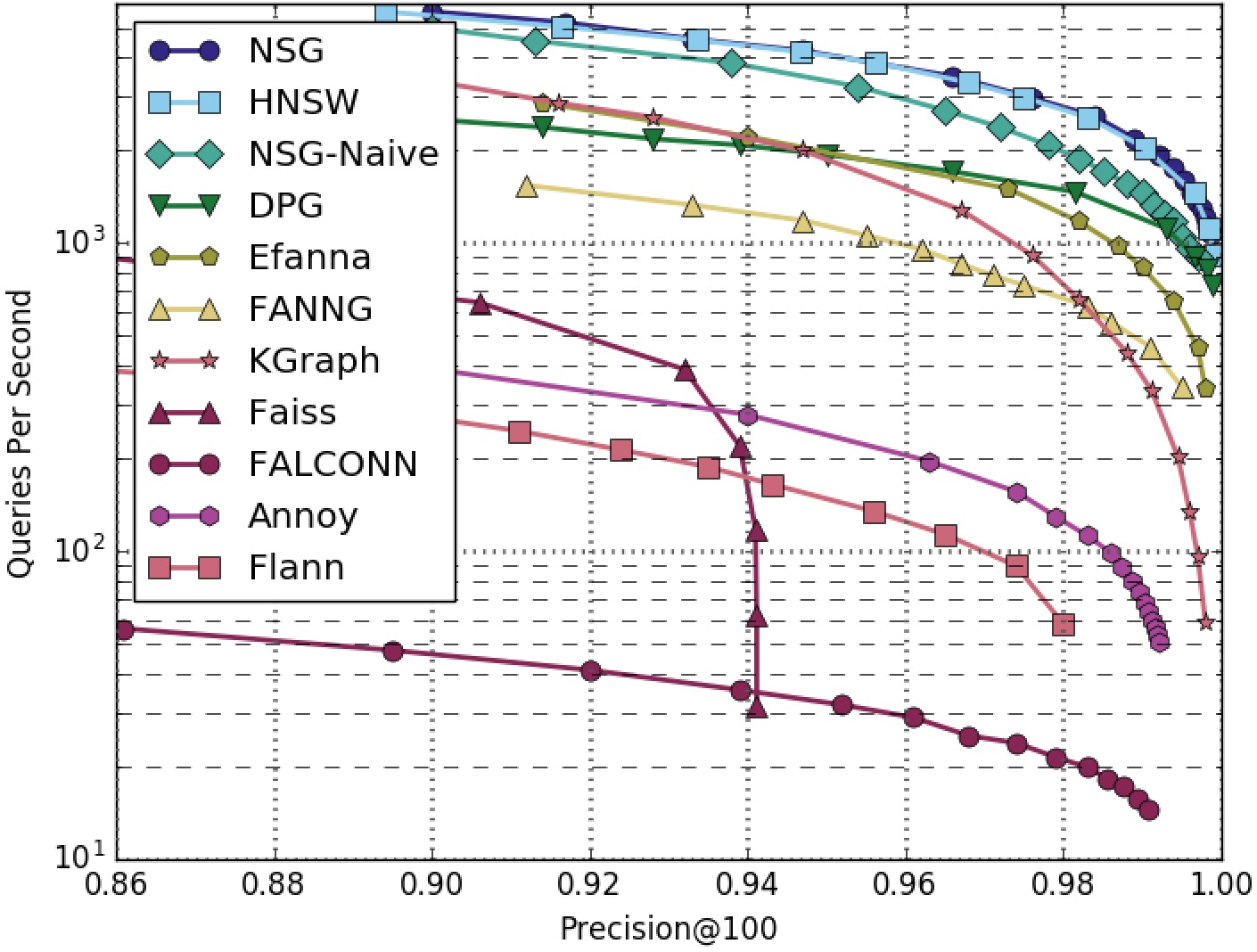

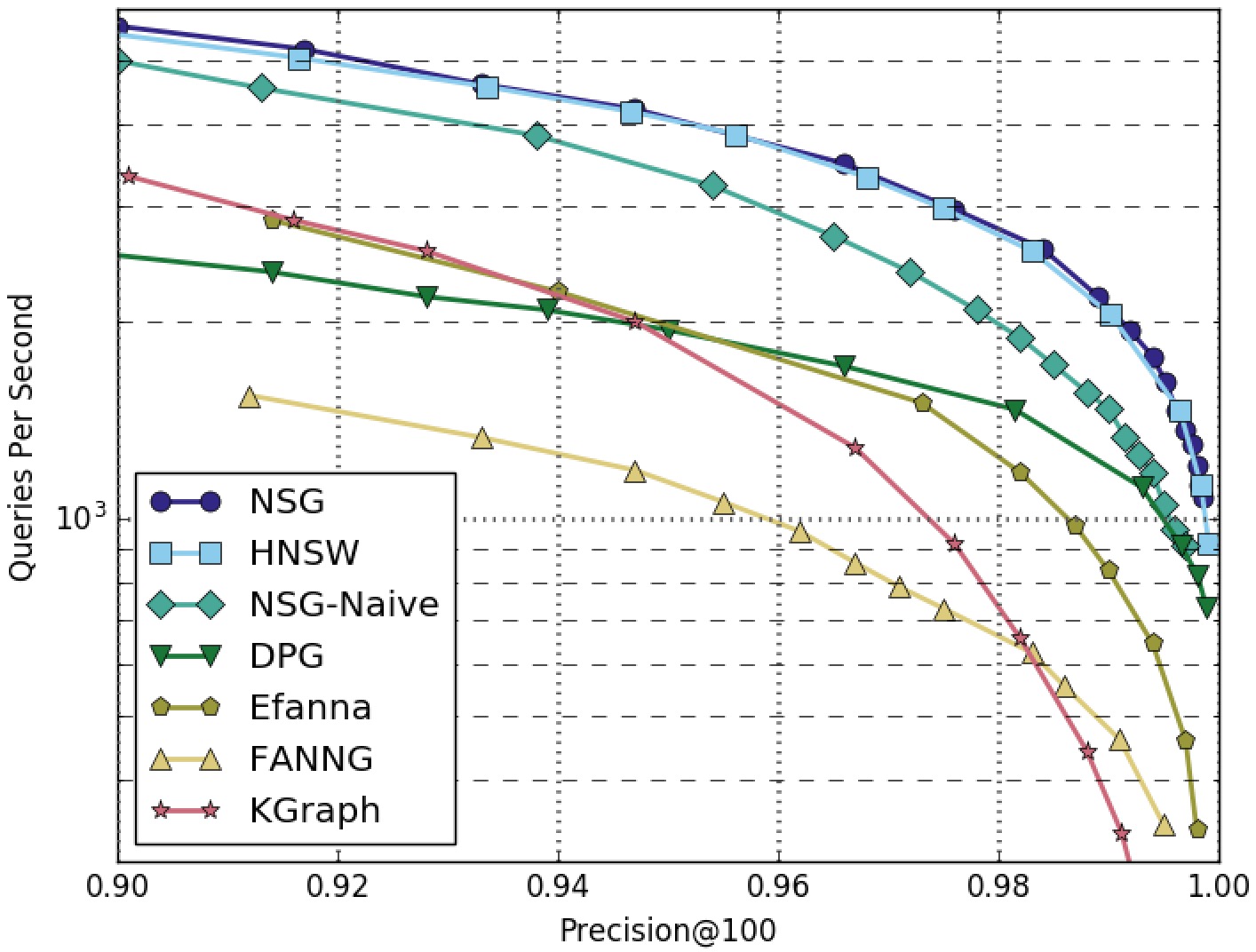

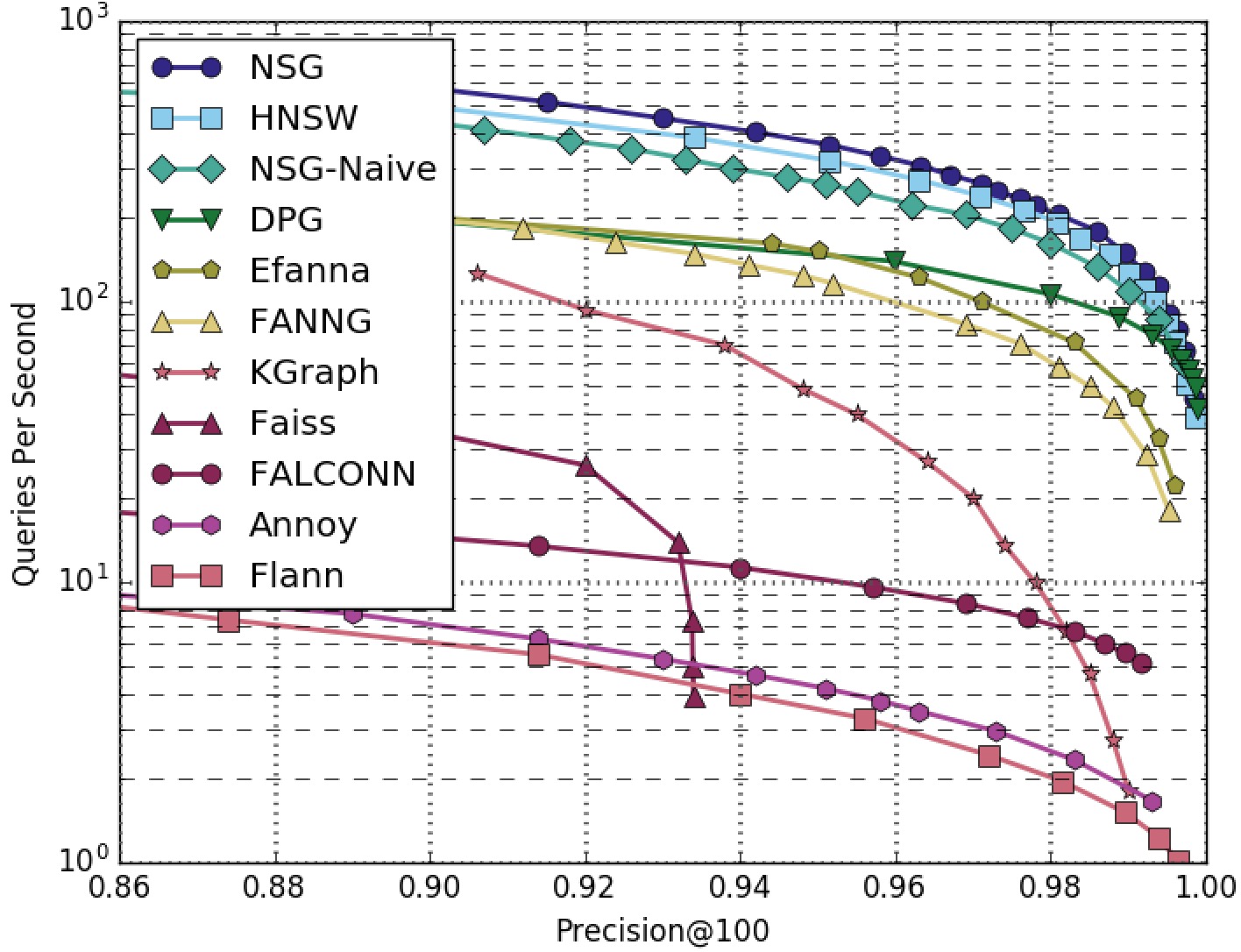

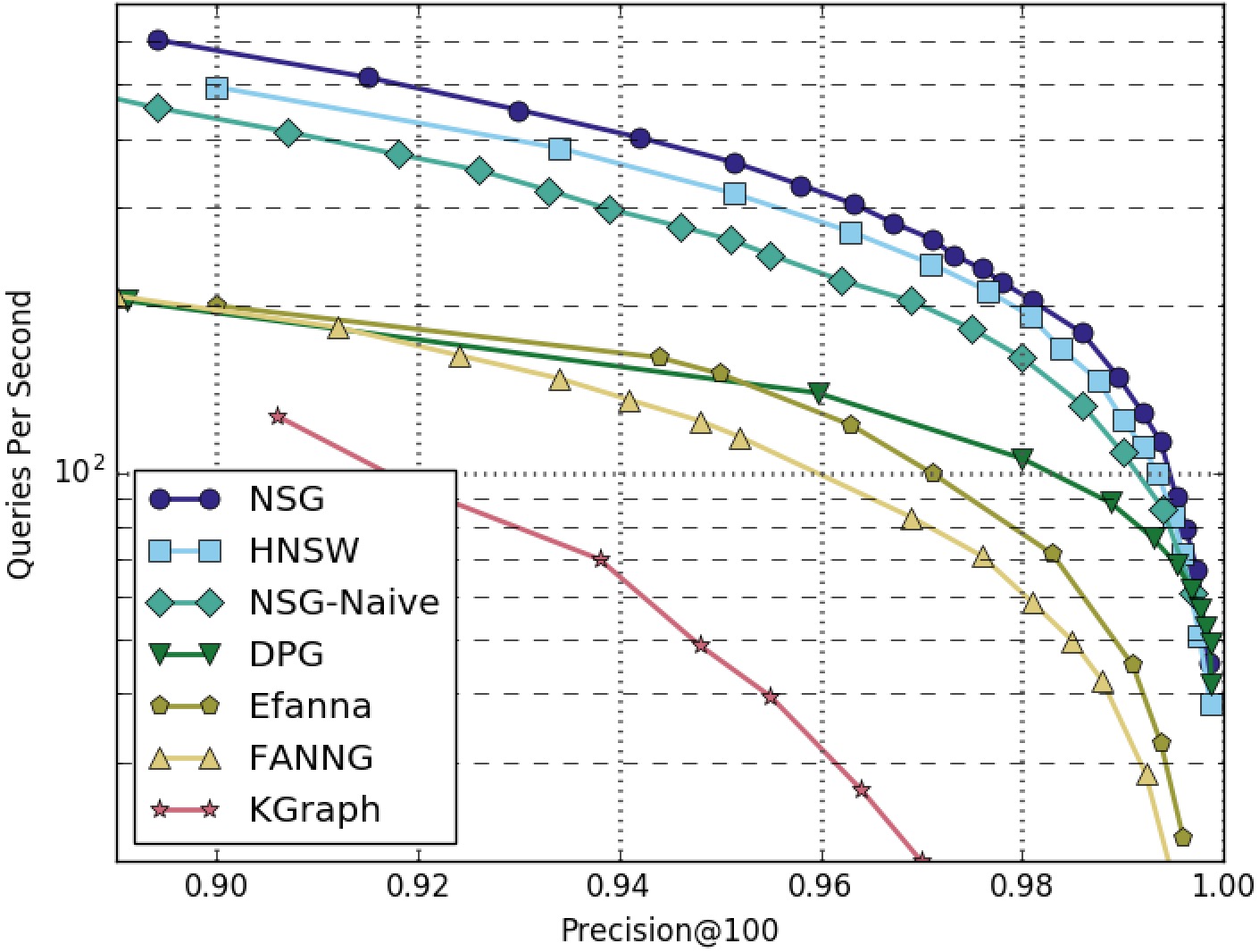

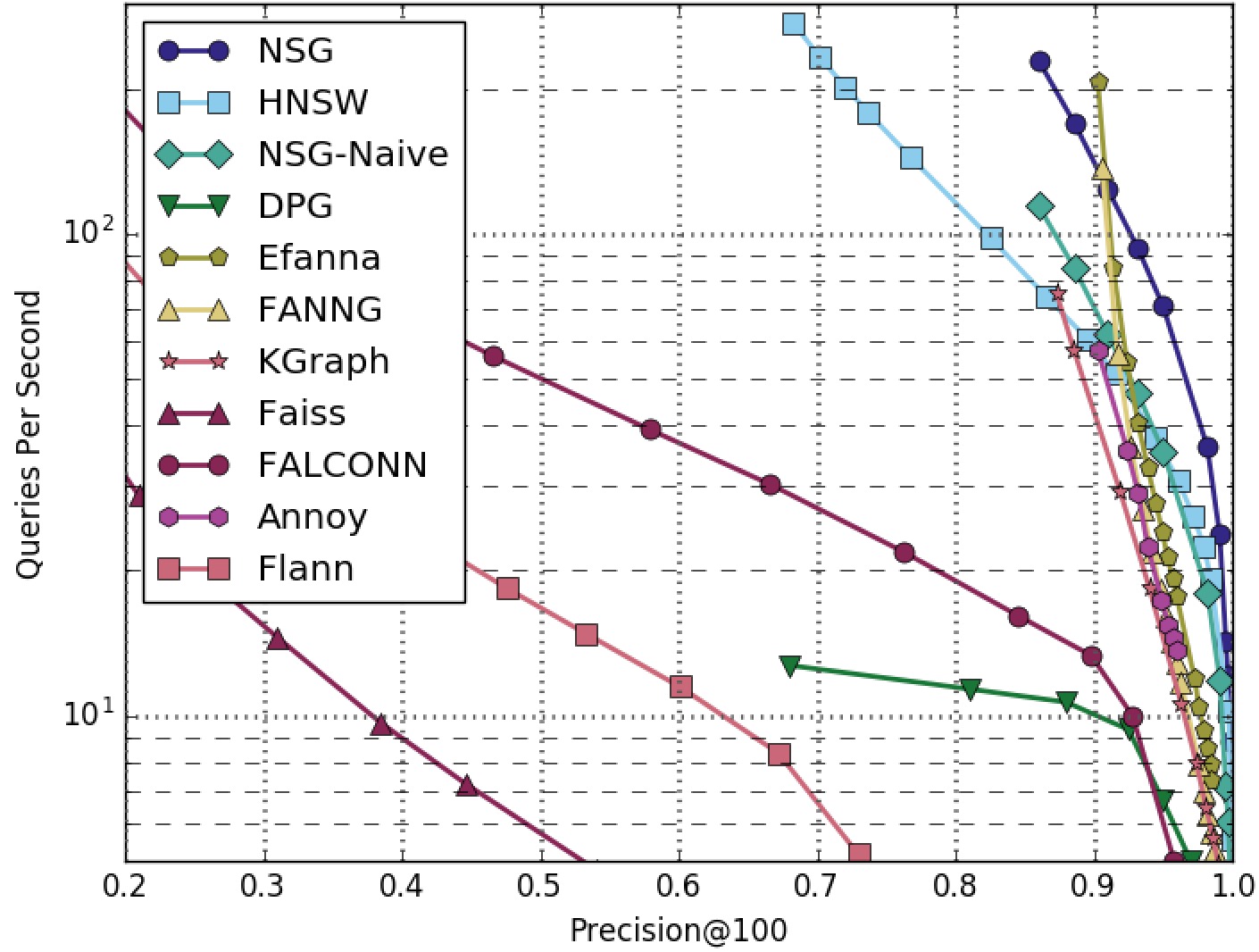

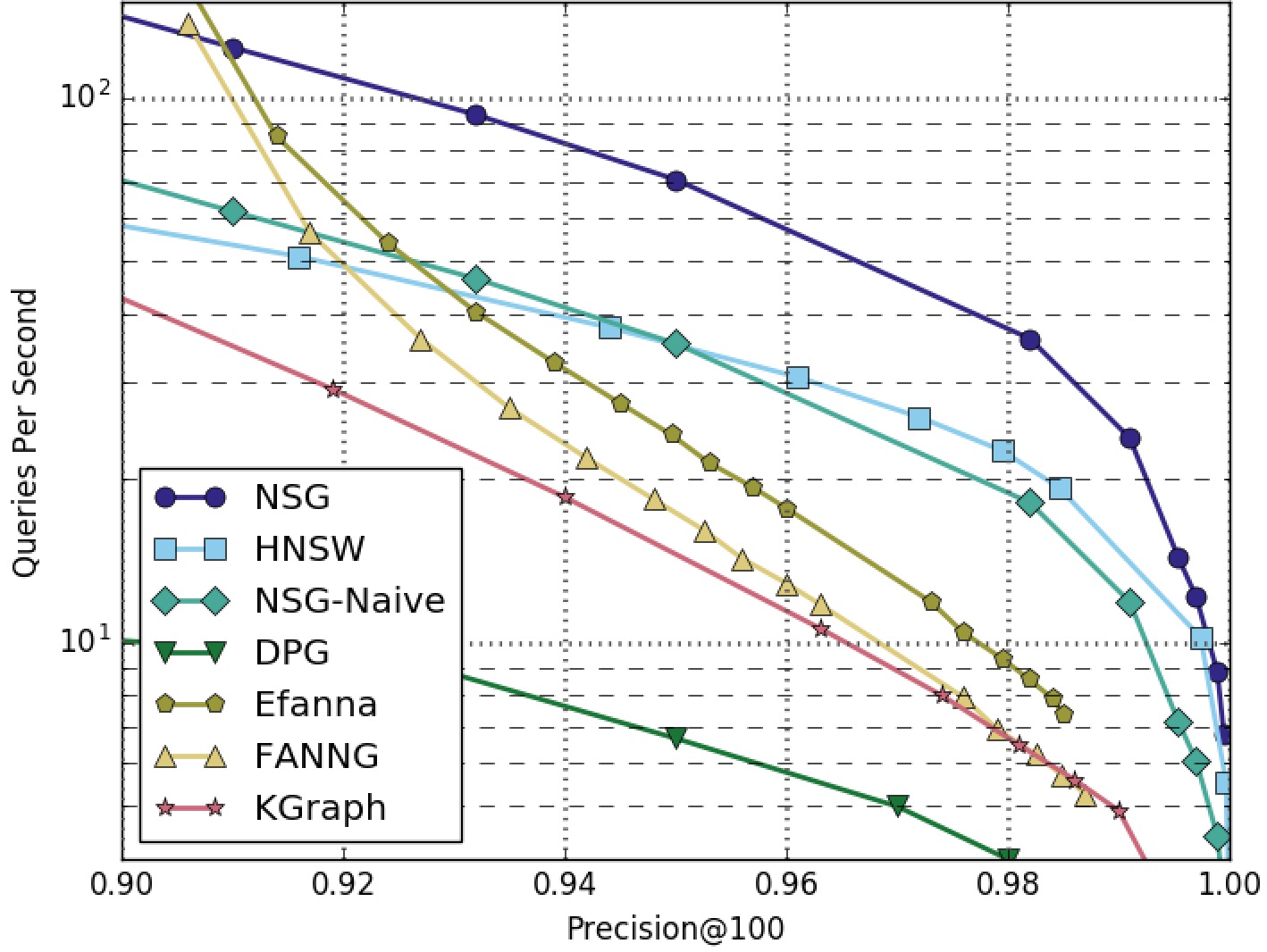

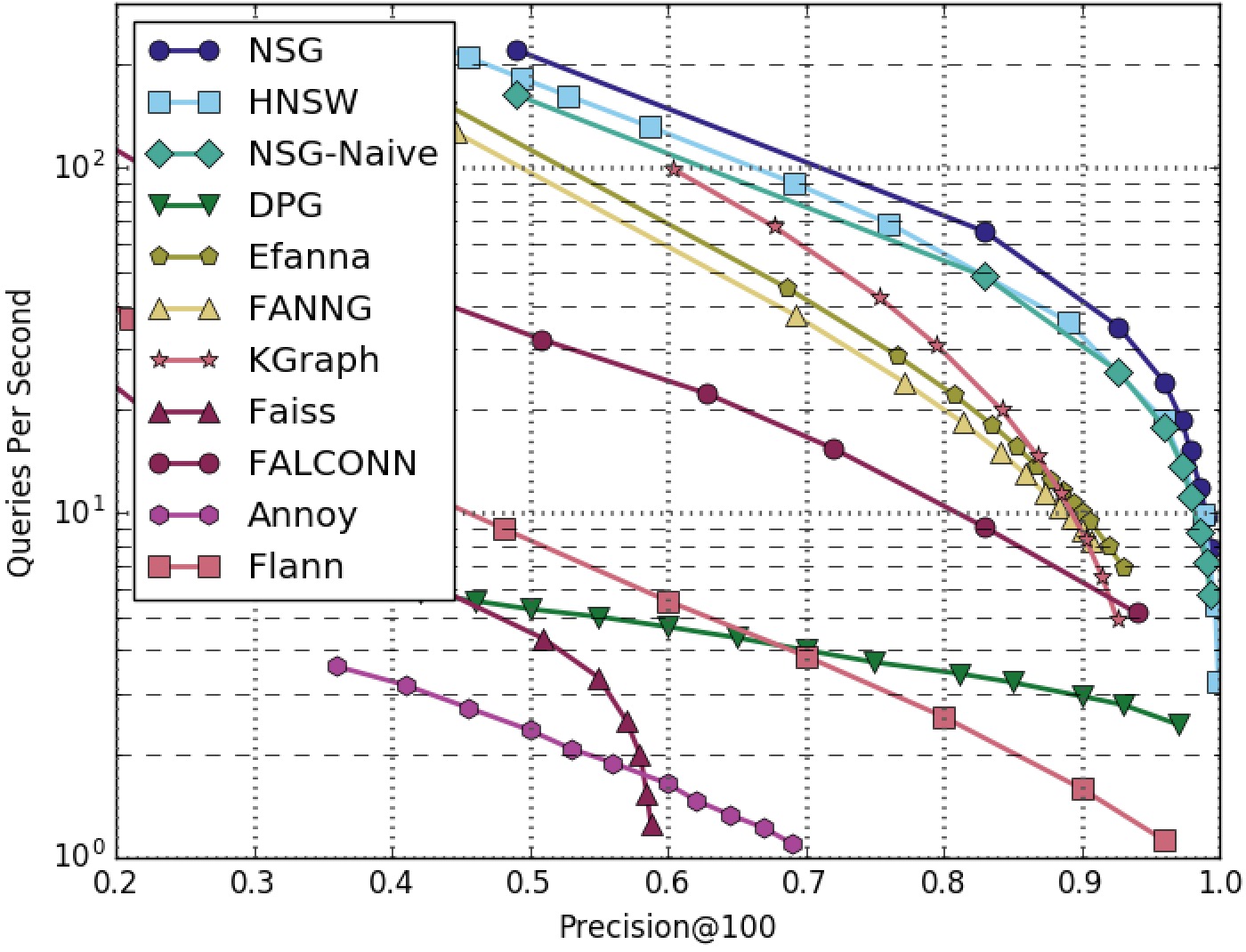

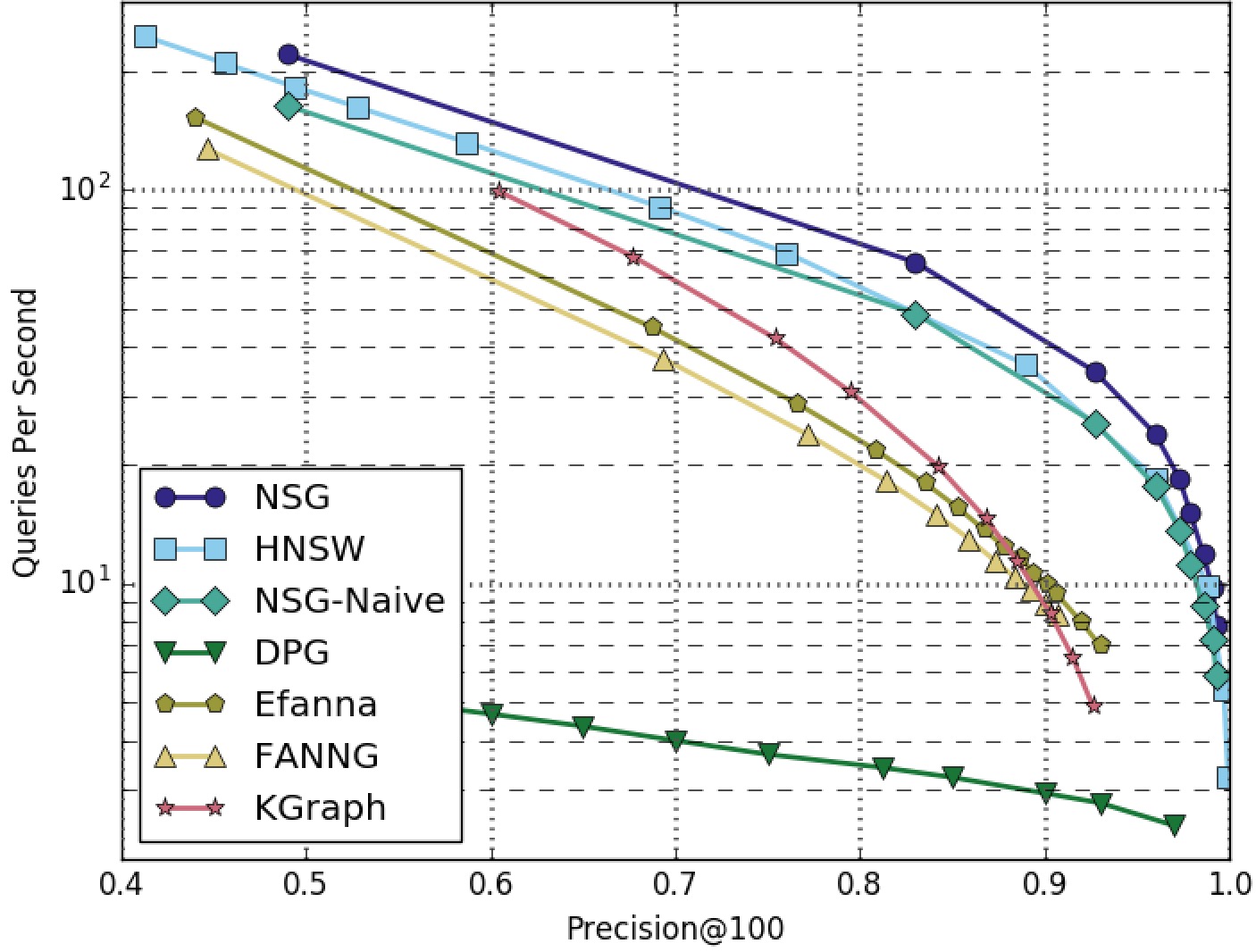

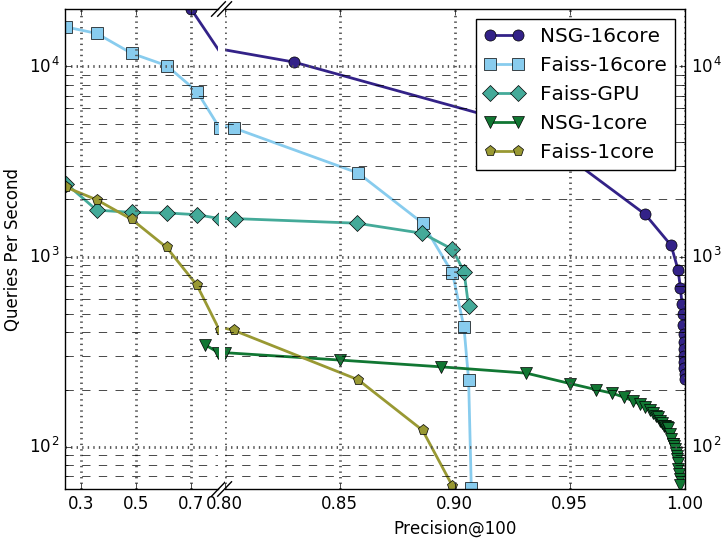

NSG achieved the best search performance among all the compared algorithms on all the four datasets. Among all the graph-based algorithms, NSG has the smallest index size and the best search performance.

NOTE: The performance was tested without parallelism (search one query at a time and no multi-threads)

SIFT1M-100NN-All-Algorithms

SIFT1M-100NN-Graphs-Only

GIST1M-100NN-All-Algorithms

GIST1M-100NN-Graphs-Only

RAND4M-100NN-All-Algorithms

RAND4M-100NN-Graphs-Only

GAUSS5M-100NN-All-Algorithms

GAUSS5M-100NN-Graphs-Only

DEEP1B-100NN

- GCC 4.9+ with OpenMP

- CMake 2.8+

- Boost 1.55+

- TCMalloc

IMPORTANT NOTE: This code uses AVX-256 instructions for fast distance computation, so your machine MUST support them. You can check this with `cat /proc/cpuinfo | grep avx2`.
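The same check can be scripted, for example before kicking off a build on a remote machine. The following is a minimal sketch, not part of NSG; the function names are hypothetical, and it assumes the Linux `/proc/cpuinfo` layout in which a `flags` line lists the CPU features.

```python
def cpu_flags(cpuinfo_text):
    """Collect the feature flags listed in /proc/cpuinfo-style text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # Only lines of the form "flags : fpu sse ... avx2 ..." are relevant.
        if sep and key.strip() == "flags":
            flags.update(value.split())
    return flags


def supports_avx2(cpuinfo_text):
    """Return True if the avx2 flag appears in the given cpuinfo text."""
    return "avx2" in cpu_flags(cpuinfo_text)


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            print("AVX2 supported:", supports_avx2(f.read()))
    except OSError:
        print("/proc/cpuinfo is not available on this system")
```

Matching whole flag names avoids false positives from substrings, which a plain `grep` does not guard against.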

- Install Dependencies:

$ sudo apt-get install g++ cmake libboost-dev libgoogle-perftools-dev

- Compile NSG:

$ git clone https://github.com/ZJULearning/nsg.git

$ cd nsg/

$ mkdir build/ && cd build/

$ cmake -DCMAKE_BUILD_TYPE=Release ..

$ make -j

- Build Docker Image

$ docker build -t nsg .

- Run and log into Docker container

$ docker run -it --name nsg nsg bash

You can modify the Dockerfile under the project as you need.

Each of the main interfaces and classes has corresponding test code under the `tests/` directory.

To use NSG for ANNS, an NSG index must be built first. Here are the instructions for building NSG.

Firstly, we need to prepare a kNN graph.

We suggest you use our efanna_graph to build this kNN graph. But you can also use any alternatives you like, such as KGraph or faiss.
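For readers unfamiliar with the term, a kNN graph links every point to its k nearest neighbors. The sketch below builds an exact one by brute force on a toy dataset, purely to illustrate the structure. It is not how efanna_graph works (efanna_graph targets large datasets, where a quadratic scan like this would be impractical), and it does not produce the graph file that `test_nsg_index` reads. The function names are hypothetical.

```python
def squared_l2(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def brute_force_knn_graph(points, k):
    """Return, for each point, the ids of its k nearest other points.

    This is an O(n^2) scan, suitable only for tiny illustrative inputs.
    """
    graph = []
    for i, p in enumerate(points):
        # Distance from point i to every other point, paired with its id.
        dists = [(squared_l2(p, q), j) for j, q in enumerate(points) if j != i]
        dists.sort()
        graph.append([j for _, j in dists[:k]])
    return graph


if __name__ == "__main__":
    toy = [(0.0, 0.0), (1.0, 0.0), (5.0, 0.0), (6.0, 0.0)]
    print(brute_force_knn_graph(toy, k=2))
```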

Secondly, we will convert the kNN graph to our NSG index.

You can use our demo code to perform this conversion as follows:

$ cd build/tests/

$ ./test_nsg_index DATA_PATH KNNG_PATH L R C NSG_PATH

- `DATA_PATH` is the path of the base data in `fvecs` format.

- `KNNG_PATH` is the path of the pre-built kNN graph from the previous step.

- `L` controls the quality of the NSG; the larger the better.

- `R` controls the index size of the graph; the best `R` is related to the intrinsic dimension of the dataset.

- `C` controls the maximum candidate pool size during NSG construction.

- `NSG_PATH` is the path of the generated NSG index.

Here are the instructions for searching with an NSG index.

You can use our demo code to perform kNN searching as follows:

$ cd build/tests/

$ ./test_nsg_optimized_search DATA_PATH QUERY_PATH NSG_PATH SEARCH_L SEARCH_K RESULT_PATH

- `DATA_PATH` is the path of the base data in `fvecs` format.

- `QUERY_PATH` is the path of the query data in `fvecs` format.

- `NSG_PATH` is the path of the pre-built NSG index from the previous section.

- `SEARCH_L` controls the quality of the search results; the larger the better, but slower. `SEARCH_L` cannot be smaller than `SEARCH_K`.

- `SEARCH_K` controls the number of result neighbors we want to query.

- `RESULT_PATH` is the path of the query results in `ivecs` format.
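When the search binary is driven from a script, it can help to check the `SEARCH_L >= SEARCH_K` constraint before launching it. The helper below is a hypothetical sketch: it only assembles the argument list in the order shown above, and the file names in the usage example are placeholders rather than files shipped with NSG.

```python
def build_search_command(data_path, query_path, nsg_path,
                         search_l, search_k, result_path,
                         binary="./test_nsg_optimized_search"):
    """Assemble the argument list for the search demo, validating L and K."""
    if search_k <= 0:
        raise ValueError("SEARCH_K must be positive")
    if search_l < search_k:
        raise ValueError("SEARCH_L cannot be smaller than SEARCH_K")
    return [binary, data_path, query_path, nsg_path,
            str(search_l), str(search_k), result_path]


if __name__ == "__main__":
    # Placeholder file names, for illustration only.
    cmd = build_search_command("base.fvecs", "query.fvecs", "index.nsg",
                               200, 100, "result.ivecs")
    print(" ".join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` from within `build/tests/`. Since `test_nsg_search` takes the same parameters, passing `binary="./test_nsg_search"` covers that program as well.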

There is another program in the `tests/` folder named `test_nsg_search`. Its parameters are exactly the same as those of `test_nsg_optimized_search`. `test_nsg_search` is slower than `test_nsg_optimized_search` but requires less memory. When memory consumption is critical, you can use `test_nsg_search` instead of `test_nsg_optimized_search`.

NOTE: Only data-type int32 and float32 are supported for now.

HINT: The `data_align()` function we provide is essential for the correctness of our procedure, because we use SIMD instructions such as AVX and SSE2 to accelerate numerical computation. You should use it to ensure that the dimension of your data elements (features) is aligned to a multiple of 8 or 16 int or float values. For example, if your features are of dimension 70, they should be extended to dimension 72, and the last 2 dimensions should be filled with 0 to ensure the correctness of the distance computation. This is what `data_align()` does.
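The padding arithmetic from the example above can be sketched as follows. This is an illustration of the rounding and zero-filling only, not the library's `data_align()` implementation; the function names and the `unit` parameter are hypothetical.

```python
def aligned_dim(dim, unit=8):
    """Round dim up to the next multiple of unit (e.g. 70 -> 72 for unit=8)."""
    return (dim + unit - 1) // unit * unit


def pad_vector(vec, unit=8):
    """Return a copy of vec extended with zeros to an aligned dimension."""
    padding = aligned_dim(len(vec), unit) - len(vec)
    return list(vec) + [0.0] * padding


if __name__ == "__main__":
    v = pad_vector([1.0] * 70)
    print(len(v), v[-2:])
```

Zero padding leaves Euclidean distances unchanged, because the extra coordinates contribute `(0 - 0)^2 = 0` to every pairwise distance.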

HINT: Please refer here for the description of the `fvecs`/`ivecs` format.
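As a quick orientation, the sketch below reads and writes `fvecs` data under the assumption of the commonly used layout: each vector is stored as a 4-byte little-endian integer giving its dimension, followed by that many 4-byte floats (`ivecs` uses the same layout with 4-byte integer components). The function names are hypothetical, and the linked description remains the reference.

```python
import io
import struct


def write_fvecs(stream, vectors):
    """Write vectors to a binary stream, one (dim, components) record each."""
    for vec in vectors:
        stream.write(struct.pack("<i", len(vec)))
        stream.write(struct.pack("<%df" % len(vec), *vec))


def read_fvecs(stream):
    """Read all vectors from a binary stream in the assumed fvecs layout."""
    vectors = []
    while True:
        header = stream.read(4)
        if not header:
            break  # clean end of file
        if len(header) != 4:
            raise ValueError("truncated dimension header")
        (dim,) = struct.unpack("<i", header)
        payload = stream.read(4 * dim)
        if len(payload) != 4 * dim:
            raise ValueError("truncated vector payload")
        vectors.append(list(struct.unpack("<%df" % dim, payload)))
    return vectors


if __name__ == "__main__":
    buf = io.BytesIO()
    write_fvecs(buf, [[1.0, 2.5], [-3.0, 0.25]])
    buf.seek(0)
    print(read_fvecs(buf))
```

For real files, open them in binary mode, for example `open(path, "rb")`, and pass the file object as `stream`.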

We use the following parameters to get the index in Fig. 6 of our paper.

We use efanna_graph to build the kNN graph.

- Tool: efanna_graph

- Parameters:

| Dataset | K | L | iter | S | R |
|---|---|---|---|---|---|
| SIFT1M | 200 | 200 | 10 | 10 | 100 |
| GIST1M | 400 | 400 | 12 | 15 | 100 |

- Commands:

$ efanna_graph/tests/test_nndescent sift.fvecs sift_200nn.graph 200 200 10 10 100 # SIFT1M

$ efanna_graph/tests/test_nndescent gist.fvecs gist_400nn.graph 400 400 12 15 100 # GIST1M

- Parameters:

| Dataset | L | R | C |
|---|---|---|---|
| SIFT1M | 40 | 50 | 500 |
| GIST1M | 60 | 70 | 500 |

- Commands:

$ nsg/build/tests/test_nsg_index sift.fvecs sift_200nn.graph 40 50 500 sift.nsg # SIFT1M

$ nsg/build/tests/test_nsg_index gist.fvecs gist_400nn.graph 60 70 500 gist.nsg # GIST1M

Here we also provide the pre-built kNN graph and NSG index files used in our paper's experiments.

- kNN Graph:

- SIFT1M - sift_200nn.graph

- GIST1M - gist_400nn.graph

- NSG Index:

Environments:

- CPU: Xeon E5-2630.

Single Thread Test:

- Dataset: 10,000,000 128-dimension vectors.

- Latency: 1ms (average) on 10,000 queries.

Distributed Search Test:

- Dataset: 45,000,000 128-dimension vectors.

- Distribution: randomly divide the dataset into 12 subsets and build 12 NSGs. Search in parallel and merge the results.

- Latency: 1ms (average) on 10,000 queries.
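The partition-and-merge scheme described for the distributed test can be sketched as below. This is an illustration under stated assumptions, not the production setup: the function names are hypothetical, each shard is assumed to return `(distance, global_id)` pairs from its own index, and the per-shard search itself is left out.

```python
import heapq
import random


def random_partition(num_points, num_shards, seed=0):
    """Randomly split point ids 0..num_points-1 into num_shards subsets."""
    ids = list(range(num_points))
    random.Random(seed).shuffle(ids)
    # Deal the shuffled ids round-robin so shard sizes differ by at most one.
    return [ids[s::num_shards] for s in range(num_shards)]


def merge_shard_results(shard_results, k):
    """Merge per-shard (distance, global_id) lists into the global top k."""
    all_pairs = (pair for results in shard_results for pair in results)
    return heapq.nsmallest(k, all_pairs)


if __name__ == "__main__":
    shards = random_partition(num_points=1000, num_shards=12)
    print([len(s) for s in shards])
    results = [[(0.1, 7), (0.4, 2)], [(0.2, 11), (0.3, 5)], [(0.05, 20)]]
    print(merge_shard_results(results, k=3))
```

Because every point lives in exactly one shard, taking the k smallest distances over the union of the per-shard top-k lists gives the same answer as ranking all shard candidates together.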

Reference to cite when you use NSG in a research paper:

@article{FuNSG17,
  author  = {Cong Fu and Chao Xiang and Changxu Wang and Deng Cai},
  title   = {Fast Approximate Nearest Neighbor Search With The Navigating Spreading-out Graphs},
  journal = {{PVLDB}},
  volume  = {12},
  number  = {5},
  pages   = {461--474},
  year    = {2019},
  url     = {http://www.vldb.org/pvldb/vol12/p461-fu.pdf},
  doi     = {10.14778/3303753.3303754}
}

- Add Docker support

- Improve compatibility of SIMD-related codes

- Python wrapper

- Add travis CI

NSG is MIT-licensed.