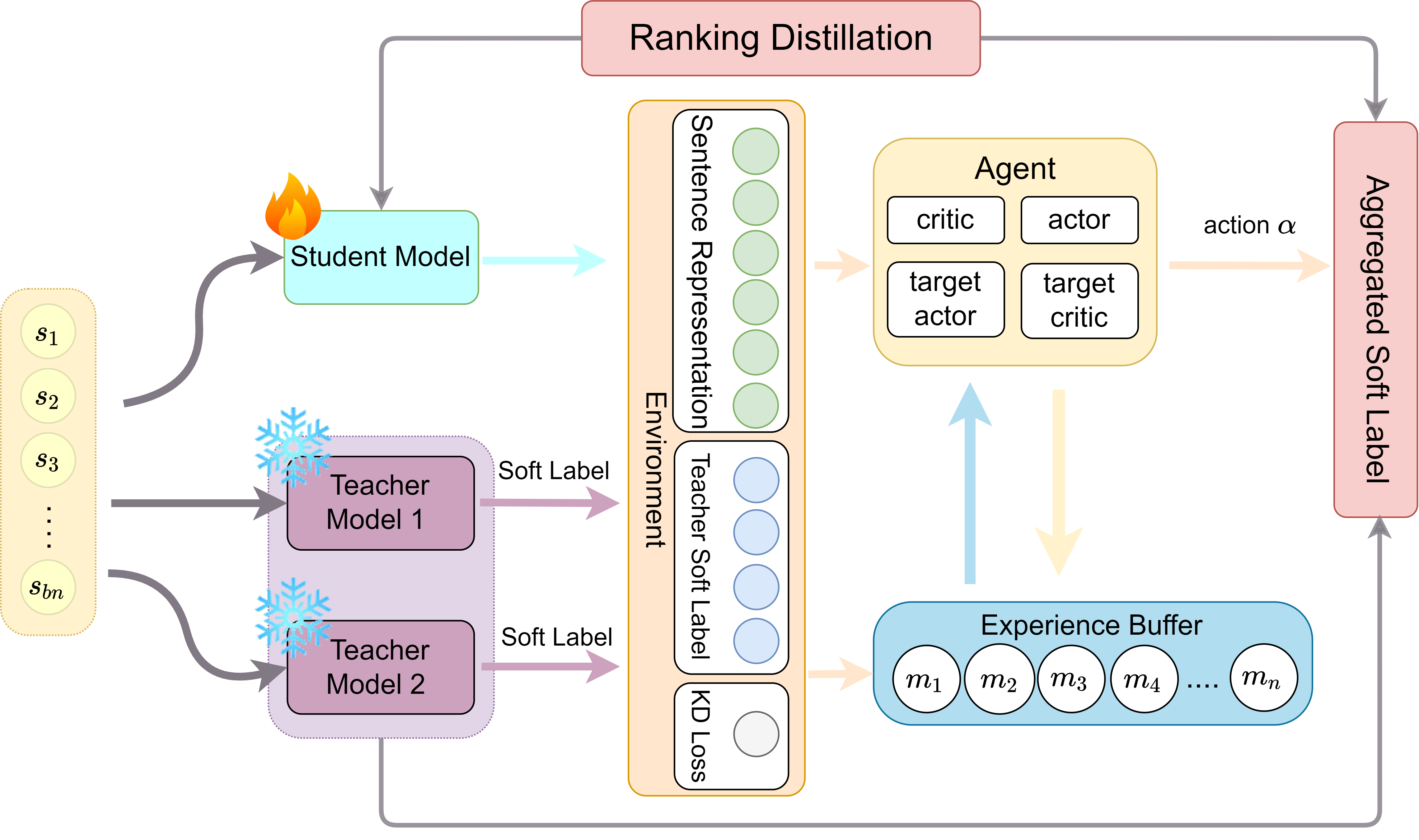

DynCSE: Dynamic Contrastive Learning of Sentence Embeddings via Reinforcement Learning from Multiple Teachers

First, install PyTorch by following the instructions from the official website.

pip install torch==1.7.1+cu110 -f https://download.pytorch.org/whl/torch_stable.html

If you instead use CUDA <11 or CPU, install PyTorch with the following command:

pip install torch==1.7.1

Then run the following command to install the remaining dependencies:

pip install -r requirements.txt

cd data

bash download_wiki.sh

cd SentEval/data/downstream/

bash download_dataset.sh

(The same as run_rankcse.sh.)

bash run_train_roberta.sh

Our new arguments:

- --<first/second>_teacher_name_or_path: the model name of the teachers for distilling ranking information. In the paper, two teachers are used, but we provide functionality for one or two (just don't set the second path).
- --distillation_loss: whether to use the ListMLE or ListNet problem formulation for computing the distillation loss.
- --alpha_: in the paper, alpha is used to balance the ground truth similarity scores produced by the two teachers, i.e. alpha * teacher_1_rankings + (1 - alpha) * teacher_2_rankings. Unused if only one teacher is set.
- --beta_: weight/coefficient for the ranking consistency loss term.
- --gamma_: weight/coefficient for the ranking distillation loss term.
- --tau2: temperature for the softmax computed by the teachers. If ListNet is set, tau3 is set to tau2 / 2 by default, as suggested in the paper.
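To make the roles of alpha and the softmax temperatures more concrete, here is a minimal pure-Python sketch of blending two teachers' similarity scores and computing a ListNet-style distillation loss on one list of scores. It is an illustration under assumptions, not the repository's implementation: the function names (`softmax`, `combine_teachers`, `listnet_loss`) and the `student_tau` / `teacher_tau` parameters are hypothetical, and how they map onto tau2 and tau3 is decided by the training script.

```python
import math


def softmax(scores, tau):
    """Temperature-scaled softmax over a list of similarity scores."""
    scaled = [s / tau for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def combine_teachers(scores_1, scores_2=None, alpha=0.5):
    """Blend teacher scores as alpha * teacher_1 + (1 - alpha) * teacher_2.

    With a single teacher, alpha is unused and the scores pass through.
    """
    if scores_2 is None:
        return list(scores_1)
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(scores_1, scores_2)]


def listnet_loss(student_scores, teacher_scores, student_tau, teacher_tau):
    """ListNet-style loss: cross-entropy between the teacher's and the
    student's softmax distributions over the same list of candidates.
    (Assumed formulation for illustration.)"""
    p_teacher = softmax(teacher_scores, teacher_tau)
    p_student = softmax(student_scores, student_tau)
    return -sum(t * math.log(s) for t, s in zip(p_teacher, p_student))
```

For example, `combine_teachers([1.0, 0.0], [0.0, 1.0], alpha=0.25)` weights the first teacher by 0.25 and the second by 0.75. A student whose scores rank the candidates in the same order as the blended teacher scores gets a lower loss than one that ranks them in reverse.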

Arguments from SimCSE:

- --train_file: Training file path (data/wiki1m_for_simcse.txt).
- --model_name_or_path: Pre-trained checkpoints to start with, such as BERT-based models (bert-base-uncased, bert-large-uncased, etc.) and RoBERTa-based models (RoBERTa-base, RoBERTa-large).
- --temp: Temperature for the contrastive loss. We always use 0.05.
- --pooler_type: Pooling method.
- --mlp_only_train: For unsupervised SimCSE-based models, it works better to train the model with the MLP layer but test the model without it.
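As a rough picture of what --temp controls, the sketch below computes an in-batch contrastive (InfoNCE-style) loss in pure Python: each sentence's first view should be most similar to its own second view, and cosine similarities are divided by the temperature before the softmax. The helper names (`cosine`, `contrastive_loss`) are hypothetical and the training code itself works on batched tensors, so treat this as an illustration only.

```python
import math


def cosine(u, v):
    """Cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def contrastive_loss(views_a, views_b, temp=0.05):
    """Mean cross-entropy where views_b[i] is the positive for views_a[i]
    and every other entry of views_b acts as an in-batch negative."""
    losses = []
    for i, anchor in enumerate(views_a):
        logits = [cosine(anchor, other) / temp for other in views_b]
        peak = max(logits)
        # log-sum-exp of the logits, computed stably
        log_denominator = peak + math.log(sum(math.exp(z - peak) for z in logits))
        losses.append(log_denominator - logits[i])
    return sum(losses) / len(losses)
```

A smaller temperature sharpens the softmax, so the loss penalizes hard negatives (those most similar to the anchor) more strongly.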

You can run the commands below for evaluation after using the repo to train a model:

python evaluation.py \
    --model_name_or_path <your_output_model_dir> \
    --pooler cls_before_pooler \
    --task_set <sts|transfer|full> \
    --mode test

For more detailed information, please check SimCSE's GitHub repo.

- RankCSE-ListMLE-BERT-base (reproduced): https://huggingface.co/perceptiveshawty/rankcse-listmle-bert-base-uncased

- RankCSE-ListNet-BERT-base (reproduced): https://huggingface.co/perceptiveshawty/rankcse-listnet-bert-base-uncased

We can load the models using the API provided by SimCSE. See Getting Started for more information.
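A minimal sketch of loading one of the checkpoints above through the `simcse` Python package might look like the following. It assumes the package is installed (`pip install simcse`) and that its `SimCSE` wrapper accepts a `pooler` argument. The helper name `embed_with_simcse` is hypothetical, and `cls_before_pooler` is chosen only to mirror the evaluation command in this README.

```python
CHECKPOINT = "perceptiveshawty/rankcse-listmle-bert-base-uncased"


def embed_with_simcse(sentences, model_name=CHECKPOINT, pooler="cls_before_pooler"):
    """Encode sentences with a checkpoint via the SimCSE package.

    The import is done lazily so this sketch can be read or imported
    without the package installed. The first call downloads the model.
    """
    from simcse import SimCSE  # assumption: installed via `pip install simcse`

    model = SimCSE(model_name, pooler=pooler)
    return model.encode(sentences)
```

Calling `embed_with_simcse(["A woman is reading.", "A man is playing a guitar."])` should return one embedding per sentence. Swap `CHECKPOINT` for the ListNet model name to compare the two reproductions.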