Mooncake is the serving platform for Kimi, a leading LLM service provided by Moonshot AI.

This repository hosts its technical report and will also be utilized for the forthcoming open sourcing of traces. Stay tuned!

- June 27, 2024: We published a Chinese-language blog post with further discussion on Zhihu.

- June 26, 2024: Initial technical report release.

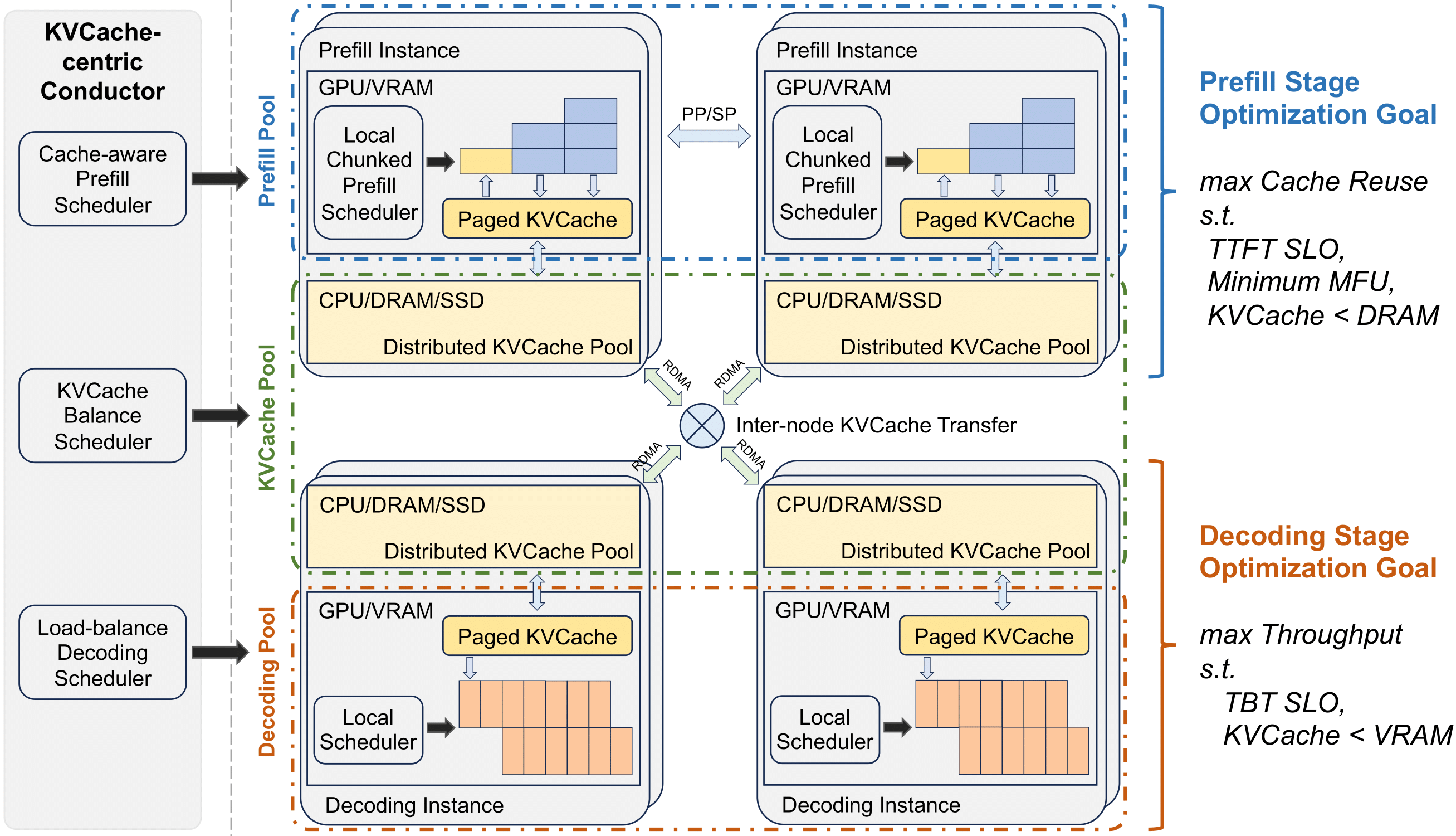

Mooncake features a KVCache-centric disaggregated architecture that separates the prefill and decoding clusters. It also leverages the underutilized CPU, DRAM, and SSD resources of the GPU cluster to implement a disaggregated cache of KVCache.
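To make the idea of a cache built on spare DRAM and SSD capacity more concrete, here is a minimal sketch of a two-tier store keyed by prompt prefix. Everything in it is an illustrative assumption rather than Mooncake's actual design: the class name `TieredKVCache`, the LRU eviction policy, the capacities counted in blocks, and the use of in-memory dictionaries to stand in for both tiers.

```python
from collections import OrderedDict
from typing import Optional


class TieredKVCache:
    """Toy two-tier cache: a small "DRAM" tier that spills into an "SSD" tier.

    Both tiers are in-memory dicts; names, capacities, and the LRU policy
    are hypothetical and only illustrate the tiering idea.
    """

    def __init__(self, dram_capacity: int, ssd_capacity: int) -> None:
        self.dram_capacity = dram_capacity
        self.ssd_capacity = ssd_capacity
        self.dram: "OrderedDict[str, bytes]" = OrderedDict()
        self.ssd: "OrderedDict[str, bytes]" = OrderedDict()

    def put(self, prefix_key: str, kv_block: bytes) -> None:
        # Keep each block in exactly one tier.
        self.ssd.pop(prefix_key, None)
        self.dram[prefix_key] = kv_block
        self.dram.move_to_end(prefix_key)
        # Demote least recently used DRAM blocks to the SSD tier.
        while len(self.dram) > self.dram_capacity:
            old_key, old_block = self.dram.popitem(last=False)
            self.ssd[old_key] = old_block
            self.ssd.move_to_end(old_key)
            # Drop the oldest SSD blocks once that tier is full too.
            while len(self.ssd) > self.ssd_capacity:
                self.ssd.popitem(last=False)

    def get(self, prefix_key: str) -> Optional[bytes]:
        if prefix_key in self.dram:
            self.dram.move_to_end(prefix_key)
            return self.dram[prefix_key]
        if prefix_key in self.ssd:
            # Promote a block hit in the slower tier back into DRAM.
            block = self.ssd.pop(prefix_key)
            self.put(prefix_key, block)
            return block
        return None  # cache miss: the prefix must be prefilled again
```

A prefill node in this sketch would call `get` with a hash of the prompt prefix and only compute the KVCache for the part that misses.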

The core of Mooncake is its KVCache-centric scheduler, which balances maximizing overall effective throughput against meeting latency-related Service Level Objectives (SLOs). Unlike traditional studies that assume all requests will be processed, Mooncake must cope with highly overloaded scenarios. To mitigate this, we developed a prediction-based early rejection policy. Experiments show that Mooncake excels in long-context scenarios. Compared to the baseline method, Mooncake can achieve up to a 525% increase in throughput in certain simulated scenarios while adhering to SLOs. Under real workloads, Mooncake’s innovative architecture enables Kimi to handle 75% more requests.
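The following sketch illustrates what a prediction-based early rejection check could look like. It is not the policy from the technical report: the data classes, the field names, the linear model for how decode slots drain, and the function `should_admit` are all assumptions made for illustration. The point it shows is that the admission decision uses the decode load predicted for the moment prefill would finish, not the load at arrival time.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt_tokens: int


@dataclass
class ClusterState:
    queued_prefill_tokens: int          # tokens already waiting for prefill
    prefill_tokens_per_sec: float       # assumed aggregate prefill speed
    decode_slots_total: int
    decode_slots_busy_now: int
    decode_slots_freed_per_sec: float   # assumed steady completion rate


def predicted_ttft(req: Request, state: ClusterState) -> float:
    """Estimate time to first token as queueing plus prefill time."""
    total_tokens = state.queued_prefill_tokens + req.prompt_tokens
    return total_tokens / state.prefill_tokens_per_sec


def predicted_decode_busy(state: ClusterState, at_seconds: float) -> float:
    """Estimate busy decode slots in the future (hypothetical linear drain)."""
    freed = state.decode_slots_freed_per_sec * at_seconds
    return max(0.0, state.decode_slots_busy_now - freed)


def should_admit(req: Request, state: ClusterState, ttft_slo_sec: float) -> bool:
    """Reject early if either stage is predicted to miss its target."""
    ttft = predicted_ttft(req, state)
    if ttft > ttft_slo_sec:
        return False  # prefill alone would already violate the SLO
    # Check decode capacity at the time prefill is expected to finish.
    busy_then = predicted_decode_busy(state, at_seconds=ttft)
    return busy_then + 1 <= state.decode_slots_total
```

Rejecting before prefill starts avoids spending prefill compute on a request that the decode stage could not serve within its SLO anyway.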

Please kindly cite our paper if you find it useful:

    @article{qin2024mooncake,
      title  = {Mooncake: A KVCache-centric Disaggregated Architecture for LLM Serving},
      author = {Ruoyu Qin and Zheming Li and Weiran He and Mingxing Zhang and Yongwei Wu and Weimin Zheng and Xinran Xu},
      year   = {2024}
    }

Remark: the arXiv version is still on hold.