Kube-router is a distributed load balancer, firewall and router designed for Kubernetes networking, with the aim of providing operational simplicity and high performance.

kube-router does it all.

With all features enabled, kube-router is a lean yet powerful alternative to several network components used in typical Kubernetes clusters. All this from a single DaemonSet/Binary. It doesn't get any easier.

kube-router uses the Linux kernel's IPVS features to implement its K8s Services Proxy. This feature has been requested for some time in kube-proxy, but you can have it right now with kube-router.

Read more about the advantages of IPVS for container load balancing:

- Kubernetes network services proxy with IPVS/LVS

- Kernel Load-Balancing for Docker Containers Using IPVS

kube-router handles Pod networking efficiently with direct routing thanks to the BGP protocol and the GoBGP Go library. It uses the native Kubernetes API to maintain distributed pod networking state. That means no dependency on a separate datastore to maintain in your cluster.

kube-router's elegant design also means there is no dependency on another CNI plugin. The official "bridge" plugin provided by the CNI project is all you need -- and chances are you already have it in your CNI binary directory!

Read more about the advantages and potential of BGP with Kubernetes:

Enabling Kubernetes Network Policies is easy with kube-router -- just add a flag to kube-router. It uses ipsets with iptables to ensure your firewall rules have as little performance impact on your cluster as possible.

Kube-router supports the networking.k8s.io/NetworkPolicy API (network policy V1/GA semantics) as well as network policy beta semantics.

Read more about kube-router's approach to Kubernetes Network Policies:

If you have other networking devices or SDN systems that talk BGP, kube-router will fit in perfectly. From a simple full node-to-node mesh to per-node peering configurations, most routing needs can be met. The configuration is Kubernetes native (annotations) just like the rest of kube-router, so use the tools you already know! Since kube-router uses GoBGP, you also have access to a modern BGP API platform right out of the box.

For more details please refer to the BGP documentation.

Although it does the work of several of its peers in one binary, kube-router does it all with a relatively tiny codebase, partly because IPVS is already there on your Kubernetes nodes waiting to help you do amazing things. kube-router brings that and GoBGP's modern BGP interface to you in an elegant package designed from the ground up for Kubernetes.

A primary motivation for kube-router is performance. The combination of BGP for inter-node Pod networking and IPVS for load-balanced proxy Services is a perfect recipe for high-performance cluster networking at scale. BGP ensures that the data path is dynamic and efficient, and IPVS provides in-kernel load balancing that has been thoroughly tested and optimized.

The project is in alpha stage. We are working towards a beta release milestone and are actively incorporating user feedback.

We encourage all kinds of contributions, be they documentation, code, fixing typos, tests — anything at all. Please read the contribution guide.

If you experience any problems, please reach us on our Gitter community forum for quick help. Feel free to leave feedback or raise questions at any time by opening an issue here.

Kube-router can be run as an agent or a Pod (via DaemonSet) on each node, and leverages standard Linux technologies: iptables, ipvs/lvs, ipset and iproute2.

Kubernetes network services proxy with IPVS/LVS

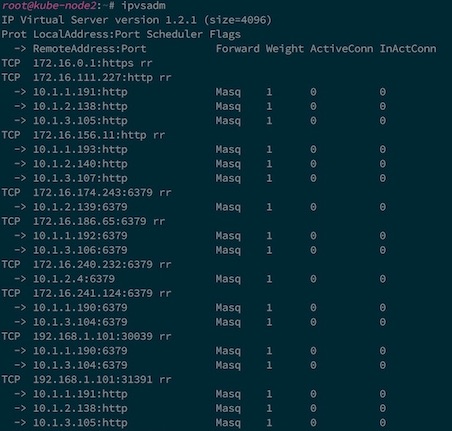

Kube-router uses the IPVS/LVS technology built into Linux to provide L4 load balancing. Each ClusterIP, NodePort, and LoadBalancer Kubernetes Service type is configured as an IPVS virtual service. Each Service Endpoint is configured as a real server of the virtual service. The standard ipvsadm tool can be used to verify the configuration and monitor the active connections.
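To make the mapping concrete, here is a minimal Python sketch that turns one Service and its Endpoints into the equivalent `ipvsadm` command lines. The `Service` class, the `ipvsadm_commands` function and the IP addresses are hypothetical and exist only for illustration. kube-router programs IPVS itself rather than shelling out, so treat this as a picture of the resulting state, not of its implementation.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Service:
    """Hypothetical, simplified stand-in for a Kubernetes Service."""
    name: str
    cluster_ip: str
    port: int
    protocol: str = "TCP"
    # (pod_ip, target_port) pairs taken from the Service's Endpoints
    endpoints: List[Tuple[str, int]] = field(default_factory=list)


def ipvsadm_commands(svc: Service) -> List[str]:
    """Render the IPVS state for one Service as ipvsadm command lines."""
    proto_flag = {"TCP": "-t", "UDP": "-u"}[svc.protocol]
    vip = f"{svc.cluster_ip}:{svc.port}"
    # One virtual service per Service address, using round-robin scheduling.
    cmds = [f"ipvsadm -A {proto_flag} {vip} -s rr"]
    # One real server per endpoint, in masquerading (NAT) mode.
    for pod_ip, target_port in svc.endpoints:
        cmds.append(f"ipvsadm -a {proto_flag} {vip} -r {pod_ip}:{target_port} -m")
    return cmds


if __name__ == "__main__":
    web = Service("web", "10.96.0.10", 80,
                  endpoints=[("10.244.1.5", 8080), ("10.244.2.7", 8080)])
    print("\n".join(ipvsadm_commands(web)))
```

On a node, `ipvsadm -Ln` lists the virtual services and real servers that are actually configured, which is a quick way to compare them against what you expect.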

Below is an example set of Services on Kubernetes:

and the Endpoints for the Services:

and how they are mapped to IPVS by kube-router:

Kube-router watches the Kubernetes API server for updates to Services and Endpoints and automatically syncs the IPVS configuration to reflect the desired state of the Services. Kube-router currently uses IPVS masquerading mode with round-robin scheduling. The source Pod IP is preserved so that the appropriate network policies can be applied.
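The sync step can be pictured as a diff between the desired state (derived from Services and Endpoints) and the state currently programmed into IPVS. The sketch below shows that generic reconcile pattern in Python. The `reconcile` function, the action names and the addresses are assumptions made for illustration and are not taken from kube-router's code.

```python
from typing import Dict, List, Set, Tuple

# Both states map a virtual service "ip:port" to its set of real servers "ip:port".
State = Dict[str, Set[str]]


def reconcile(desired: State, actual: State) -> List[Tuple[str, str, str]]:
    """Return the actions that turn the `actual` IPVS state into `desired`."""
    actions: List[Tuple[str, str, str]] = []
    # Virtual services that no longer correspond to any Service are removed.
    for vip in sorted(actual.keys() - desired.keys()):
        actions.append(("del_service", vip, ""))
    for vip in sorted(desired):
        if vip not in actual:
            actions.append(("add_service", vip, ""))
        current = actual.get(vip, set())
        # New endpoints become real servers; vanished endpoints are dropped.
        for rs in sorted(desired[vip] - current):
            actions.append(("add_server", vip, rs))
        for rs in sorted(current - desired[vip]):
            actions.append(("del_server", vip, rs))
    return actions


if __name__ == "__main__":
    desired = {"10.96.0.10:80": {"10.244.1.5:8080", "10.244.2.7:8080"}}
    actual = {
        "10.96.0.10:80": {"10.244.1.5:8080", "10.244.3.9:8080"},
        "10.96.0.20:443": {"10.244.1.6:8443"},
    }
    for action in reconcile(desired, actual):
        print(action)
```

Because the function only compares two snapshots, running it again after the actions are applied yields an empty list, which is the property a periodic sync loop relies on.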

Enforcing Kubernetes network policies with iptables

Kube-router provides an implementation of Kubernetes Network Policies through the use of iptables, ipset and conntrack. All the Pods in a Namespace with the 'DefaultDeny' ingress isolation policy have ingress blocked. Only traffic that matches the whitelist rules specified in the network policies is permitted to reach those Pods. The following set of iptables rules and chains in the 'filter' table is used to achieve the Network Policy semantics.

Each Pod running on the Node that needs ingress blocked by default is matched in the FORWARD and OUTPUT chains of the filter table and sent to a Pod-specific firewall chain. The rules below are added to match the following cases:

- Traffic getting switched between the Pods on the same Node through the local bridge

- Traffic getting routed between the Pods on different Nodes

- Traffic originating from a Pod and going through the Service proxy and getting routed to a Pod on the same Node

Each Pod-specific firewall chain has a default rule to block the traffic. Rules are added to jump traffic to the Network Policy specific policy chains. These rules cover only the policies that apply to the destination Pod IP. A rule is also added to accept established traffic, which permits the return traffic.

Each policy chain has rules expressed through source and destination ipsets. The set of Pods matching an ingress rule in the Network Policy spec forms a source Pod IP ipset. The set of Pods matching the pod selector (for destination Pods) in the Network Policy forms a destination Pod IP ipset.

Finally, the ipsets used in forming the rules in the Network Policy specific chains are created.
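The chain and ipset structure described above can be modelled in a few lines of Python. This is a sketch of the decision logic only, not of the iptables rules kube-router generates. `PolicyChain`, `ipset_for`, `pod_chain_verdict`, the `DENY` label and all IPs and labels are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable


@dataclass(frozen=True)
class PolicyChain:
    """Hypothetical model of one Network Policy chain and its two ipsets."""
    name: str
    src_ipset: FrozenSet[str]  # IPs of Pods matching the policy's ingress rule
    dst_ipset: FrozenSet[str]  # IPs of Pods matching the policy's pod selector


def ipset_for(pods: Dict[str, Dict[str, str]], selector: Dict[str, str]) -> FrozenSet[str]:
    """Collect the IPs of all Pods whose labels contain every selector label."""
    return frozenset(
        ip for ip, labels in pods.items() if selector.items() <= labels.items()
    )


def pod_chain_verdict(src_ip: str, dst_ip: str, established: bool,
                      policy_chains: Iterable[PolicyChain]) -> str:
    """Model of a Pod-specific firewall chain protecting `dst_ip`."""
    # Return traffic for already established connections is accepted.
    if established:
        return "ACCEPT"
    # Jump through each policy chain; a match on both ipsets whitelists the packet.
    for chain in policy_chains:
        if src_ip in chain.src_ipset and dst_ip in chain.dst_ipset:
            return "ACCEPT"
    # Default rule of the Pod-specific chain: block.
    return "DENY"


if __name__ == "__main__":
    pods = {
        "10.244.1.5": {"app": "web"},
        "10.244.1.6": {"app": "db"},
        "10.244.2.7": {"app": "cache"},
    }
    chain = PolicyChain("allow-web-to-db",
                        ipset_for(pods, {"app": "web"}),
                        ipset_for(pods, {"app": "db"}))
    print(pod_chain_verdict("10.244.1.5", "10.244.1.6", False, [chain]))
    print(pod_chain_verdict("10.244.2.7", "10.244.1.6", False, [chain]))
```

Keeping Pod IPs in sets is what keeps the rule count small: a policy needs one rule per source/destination ipset pair rather than one rule per Pod pair.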

At runtime, kube-router watches the Kubernetes API server for changes to namespaces, network policies and Pods, and dynamically updates the iptables and ipset configuration to reflect the desired state of the ingress firewall for the Pods.

Kubernetes pod networking and beyond with BGP

Kube-router is expected to run on each Node. The subnet of the Node is obtained from the CNI configuration file on the Node or through Node.PodCidr. Each kube-router instance on a Node acts as a BGP router and advertises the Pod CIDR assigned to that Node. Each Node peers with the rest of the Nodes in the cluster, forming a full mesh. Routes to the Pod CIDRs learned from the other Nodes (BGP peers) are injected into the local Node's routing table. On the data path, inter-Node Pod-to-Pod communication is handled by the routing stack on the Node.
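As a rough illustration of the outcome of the full mesh, the Python sketch below computes the BGP sessions and the routes a given Node would end up with. The function names, Node IPs and Pod CIDRs are made up for the example, and it assumes all Nodes can reach each other directly so that the peer's Node IP can serve as next hop. It models the result of route exchange, not the BGP protocol itself.

```python
import ipaddress
from itertools import combinations
from typing import Dict, List, Tuple


def full_mesh_peerings(nodes: Dict[str, str]) -> List[Tuple[str, str]]:
    """Every pair of Nodes holds one BGP session: n * (n - 1) / 2 in total."""
    return list(combinations(sorted(nodes), 2))


def routes_for(node_ip: str, nodes: Dict[str, str]) -> List[Tuple[str, str]]:
    """Routes learned by `node_ip`, as (pod_cidr, next_hop) pairs.

    `nodes` maps each Node IP to the Pod CIDR assigned to that Node.
    """
    routes = []
    for peer_ip, cidr in sorted(nodes.items()):
        if peer_ip == node_ip:
            continue  # the local Pod CIDR is reached through the local bridge
        network = ipaddress.ip_network(cidr)  # raises ValueError on a bad CIDR
        routes.append((str(network), peer_ip))
    return routes


if __name__ == "__main__":
    nodes = {
        "192.168.1.10": "10.244.0.0/24",
        "192.168.1.11": "10.244.1.0/24",
        "192.168.1.12": "10.244.2.0/24",
    }
    print(full_mesh_peerings(nodes))
    for cidr, next_hop in routes_for("192.168.1.10", nodes):
        print(f"{cidr} via {next_hop}")
```

The session count grows quadratically with the number of Nodes, which is one reason larger clusters often move from a full mesh to per-node peering with external routers.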

Kube-router builds upon the following libraries: