Empowering Large Pre-Trained Language Models to Follow Complex Instructions

At present, our core contributors are preparing the 65B version and we expect to empower WizardLM with the ability to perform instruction evolution itself, aiming to evolve your specific data at a low cost.

- 🔥🔥🔥 We released the latest optimized version of the Evol-Instruct training data for the WizardLM model. Please refer to this HuggingFace Repo to download it.

- 🔥🔥🔥 We released WizardCoder-15B-V1.0 (trained with 78k evolved code instructions), which surpasses Claude-Plus (+6.8), Bard (+15.3), and InstructCodeT5+ (+22.3) on the HumanEval benchmark. For more details (Paper, Demo, Backup Demo1, Backup Demo2, Backup Demo3, Backup Demo4, Model Weights), please refer to WizardCoder. The demo currently supports only code-related English instructions.

- 🔥 Our WizardLM-13B-V1.0 model ranks first among open-source models on the AlpacaEval Leaderboard.

- 📣 Please follow our Twitter account https://twitter.com/WizardLM_AI and HuggingFace Repo https://huggingface.co/WizardLM. We announce new releases there first.

- 🔥 We released WizardLM-30B-V1.0 (Demo_30B) and WizardLM-13B-V1.0 (Demo_13B), trained with 250k evolved instructions (from ShareGPT), and WizardLM-7B-V1.0 (Demo_7B), trained with 70k evolved instructions (from Alpaca data). Please check out the Delta Weights and the paper.

- 📣 We are looking for highly motivated students to join us as interns to create more intelligent AI together. Please contact [email protected]

Note for 30B and 13B model usage:

To obtain results identical to our demo, please strictly follow the prompts and invocation methods provided in "src/infer_wizardlm13b.py" when using our 13B model for inference. Unlike the 7B model, the 13B model adopts the prompt format from Vicuna and supports multi-turn conversation.

For WizardLM-13B-V1.0 and WizardLM-30B-V1.0, the prompt should be as follows:

A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions. USER: hello, who are you? ASSISTANT:

For WizardLM-7B-V1.0, the prompt should be as follows:

"{instruction}\n\n### Response:"

For WizardCoder-15B-V1.0, the prompt should be as follows:

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response:"
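The three prompt formats above can be assembled programmatically. The following is a minimal sketch rather than the project's own inference code: the function names and the `PROMPT_BUILDERS` table are hypothetical helpers introduced here for illustration, and only the single-turn case is covered. For multi-turn conversation with the 13B and 30B models, the turn separators should be taken from "src/infer_wizardlm13b.py" rather than guessed.

```python
# System preamble for the Vicuna-style prompt, copied from the text above.
VICUNA_SYSTEM = (
    "A chat between a curious user and an artificial intelligence assistant. "
    "The assistant gives helpful, detailed, and polite answers to the user's questions."
)


def build_vicuna_prompt(user_message: str) -> str:
    """Single-turn prompt for WizardLM-13B-V1.0 and WizardLM-30B-V1.0."""
    return f"{VICUNA_SYSTEM} USER: {user_message} ASSISTANT:"


def build_7b_prompt(instruction: str) -> str:
    """Prompt for WizardLM-7B-V1.0."""
    return f"{instruction}\n\n### Response:"


def build_coder_prompt(instruction: str) -> str:
    """Prompt for WizardCoder-15B-V1.0."""
    return (
        "Below is an instruction that describes a task. "
        "Write a response that appropriately completes the request.\n\n"
        f"### Instruction:\n{instruction}\n\n### Response:"
    )


# Hypothetical lookup table (not part of the repo): model name -> prompt builder.
PROMPT_BUILDERS = {
    "WizardLM-13B-V1.0": build_vicuna_prompt,
    "WizardLM-30B-V1.0": build_vicuna_prompt,
    "WizardLM-7B-V1.0": build_7b_prompt,
    "WizardCoder-15B-V1.0": build_coder_prompt,
}


def build_prompt(model_name: str, text: str) -> str:
    """Pick the prompt format for a model; raises KeyError for unknown names."""
    return PROMPT_BUILDERS[model_name](text)
```

Calling `build_prompt("WizardLM-13B-V1.0", "hello, who are you?")` reproduces the example prompt shown above for the 13B and 30B models.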

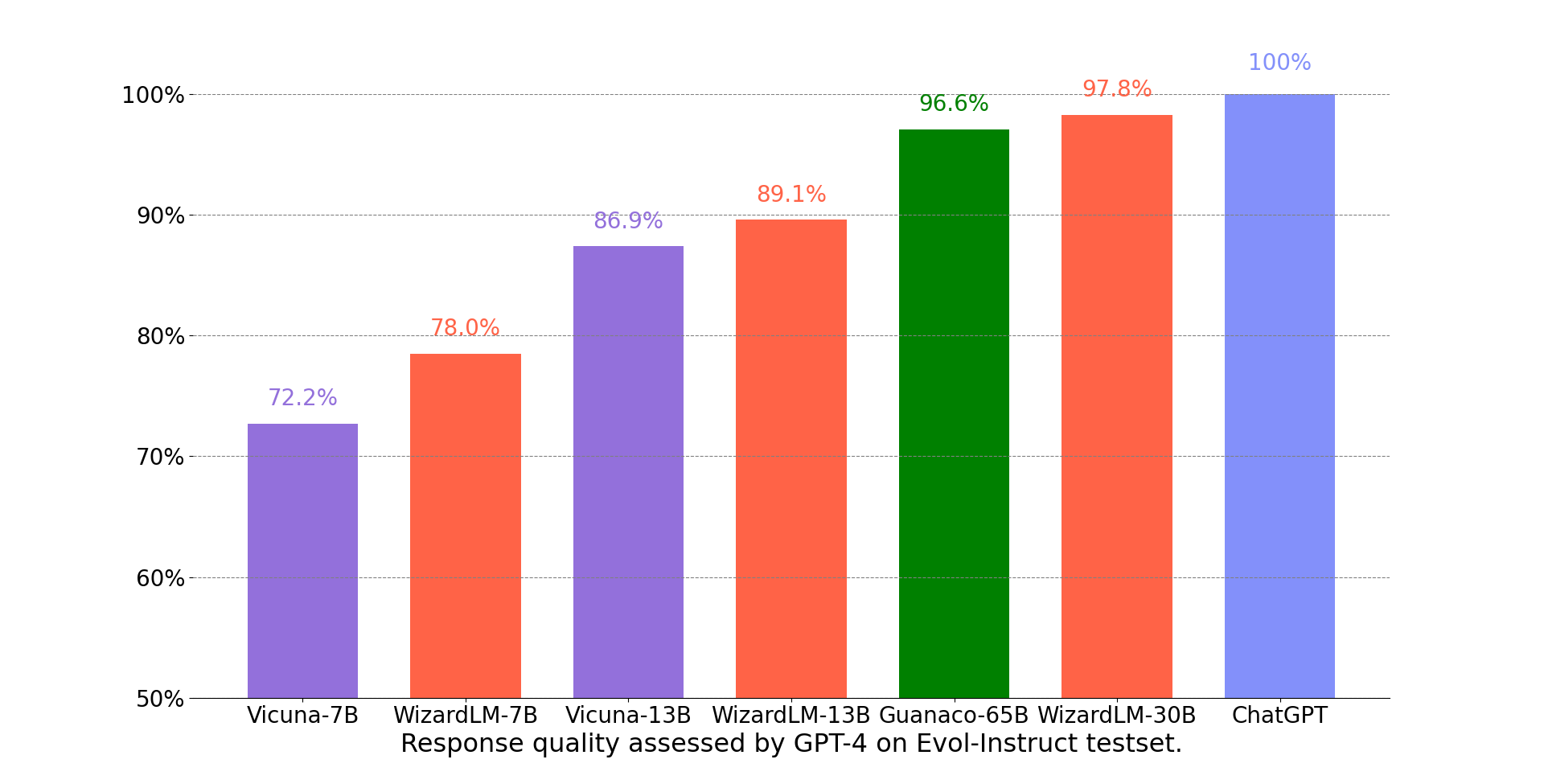

We adopt the automatic evaluation framework based on GPT-4 proposed by FastChat to assess the performance of chatbot models. As shown in the following figure, WizardLM-30B achieved better results than Guanaco-65B.

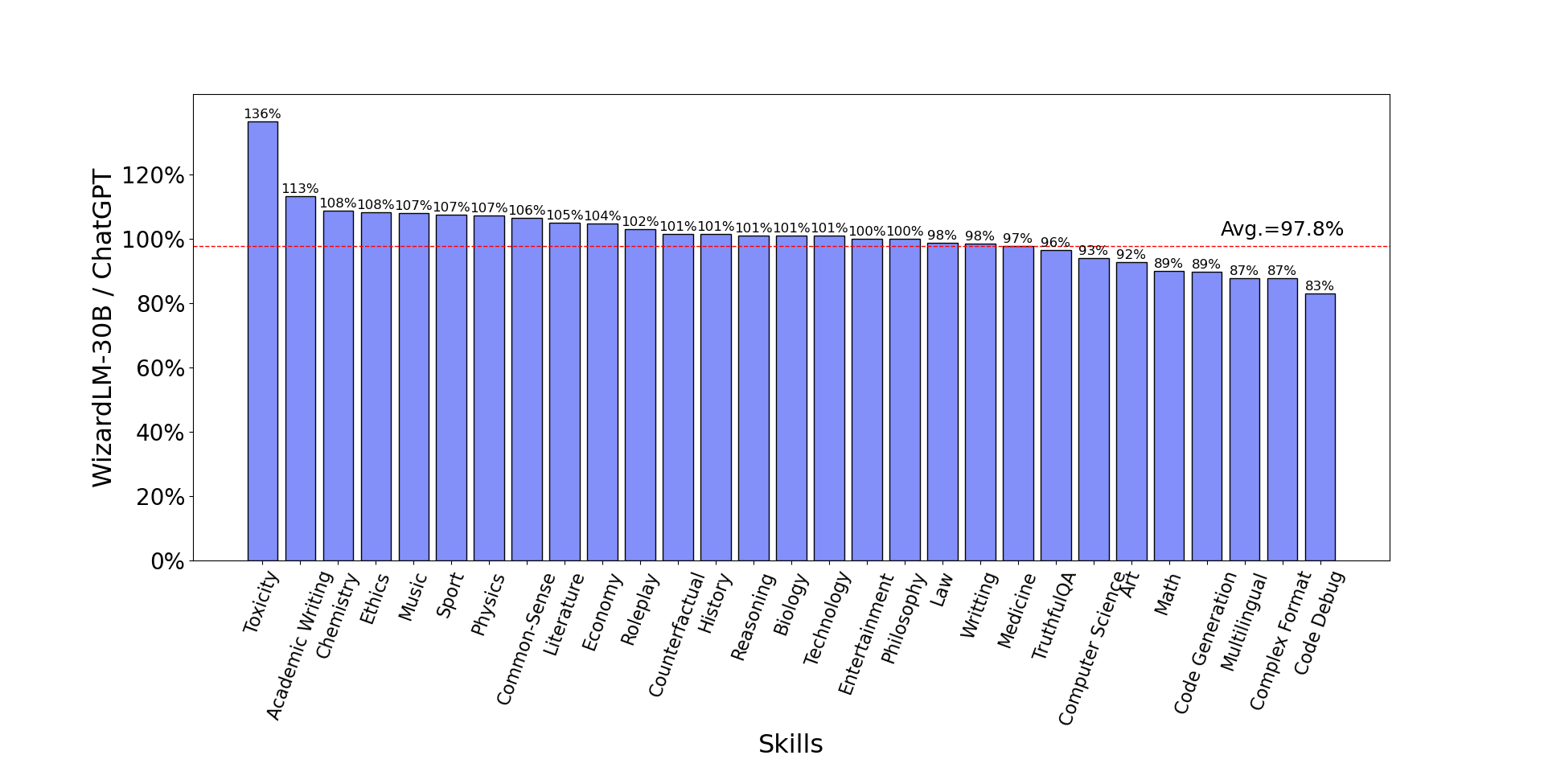

The following figure compares the skills of WizardLM-30B and ChatGPT on the Evol-Instruct test set. The results indicate that WizardLM-30B achieves 97.8% of ChatGPT's performance on average, reaching nearly 100% (or more) of ChatGPT's capacity on 18 skills and more than 90% on 24 skills.

The following table compares the WizardLM models with other LLMs on NLP foundation tasks. The results indicate that the WizardLM models consistently outperform LLaMA models of the same size. Furthermore, our WizardLM-30B model performs comparably to OpenAI's Text-davinci-003 on the MMLU and HellaSwag benchmarks.

| Model | MMLU 5-shot | ARC 25-shot | TruthfulQA 0-shot | HellaSwag 10-shot | Average |
|---|---|---|---|---|---|
| Text-davinci-003 | 56.9 | 85.2 | 59.3 | 82.2 | 70.9 |
| Vicuna-13b 1.1 | 51.3 | 53.0 | 51.8 | 80.1 | 59.1 |
| Guanaco 30B | 57.6 | 63.7 | 50.7 | 85.1 | 64.3 |
| WizardLM-7B 1.0 | 42.7 | 51.6 | 44.7 | 77.7 | 54.2 |
| WizardLM-13B 1.0 | 52.3 | 57.2 | 50.5 | 81.0 | 60.2 |
| WizardLM-30B 1.0 | 58.8 | 62.5 | 52.4 | 83.3 | 64.2 |
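The README does not say how the Average column is computed. The sketch below assumes it is the unweighted mean of the four benchmark scores and checks that assumption against the reported values; the `REPORTED` dictionary and the helper names are introduced here only for illustration.

```python
# Per-model scores (MMLU, ARC, TruthfulQA, HellaSwag) and the reported Average,
# copied from the table above.
REPORTED = {
    "Text-davinci-003": ([56.9, 85.2, 59.3, 82.2], 70.9),
    "Vicuna-13b 1.1": ([51.3, 53.0, 51.8, 80.1], 59.1),
    "Guanaco 30B": ([57.6, 63.7, 50.7, 85.1], 64.3),
    "WizardLM-7B 1.0": ([42.7, 51.6, 44.7, 77.7], 54.2),
    "WizardLM-13B 1.0": ([52.3, 57.2, 50.5, 81.0], 60.2),
    "WizardLM-30B 1.0": ([58.8, 62.5, 52.4, 83.3], 64.2),
}


def mean(values):
    """Unweighted arithmetic mean (assumed definition of the Average column)."""
    return sum(values) / len(values)


def max_deviation(table):
    """Largest gap between the recomputed mean and the reported Average."""
    return max(abs(mean(scores) - avg) for scores, avg in table.values())


if __name__ == "__main__":
    for name, (scores, avg) in REPORTED.items():
        print(f"{name}: recomputed {mean(scores):.3f}, reported {avg}")
```

Under this assumption, every recomputed mean agrees with the reported Average to within rounding at one decimal place.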

The following table compares the WizardLM models with several other LLMs on the code generation task, namely HumanEval. The evaluation metric is pass@1. The results indicate that the WizardLM models consistently outperform LLaMA models of the same size. Furthermore, our WizardLM-30B model surpasses StarCoder and OpenAI's code-cushman-001. Moreover, our Code LLM, WizardCoder, achieves a pass@1 score of 57.3, surpassing the open-source SOTA by approximately 20 points.

| Model | HumanEval Pass@1 |
|---|---|
| LLaMA-7B | 10.5 |
| LLaMA-13B | 15.8 |
| CodeGen-16B-Multi | 18.3 |
| CodeGeeX | 22.9 |
| LLaMA-33B | 21.7 |
| LLaMA-65B | 23.7 |
| PaLM-540B | 26.2 |
| CodeGen-16B-Mono | 29.3 |
| code-cushman-001 | 33.5 |
| StarCoder | 33.6 |
| WizardLM-7B 1.0 | 19.1 |
| WizardLM-13B 1.0 | 24.0 |
| WizardLM-30B 1.0 | 37.8 |
| WizardCoder-15B 1.0 | 57.3 |
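For readers unfamiliar with the metric, pass@k is commonly computed with the unbiased estimator introduced alongside the HumanEval benchmark: for a problem with `n` generated samples of which `c` pass the unit tests, pass@k = 1 - C(n-c, k) / C(n, k), averaged over all problems. The sketch below illustrates that estimator. It is not taken from this repo, and the README does not state how many samples per problem were generated or which decoding settings were used.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem.

    n: number of samples generated for the problem
    c: number of those samples that pass all unit tests
    k: sample budget being evaluated (k=1 for pass@1)
    """
    if not (0 <= c <= n) or not (1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) is 0 when fewer than k samples fail, giving an estimate of 1.0.
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results, k: int) -> float:
    """Average pass@k over problems; `results` is a list of (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

For k = 1 the estimator reduces to `c / n`, so pass@1 is the fraction of generated samples that pass, averaged over problems.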

We welcome everyone to evaluate WizardLM with professional and difficult instructions, and to share examples of poor performance and suggestions in the issue discussion area. We are currently focusing on improving Evol-Instruct and hope to address existing weaknesses and issues in the next version of WizardLM. After that, we will open the code and pipeline of the up-to-date Evol-Instruct algorithm and work with you to improve it.

Thanks to our enthusiastic friends for their video introductions, which are more lively and interesting than ours.

- GET WizardLM NOW! 7B LLM KING That Can Beat ChatGPT! I'm IMPRESSED!

- WizardLM: Enhancing Large Language Models to Follow Complex Instructions

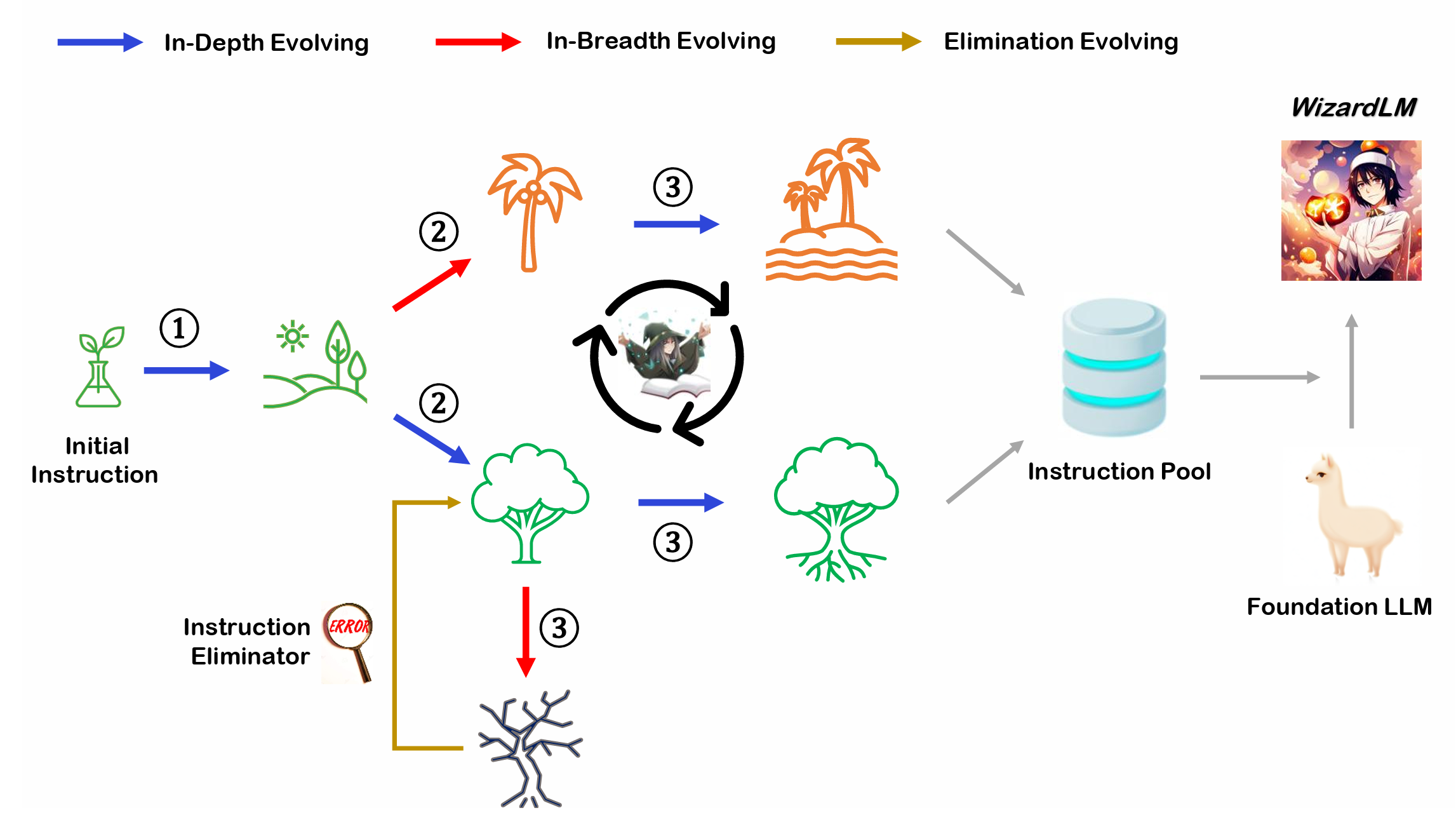

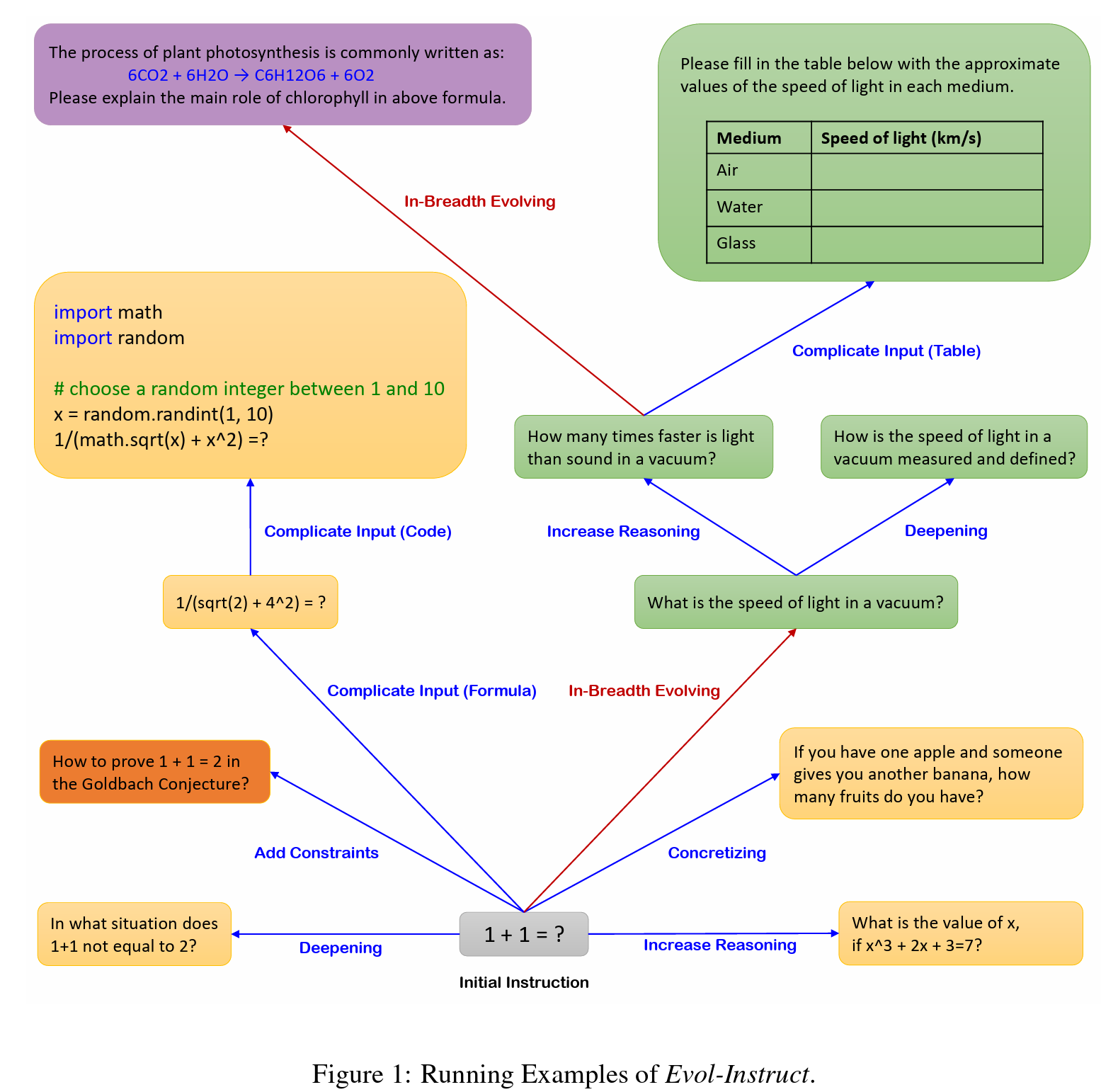

Evol-Instruct is a novel method that uses LLMs instead of humans to automatically mass-produce open-domain instructions across a range of difficulty levels and skills, in order to improve the performance of LLMs.
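To make the idea concrete, here is a deliberately simplified sketch of an instruction-evolution loop. It is not the authors' pipeline: the README does not give the actual rewrite prompts or filtering rules, so the `DIRECTIVES` list, the `evolve` function, and the `rewrite` callable (a stand-in for a call to an LLM) are all hypothetical.

```python
import random
from typing import Callable, List

# Hypothetical rewrite directives; the real Evol-Instruct prompts are not given here.
DIRECTIVES = [
    "Add one more constraint or requirement to the instruction.",
    "Rewrite the instruction so that it needs more reasoning steps.",
    "Create a new instruction in the same domain but on a rarer topic.",
]


def evolve(
    seeds: List[str],
    rewrite: Callable[[str], str],
    rounds: int,
    rng: random.Random,
) -> List[str]:
    """Grow an instruction pool by repeatedly asking `rewrite` to evolve it.

    `rewrite` receives a prompt (directive plus parent instruction) and
    returns the evolved instruction; in practice it would wrap an LLM call.
    """
    pool = list(seeds)
    current = list(seeds)
    for _ in range(rounds):
        next_generation = []
        for instruction in current:
            directive = rng.choice(DIRECTIVES)
            candidate = rewrite(f"{directive}\n\nInstruction:\n{instruction}").strip()
            # Toy filter: drop empty rewrites and rewrites identical to the parent.
            if candidate and candidate != instruction.strip():
                next_generation.append(candidate)
        pool.extend(next_generation)
        current = next_generation
    return pool


def stub_rewrite(prompt: str) -> str:
    """Toy stand-in for an LLM: returns the parent instruction with extra text."""
    return prompt.rsplit("\n", 1)[-1] + " Explain each step."
```

With the stub, `evolve(["Sort a list."], stub_rewrite, rounds=2, rng=random.Random(0))` returns the seed plus one evolved instruction per round. A real setup would replace `stub_rewrite` with a model call and use a much stricter filter.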

Please cite the repo if you use the data or code in this repo.

@misc{xu2023wizardlm,
  title={WizardLM: Empowering Large Language Models to Follow Complex Instructions},
  author={Can Xu and Qingfeng Sun and Kai Zheng and Xiubo Geng and Pu Zhao and Jiazhan Feng and Chongyang Tao and Daxin Jiang},
  year={2023},
  eprint={2304.12244},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}

The resources, including code, data, and model weights, associated with this project are restricted for academic research purposes only and cannot be used for commercial purposes. The content produced by any version of WizardLM is influenced by uncontrollable variables such as randomness, and therefore, the accuracy of the output cannot be guaranteed by this project. This project does not accept any legal liability for the content of the model output, nor does it assume responsibility for any losses incurred due to the use of associated resources and output results.