| page_type | languages | products | urlFragment | name | description |
|---|---|---|---|---|---|
| sample | | | contoso-chat | Contoso Chat - Retail RAG Copilot with Azure AI Studio and Prompty (Python Implementation) | Build, evaluate, and deploy a RAG-based retail copilot that responds to customer questions with responses grounded in the retailer's product and customer data. |

- Overview

- Features · Architecture Diagram

- Pre-Requisites

- Getting Started

- Development

- Testing

- Deployment

- Guidance - Region Availability · Costs · Security

- Workshop 🆕 · Versions

- Resources · Code of Conduct · Responsible AI Guidelines

This template, the application code and configuration it contains, has been built to showcase Microsoft Azure specific services and tools. We strongly advise our customers not to make this code part of their production environments without implementing or enabling additional security features.

For a more comprehensive list of best practices and security recommendations for Intelligent Applications, visit our official documentation.

Warning

Some of the features used in this repository are in preview. Preview versions are provided without a service level agreement, and they are not recommended for production workloads. Certain features might not be supported or might have constrained capabilities. For more information, see Supplemental Terms of Use for Microsoft Azure Previews.

Sample application code is included in this project. You can use or modify this app code or you can rip it out and include your own.

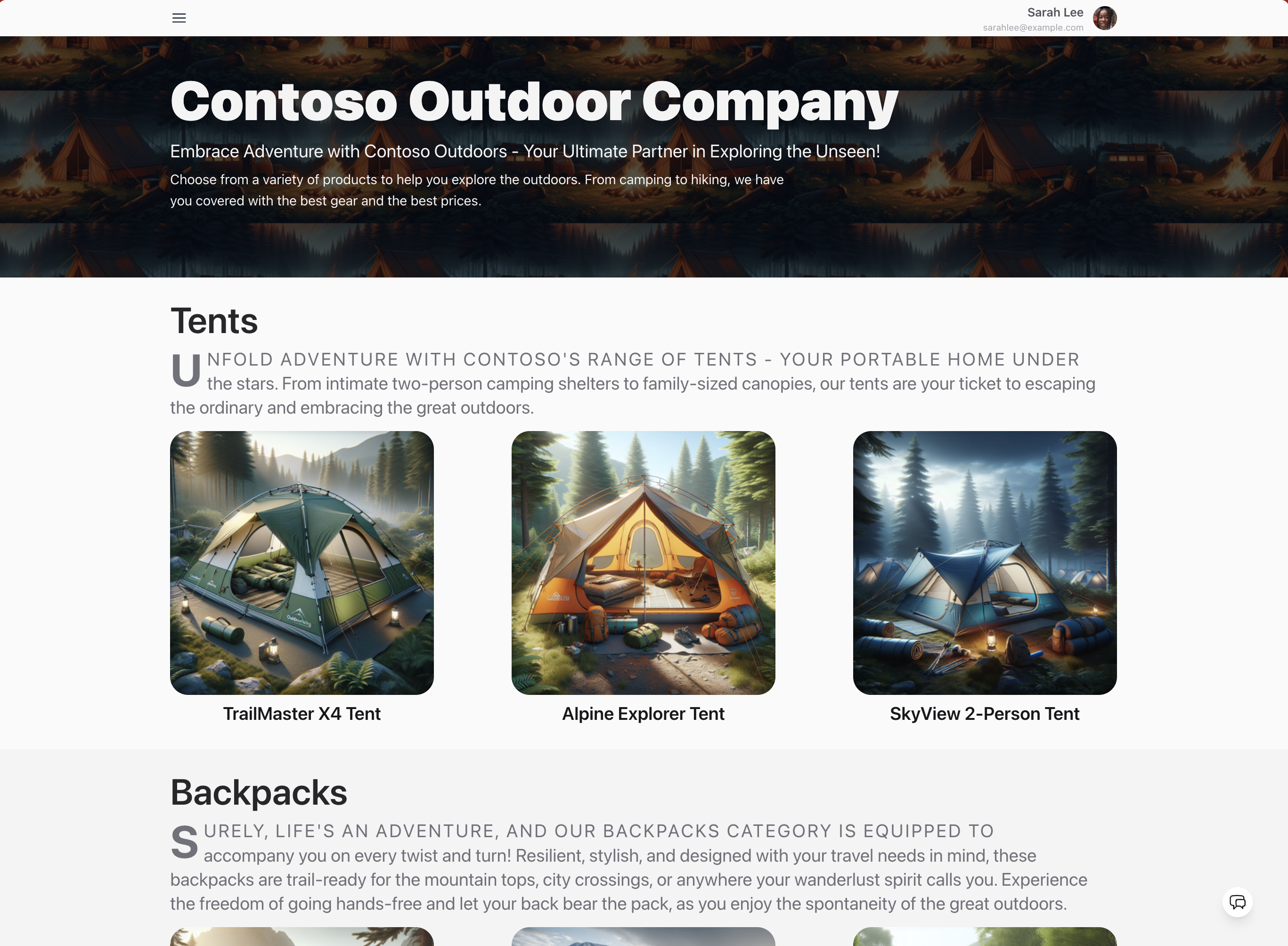

Contoso Outdoor is an online retailer specializing in hiking and camping equipment for outdoor enthusiasts. The website offers an extensive catalog of products - resulting in customers needing product information and recommendations to assist them in making relevant purchases.

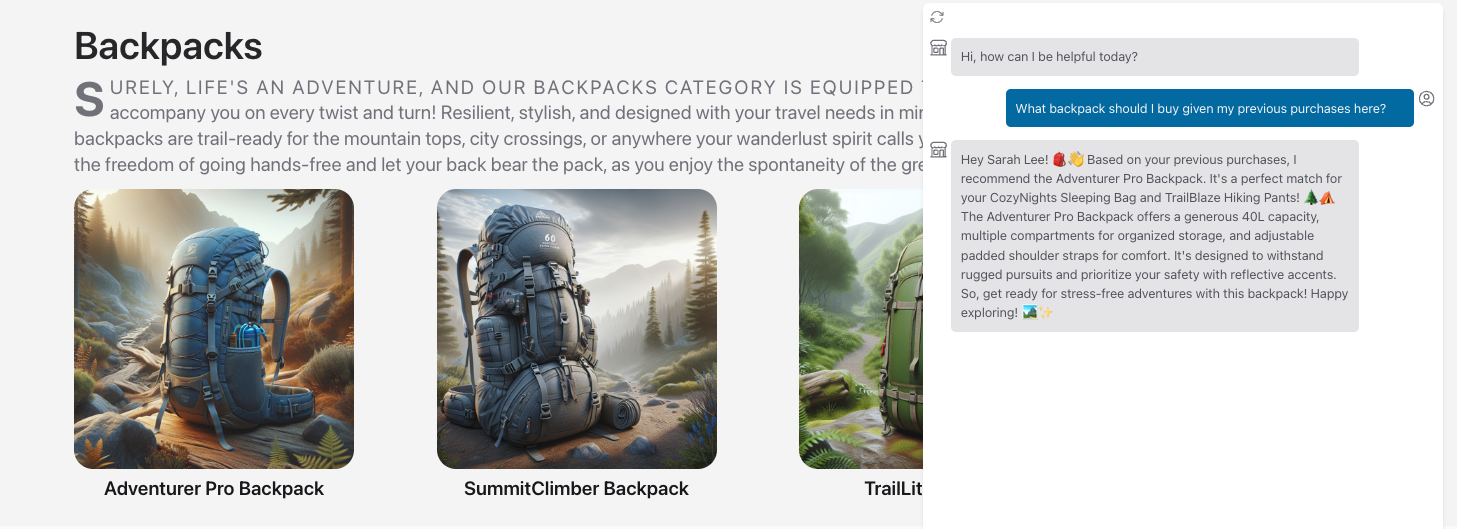

This sample implements Contoso Chat - a retail copilot solution for Contoso Outdoor that uses a retrieval augmented generation design pattern to ground chatbot responses in the retailer's product and customer data. Customers can ask questions from the website in natural language, and get relevant responses with potential recommendations based on their purchase history - with responsible AI practices to ensure response quality and safety.
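
As a rough illustration of the retrieval augmented generation pattern described above, the sketch below retrieves the most relevant product entries for a question and folds them, together with the customer's purchase history, into a grounded prompt. It is not the sample's implementation: the in-memory catalog, the word-overlap scoring (a stand-in for the similarity search that Azure AI Search performs), and every function name here are hypothetical.

```python
import re

# Hypothetical toy catalog; the real sample stores products in a search index.
CATALOG = [
    {"title": "Example Tent A", "content": "waterproof two person tent for hiking trips"},
    {"title": "Example Stove B", "content": "compact gas stove for camp cooking"},
    {"title": "Example Tent C", "content": "lightweight tent with waterproof rain fly"},
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, catalog, top_k=2):
    """Rank catalog items by word overlap with the question (toy retrieval)."""
    question_words = tokenize(question)
    scored = []
    for item in catalog:
        overlap = len(question_words & tokenize(item["title"] + " " + item["content"]))
        if overlap:
            scored.append((overlap, item))
    # Stable sort: items with equal scores keep their catalog order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [item for _, item in scored[:top_k]]


def build_prompt(question, products, purchase_history):
    """Assemble a prompt that grounds the answer in the retrieved data."""
    product_lines = "\n".join(f"- {p['title']}: {p['content']}" for p in products)
    history_line = ", ".join(purchase_history) or "none"
    return (
        "Answer using only the product information below.\n"
        f"Products:\n{product_lines}\n"
        f"Customer purchase history: {history_line}\n"
        f"Question: {question}"
    )


question = "Tell me about the waterproof tents"
prompt = build_prompt(question, retrieve(question, CATALOG), ["Example Stove B"])
```

The resulting `prompt` string is what would be sent to a chat model; grounding the model in retrieved data is the core idea, whatever retrieval method is used.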

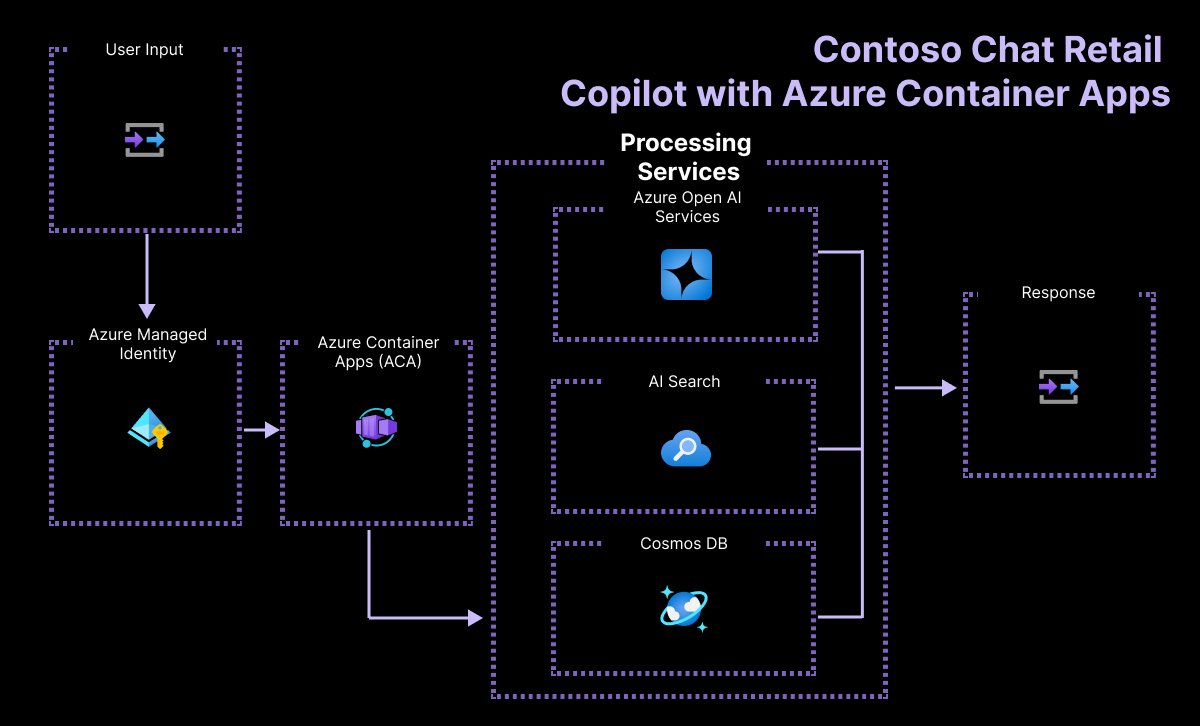

The sample illustrates the end-to-end workflow (GenAIOps) for building a RAG-based copilot code-first with Azure AI and Prompty. By exploring and deploying this sample, you will learn to:

- Ideate and iterate rapidly on app prototypes using Prompty

- Deploy and use Azure OpenAI models for chat, embeddings and evaluation

- Use Azure AI Search (indexes) and Azure CosmosDB (databases) for your data

- Evaluate chat responses for quality using AI-assisted evaluation flows

- Host the application as a FastAPI endpoint deployed to Azure Container Apps

- Provision and deploy the solution using the Azure Developer CLI

- Support Responsible AI practices with content safety & assessments

The project template provides the following features:

- Azure OpenAI for embeddings, chat, and evaluation models

- Prompty for creating and managing prompts for rapid ideation

- Azure AI Search for performing semantic similarity search

- Azure CosmosDB for storing customer orders in a NoSQL database

- Azure Container Apps for hosting the chat AI endpoint on Azure

It also comes with:

- Sample product and customer data for rapid prototyping

- Sample application code for chat and evaluation workflows

- Sample datasets and custom evaluators using prompty assets

To deploy and explore the sample, you will need:

- An active Azure subscription - Sign up for a free account here

- An active GitHub account - Sign up for a free account here

- Access to Azure OpenAI Services - Learn about Limited Access here

- Access to Azure AI Search - With Semantic Ranker (premium feature)

- Available quota for: `text-embedding-ada-002`, `gpt-35-turbo`, and `gpt-4`

We recommend deployments to swedencentral or francecentral as regions that can support all these models. In addition to the above, you will also need the ability to:

- provision Azure Monitor (free tier)

- provision Azure Container Apps (free tier)

- provision Azure CosmosDB for NoSQL (free tier)

From a tooling perspective, familiarity with the following is useful:

- Visual Studio Code (and extensions)

- GitHub Codespaces and dev containers

- Python and Jupyter Notebooks

- Azure CLI, Azure Developer CLI, and command-line usage

You have three options for setting up your development environment:

- Use GitHub Codespaces - for a prebuilt dev environment in the cloud

- Use Docker Desktop - for a prebuilt dev environment on local device

- Use Manual Setup - for control over all aspects of local env setup

We recommend going with GitHub Codespaces for the fastest start and lowest maintenance overheads. Pick one option below - click to expand the section and view the details.

- You can run this template virtually by using GitHub Codespaces. Click this button to open a web-based VS Code instance in your browser:

- Once the Codespaces environment is ready (this can take several minutes), open a new terminal in that VS Code instance and proceed to the Development step.

A related option is to use VS Code Dev Containers, which will open the project in your local Visual Studio Code editor using the Dev Containers extension:

- Install Docker Desktop (if not installed), then start it.

- Open the project in your local VS Code by clicking the button below:

- Once the VS Code window shows the project files (this can take several minutes), open a new terminal in that VS Code instance and proceed to the Development step.

- Install the required tools on your local device.

  Note for Windows users: If you are not using a container to run this sample, note that our post-provisioning hooks make use of shell scripts. While we update scripts for different local device environments, we recommend using Git Bash to run samples correctly.

- Initialize the project on your local device:

  - Create a new folder `contoso-chat` and `cd` into it.

  - Run this command to download the project template. Note that this command will initialize a git repository, so you do not need to clone this repository.

    `azd init -t contoso-chat-openai-prompty`

- Install dependencies for the project manually. Note that this is done for you automatically if you use the dev container options above.

  `cd src/api`

  `pip install -r requirements.txt`

You can now proceed to the next step - Development - where we will provision the required Azure infrastructure and deploy the application from the template using azd.

Once you've completed setting up the project (using Codespaces, Dev Containers, or a local environment), you should have a Visual Studio Code editor open, with the project files loaded and a terminal open for running commands. Let's verify that all required tools are installed.

    az version
    azd version
    prompty --version
    python --version

We can now proceed with the next steps. Click to expand for detailed instructions.
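
The version checks above can also be scripted. The following is a minimal sketch, assuming the four tools are expected on your `PATH` under the names `az`, `azd`, `prompty`, and `python`; the helper name `find_missing_tools` is hypothetical and not part of the template.

```python
import shutil

# Executable names taken from the version commands listed above.
REQUIRED_TOOLS = ["az", "azd", "prompty", "python"]


def find_missing_tools(tools=REQUIRED_TOOLS, which=shutil.which):
    """Return the subset of tools that cannot be located on PATH."""
    return [tool for tool in tools if which(tool) is None]


if __name__ == "__main__":
    missing = find_missing_tools()
    if missing:
        print("Missing tools:", ", ".join(missing))
    else:
        print("All required tools were found.")
```

This only confirms that each executable can be found; it does not check versions, so the commands above remain the authoritative check.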

1️⃣ | Authenticate With Azure

- Open a VS Code terminal and authenticate with Azure CLI. Use the `--use-device-code` option if authenticating from GitHub Codespaces. Complete the auth workflow as guided.

  `az login --use-device-code`

- Now authenticate with Azure Developer CLI in the same terminal. Complete the auth workflow as guided.

  `azd auth login --use-device-code`

- You should see: Logged in on Azure. This will create a folder under `.azure/` in your project to store the configuration for this deployment. You may have multiple azd environments if desired.

2️⃣ | Provision-Deploy with AZD

- Run `azd up` to provision infrastructure and deploy the application with one command. (You can also use `azd provision` and `azd deploy` separately if needed.)

  `azd up`

- You will be asked for a subscription for provisioning resources, an environment name that maps to the resource group, and a location for deployment. Refer to the Region Availability guidance to select the region that has the desired models and quota available.

- The `azd up` command can take 15-20 minutes to complete. Successful completion sees a `SUCCESS: ...` message posted to the console. We can now validate the outcomes.

3️⃣ | Validate the Infrastructure

- Visit the Azure Portal - look for the `rg-ENVNAME` resource group created above

- Click the `Deployments` link in the Essentials section - wait till all are completed.

- Return to the `Overview` page - you should see: 35 deployments, 15 resources

- Click on the `Azure CosmosDB` resource in the list
  - Visit the resource detail page - click "Data Explorer"
  - Verify that it has created a `customers` database with data items in it

- Click on the `Azure AI Search` resource in the list
  - Visit the resource detail page - click "Search Explorer"
  - Verify that it has created a `contoso-products` index with data items in it

- Click on the `Azure Container Apps` resource in the list
  - Visit the resource detail page - click `Application Url`
  - Verify that you see a hosted endpoint with a `Hello World` message on the page

- Next, visit the Azure AI Studio portal
  - Sign in - you should be auto-logged in with your existing Azure credential
  - Click on `All Resources` - you should see an `AIServices` resource and a `Hub` resource
  - Click the hub resource - you should see an `AI Project` resource listed
  - Click the project resource - look at the Deployments page to verify models

- ✅ | Congratulations! - Your Azure project infrastructure is ready!

4️⃣ | Validate the Deployment

- The `azd up` process also deploys the application as an Azure Container App

- Visit the ACA resource page - click on `Application Url` to view endpoint

- Add a `/docs` suffix to default deployed path - to get a Swagger API test page

- Click `Try it out` to unlock inputs - you see `question`, `customer_id`, `chat_history`
  - Enter `question` = "Tell me about the waterproof tents"
  - Enter `customer_id` = 2
  - Enter `chat_history` = []
  - Click Execute to see results: You should see a valid response with a list of matching tents from the product catalog with additional details.

- ✅ | Congratulations! - Your Chat AI Deployment is working!

We can think about two levels of testing - manual validation and automated evaluation. The first is interactive, using a single test prompt to validate the prototype as we iterate. The second is code-driven, using a test prompt dataset to assess quality and safety of prototype responses for a diverse set of prompt inputs - and score them for criteria like coherence, fluency, relevance and groundedness based on built-in or custom evaluators.

1️⃣ | Manual Testing (interactive)

The Contoso Chat application is implemented as a FastAPI application that can be deployed to a hosted endpoint in Azure Container Apps. The API implementation is defined in src/api/main.py and currently exposes 2 routes:

- `/` - which shows the default "Hello World" message
- `/api/create_request` - which is our chat AI endpoint for test prompts

To test locally, we run the FastAPI dev server, then use the Swagger endpoint at the `/docs` route to test the locally-served endpoint in the same way we tested the deployed version.

- Change to the root folder of the repository

- Run `fastapi dev ./src/api/main.py` - it should launch a dev server

- Click `Open in browser` to preview the dev server page in a new tab
  - You should see: "Hello, World" with route at `/`

- Add `/docs` to the end of the path URL in the browser tab
  - You should see: "FASTAPI" page with 2 routes listed
  - Click the `POST` route then click `Try it out` to unlock inputs

- Try a test input
  - Enter `question` = "Tell me about the waterproof tents"
  - Enter `customer_id` = 2
  - Enter `chat_history` = []
  - Click Execute to see results: You should see a valid response with a list of matching tents from the product catalog with additional details.

- ✅ | Congratulations! - You successfully tested the app locally
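
The same test input can be sent from a script instead of the Swagger page. The sketch below only builds the request and makes two assumptions that the Swagger page should be used to confirm: that the three inputs are passed as query parameters on a `POST` to `/api/create_request`, and that the dev server listens on `http://localhost:8000`. The helper name `build_chat_request` is hypothetical.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request


def build_chat_request(base_url, question, customer_id, chat_history=None):
    """Build a POST request for the chat route (assumed query-parameter inputs)."""
    params = urlencode(
        {
            "question": question,
            "customer_id": customer_id,
            # The history is serialized as a JSON string, e.g. "[]" when empty.
            "chat_history": json.dumps(chat_history or []),
        }
    )
    url = f"{base_url.rstrip('/')}/api/create_request?{params}"
    return Request(url, method="POST")


# Assumed local dev server address; replace with the deployed Application Url.
request = build_chat_request(
    "http://localhost:8000", "Tell me about the waterproof tents", 2
)
```

Passing `request` to `urllib.request.urlopen` sends it. If the Swagger page shows a JSON request body rather than query parameters, adjust the helper accordingly.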

2️⃣ | AI-Assisted Evaluation (code-driven)

Testing a single prompt is good for rapid prototyping and ideation. But once we have our application designed, we want to validate the quality and safety of responses against diverse test prompts. The sample shows you how to do AI-Assisted Evaluation using custom evaluators implemented with Prompty.

- Visit the `src/api/evaluators/` folder

- Open the `evaluate-chat-flow.ipynb` notebook - "Select Kernel" to activate

- Clear inputs and then `Run all` - starts the evaluation flow with the `data.jsonl` test dataset

- Once evaluation completes (takes 10+ minutes), you should see
  - `results.jsonl` = the chat model's responses to test inputs
  - `evaluated_results.jsonl` = the evaluation model's scoring of the responses
  - tabular results = coherence, fluency, relevance, groundedness scores
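
To summarize a scored results file outside the notebook, something like the following sketch could be used. It assumes each line of the file is a JSON object with numeric fields named `coherence`, `fluency`, `relevance`, and `groundedness`; those field names are an assumption based on the criteria listed above, so check the actual file before relying on them.

```python
import json
from statistics import mean

# Assumed field names, taken from the evaluation criteria named in this README.
METRICS = ("coherence", "fluency", "relevance", "groundedness")


def average_scores(jsonl_lines, metrics=METRICS):
    """Average each metric over the JSONL rows that contain a numeric value for it."""
    values = {metric: [] for metric in metrics}
    for line in jsonl_lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        row = json.loads(line)
        for metric in metrics:
            score = row.get(metric)
            if isinstance(score, (int, float)):
                values[metric].append(score)
    # Metrics with no numeric scores are left out of the summary.
    return {metric: mean(scores) for metric, scores in values.items() if scores}
```

For example, `with open("evaluated_results.jsonl") as f: print(average_scores(f))` prints one average per metric found in the file.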

Want to get a better understanding of how custom evaluators work? Check out the src/api/evaluators/custom_evals folder and explore the relevant Prompty assets and their template instructions.

The Prompty tooling also has support for built-in tracing for observability. Look for a .runs/ subfolder to be created during the evaluation run, with .tracy files containing the trace data. Click one of them to get a trace-view display in Visual Studio Code to help you drill down or debug the interaction flow. This is a new feature so look for more updates in usage soon.
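
To find the most recent traces without browsing the folder by hand, a small helper along these lines may be useful. It is a sketch that assumes only what is stated above (a `.runs/` folder containing `.tracy` files) and makes no assumption about the file contents; the function name `latest_traces` is hypothetical.

```python
from pathlib import Path


def latest_traces(runs_dir=".runs", limit=5):
    """Return up to `limit` .tracy files under runs_dir, newest first."""
    root = Path(runs_dir)
    if not root.is_dir():
        return []  # no evaluation run has produced traces yet
    traces = sorted(
        root.rglob("*.tracy"),
        key=lambda path: path.stat().st_mtime,
        reverse=True,
    )
    return traces[:limit]


if __name__ == "__main__":
    for trace in latest_traces():
        print(trace)
```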

The solution is deployed using the Azure Developer CLI. The `azd up` command effectively calls `azd provision` and then `azd deploy`, allowing you to provision infrastructure and deploy the application with a single command. Subsequent calls to `azd up` (e.g., after making changes to the application) should be faster, re-deploying the application and updating infrastructure provisioning only if required. You can then test the deployed endpoint as described earlier.

This template currently uses the following models: `gpt-35-turbo`, `gpt-4`, and `text-embedding-ada-002`, which may not be available in all Azure regions, or may lack sufficient quota for your subscription in supported regions. Check for up-to-date region availability and select a region during deployment accordingly.

We recommend using francecentral

Pricing for services may vary by region and usage and exact costs are hard to determine. You can estimate the cost of this project's architecture with Azure's pricing calculator with these services:

- Azure OpenAI - Standard tier, GPT-35-turbo and Ada models. See Pricing

- Azure AI Search - Basic tier, Semantic Ranker enabled. See Pricing

- Azure Cosmos DB for NoSQL - Serverless, Free Tier. See Pricing

- Azure Monitor - Serverless, Free Tier. See Pricing

- Azure Container Apps - Serverless, Free Tier. See Pricing

This template uses Managed Identity for authentication with key Azure services including Azure OpenAI, Azure AI Search, and Azure Cosmos DB. Applications can use managed identities to obtain Microsoft Entra tokens without having to manage any credentials. This also removes the need for developers to manage these credentials themselves and reduces their complexity.

Additionally, we have added a GitHub Action tool that scans the infrastructure-as-code files and generates a report containing any detected issues. To ensure best practices, we recommend that anyone creating solutions based on our templates enable the GitHub secret scanning setting in their repo.

The sample has a `docs/workshop` folder with step-by-step guidance for developers, to help you deconstruct the codebase and understand how to provision, ideate, build, evaluate, and deploy the application yourself, with your own data.

- The workshop may be offered as an instructor-guided option (e.g., on Microsoft AI Tour)

- The workshop can be completed at home as a self-paced lab with your own subscription.

- View Workshop Online - view a pre-built workshop version in your browser

- View Workshop Locally - The workshop is built using MkDocs. To preview it locally:
  - install mkdocs: `pip install mkdocs-material`
  - switch to folder: `cd docs/workshop`
  - launch preview: `mkdocs serve`
  - open browser to the preview URL specified

Have issues or questions about the workshop? Submit a new issue with a documentation tag.

- Prompty Documentation

- Azure AI Studio Documentation

- Develop AI Apps using Azure AI Services

- Azure AI Templates with Azure Developer CLI

This project has adopted the Microsoft Open Source Code of Conduct. Learn more here:

- Microsoft Open Source Code of Conduct

- Microsoft Code of Conduct FAQ

- Contact [email protected] with questions or concerns

For more information see the Code of Conduct FAQ or contact [email protected] with any additional questions or comments.

This project follows the responsible AI guidelines and best practices below. Please review them before using this project:

- Microsoft Responsible AI Guidelines

- Responsible AI practices for Azure OpenAI models

- Safety evaluations transparency notes