ICCV 2023 [Paper] [Demo on HF 🤗] [Colab Demo]

Authors Yunji Kim1, Jiyoung Lee1, Jin-Hwa Kim1, Jung-Woo Ha1, Jun-Yan Zhu2

1NAVER AI Lab, 2Carnegie Mellon University

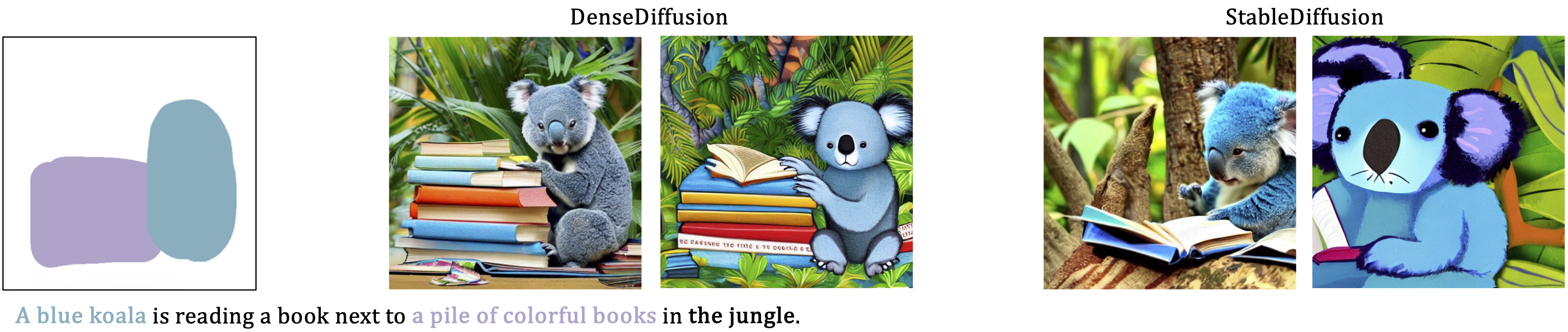

Existing text-to-image diffusion models struggle to synthesize realistic images given dense captions, where each text prompt provides a detailed description for a specific image region. To address this, we propose DenseDiffusion, a training-free method that adapts a pre-trained text-to-image model to handle such dense captions while offering control over the scene layout. We first analyze the relationship between generated images' layouts and the pre-trained model's intermediate attention maps. Next, we develop an attention modulation method that guides objects to appear in specific regions according to layout guidance. Without requiring additional fine-tuning or datasets, we improve image generation performance given dense captions in both automatic and human evaluation scores. In addition, we achieve visual results of similar quality to those of models specifically trained with layout conditions.

Our goal is to improve the text-to-image model's ability to reflect textual and spatial conditions without fine-tuning.
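As a rough illustration of what attention modulation means here, the sketch below adds a layout-dependent bias to cross-attention scores before the softmax, so that a pixel attends more to the text tokens of the segment covering it. This is a simplified sketch and not the implementation in this repository: the function name `modulated_cross_attention`, the `region_mask` layout, and the constant bias of `±w_c` are assumptions made for illustration, and any scheduling or scaling the actual method applies is omitted.

```python
import numpy as np


def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def modulated_cross_attention(q, k, region_mask, w_c=1.0):
    """Cross-attention probabilities with a layout bias (illustrative only).

    q:           (n_pixels, d) image queries
    k:           (n_tokens, d) text keys
    region_mask: (n_pixels, n_tokens), 1 where the token's segment covers the pixel
    w_c:         strength of the modulation; 0 gives plain attention
    """
    d = q.shape[-1]
    scores = q @ k.T / np.sqrt(d)
    # Raise scores for pixel/token pairs inside the same segment, lower the rest.
    bias = w_c * (2.0 * region_mask - 1.0)
    return softmax(scores + bias, axis=-1)


# Toy example: 4 pixels, 3 text tokens, 8-dimensional features.
rng = np.random.default_rng(0)
q = rng.normal(size=(4, 8))
k = rng.normal(size=(3, 8))

region_mask = np.zeros((4, 3))
region_mask[:2, 0] = 1.0  # token 0 describes the segment covering pixels 0-1
region_mask[2:, 1] = 1.0  # token 1 describes the segment covering pixels 2-3

plain = modulated_cross_attention(q, k, region_mask, w_c=0.0)
modulated = modulated_cross_attention(q, k, region_mask, w_c=2.0)
```

In this toy setup, each pixel's attention to the token of its own segment is higher in `modulated` than in `plain`, while each row still sums to one.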

We formally define our condition as a set of

- Put your Hugging Face Hub access token here.

- Run the Gradio app.

python gradio_app.py

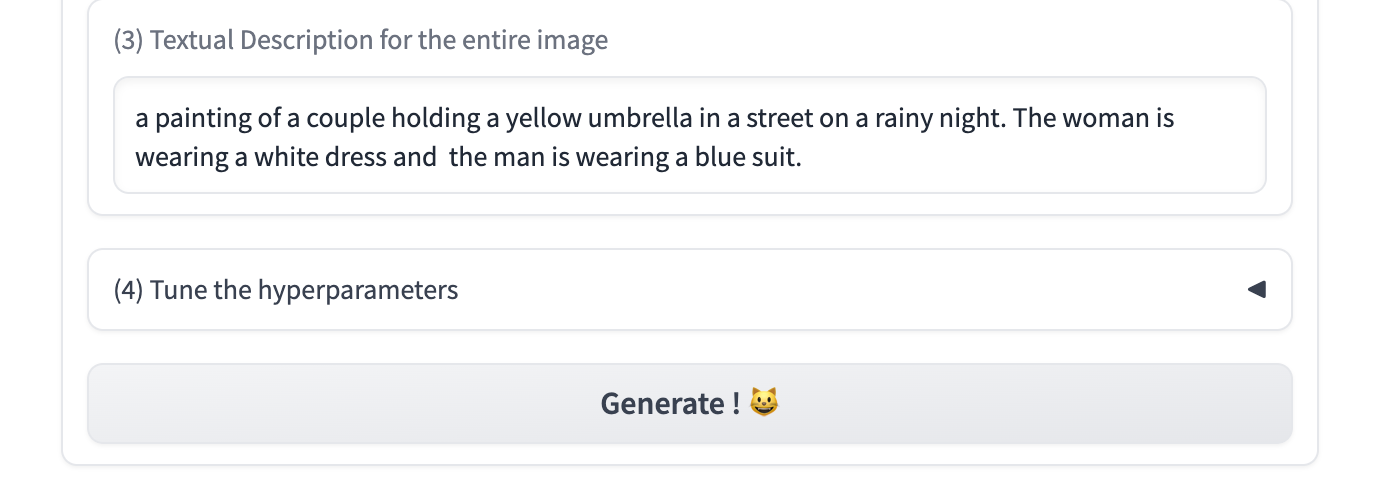

- Create the image layout.

- Label each segment with a text prompt.

- Adjust the full text. The default full text is automatically concatenated from each segment's text. The default works well, but refining the full text can further improve the result.

- Check the generated images, and tune the hyperparameters if needed.

  - wc: The degree of attention modulation at cross-attention layers.

  - ws: The degree of attention modulation at self-attention layers.
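The default full text mentioned in the steps above can be pictured with the short sketch below. It only illustrates the idea of concatenating per-segment prompts; the function name `default_full_text` and the `", "` separator are assumptions, not necessarily what the demo does.

```python
def default_full_text(segment_prompts, sep=", "):
    """Join the non-empty per-segment prompts into one caption (illustrative)."""
    cleaned = [p.strip() for p in segment_prompts]
    return sep.join(p for p in cleaned if p)


segment_prompts = ["a corgi", "a red sofa", "a wooden floor"]
full_text = default_full_text(segment_prompts)
print(full_text)  # a corgi, a red sofa, a wooden floor
```

Editing the resulting string by hand, for example to add a global style or to describe how the segments relate, corresponds to the "adjust the full text" step.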

We share the benchmark used in our model development and evaluation here. The code for preprocessing segment conditions is here.

@inproceedings{densediffusion,
  title={Dense Text-to-Image Generation with Attention Modulation},
  author={Kim, Yunji and Lee, Jiyoung and Kim, Jin-Hwa and Ha, Jung-Woo and Zhu, Jun-Yan},
  year={2023},
  booktitle={ICCV}
}

The demo was developed referencing this source code. Thanks for the inspiring work! 🙏