Yuanzhi Liu, Yujia Fu†, Minghui Qin†, Yufeng Xu†, Baoxin Xu, et al. († Contributed equally)

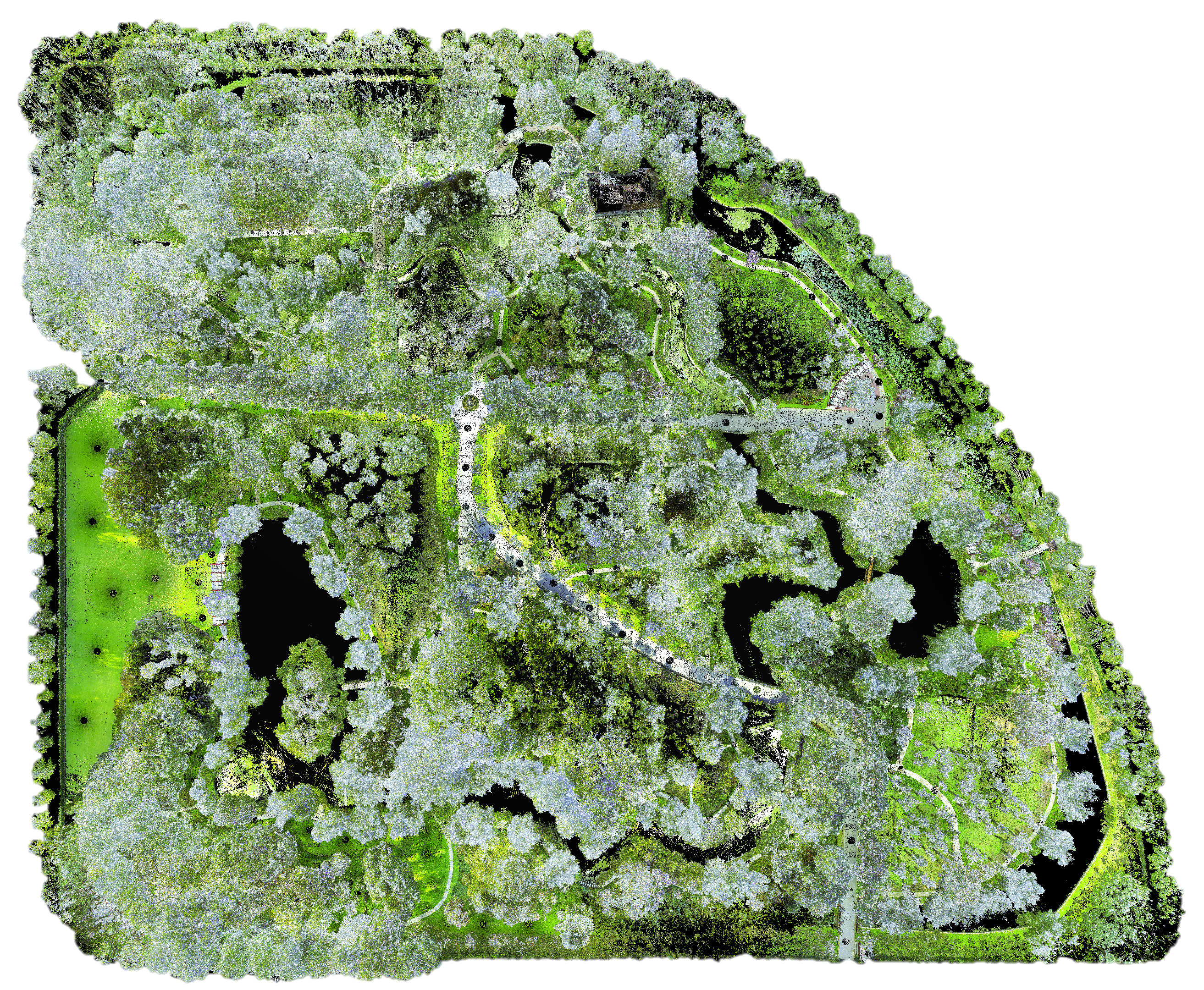

The rapid development of mobile robotics and autonomous navigation over the years has been largely driven by public datasets for testing and improving algorithms, for example in sensor odometry and SLAM tasks. Impressive demos and benchmark scores have emerged, which may suggest that existing navigation techniques are mature. However, these results are primarily based on testing in moderately structured scenarios. When transitioning to challenging unstructured environments, especially GNSS-denied, texture-monotonous, and densely vegetated natural fields, their performance can hardly be sustained at a high level and requires further validation and improvement. To bridge this gap, we build a novel robot navigation dataset in a luxuriant botanic garden of more than 48,000 m². Comprehensive sensors are used, including gray and RGB stereo cameras, spinning and MEMS 3D LiDARs, and low-cost and industrial-grade IMUs, all of which are well calibrated and hardware-synchronized. An all-terrain wheeled robot is employed for data collection, traversing thick woods, riversides, narrow trails, bridges, and grasslands, which are scarce in previous resources. This yields 33 short and long sequences, forming 17.1 km of trajectories in total. Both highly accurate ego-motion and 3D map ground truth are provided, along with finely annotated vision semantics. We firmly believe that our dataset can advance robot navigation and sensor fusion research to a higher level.

- We build a novel multi-sensor dataset in a botanic garden of more than 48,000 m², with 33 long and short sequences and 17.1 km of trajectories in total, containing dense and diverse natural elements that are scarce in previous resources.

- We employ comprehensive sensors, including high-resolution, high-rate gray and RGB stereo cameras, spinning and MEMS 3D LiDARs, and low-cost and industrial-grade IMUs, supporting a wide range of applications. Through careful system development, we have achieved highly precise hardware synchronization. Both the sensor availability and the synchronization quality are at the top level of this field.

- We provide highly precise ground truth for both the 3D map and the trajectories, obtained through dedicated surveying work and an advanced map-based localization algorithm. We also provide dense vision semantics labeled by experienced annotators. This is the first field robot navigation dataset that provides such comprehensive and high-quality reference data.

| Sensor/Device | Model | Specification |
|---|---|---|
| Gray Stereo | DALSA M1930 | 1920×1200, 2/3", 71°×56° FoV, 40 Hz |
| RGB Stereo | DALSA C1930 | 1920×1200, 2/3", 71°×56° FoV, 40 Hz |
| LiDAR | Velodyne VLP16 | 16 channels, 360°×30° FoV, ±3 cm @ 100 m, 10 Hz |
| MEMS LiDAR | Livox AVIA | 70°×77° FoV, ±2 cm @ 200 m, 10 Hz |
| D-GNSS/INS | Xsens MTi-680G | 9-axis, 400 Hz, GNSS not in use |
| Consumer IMU | BMI088 | 6-axis, 200 Hz, Livox built-in |
| Wheel Encoder | Scout V1.0 | 4WD, 3-axis, 200 Hz |
| GT 3D Scanner | Leica RTC360 | 130 m range, 1 mm + 10 ppm accuracy |

To ensure global accuracy, we have not used any mobile-mapping-based techniques (e.g., SLAM). Instead, we employed a tactical-grade stationary 3D laser scanner and conducted a qualified surveying and mapping job with professional colleagues from the College of Surveying and Geo-Informatics, Tongji University. The scanner is the Leica RTC360, which outputs very dense, colored point clouds with a 130 m scan radius and mm-level ranging accuracy, as specified in the table above. The survey took 20 workdays in total and more than 900 individual scans, and achieved an accuracy of 11 mm (std.) according to Leica's report.
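As a rough illustration of what the scanner's "1 mm + 10 ppm" specification means, the sketch below evaluates it at a given distance. It assumes the usual reading of such a spec (a constant term plus a term proportional to the measured distance); the function name and defaults are illustrative and not part of the dataset tools. This is the per-measurement ranging spec, not the 11 mm map accuracy reported for the full survey.

```python
def range_error_mm(distance_m, constant_mm=1.0, ppm=10.0):
    """Nominal ranging error in mm for an 'a mm + b ppm' specification.

    Assumption: the ppm term scales linearly with distance, i.e.
    error = a + b * 1e-6 * distance, with distance converted to mm.
    """
    distance_mm = distance_m * 1000.0
    return constant_mm + ppm * 1e-6 * distance_mm


if __name__ == "__main__":
    # Evaluate the spec at a few distances up to the 130 m scan radius.
    for d in (10.0, 50.0, 130.0):
        print(f"{d:6.1f} m -> {range_error_mm(d):.2f} mm")
```

Under this reading, the distance-dependent term stays small even at the maximum range, so the constant term dominates for most scans.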

Some survey photos and registration works:

Our dataset consists of 33 data sequences in total. The sequence information is shown below, including statistics of LiDAR scans, trajectory length, duration, images, and intersections. Their trajectory images can be found here.

At present, we have comprehensively evaluated state-of-the-art (SOTA) algorithms on 7 sample sequences. You can directly download their rosbags and raw files for benchmarking purposes. More sequences can be requested from Yuanzhi Liu via e-mail.

| Stat/Sequence | 1005-00 | 1005-01 | 1005-07 | 1006-01 | 1008-03 | 1018-00 | 1018-13 |
|---|---|---|---|---|---|---|---|
| Size/GB ¹ | 66.8 | 49.0 | 59.8 | 83.1 | 71.0 | 13.0 | 20.9 |
| rosbag ² | onedrive | onedrive | onedrive | onedrive | onedrive | onedrive | onedrive |
| rosbag | baidu | baidu | baidu | baidu | baidu | baidu | baidu |
| imagezip | onedrive | onedrive | onedrive | onedrive | onedrive | onedrive | onedrive |
| GT-pose | onedrive | onedrive | onedrive | onedrive | onedrive | onedrive | onedrive |

| Stat/Sequence | 1005-05 | 1006-03 | 1008-01 |
|---|---|---|---|
| Size/GB | 31.4/6.2 | 29.6/5.9 | 34.4/6.7 |
| VLIO-rosbag | onedrive baidu | onedrive baidu | onedrive baidu |
| LIO-rosbag | onedrive baidu | onedrive baidu | onedrive baidu |
| GT-pose | onedrive baidu | onedrive baidu | onedrive baidu |

The rostopics and corresponding message types are listed below:

| ROS Topic | Message Type | Description |
|---|---|---|
| /dalsa_rgb/left/image_raw | sensor_msgs/Image | Left RGB camera |
| /dalsa_rgb/right/image_raw | sensor_msgs/Image | Right RGB camera |
| /dalsa_gray/left/image_raw | sensor_msgs/Image | Left Gray camera |
| /dalsa_gray/right/image_raw | sensor_msgs/Image | Right Gray camera |
| /velodyne_points | sensor_msgs/PointCloud2 | Velodyne VLP16 LiDAR |
| /livox/lidar | livox_ros_driver/CustomMsg | Livox AVIA LiDAR |
| /imu/data | sensor_msgs/Imu | Xsens IMU |
| /livox/imu ³ | sensor_msgs/Imu | Livox BMI088 IMU |
| /gt_poses | geometry_msgs/PoseStamped | Ground truth poses |

¹ Imagezip and no-vision rosbag size.

² The rosbags contain downsampled vision data (960×600 @ 10 Hz) to ease downloads. Full-resolution, full-rate frames (1920×1200 @ 40 Hz) are available in the raw imagezips.

³ The accelerometer of the BMI088 IMU reports in units of g (a gravity scale of 1). To use this IMU, multiply the accelerometer data by 9.8 to obtain acceleration in meters per second squared (m/s²). However, some algorithms designed specifically for Livox LiDAR may not require this multiplication (e.g., Fast-LIO2 and R3LIVE).
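The BMI088 scaling described in the footnote can be sketched as follows. This is a minimal example, not part of the provided toolbox: the helper names are hypothetical, the factor 9.8 is the one quoted above, and the bag reader assumes a ROS 1 environment where the `rosbag` Python package is installed (it is imported lazily so the scaling helper works without ROS).

```python
GRAVITY_MS2 = 9.8  # scale factor quoted in the footnote (g -> m/s^2)


def accel_g_to_ms2(accel_g, gravity=GRAVITY_MS2):
    """Convert an (x, y, z) accelerometer sample from g to m/s^2."""
    return tuple(a * gravity for a in accel_g)


def read_livox_imu(bag_path, topic="/livox/imu"):
    """Yield (timestamp_s, accel_ms2) for each Livox IMU message in a bag.

    Assumption: ROS 1 with the `rosbag` Python API available.
    """
    import rosbag  # imported here so the module loads without ROS

    with rosbag.Bag(bag_path, "r") as bag:
        for _, msg, _ in bag.read_messages(topics=[topic]):
            a = msg.linear_acceleration
            stamp = msg.header.stamp.to_sec()
            yield stamp, accel_g_to_ms2((a.x, a.y, a.z))


if __name__ == "__main__":
    # A sensor at rest with z pointing up reads roughly (0, 0, 1) g.
    print(accel_g_to_ms2((0.0, 0.0, 1.0)))
```

Skip the conversion for pipelines that already expect Livox's native units, such as the Livox-specific algorithms mentioned in the footnote.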

Our ground truth trajectories were generated within a survey-grade 3D map. Thanks to our dedicated survey work, the map has ~1 cm precision in global coordinates, ensuring cm-level precision for robot localization. All 33 trajectories can be found in the GT_traj folder.

We have tested the performance of visual (ORB-SLAM3), visual-inertial (ORB-SLAM3, VINS-Mono), LiDAR (LOAM), LiDAR-inertial (Fast-LIO2), and visual-LiDAR-inertial fusion (LVI-SAM, R3LIVE) systems on the above 7 sample sequences. Check our Leaderboard for detailed results.

To simplify testing, we have provided the calibration and config files for the state-of-the-art algorithms, which can be found in the calib and config folders.

Testing of LVI-SAM on 1005-00 sequence:

All annotations are provided in LabelMe format, which supports future reproduction. We expect these data to facilitate robust motion estimation and semantic mapping. The number of labeled images, as well as the semantic classes and their distribution, are shown below.

| Sequence | 1005-05 | 1005-07 | 1006-01 | 1006-03 | 1008-01 |
|---|---|---|---|---|---|
| Labeled Images | 233 | 206 | 270 | 216 | 256 |

Our dataset is captured in rosbag and raw formats. For convenience, we provide a toolbox to convert between the different structures. Check the rosbag_tools folder for usage.

The semantics are labeled in LabelMe JSON format. For convenience, we provide a toolbox to convert them to PASCAL VOC and MS COCO formats. Check the semantic_tools folder for usage.
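For a quick look at the annotations before converting them, the sketch below counts labeled shapes per class. It assumes the standard LabelMe JSON layout (a top-level "shapes" list whose entries carry a "label" field); the class names in the example are hypothetical placeholders, and this helper is not part of semantic_tools.

```python
import json
from collections import Counter


def load_labelme(path):
    """Load one LabelMe annotation file as a dict."""
    with open(path, "r", encoding="utf-8") as f:
        return json.load(f)


def count_labels(labelme_docs):
    """Count annotated shapes per class over an iterable of LabelMe dicts."""
    counts = Counter()
    for doc in labelme_docs:
        for shape in doc.get("shapes", []):
            counts[shape["label"]] += 1
    return counts


if __name__ == "__main__":
    # Inline stand-in for a real annotation file; labels are hypothetical.
    example = json.loads("""
    {
      "imagePath": "example.png",
      "shapes": [
        {"label": "tree",  "shape_type": "polygon", "points": [[0, 0], [4, 0], [4, 4]]},
        {"label": "grass", "shape_type": "polygon", "points": [[0, 5], [9, 5], [9, 9]]},
        {"label": "tree",  "shape_type": "polygon", "points": [[5, 0], [9, 0], [9, 4]]}
      ]
    }
    """)
    for label, n in count_labels([example]).most_common():
        print(label, n)
```

To process a real sequence, pass `load_labelme(p)` for each annotation file path to `count_labels`.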

We have designed a concise toolbox for camera-LiDAR calibration based on several 2D checkerboards. Check the calibration_tools folder for usage.

We recommend using the open-source tool EVO for algorithm evaluation. Our ground truth poses are provided in TUM format, consisting of timestamps, translations x-y-z, and quaternions x-y-z-w, which is concise and enables trajectory alignment based on time correspondences. Note that the GT poses track the VLP16 frame, so you must transform your poses to the VLP16 frame using the hand-eye formula AX=XB before evaluation.
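The frame change implied by AX=XB can be sketched in plain Python as B = X⁻¹AX, where A is an estimated pose of your sensor and B is the corresponding VLP16 pose. The sketch assumes X is the extrinsic that maps VLP16-frame coordinates into your sensor frame (take it from the calib folder) and that poses are expressed relative to the first frame; all function names are illustrative, not part of the provided tools.

```python
def q_mul(a, b):
    """Hamilton product of quaternions stored as (x, y, z, w)."""
    ax, ay, az, aw = a
    bx, by, bz, bw = b
    return (
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
        aw * bw - ax * bx - ay * by - az * bz,
    )


def q_conj(q):
    """Conjugate (inverse for unit quaternions)."""
    x, y, z, w = q
    return (-x, -y, -z, w)


def rotate(q, v):
    """Rotate 3-vector v by unit quaternion q."""
    x, y, z, _ = q_mul(q_mul(q, (v[0], v[1], v[2], 0.0)), q_conj(q))
    return (x, y, z)


def compose(p1, p2):
    """Compose rigid transforms given as (translation, quaternion): p1 * p2."""
    (t1, q1), (t2, q2) = p1, p2
    r = rotate(q1, t2)
    return ((t1[0] + r[0], t1[1] + r[1], t1[2] + r[2]), q_mul(q1, q2))


def inverse(p):
    """Inverse of a rigid transform (translation, quaternion)."""
    t, q = p
    qi = q_conj(q)
    r = rotate(qi, t)
    return ((-r[0], -r[1], -r[2]), qi)


def parse_tum_line(line):
    """Parse 'timestamp tx ty tz qx qy qz qw' into (stamp, (t, q))."""
    v = [float(s) for s in line.split()]
    return v[0], ((v[1], v[2], v[3]), (v[4], v[5], v[6], v[7]))


def format_tum_line(stamp, pose):
    """Format (stamp, (t, q)) back into a TUM trajectory line."""
    t, q = pose
    return " ".join(f"{v:.9f}" for v in (stamp, *t, *q))


def to_vlp16(pose_sensor, x_sensor_lidar):
    """Apply B = X^-1 * A * X to express a sensor pose in the VLP16 frame."""
    return compose(compose(inverse(x_sensor_lidar), pose_sensor), x_sensor_lidar)


if __name__ == "__main__":
    # Hypothetical extrinsic: LiDAR origin 1 m along the sensor's x axis.
    X = ((1.0, 0.0, 0.0), (0.0, 0.0, 0.0, 1.0))
    stamp, A = parse_tum_line("0.0 0.5 0.0 0.0 0.0 0.0 0.0 1.0")
    print(format_tum_line(stamp, to_vlp16(A, X)))
```

Apply `to_vlp16` to every line of your estimated trajectory, write the result with `format_tum_line`, and then compare the converted file against the GT poses in EVO.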

The authors would like to thank the colleagues from Tongji University and Sun Yat-sen University for their assistance in the rigorous survey work and post-processing, especially Xiaohang Shao, Chen Chen, and Kunhua Liu. We also thank A/Prof. Hangbin Wu for his guidance in data collection. We further acknowledge Grace Xu from Livox for support with the Avia LiDAR and Claude Ng from Leica for support with high-definition surveying, and we appreciate the colleagues at Appen for their professional work on visual semantic annotation. Yuanzhi Liu would like to thank Chenbo Gong for the scene preparation work, and Jingxin Dong for her job logging and photographs during our data collection.

This work was supported by the National Key R&D Program of China under Grant 2018YFB1305005.

Feb 6, 2023 Open the GitHub website: https://github.com/robot-pesg/BotanicGarden

Feb 10, 2023 Rosbag tools open-sourced

Feb 13, 2023 Semantic tools open-sourced

Feb 14, 2023 Calibration params available

Feb 17, 2023 Calibration tools open-sourced

Feb 18, 2023 Paper submitted to IEEE Robotics and Automation Letters (RA-L)

Sep 18, 2023 Trajectories info updated

Sep 20, 2023 More sequences available

Dec 31, 2023 Update Leaderboard and invite more algorithms to join

This dataset is provided for academic purposes. If you encounter any technical problems, please open an issue or contact <Yuanzhi Liu: [email protected]> and <Yufeng Xu: [email protected]>.

If our dataset helps your research, please cite:

@ARTICLE{liu2023botanicgarden,
  author={Liu, Yuanzhi and Fu, Yujia and Qin, Minghui and Xu, Yufeng and Xu, Baoxin and Chen, Fengdong and Goossens, Bart and Sun, Poly Z.H. and Yu, Hongwei and Liu, Chun and Chen, Long and Tao, Wei and Zhao, Hui},
  journal={IEEE Robotics and Automation Letters},
  title={BotanicGarden: A High-Quality Dataset for Robot Navigation in Unstructured Natural Environments},
  year={2024},
  volume={9},
  number={3},
  pages={2798-2805},
  doi={10.1109/LRA.2024.3359548}}