✨

TangoFlux: Super Fast and Faithful Text to Audio Generation with Flow Matching and Clap-Ranked Preference Optimization

✨✨✨


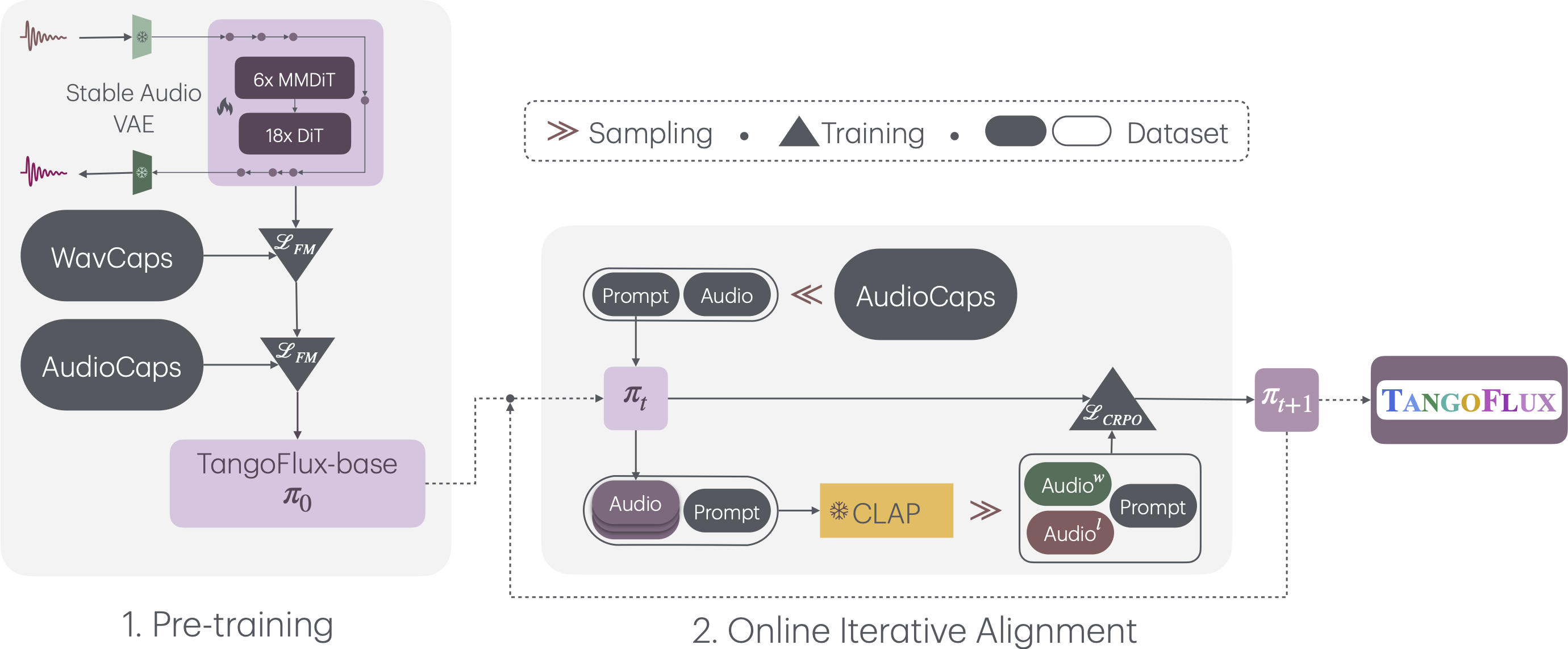

TangoFlux consists of FluxTransformer blocks, which are Diffusion Transformers (DiT) and Multimodal Diffusion Transformers (MMDiT) conditioned on a textual prompt and a duration embedding, and it generates 44.1kHz audio up to 30 seconds long. TangoFlux learns a rectified flow trajectory to an audio latent representation encoded by a variational autoencoder (VAE). The TangoFlux training pipeline consists of three stages: pre-training, fine-tuning, and preference optimization with CRPO. In particular, CRPO iteratively generates new synthetic data and constructs preference pairs for preference optimization using a DPO loss for flow matching.
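To make the rectified flow idea concrete, here is a minimal, dependency-free sketch of the straight-line interpolation, its velocity target, and Euler sampling. It is an illustration only, not the TangoFlux training code: the function names are hypothetical, the latents are plain Python lists, and the time convention (noise at t=0, data at t=1) is an assumption.

```python
def interpolate(x0, x1, t):
    """Point on the straight line from noise x0 (t=0) to data x1 (t=1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]


def target_velocity(x0, x1):
    """Velocity of that straight path, constant in t: d x_t / dt = x1 - x0."""
    return [b - a for a, b in zip(x0, x1)]


def flow_matching_loss(predict_velocity, x0, x1, t):
    """Mean squared error between predicted and target velocity at x_t."""
    x_t = interpolate(x0, x1, t)
    v_pred = predict_velocity(x_t, t)  # stand-in for the conditioned network
    v_true = target_velocity(x0, x1)
    return sum((p - q) ** 2 for p, q in zip(v_pred, v_true)) / len(v_true)


def euler_sample(predict_velocity, x0, steps):
    """Integrate dx/dt = v(x, t) from t=0 to t=1 with fixed-size Euler steps."""
    x = list(x0)
    dt = 1.0 / steps
    for i in range(steps):
        v = predict_velocity(x, i * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x
```

In this picture, the number of Euler steps plays the role of the `steps` argument used at inference time; a model would replace `predict_velocity` with a network conditioned on the prompt and duration.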

🚀 TangoFlux can generate up to 30 seconds of 44.1kHz stereo audio in about 3 seconds on an A40 GPU.

We use the accelerate package from HuggingFace for multi-GPU training. Run accelerate config from the terminal and set up your run configuration by answering the questions asked. We have placed the default accelerator config in the configs folder. Please specify the path to your training files in configs/tangoflux_config.yaml. Samples of train.json and val.json have been provided. Replace them with your own audio.

tangoflux_config.yaml defines the training file paths and model hyperparameters:

CUDA_VISIBLE_DEVICES=0,1 accelerate launch --config_file='configs/accelerator_config.yaml' src/train.py --checkpointing_steps="best" --save_every=5 --config='configs/tangoflux_config.yaml'

To perform DPO training, modify the training files so that each data point contains "chosen", "reject", "caption", and "duration" fields. Please specify the path to your training files in configs/tangoflux_config.yaml. An example has been provided in train_dpo.json. Replace it with your own audio.
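As a rough illustration of how one such data point could be assembled from ranked candidates, the sketch below picks the highest-scoring audio as "chosen" and the lowest-scoring as "reject". Only the four key names come from this README; the helper name, the file paths, the scores, and the best-versus-worst pairing rule are assumptions, so check train_dpo.json for the exact value format expected by the training script.

```python
import json


def build_preference_record(caption, duration, candidates):
    """Build one DPO data point from (audio_path, score) candidates.

    A higher score is taken to mean better text-audio alignment, for
    example a CLAP similarity. The scores here are supplied by the caller.
    """
    if len(candidates) < 2:
        raise ValueError("need at least two candidates to form a pair")
    ranked = sorted(candidates, key=lambda c: c[1])
    return {
        "chosen": ranked[-1][0],  # best-aligned candidate
        "reject": ranked[0][0],   # worst-aligned candidate
        "caption": caption,
        "duration": duration,
    }


# Hypothetical paths and scores, for illustration only.
record = build_preference_record(
    "Hammer slowly hitting the wooden table",
    10,
    [("gen_0.wav", 0.31), ("gen_1.wav", 0.52), ("gen_2.wav", 0.18)],
)
print(json.dumps(record, indent=2))
```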

CUDA_VISIBLE_DEVICES=0,1 accelerate launch --config_file='configs/accelerator_config.yaml' src/train_dpo.py --checkpointing_steps="best" --save_every=5 --config='configs/tangoflux_config.yaml'

Download the TangoFlux model and generate audio from a text prompt.

TangoFlux can generate audio up to 30 seconds long by passing a duration variable to the model.generate function. Please note that duration should be strictly greater than 1 and less than 30.

import torchaudio

from tangoflux import TangoFluxInference

from IPython.display import Audio

model = TangoFluxInference(name='declare-lab/TangoFlux')

audio = model.generate('Hammer slowly hitting the wooden table', steps=50, duration=10)

Audio(data=audio, rate=44100)

Our evaluation shows that inference with 50 steps yields the best results. CFG scales of 3.5, 4, and 4.5 yield similar output quality. For faster inference, consider setting steps to 25, which yields similar audio quality.
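The duration limit and the step recommendations above can be wrapped in a small helper. This is a convenience sketch, not part of the tangoflux package: `generation_kwargs` and its `fast` flag are hypothetical names, while `steps` and `duration` are the keyword arguments used in the example above.

```python
def generation_kwargs(duration, fast=False):
    """Return keyword arguments for model.generate after checking duration.

    duration must be strictly greater than 1 and less than 30 seconds.
    fast=True selects 25 steps instead of the recommended 50.
    """
    if not (1 < duration < 30):
        raise ValueError(
            f"duration must be greater than 1 and less than 30, got {duration}"
        )
    return {"steps": 25 if fast else 50, "duration": duration}


# Usage with the model loaded earlier (requires the tangoflux package):
# audio = model.generate('Hammer slowly hitting the wooden table',
#                        **generation_kwargs(10, fast=True))
```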

The key comparison metrics include:

- Output Length: Represents the duration of the generated audio.

- FDopenl3: Fréchet Distance.

- KLpasst: KL divergence.

- CLAPscore: Alignment score.
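For intuition about what these metrics measure, the sketch below implements toy versions on plain Python lists. It is not the evaluation code behind the reported numbers: the real metrics operate on embeddings or classifier outputs from pretrained models (OpenL3, PaSST, CLAP) and the Fréchet distance uses full covariance matrices, whereas this version assumes diagonal covariances. All function names are hypothetical.

```python
import math


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(
        pi * math.log((pi + eps) / (qi + eps))
        for pi, qi in zip(p, q)
        if pi > 0
    )


def frechet_distance_diagonal(mu1, var1, mu2, var2):
    """Fréchet distance between two Gaussians with diagonal covariances.

    With diagonal covariances the trace term reduces to the squared
    difference of the per-dimension standard deviations.
    """
    mean_term = sum((a - b) ** 2 for a, b in zip(mu1, mu2))
    cov_term = sum(
        (math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(var1, var2)
    )
    return mean_term + cov_term


def cosine_similarity(u, v):
    """Cosine similarity, the usual way to compare two embeddings."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)
```

Lower is better for the two distances and higher is better for the similarity, matching the arrows in the table header.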

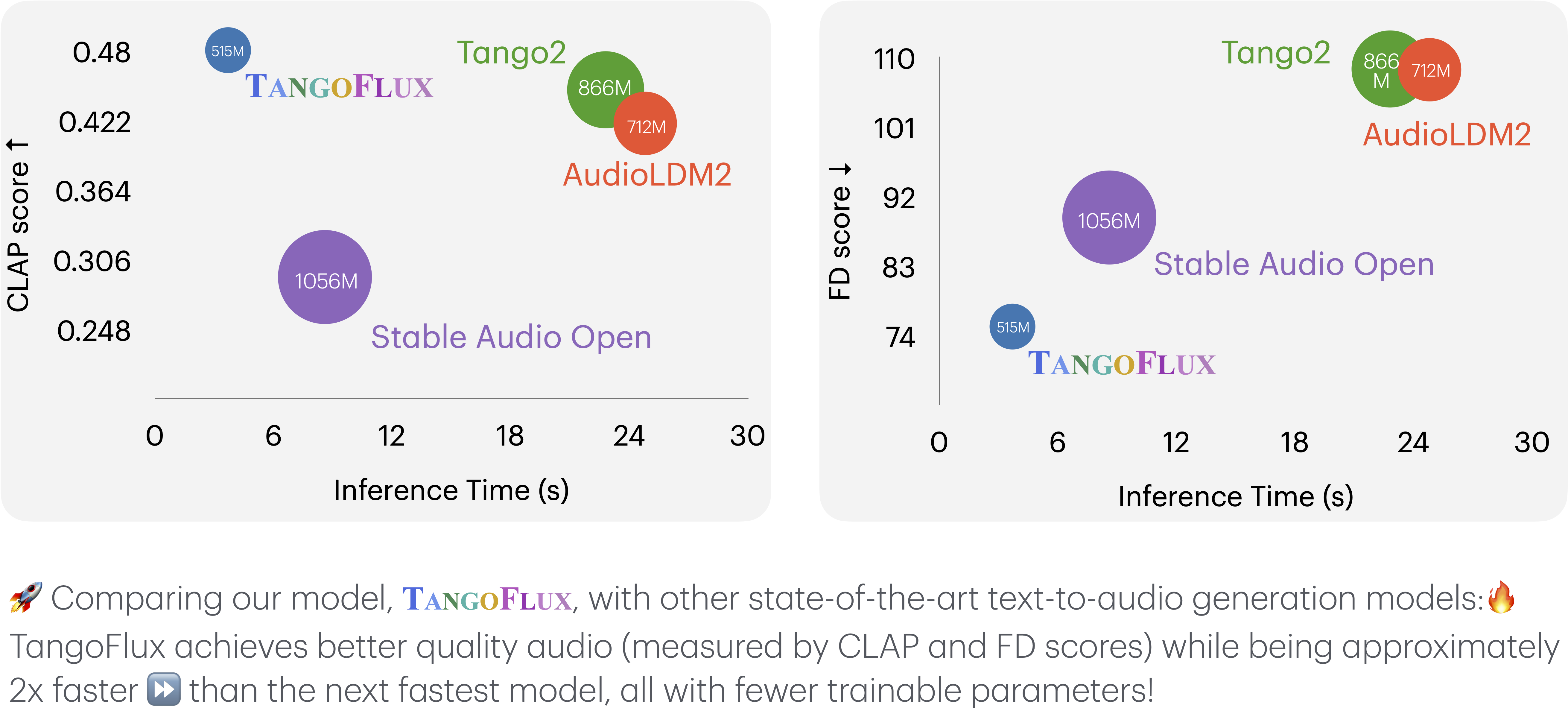

All the inference times are observed on the same A40 GPU. The counts of trainable parameters are reported in the #Params column.

| Model | #Params | Duration | Steps | FDopenl3 ↓ | KLpasst ↓ | CLAPscore ↑ | IS ↑ | Inference Time (s) |
|---|---|---|---|---|---|---|---|---|
| AudioLDM 2-large | 712M | 10 sec | 200 | 108.3 | 1.81 | 0.419 | 7.9 | 24.8 |
| Stable Audio Open | 1056M | 47 sec | 100 | 89.2 | 2.58 | 0.291 | 9.9 | 8.6 |
| Tango 2 | 866M | 10 sec | 200 | 108.4 | 1.11 | 0.447 | 9.0 | 22.8 |
| TangoFlux-base | 515M | 30 sec | 50 | 80.2 | 1.22 | 0.431 | 11.7 | 3.7 |
| TangoFlux | 515M | 30 sec | 50 | 75.1 | 1.15 | 0.480 | 12.2 | 3.7 |
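Because the models generate clips of different lengths, one way to compare speed is seconds of audio produced per second of inference. The snippet below derives that ratio from the Duration and Inference Time columns of the table; it is a derived figure, not a metric reported by the authors, and it assumes the Duration column is the length of the generated clip.

```python
# (model, generated duration in seconds, inference time in seconds),
# copied from the table above.
rows = [
    ("AudioLDM 2-large", 10, 24.8),
    ("Stable Audio Open", 47, 8.6),
    ("Tango 2", 10, 22.8),
    ("TangoFlux-base", 30, 3.7),
    ("TangoFlux", 30, 3.7),
]


def audio_seconds_per_second(duration_s, inference_s):
    """Seconds of audio generated per second of wall-clock inference."""
    return duration_s / inference_s


throughput = {name: audio_seconds_per_second(d, t) for name, d, t in rows}

for name, value in sorted(throughput.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {value:.2f} s of audio per second of inference")
```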

@misc{hung2024tangofluxsuperfastfaithful,

title={TangoFlux: Super Fast and Faithful Text to Audio Generation with Flow Matching and Clap-Ranked Preference Optimization},

author={Chia-Yu Hung and Navonil Majumder and Zhifeng Kong and Ambuj Mehrish and Rafael Valle and Bryan Catanzaro and Soujanya Poria},

year={2024},

eprint={2412.21037},

archivePrefix={arXiv},

primaryClass={cs.SD},

url={https://arxiv.org/abs/2412.21037},

}