This is the implementation and dataset for Learning To Reconstruct High Speed and High Dynamic Range Videos From Events, CVPR 2021, by Yunhao Zou, Yinqiang Zheng, Tsuyoshi Takatani and Ying Fu.

- 2023/09/19: Both the training and testing datasets are available at OneDrive

- 2023/09/27: Release the evaluation code and pre-trained model

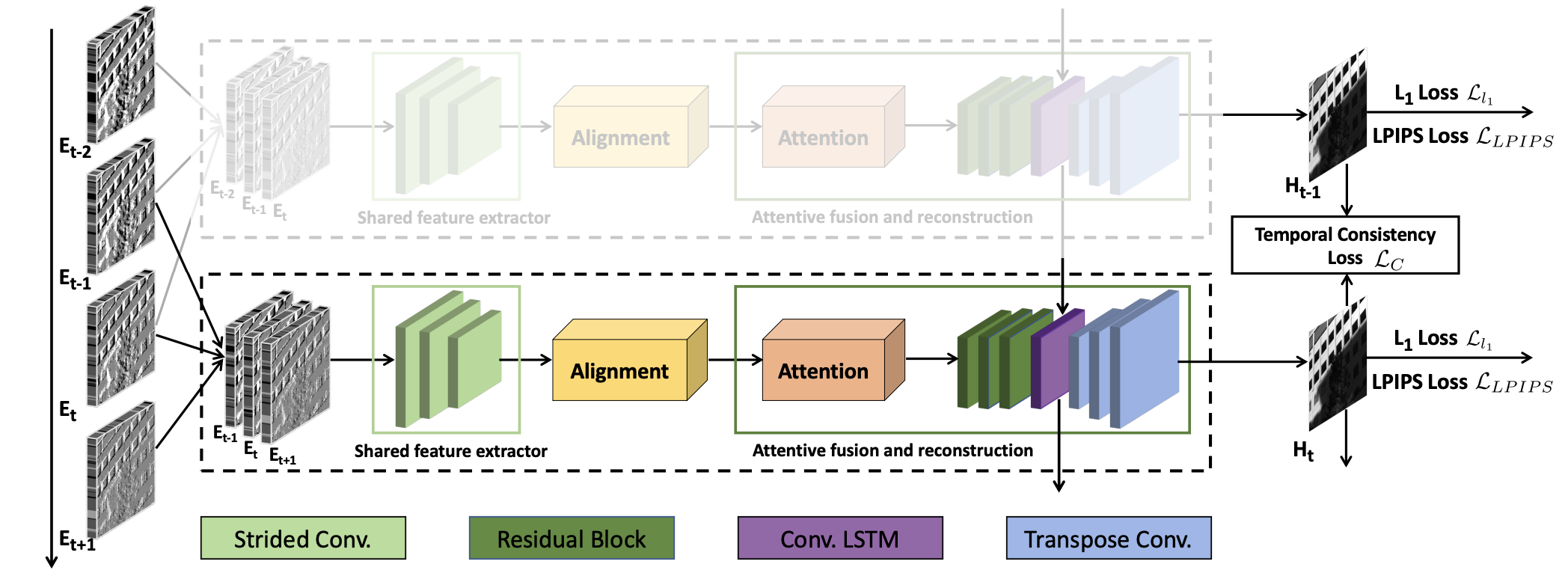

In this work, we present a convolutional recurrent neural network that takes a sequence of neighboring event frames and reconstructs high speed HDR videos. To facilitate network learning, we design a novel optical system and collect a real-world dataset with paired high speed HDR videos and event streams.
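As a rough illustration of what "a sequence of neighboring event frames" can look like, the sketch below accumulates raw events into a short stack of 2D frames with NumPy. The equal-duration binning, the signed polarity accumulation, and the function names `events_to_frame` and `events_to_frame_sequence` are assumptions made for illustration; they are not taken from the released code, which may use a different event representation.

```python
import numpy as np


def events_to_frame(xs, ys, ps, height, width):
    """Accumulate signed event polarities into one (height, width) frame.

    Polarities > 0 count as +1, everything else as -1 (assumed encoding).
    """
    frame = np.zeros((height, width), dtype=np.float32)
    signs = np.where(np.asarray(ps) > 0, 1.0, -1.0).astype(np.float32)
    rows = np.asarray(ys).astype(np.intp)
    cols = np.asarray(xs).astype(np.intp)
    # np.add.at handles repeated pixel coordinates correctly,
    # unlike plain fancy-index assignment.
    np.add.at(frame, (rows, cols), signs)
    return frame


def events_to_frame_sequence(xs, ys, ts, ps, height, width, num_frames):
    """Split events into `num_frames` equal-duration bins, one frame per bin.

    Assumes `ts` is non-empty and sorted in ascending order.
    Returns an array of shape (num_frames, height, width).
    """
    ts = np.asarray(ts, dtype=np.float64)
    edges = np.linspace(ts[0], ts[-1], num_frames + 1)
    bounds = np.searchsorted(ts, edges)
    bounds[-1] = len(ts)  # include the events at the final timestamp
    frames = [
        events_to_frame(xs[a:b], ys[a:b], ps[a:b], height, width)
        for a, b in zip(bounds[:-1], bounds[1:])
    ]
    return np.stack(frames)
```

Calling `events_to_frame_sequence` on the `xs`, `ys`, `ts`, `ps` arrays of one recording yields a small tensor that a recurrent network could consume frame by frame.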

- We propose a convolutional recurrent neural network for the reconstruction of high speed HDR videos from events. Our architecture carefully considers the alignment and temporal correlation for events

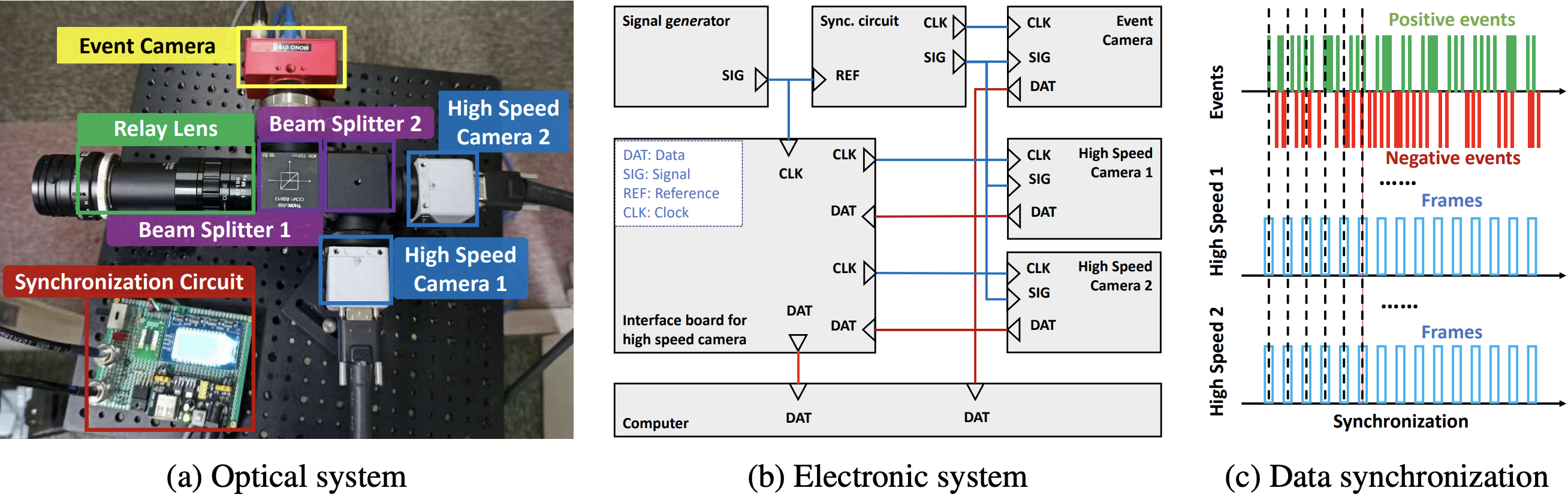

- To bridge the gap between simulated and real HDR videos, we design an elaborate system to synchronously capture paired high speed HDR video and the corresponding event stream

- We collect a real-world dataset with paired high speed HDR videos (high-bit) and event streams. Each frame of our HDR videos is merged from two precisely synchronized LDR frames

- Download our EventHDR dataset at OneDrive

- Both the training and testing data are stored in `hdf5` format; you can use the `h5py` tool to read the data. To create a PyTorch dataset, please refer to DynamicH5Dataset

- We build our dataset based on the data structure of events_contrast_maximization, and the structure tree is shown below

EventHDR Dataset Structure Tree

+-- '/'

| +-- attribute "duration"

| +-- attribute "num_events"

| +-- attribute "num_flow"

| +-- attribute "num_neg"

| +-- attribute "num_pos"

| +-- attribute "sensor_resolution"

| +-- attribute "t0"

| +-- group "events"

| | +-- dataset "ps"

| | +-- dataset "ts"

| | +-- dataset "xs"

| | +-- dataset "ys"

| |

| +-- group "images"

| | +-- attribute "num_images"

| | +-- dataset "image000000000"

| | | +-- attribute "event_idx"

| | | +-- attribute "size"

| | | +-- attribute "timestamp"

| | | +-- attribute "type"

| | |

| ...

| +-- group "images"

| | +-- attribute "num_images"

| | +-- dataset "image000002827"

| | | +-- attribute "event_idx"

| | | +-- attribute "size"

| | | +-- attribute "timestamp"

| | | +-- attribute "type"

| | |

| |

|
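Given the structure tree above, a minimal sketch of reading one file with `h5py` might look like the following. The helper names `summarize` and `load_frame_with_events` are hypothetical, and the interpretation of `event_idx` as the position in the event arrays that corresponds to a frame's timestamp is an assumption; please check it against the data before relying on it.

```python
import h5py
import numpy as np


def summarize(path):
    """Return the root attributes plus the number of stored frames."""
    with h5py.File(path, "r") as f:
        info = {key: f.attrs[key] for key in f.attrs}
        info["frames"] = len(f["images"])  # counts the image datasets
    return info


def load_frame_with_events(path, index):
    """Load frame `index` and the events up to the next frame.

    Assumes `event_idx` marks where the frame falls in the event
    arrays (an assumption, not confirmed by the README).
    """
    with h5py.File(path, "r") as f:
        images = f["images"]
        current = images["image{:09d}".format(index)]
        start = int(current.attrs["event_idx"])
        next_name = "image{:09d}".format(index + 1)
        if next_name in images:
            stop = int(images[next_name].attrs["event_idx"])
        else:
            # Last frame: fall back to the end of the event stream.
            stop = f["events/ts"].shape[0]
        events = {
            key: np.asarray(f["events/" + key][start:stop])
            for key in ("xs", "ys", "ts", "ps")
        }
        frame = current[()]  # read the whole image into memory
        timestamp = float(current.attrs["timestamp"])
    return frame, timestamp, events
```

For example, `summarize(path)` on a downloaded file should expose attributes such as `num_events` and `sensor_resolution`, and `load_frame_with_events(path, 0)` returns the first HDR frame with the events that follow it.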

- Create a `conda` environment and download our repository

conda create -n eventhdr python=3.6

conda activate eventhdr

git clone https://github.com/jackzou233/EventHDR

cd EventHDR

pip install -r requirements.txt

- Compile dependencies for deformable convolution with

BASICSR_EXT=True python setup.py develop

- Download our `hdf5` format evaluation data from OneDrive, and put it in the folder `./eval_data`

- Download the pre-trained model at pretrained, and move it to `./pretrained`

- Run the following script to reconstruct HDR videos from event streams!

bash test.sh

- To make a comparison video of the reconstruction, please run

python mk_video.py

You will then obtain a comparison video of the reconstruction.

The quantitative results are shown below

| Method | PSNR | SSIM | LPIPS | TC |
|---|---|---|---|---|
| HF | 10.99 | 0.2708 | 0.4434 | 1.2051 |
| E2VID | 12.78 | 0.5753 | 0.3541 | 1.0305 |
| EF | 13.23 | 0.5914 | 0.3030 | 0.9729 |
| Ours | 15.31 | 0.7084 | 0.2424 | 0.5198 |

If you find this work useful for your research, please cite:

@inproceedings{zou2021learning,

title={Learning To Reconstruct High Speed and High Dynamic Range Videos From Events},

author={Zou, Yunhao and Zheng, Yinqiang and Takatani, Tsuyoshi and Fu, Ying},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={2024--2033},

year={2021}

}

If you have any problems, please feel free to contact me at [email protected]

The code borrows from event_cnn_minimal, EDVR and E2VID; please also cite their great work.